2012

|

| Carolin Walter; Gerd Bruder; Benjamin Bolte; Frank Steinicke Evaluation of Field of View Calibration Techniques for Head-mounted Displays and Effects on Distance Estimation Proceedings Article In: Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR), pp. 13–24, 2012. @inproceedings{WBBS12,

title = {Evaluation of Field of View Calibration Techniques for Head-mounted Displays and Effects on Distance Estimation},

author = {Carolin Walter and Gerd Bruder and Benjamin Bolte and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/WBBS12.pdf},

year = {2012},

date = {2012-01-01},

booktitle = {Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR)},

pages = {13--24},

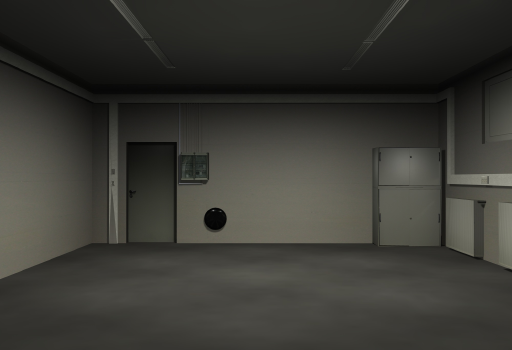

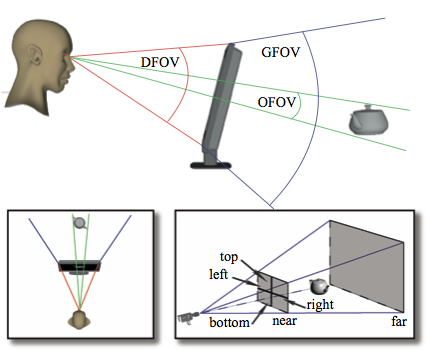

abstract = {Users in immersive virtual environments (VEs) with head-mounted displays (HMDs) often perceive compressed egocentric distances compared to the real world. Although various factors have been identified that affect egocentric distance perception, the main factors for this effect still remain unknown. Recent experiments suggest that miscalibration of the field of view (FOV) has a strong effect on distance perception. Unfortunately, it is not trivial to correctly set the FOV for a given HMD in such a way that the scene is rendered without mini- or magnification. In this paper we test two calibration techniques based on visual or visual-proprioceptive estimation tasks to determine the FOV of an immersive HMD and analyze the effect of the resulting FOVs on distance estimation in two experiments: (i) blind walking for long distances and (ii) blind grasping for arm-reach distances. We found an impact of the FOV on distance judgments, but calibrating the FOVs was not sufficient to compensate for distance underestimation effects.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

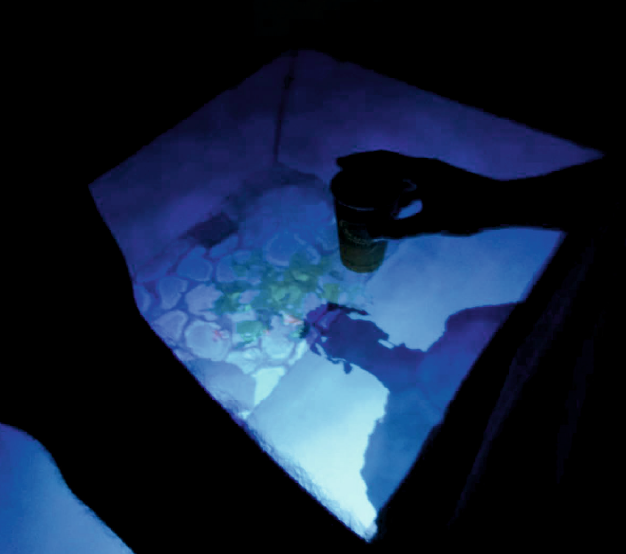

| Martin Fischbach; Dennis Wiebusch; Marc E. Latoschik; Gerd Bruder; Frank Steinicke Blending Real and Virtual Worlds using Self-Reflection and Fiducials Journal Article In: Entertainment Computing - ICEC 2012, Lecture Notes in Computer Science (LNCS), vol. 7522, pp. 465–468, 2012. @article{FWLBS12,

title = {Blending Real and Virtual Worlds using Self-Reflection and Fiducials},

author = {Martin Fischbach and Dennis Wiebusch and Marc E. Latoschik and Gerd Bruder and Frank Steinicke},

year = {2012},

date = {2012-01-01},

journal = {Entertainment Computing - ICEC 2012, Lecture Notes in Computer Science (LNCS)},

volume = {7522},

pages = {465--468},

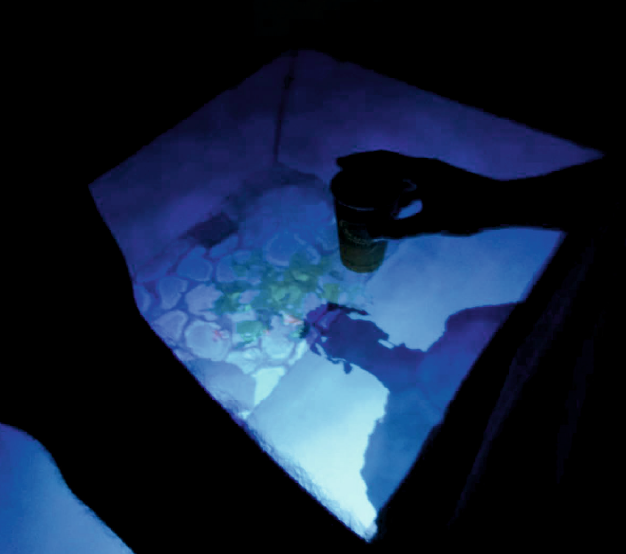

abstract = {This paper presents an enhanced version of a portable out-of-the-box platform for semi-immersive interactive applications. The enhanced version combines stereoscopic visualization, marker-less user tracking, and multi-touch with self-reflection of users and tangible object interaction. A virtual fish tank simulation demonstrates how real and virtual worlds are seamlessly blended by providing a multi-modal interaction experience that utilizes a user-centric projection, body, and object tracking, as well as a consistent integration of physical and virtual properties like appearance and causality into a mixed real/virtual world.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Gerd Bruder; Andreas Pusch; Frank Steinicke Analyzing Effects of Geometric Rendering Parameters on Size and Distance Estimation in On-Axis Stereographics Proceedings Article In: Proceedings of the ACM Symposium on Applied Perception (SAP), pp. 111–118, 2012. @inproceedings{BPS12,

title = {Analyzing Effects of Geometric Rendering Parameters on Size and Distance Estimation in On-Axis Stereographics},

author = {Gerd Bruder and Andreas Pusch and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BPS12.pdf},

year = {2012},

date = {2012-01-01},

booktitle = {Proceedings of the ACM Symposium on Applied Perception (SAP)},

pages = {111--118},

abstract = {Accurate perception of size and distance in a three-dimensional virtual environment is important for many applications. However, several experiments have revealed that spatial perception of virtual environments often deviates from the real world, even when the virtual scene is modeled as an accurate replica of a familiar physical environment. While previous research has elucidated various factors that can facilitate perceptual shifts, the effects of geometric rendering parameters on spatial cues are still not well understood. In this paper, we model and evaluate effects of spatial transformations caused by variations of the geometric field of view and the interpupillary distance in on-axis stereographic display environments. We evaluated different predictions in a psychophysical experiment in which subjects were asked to judge distance and size properties of virtual objects placed in a realistic virtual scene. Our results suggest that variations in the geometric field of view have a strong influence on distance judgments, whereas variations in the geometric interpupillary distance mainly affect size judgments.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

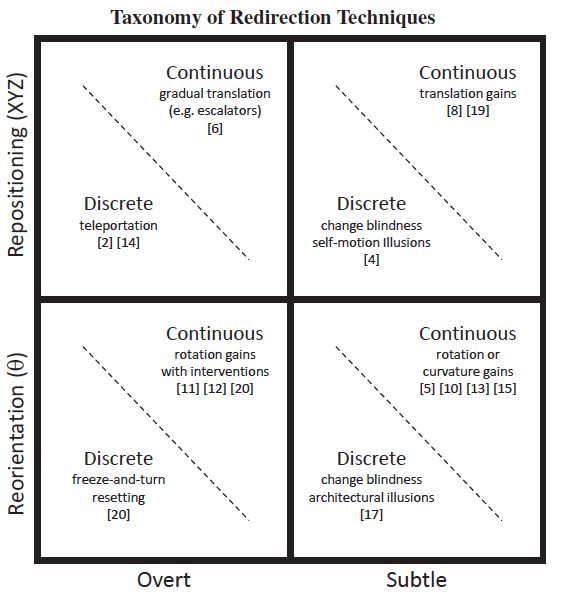

| Evan A. Suma; Gerd Bruder; Frank Steinicke; David M. Krum; Mark Bolas A Taxonomy for Deploying Redirection Techniques in Immersive Virtual Environments Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), pp. 43–46, 2012. @inproceedings{SBSKB12,

title = {A Taxonomy for Deploying Redirection Techniques in Immersive Virtual Environments},

author = {Evan A. Suma and Gerd Bruder and Frank Steinicke and David M. Krum and Mark Bolas},

year = {2012},

date = {2012-01-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

pages = {43--46},

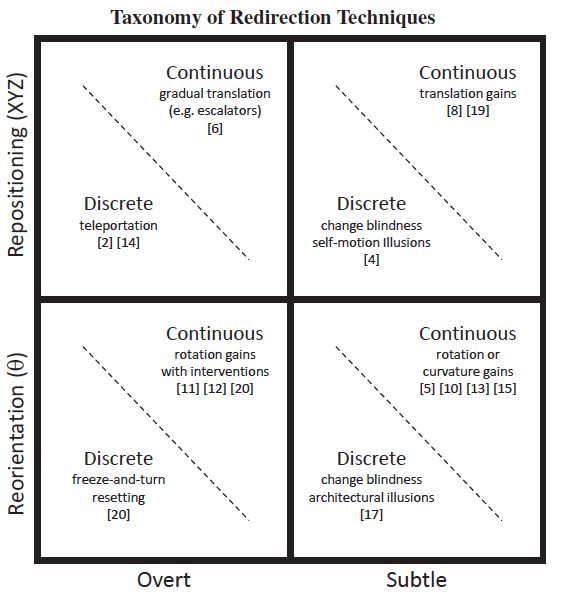

abstract = {Natural walking can provide a compelling experience in immersive virtual environments, but it remains an implementation challenge due to the physical space constraints imposed on the size of the virtual world. The use of redirection techniques is a promising approach that relaxes the space requirements of natural walking by manipulating the user’s route in the virtual environment, causing the real world path to remain within the boundaries of the physical workspace. In this paper, we present and apply a novel taxonomy that separates redirection techniques according to their geometric flexibility versus the likelihood that they will be noticed by users. Additionally, we conducted a user study of three reorientation techniques, which confirmed that participants were less likely to experience a break in presence when reoriented using the techniques classified as subtle in our taxonomy. Our results also suggest that reorientation with change blindness illusions may give the impression of exploring a more expansive environment than continuous rotation techniques, but at the cost of negatively impacting spatial knowledge acquisition.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

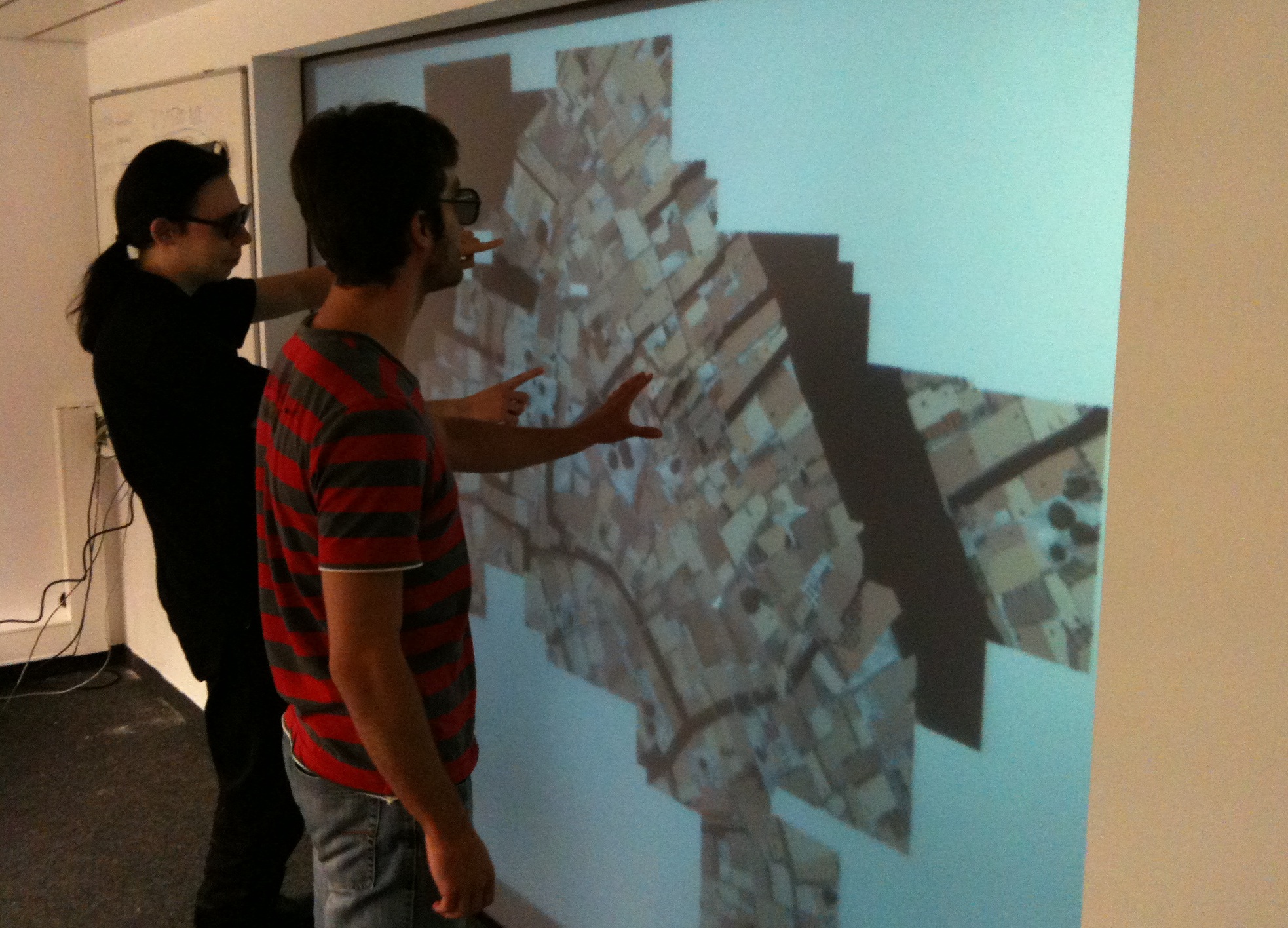

| Martin Fischbach; Christian Treffs; David Cyborra; Alexander Strehler; Thomas Wedler; Gerd Bruder; Andreas Pusch; Marc E. Latoschik; Frank Steinicke A Mixed Reality Space for Tangible User Interaction Proceedings Article In: Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR), pp. 25–36, 2012. @inproceedings{FTCSWBPLS12,

title = {A Mixed Reality Space for Tangible User Interaction},

author = {Martin Fischbach and Christian Treffs and David Cyborra and Alexander Strehler and Thomas Wedler and Gerd Bruder and Andreas Pusch and Marc E. Latoschik and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/FTCSWBPLS12.pdf},

year = {2012},

date = {2012-01-01},

booktitle = {Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR)},

pages = {25--36},

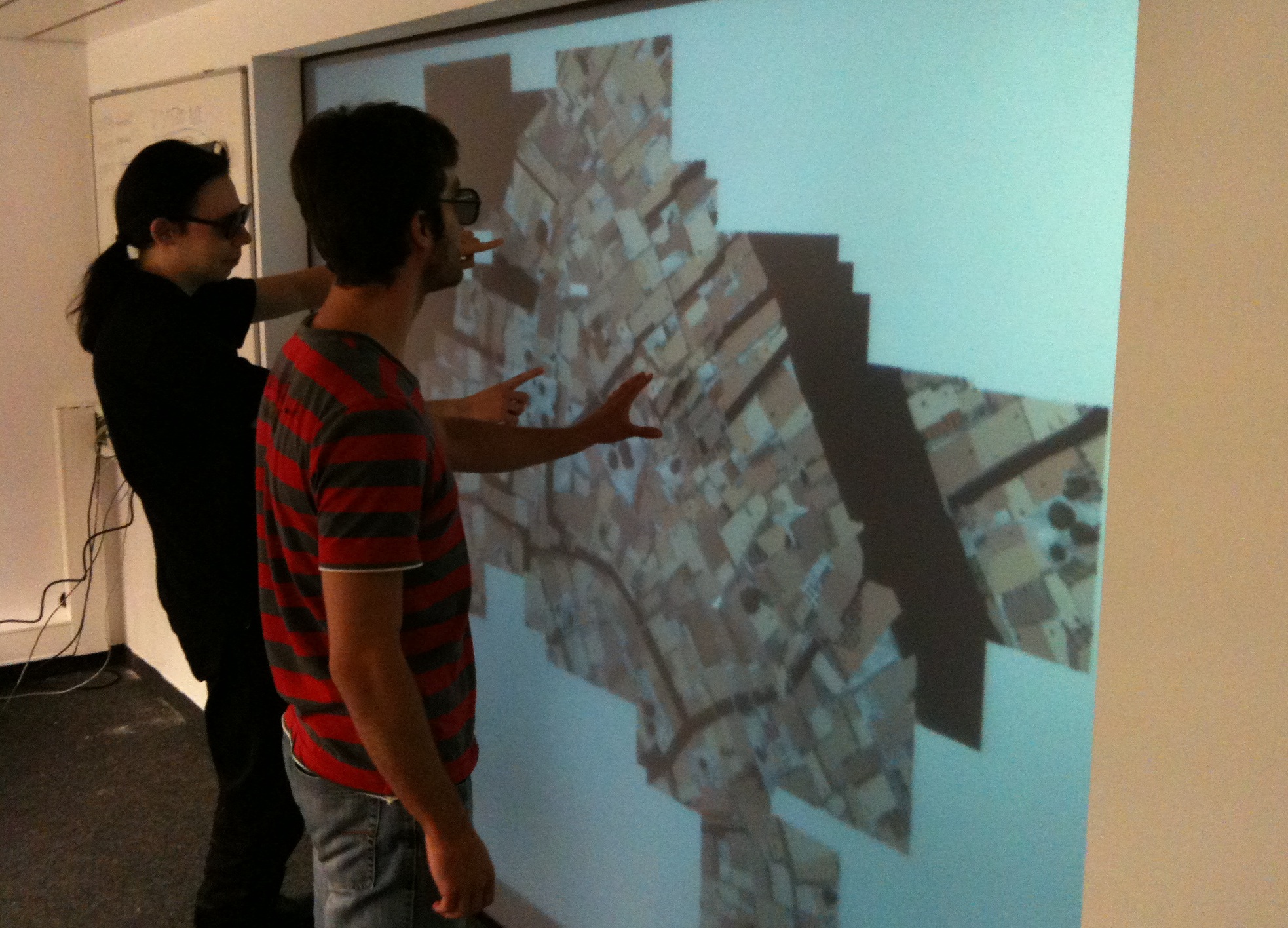

abstract = {Recent developments in the field of semi-immersive display technologies provide new possibilities for engaging users in interactive three-dimensional virtual environments (VEs). For instance, combining low-cost tracking systems (such as the Microsoft Kinect) and multi-touch interfaces enables inexpensive and easily maintainable interactive setups. The goal of this work is to bring together virtual as well as real objects on a stereoscopic multi-touch enabled tabletop surface. Therefore, we present a prototypical implementation of such a mixed reality (MR) space for tangible interaction by extending the smARTbox. The smARTbox is a responsive touch-enabled stereoscopic out-of-the-box system that is able to track users and objects above as well as on the surface. We describe the prototypical hard- and software setup which extends this setup to an MR space, and highlight design challenges for several application examples.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Analysis of IR-based Virtual Reality Tracking Using Multiple Kinects](https://sreal.ucf.edu/wp-content/uploads/2017/02/SBWS12.png) | Srivishnu Satyavolu; Gerd Bruder; Pete Willemsen; Frank Steinicke [POSTER] Analysis of IR-based Virtual Reality Tracking Using Multiple Kinects Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), pp. 149–150, 2012. @inproceedings{SBWS12,

title = {[POSTER] Analysis of IR-based Virtual Reality Tracking Using Multiple Kinects},

author = {Srivishnu Satyavolu and Gerd Bruder and Pete Willemsen and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBWS12.pdf},

year = {2012},

date = {2012-01-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

pages = {149--150},

abstract = {This article presents an analysis of using multiple Microsoft Kinect sensors to track users in a VR system. This article focuses on using multiple Kinect sensors to track infrared points for use in virtual reality applications. Multiple Kinect sensors may serve as a low-cost means to track position information across a large lab space in applications where precise location tracking is not necessary. We present our findings and analysis of the tracking range of a Kinect sensor in situations in which multiple Kinects are present. Overall, the Kinect sensor works well for this application, and in lieu of more expensive options, Kinect sensors may be a viable option for very low-cost tracking in virtual reality applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

2011

|

| Dimitar Valkov; Gerd Bruder; Benjamin Bolte; Frank Steinicke VIARGO: A Generic VR-based Interaction Library Proceedings Article In: Proceedings of the Workshop on Software Engineering and Architectures for Realtime Interactive Systems (SEARIS), pp. 23–28, 2011. @inproceedings{VBBS11,

title = {VIARGO: A Generic VR-based Interaction Library},

author = {Dimitar Valkov and Gerd Bruder and Benjamin Bolte and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/VBBS11.pdf},

year = {2011},

date = {2011-01-01},

booktitle = {Proceedings of the Workshop on Software Engineering and Architectures for Realtime Interactive Systems (SEARIS)},

pages = {23--28},

abstract = {Traditionally, interaction techniques for virtual reality applications are implemented in a proprietary way on specific target platforms, e.g., requiring specific hardware, physics or rendering libraries, which hinders reusability and portability. Though hardware abstraction layers for numerous devices are provided by multiple virtual reality libraries, they are usually tightly bound to a particular rendering environment. In this paper we introduce Viargo - a generic virtual reality interaction library, which serves as an additional software layer that is independent from the application and its linked libraries, i.e., a once developed interaction technique, such as walking with a head-mounted display or multi-touch interaction, can be ported to different hard- or software environments with minimal code adaptation. We describe the underlying concepts and present examples on how to integrate Viargo in different graphics engines, thus extending proprietary graphics libraries with a few lines of code to easy-to-use virtual reality engines.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Benjamin Bolte; Gerd Bruder; Frank Steinicke The Jumper Metaphor: An Effective Navigation Technique for Immersive Display Setups Proceedings Article In: Proceedings of the Virtual Reality International Conference (VRIC), pp. 1–7, 2011. @inproceedings{BBS11a,

title = {The Jumper Metaphor: An Effective Navigation Technique for Immersive Display Setups},

author = {Benjamin Bolte and Gerd Bruder and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BBS11a.pdf},

year = {2011},

date = {2011-01-01},

booktitle = {Proceedings of the Virtual Reality International Conference (VRIC)},

pages = {1--7},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Gerd Bruder; Frank Steinicke; Phil Wieland Self-motion illusions in immersive virtual reality environments Proceedings Article In: Hirose, Michitaka; Lok, Benjamin; Majumder, Aditi; Schmalstieg, Dieter (Ed.): Proceedings of IEEE Virtual Reality (VR), pp. 39–46, 2011. @inproceedings{BSW11,

title = {Self-motion illusions in immersive virtual reality environments},

author = {Gerd Bruder and Frank Steinicke and Phil Wieland},

editor = {Michitaka Hirose and Benjamin Lok and Aditi Majumder and Dieter Schmalstieg},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSW11.pdf},

year = {2011},

date = {2011-01-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

pages = {39--46},

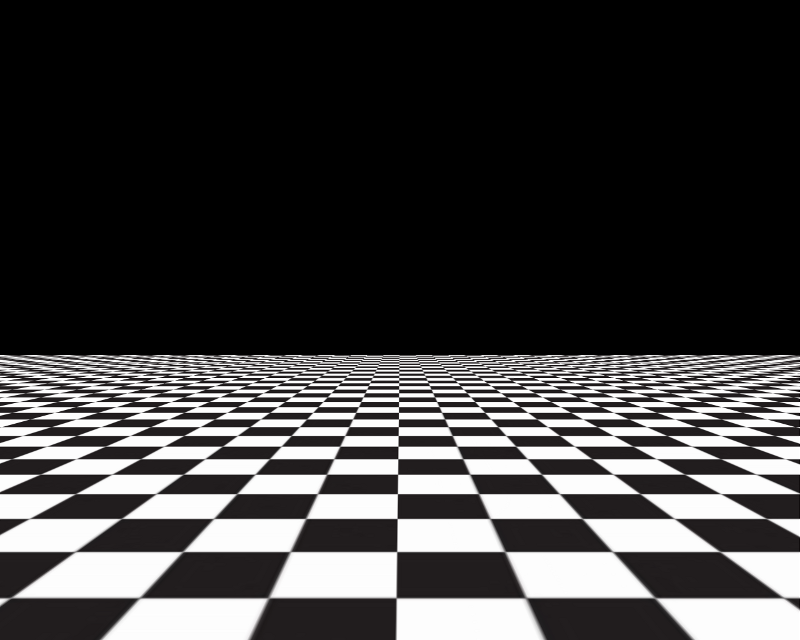

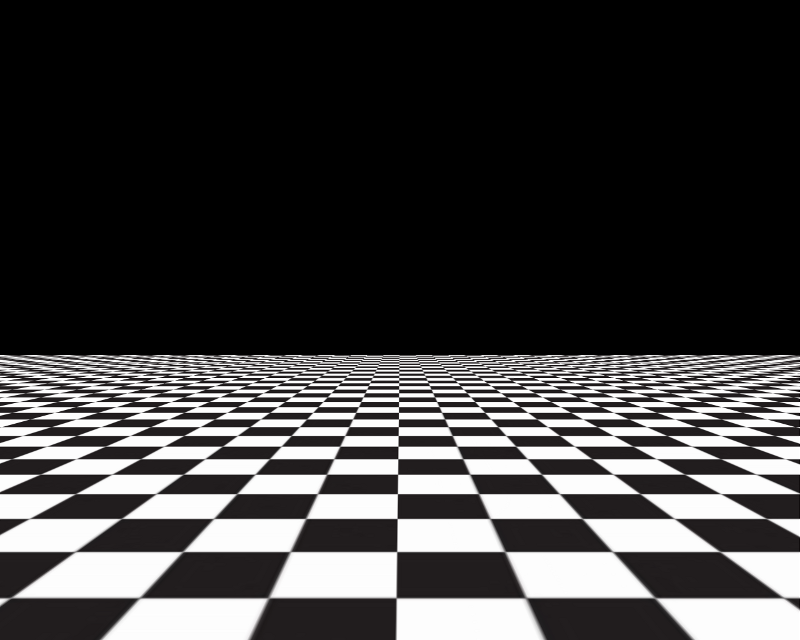

abstract = {Motion perception in immersive virtual reality environments significantly differs from the real world. For example, previous work has shown that users tend to underestimate travel distances in immersive virtual environments (VEs). As a solution to this problem, some researchers propose to scale the mapped virtual camera motion relative to the tracked real-world movement of a user until real and virtual motion appear to match, i.e., real-world movements could be mapped with a larger gain to the VE in order to compensate for the underestimation. Although this approach usually results in more accurate self-motion judgments by users, introducing discrepancies between real and virtual motion can become a problem, in particular, due to misalignments of both worlds and distorted space cognition. In this paper we describe a different approach that introduces apparent self-motion illusions by manipulating optic flow fields during movements in VEs. These manipulations can affect self-motion perception in VEs, but avoid a quantitative discrepancy between real and virtual motions. We introduce four illusions and show in experiments that optic flow manipulation can significantly affect users' self-motion judgments. Furthermore, we show that with such manipulation of optic flow fields the underestimation of travel distances can be compensated.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|
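As a rough illustration of the gain-based mapping that the Bruder, Steinicke, and Wieland (2011) abstract describes as the existing approach, the following minimal Python sketch scales a tracked real-world displacement before applying it to the virtual camera. The names `Vec3` and `apply_translation_gain` and the gain value are hypothetical, chosen only for illustration; they are not taken from the paper, and the paper's own optic flow illusions are not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Vec3:
    x: float
    y: float
    z: float


def apply_translation_gain(prev_real: Vec3, curr_real: Vec3,
                           prev_virtual: Vec3, gain: float) -> Vec3:
    """Return the new virtual camera position.

    The real-world displacement since the last tracking sample is scaled
    by `gain` (hypothetical parameter) and added to the previous virtual
    position. A gain of 1.0 reproduces the real motion one-to-one.
    """
    dx = curr_real.x - prev_real.x
    dy = curr_real.y - prev_real.y
    dz = curr_real.z - prev_real.z
    return Vec3(prev_virtual.x + gain * dx,
                prev_virtual.y + gain * dy,
                prev_virtual.z + gain * dz)


# Usage: the user walks 1 m along x; with an illustrative gain of 1.2
# the virtual camera moves 1.2 m along x.
new_pos = apply_translation_gain(Vec3(0.0, 0.0, 0.0), Vec3(1.0, 0.0, 0.0),
                                 Vec3(2.0, 0.0, 2.0), gain=1.2)
```

With any gain other than 1.0, the real and virtual paths diverge over time, which is the quantitative discrepancy the abstract identifies as a drawback of this approach.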

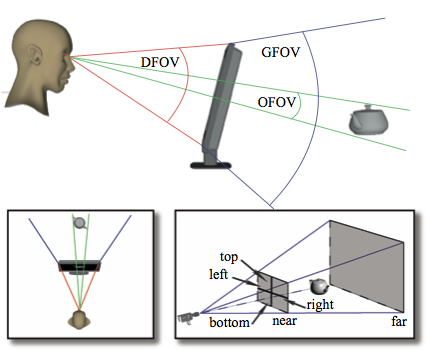

| Frank Steinicke; Gerd Bruder; Scott Kuhl Realistic Perspective Projections for Virtual Objects and Environments Journal Article In: ACM Transactions on Graphics (TOG), vol. 30, no. 5, pp. 112:1–112:10, 2011. @article{SBK11,

title = {Realistic Perspective Projections for Virtual Objects and Environments},

author = {Frank Steinicke and Gerd Bruder and Scott Kuhl},

year = {2011},

date = {2011-01-01},

journal = {ACM Transactions on Graphics (TOG)},

volume = {30},

number = {5},

pages = {112:1--112:10},

abstract = {Computer graphics systems provide sophisticated means to render virtual 3D space to 2D display surfaces by applying planar geometric projections. In a realistic viewing condition the perspective applied for rendering should appropriately account for the viewer's location relative to the image. As a result, an observer would not be able to distinguish between a rendering of a virtual environment on a computer screen and a view "through" the screen at an identical real-world scene. Until now, little effort has been made to identify perspective projections which cause human observers to judge them to be realistic. In this article we analyze observers' awareness of perspective distortions of virtual scenes displayed on a computer screen. These distortions warp the virtual scene and make it differ significantly from how the scene would look in reality. We describe psychophysical experiments that explore the subject's ability to discriminate between different perspective projections and identify projections that most closely match an equivalent real scene. We found that the field of view used for perspective rendering should match the actual visual angle of the display to provide users with a realistic view. However, we found that slight changes of the field of view in the range of 10-20% for two classes of test environments did not cause a distorted mental image of the observed models.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Gerd Bruder; Frank Steinicke Perceptual Evaluation of Interpupillary Distances in Head-mounted Display Environments Proceedings Article In: Proceedings of the GI-Workshop VR/AR, pp. 135–146, 2011. @inproceedings{BS11a,

title = {Perceptual Evaluation of Interpupillary Distances in Head-mounted Display Environments},

author = {Gerd Bruder and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BS11a.pdf},

year = {2011},

date = {2011-01-01},

booktitle = {Proceedings of the GI-Workshop VR/AR},

pages = {135--146},

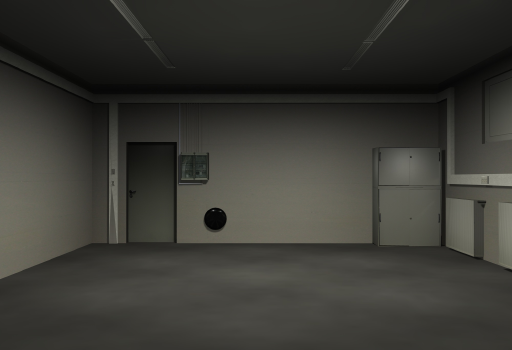

abstract = {Head-mounted displays (HMDs) allow users to explore virtual environments (VEs) from an egocentric perspective. In order to present a realistic view, the rendering system has to be adjusted to the characteristics of the HMD, e.g., the display's field of view (FOV), as well as to characteristics that are unique for each user, in particular, the interpupillary distance (IPD). In the optimal case, the rendering system is calibrated to the binocular configuration of the HMD, and adapted to the measured IPD of the user. A discrepancy between the user's IPD and stereoscopic rendering may distort the perception of the VE, since objects may appear minified or magnified. In this paper, we describe binocular calibration of HMDs, and evaluate which IPDs are judged as most natural by HMD users. In our experiment, subjects had to adjust the IPD for a rendered virtual replica of our laboratory until perception of the virtual replica matched perception of the real laboratory. Our results suggest that the IPDs which are estimated by subjects as most natural are affected by the FOV of the HMD, and the geometric FOV used for rendering. In particular, we found that with increasing fields of view, subjects tend to underestimate their geometric IPD.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Markus Lappe; Scott Kuhl; Pete Willemsen; Klaus H. Hinrichs Natural Perspective Projections for Head-Mounted Displays Journal Article In: IEEE Transactions on Visualization and Computer Graphics (TVCG), vol. 17, no. 7, pp. 888–899, 2011. @article{SBLKWH11,

title = {Natural Perspective Projections for Head-Mounted Displays},

author = {Frank Steinicke and Gerd Bruder and Markus Lappe and Scott Kuhl and Pete Willemsen and Klaus H. Hinrichs},

year = {2011},

date = {2011-01-01},

journal = {IEEE Transactions on Visualization and Computer Graphics (TVCG)},

volume = {17},

number = {7},

pages = {888--899},

abstract = {The display units integrated in today's head-mounted displays (HMDs) provide only a limited field of view (FOV) to the virtual world. In order to present an undistorted view of the virtual environment (VE), the perspective projection used to render the VE has to be adjusted to the limitations caused by the HMD characteristics. In particular, the geometric field of view (GFOV), which defines the virtual aperture angle used for rendering of the 3D scene, is set up according to the display field of view (DFOV). A discrepancy between these two fields of view distorts the geometry of the VE in a way that either minifies or magnifies the imagery displayed to the user. It has been shown that this distortion has the potential to affect a user's perception of the virtual space, sense of presence, and performance on visual search tasks. In this paper, we analyze the user's perception of a VE displayed in an HMD, which is rendered with different GFOVs. We introduce a psychophysical calibration method to determine the HMD's actual field of view, which may vary from the nominal values specified by the manufacturer. Furthermore, we conducted two experiments to identify perspective projections for HMDs, which are identified as natural by subjects--even if these perspectives deviate from the perspectives that are inherently defined by the DFOV. In the first experiment, subjects had to adjust the GFOV for a rendered virtual laboratory such that their perception of the virtual replica matched the perception of the real laboratory, which they saw before the virtual one. In the second experiment, we displayed the same virtual laboratory, but restricted the viewing condition in the real world to simulate the limited viewing condition in an HMD environment. We found that subjects evaluate a GFOV as natural when it is larger than the actual DFOV of the HMD--in some cases up to 50 percent--even when subjects viewed the real space with a limited field of view.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Gerd Bruder Making Small Spaces Feel Large: Self-motion Perception, Redirection and Illusions PhD Thesis Department of Computer Science, University of M, 2011. @phdthesis{Bru11a,

title = {Making Small Spaces Feel Large: Self-motion Perception, Redirection and Illusions},

author = {Gerd Bruder},

year = {2011},

date = {2011-01-01},

school = {Department of Computer Science, University of M},

keywords = {},

pubstate = {published},

tppubtype = {phdthesis}

}

|

| Benjamin Bolte; Gerd Bruder; Frank Steinicke Jumping through Immersive Video Games Proceedings Article In: SIGGRAPH Asia 2011 Technical Sketches, pp. 1, 2011. @inproceedings{BBS11b,

title = {Jumping through Immersive Video Games},

author = {Benjamin Bolte and Gerd Bruder and Frank Steinicke},

year = {2011},

date = {2011-01-01},

booktitle = {SIGGRAPH Asia 2011 Technical Sketches},

pages = {1},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Benjamin Bolte; Gerd Bruder; Frank Steinicke Impact of Visual Orientation Cues on Angular Motion Redirection Proceedings Article In: Proceedings of the GI-Workshop VR/AR, 2011. @inproceedings{BBS11,

title = {Impact of Visual Orientation Cues on Angular Motion Redirection},

author = {Benjamin Bolte and Gerd Bruder and Frank Steinicke},

year = {2011},

date = {2011-01-01},

booktitle = {Proceedings of the GI-Workshop VR/AR},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Gerd Bruder Augmenting Geometric Fields of View and Scaling Head Rotations for Efficient Exploration in Head-Mounted Display Environments Proceedings Article In: Proceedings of the IEEE Virtual Reality Workshop on Perceptual Illusions in Virtual Environments (PIVE), pp. 9, 2011. @inproceedings{Bru11,

title = {Augmenting Geometric Fields of View and Scaling Head Rotations for Efficient Exploration in Head-Mounted Display Environments},

author = {Gerd Bruder},

abstract = {Physical characteristics and constraints of today’s head-mounted displays (HMDs) often impair interaction in immersive virtual environments (VEs). For instance, due to the limited field of view (FOV) subtended by the display units in front of the user’s eyes, more effort is required to explore a VE by head rotations than for exploration in the real world. In this paper we propose a combination of two augmentation techniques that have the potential to make exploration of VEs more efficient: (1) augmenting the geometric FOV (GFOV) used for rendering the VE, and (2) amplifying head rotations while the user changes her head orientation. In order to identify how much manipulation can be applied without users noticing, we conducted two psychophysical experiments in which we analyzed subjects’ ability to discriminate between virtual and real head pitch and roll rotations while three different geometric FOVs were used. Our results show that the combination of both techniques has great potential to support efficient exploration of VEs. We found that virtual pitch and roll rotations can be amplified by 30% and 44%, respectively, when the GFOV matches the subject’s estimation of the most natural FOV. Using a combination of both techniques, this can reduce the effort the user needs to explore the VE by approximately 25%.},

year = {2011},

date = {2011-01-01},

booktitle = {Proceedings of the IEEE Virtual Reality Workshop on Perceptual Illusions in Virtual Environments (PIVE)},

pages = {9},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Dimitar Valkov; Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs 2D Touching of 3D Stereoscopic Objects Proceedings Article In: Proceedings of the ACM Conference on Human Factors in Computing Systems (CHI), pp. 1353–1362, 2011. @inproceedings{VSBH11,

title = {2D Touching of 3D Stereoscopic Objects},

author = {Dimitar Valkov and Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/VSBH11.pdf},

year = {2011},

date = {2011-01-01},

booktitle = {Proceedings of the ACM Conference on Human Factors in Computing Systems (CHI)},

pages = {1353--1362},

abstract = {Recent developments in the area of touch and display technologies have suggested combining multi-touch systems and stereoscopic visualization. Stereoscopic perception requires each eye to see a slightly different perspective of the same scene, which results in two distinct projections on the display. Thus, if the user wants to select a 3D stereoscopic object in such a setup, the question arises where she would touch the 2D surface to indicate the selection. A user may apply different strategies, for instance touching the midpoint between the two projections, or touching one of them. In this paper we analyze the relation between the 3D positions of stereoscopically rendered objects and the on-surface touch points where users touch the surface. We performed an experiment in which we determined the positions of the users' touches for objects that were displayed with positive, negative or zero parallaxes. We found that users tend to touch between the projections for the two eyes with an offset towards the projection for the dominant eye. Our results have implications for the development of future touch-enabled interfaces that support 3D stereoscopic visualization.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Evaluation of Field of View Calibration Techniques for Head-mounted Displays](https://sreal.ucf.edu/wp-content/uploads/2017/02/BSWM11.png) | Gerd Bruder; Frank Steinicke; Carolin Walter; Mathias Möhring [POSTER] Evaluation of Field of View Calibration Techniques for Head-mounted Displays Proceedings Article In: ACM Symposium on Applied Perception in Graphics and Visualization, pp. 125, 2011. @inproceedings{BSWM11,

title = {[POSTER] Evaluation of Field of View Calibration Techniques for Head-mounted Displays},

author = {Gerd Bruder and Frank Steinicke and Carolin Walter and Mathias Möhring},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSWM11.pdf},

year = {2011},

date = {2011-01-01},

booktitle = {ACM Symposium on Applied Perception in Graphics and Visualization},

pages = {125},

abstract = {In this poster we present a comparison of two calibration techniques that make it possible to determine the field of view (FOV) for immersive head-mounted displays (HMDs).},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

2010

|

| Dimitar Valkov; Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs Traveling in 3D Virtual Environments with Foot Gestures and a Multi-Touch enabled WIM Proceedings Article In: Proceedings of the Virtual Reality International Conference (VRIC), pp. 171–180, 2010. @inproceedings{VSBH10,

title = {Traveling in 3D Virtual Environments with Foot Gestures and a Multi-Touch enabled WIM},

author = {Dimitar Valkov and Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/VSBH10.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the Virtual Reality International Conference (VRIC)},

pages = {171--180},

abstract = {In this paper we demonstrate how foot gestures can be used to perform navigation tasks in interactive 3D environments and how a World-In-Miniature view can be manipulated through multi-touch gestures, simplifying the way-finding task in such complex environments. Geographic Information Systems (GIS) are well suited as a complex test-bed for evaluation of user interfaces based on multi-modal input. Recent developments in the area of interactive surfaces enable the construction of low-cost multi-touch sensors, and relatively inexpensive technology for detecting foot gestures makes it possible to explore these input modalities for virtual reality environments. In this paper, we describe an intuitive 3D user interface setup, which combines multi-touch hand and foot gestures for interaction with spatial data.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Dimitar Valkov; Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Johannes Schöning; Florian Daiber; Antonio Krüger Touching Floating Objects in Projection-based Virtual Reality Environments Proceedings Article In: Proceedings of the Joint Virtual Reality Conference (JVRC), pp. 17–24, 2010. @inproceedings{VSBHSDK10,

title = {Touching Floating Objects in Projection-based Virtual Reality Environments},

author = {Dimitar Valkov and Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Johannes Schöning and Florian Daiber and Antonio Krüger},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/VSBHSDK10-optimized.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the Joint Virtual Reality Conference (JVRC)},

pages = {17--24},

abstract = {Touch-sensitive screens enable natural interaction without any instrumentation and support tangible feedback on the touch surface. In particular, multi-touch interaction has proven its usability for 2D tasks, but the challenges of exploiting these technologies in virtual reality (VR) setups have rarely been studied. In this paper we address the challenge of allowing users to interact with stereoscopically displayed virtual environments when the input is constrained to a 2D touch surface. During interaction with a large-scale touch display a user changes between three different states: (1) beyond the arm-reach distance from the surface, (2) at arm-reach distance and (3) interaction. We have analyzed the user's ability to discriminate stereoscopic display parallaxes while she moves through these states, i.e., whether objects can be imperceptibly shifted onto the interactive surface and become accessible for natural touch interaction. Our results show that the detection thresholds for such manipulations are related to both user motion and stereoscopic parallax, and that users have difficulty discriminating whether they touched an object or not when tangible feedback is expected.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|
