2018

|

| Jason Hochreiter; Salam Daher; Gerd Bruder; Greg Welch Cognitive and touch performance effects of mismatched 3D physical and visual perceptions Proceedings Article In: IEEE Virtual Reality 2018 (VR 2018), 2018. @inproceedings{Hochreiter2018,

title = {Cognitive and touch performance effects of mismatched 3D physical and visual perceptions},

author = {Jason Hochreiter and Salam Daher and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/hochreiter2018.pdf},

year = {2018},

date = {2018-03-22},

booktitle = {IEEE Virtual Reality 2018 (VR 2018)},

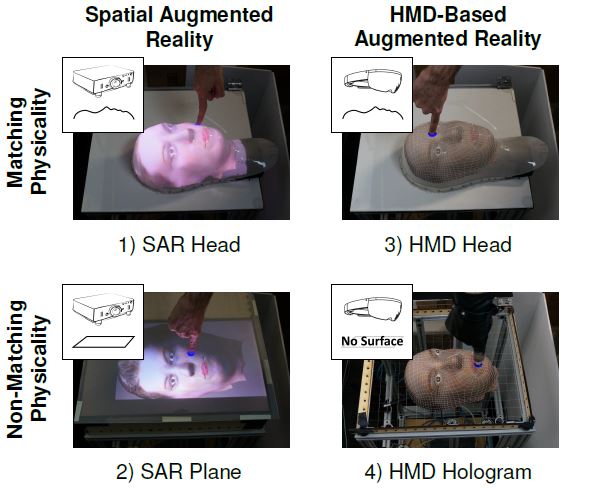

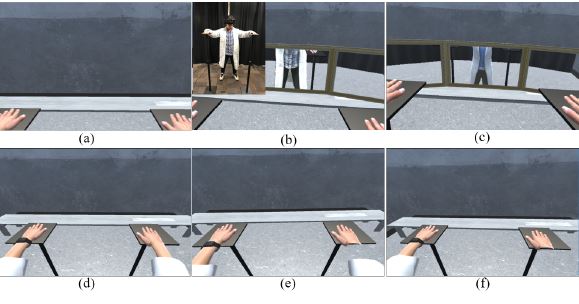

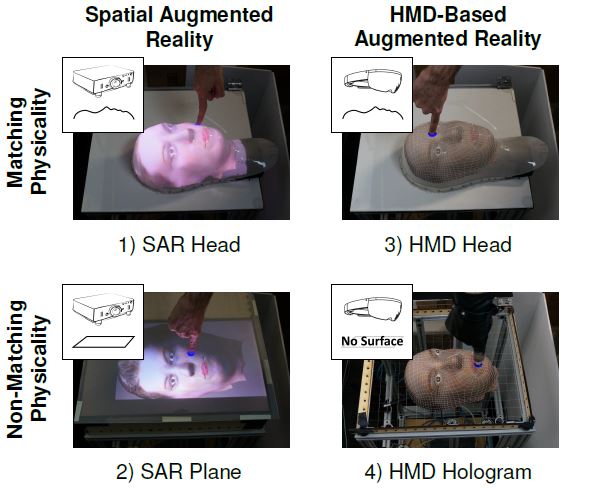

abstract = {In a controlled human-subject study we investigated the effects of mismatched physical and visual perception on cognitive load and performance in an Augmented Reality (AR) touching task by varying the physical fidelity (matching vs. non-matching physical shape) and visual mechanism (projector-based vs. HMD-based AR) of the representation. Participants touched visual targets on four corresponding physical-visual representations of a human head. We evaluated their performance in terms of touch accuracy, response time, and a cognitive load task requiring target size estimations during a concurrent (secondary) counting task. Results indicated higher performance, lower cognitive load, and increased usability when participants touched a matching physical head-shaped surface and with visuals provided by a projector from underneath.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Sungchul Jung; Pamela Wisniewski; Charles E. Hughes In Limbo: The Effect of Gradual Visual Transition between Real and Virtual on Virtual Body Ownership Illusion and Presence Proceedings Article In: IEEE Virtual Reality Conference 2018 (IEEE VR 2018), Reutlingen, Germany, March 18-22, 2018. @inproceedings{Jung2018,

title = {In Limbo: The Effect of Gradual Visual Transition between Real and Virtual on Virtual Body Ownership Illusion and Presence},

author = {Sungchul Jung and Pamela Wisniewski and Charles E. Hughes},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Jung2018aa.pdf},

year = {2018},

date = {2018-03-18},

booktitle = {IEEE Virtual Reality Conference 2018 (IEEE VR 2018), Reutlingen, Germany, March 18-22},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Jason Hochreiter Optical Touch Sensing on Non-Parametric Rear-Projection Surfaces Proceedings Article In: 2018 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Reutlingen, pp. 805-806, 2018. @inproceedings{Hochreiter2018b,

title = {Optical Touch Sensing on Non-Parametric Rear-Projection Surfaces},

author = {Jason Hochreiter},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/06/Hochreiter2018b.pdf},

doi = {10.1109/VR.2018.8446552},

year = {2018},

date = {2018-03-18},

booktitle = {2018 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Reutlingen},

pages = {805-806},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

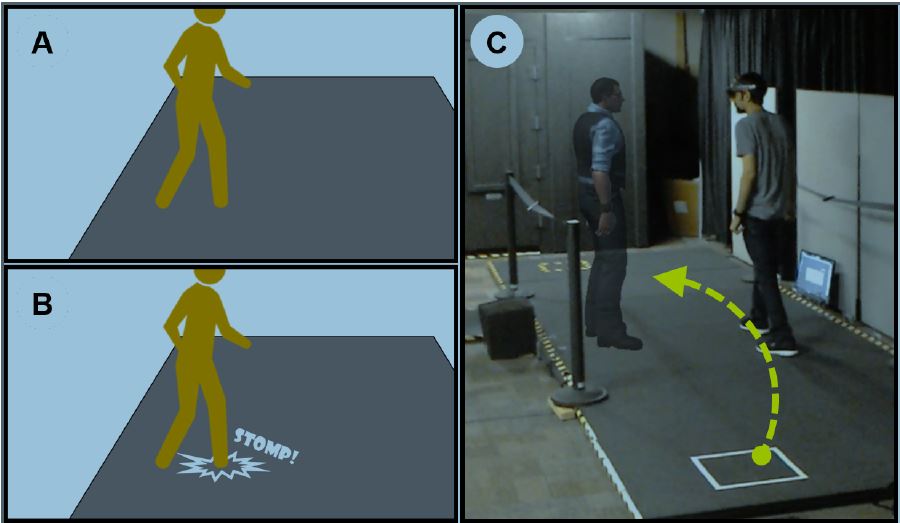

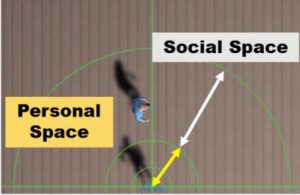

| Myungho Lee; Gerd Bruder; Tobias Hollerer; Greg Welch Effects of Unaugmented Periphery and Vibrotactile Feedback on Proxemics with Virtual Humans in AR Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 24, no. 4, pp. 1525-1534, 2018. @article{Lee2018,

title = {Effects of Unaugmented Periphery and Vibrotactile Feedback on Proxemics with Virtual Humans in AR},

author = {Myungho Lee and Gerd Bruder and Tobias Hollerer and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/04/Lee2018.pdf},

doi = {10.1109/TVCG.2018.2794074},

year = {2018},

date = {2018-02-23},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {24},

number = {4},

pages = {1525-1534},

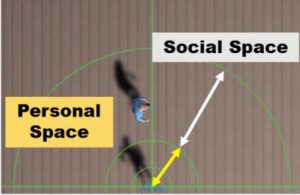

abstract = {In this paper, we investigate factors and issues related to human locomotion behavior and proxemics in the presence of a real or virtual human in augmented reality (AR). First, we discuss a unique issue with current-state optical see-through head-mounted displays. Second, we discuss the limited multimodal feedback provided by virtual humans in AR, present a potential improvement based on vibrotactile feedback induced via the floor to compensate for the limited augmented visual field, and report results showing that benefits of such vibrations are less visible in objective locomotion behavior than in subjective estimates of co-presence. Third, we investigate and document significant differences in the effects that real and virtual humans have on locomotion behavior in AR. We discuss potential explanations for these effects and analyze effects of different types of behaviors that such real or virtual humans may exhibit in the presence of an observer.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Roghayeh Barmaki; Charles Hughes Gesturing and Embodiment in Teaching: Investigating the Nonverbal Behavior of Teachers in a Virtual Rehearsal Environment Proceedings Article In: The Eighth Symposium on Educational Advances in Artificial Intelligence 2018 (EAAI-18), New Orleans, February 3-4, pp. 7893-7899, 2018. @inproceedings{Barmaki2018b,

title = {Gesturing and Embodiment in Teaching: Investigating the Nonverbal Behavior of Teachers in a Virtual Rehearsal Environment},

author = {Roghayeh Barmaki and Charles Hughes},

url = {https://www.aaai.org/ocs/index.php/AAAI/AAAI18/paper/viewFile/17145/16409},

year = {2018},

date = {2018-02-03},

booktitle = {The Eighth Symposium on Educational Advances in Artificial Intelligence 2018 (EAAI-18), New Orleans, February 3-4},

pages = {7893-7899},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Ahmad Abualsamid; Charles E. Hughes Why Is Video Modeling Not Used in Special Needs Classrooms? Proceedings Article In: Andre, Terence (Ed.): Advances in Human Factors in Training, Education, and Learning Sciences. AHFE 2017. Advances in Intelligent Systems and Computing, pp. 123-130, Springer, Cham., 2018. @inproceedings{Abualsamid2018c,

title = {Why Is Video Modeling Not Used in Special Needs Classrooms?},

author = {Ahmad Abualsamid and Charles E. Hughes},

editor = {Terence Andre},

doi = {10.1007/978-3-319-60018-5},

year = {2018},

date = {2018-01-01},

booktitle = {Advances in Human Factors in Training, Education, and Learning Sciences. AHFE 2017. Advances in Intelligent Systems and Computing},

volume = {596},

pages = {123-130},

publisher = {Springer, Cham.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

2017

|

![[POSTER] The Impact of Avatar-owner Visual Similarity on Body Ownership in Immersive Virtual Reality](https://sreal.ucf.edu/wp-content/uploads/2018/05/Jo2017.jpg) | Dongsik Jo; Kangsoo Kim; Gregory F. Welch; Woojin Jeon; Yongwan Kim; Ki-Hong Kim; Gerard Jounghyun Kim [POSTER] The Impact of Avatar-owner Visual Similarity on Body Ownership in Immersive Virtual Reality Proceedings Article In: Proceedings of the 23rd ACM Symposium on Virtual Reality Software and Technology, pp. 77:1–77:2, ACM, Gothenburg, Sweden, 2017, ISBN: 978-1-4503-5548-3. @inproceedings{Jo2017,

title = {[POSTER] The Impact of Avatar-owner Visual Similarity on Body Ownership in Immersive Virtual Reality},

author = {Dongsik Jo and Kangsoo Kim and Gregory F. Welch and Woojin Jeon and Yongwan Kim and Ki-Hong Kim and Gerard Jounghyun Kim},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/Jo2017.pdf},

doi = {10.1145/3139131.3141214},

isbn = {978-1-4503-5548-3},

year = {2017},

date = {2017-11-08},

booktitle = {Proceedings of the 23rd ACM Symposium on Virtual Reality Software and Technology},

pages = {77:1--77:2},

publisher = {ACM},

address = {Gothenburg, Sweden},

series = {VRST '17},

abstract = {In this paper we report on an investigation of the effects of a self-avatar's visual similarity to a user's actual appearance, on their perceptions of the avatar in an immersive virtual reality (IVR) experience. We conducted a user study to examine the participant's sense of body ownership, presence and visual realism under three levels of avatar-owner visual similarity: (L1) an avatar reconstructed from real imagery of the participant's appearance, (L2) a cartoon-like virtual avatar created by a 3D artist for each participant, where the avatar shoes and clothing mimic that of the participant, but using a low-fidelity model, and (L3) a cartoon-like virtual avatar with a pre-defined appearance for the shoes and clothing. Surprisingly, the results indicate that the participants generally exhibited the highest sense of body ownership and presence when inhabiting the cartoon-like virtual avatar mimicking the outfit of the participant (L2), despite the relatively low participant similarity. We present our experiment and main findings, and also discuss the potential impact of a self-avatar's visual differences on human perceptions in IVR.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Behnaz Nojavanasghari; Charles E. Hughes; Tadas Baltrušaitis; Louis-Philippe Morency Hand2Face: Automatic Synthesis and Recognition of Hand Over Face Occlusions Proceedings Article In: Proceedings of Affective Computing and Intelligent Interaction (ACII 2017), San Antonio, TX, Oct. 23-26, pp. 209-215, San Antonio, TX, 2017. @inproceedings{Nojavanasghari2017,

title = {Hand2Face: Automatic Synthesis and Recognition of Hand Over Face Occlusions},

author = {Behnaz Nojavanasghari and Charles E. Hughes and Tadas Baltrušaitis and Louis-Philippe Morency},

url = {http://acii2017.org/accepted_papers},

year = {2017},

date = {2017-10-23},

booktitle = {Proceedings of Affective Computing and Intelligent Interaction (ACII 2017), San Antonio, TX, Oct. 23-26},

pages = {209-215},

address = {San Antonio, TX},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

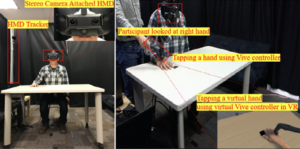

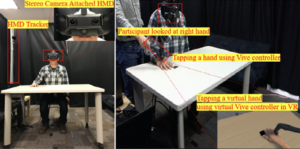

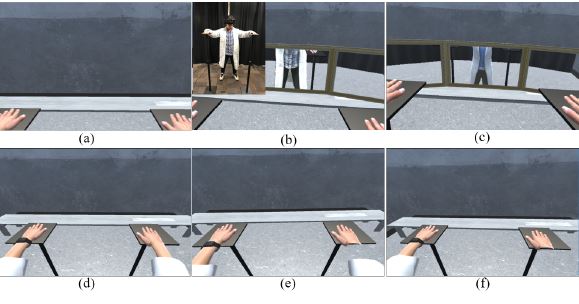

| Sungchul Jung; Christian Sandor; Pamela Wisniewski; Charles E. Hughes RealME: The Influence of Body and Hand Representations on Body Ownership and Presence Proceedings Article In: Proceedings of the 5th Symposium on Spatial User Interaction (SUI 2017), Brighton, UK, October 16-17, 2017, pp. 3-11, 2017. @inproceedings{Jung2017,

title = {RealME: The Influence of Body and Hand Representations on Body Ownership and Presence},

author = {Sungchul Jung and Christian Sandor and Pamela Wisniewski and Charles E. Hughes},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Jung2017.pdf},

doi = {10.1145/3131277.3132186},

year = {2017},

date = {2017-10-16},

booktitle = {Proceedings of the 5th Symposium on Spatial User Interaction (SUI 2017), Brighton, UK, October 16-17, 2017},

pages = {3-11},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

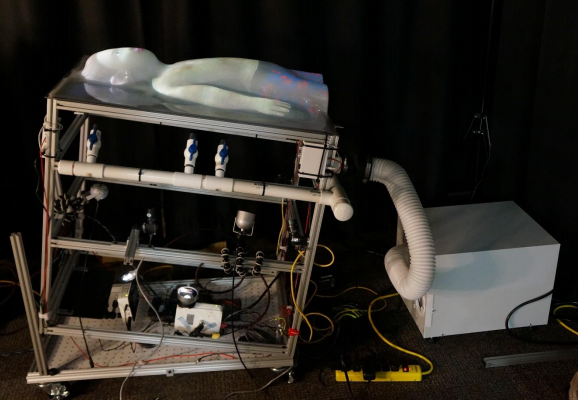

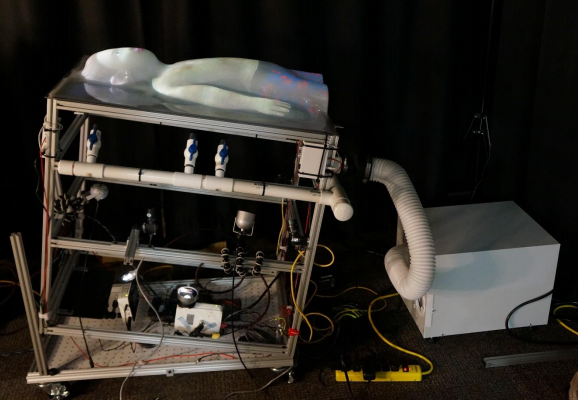

| Salam Daher Physical-Virtual Patient Bed Technical Report 2017, (Daher, Salam. (2017). Physical-Virtual Patient Bed. (Link Foundation Fellowship Final Reports: Modeling, Simulation, and Training Program.) Retrieved from The Scholarship Repository of Florida Institute of Technology website: https://repository.lib.fit.edu/). @techreport{daher2017linkfoundationreport,

title = {Physical-Virtual Patient Bed},

author = {Salam Daher},

url = {https://sreal.ucf.edu/linkfoundation_pvpbreport/},

year = {2017},

date = {2017-08-28},

note = {Daher, Salam. (2017). Physical-Virtual Patient Bed. (Link Foundation Fellowship Final Reports: Modeling, Simulation, and Training Program.) Retrieved from The Scholarship Repository of Florida Institute of Technology website: https://repository.lib.fit.edu/},

keywords = {},

pubstate = {published},

tppubtype = {techreport}

}

|

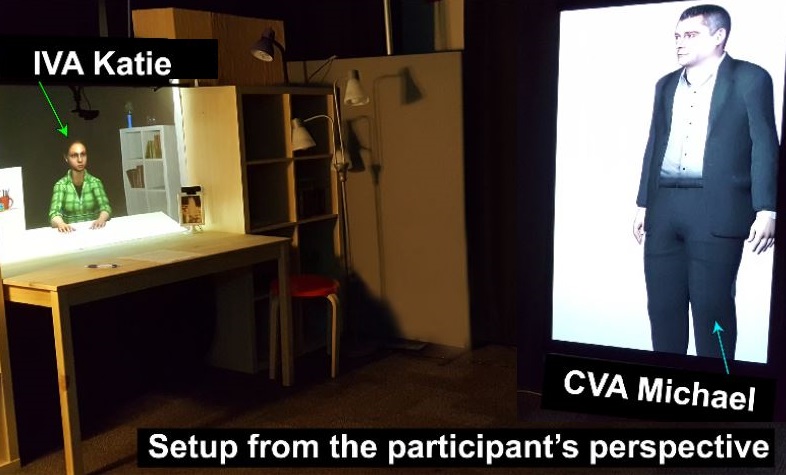

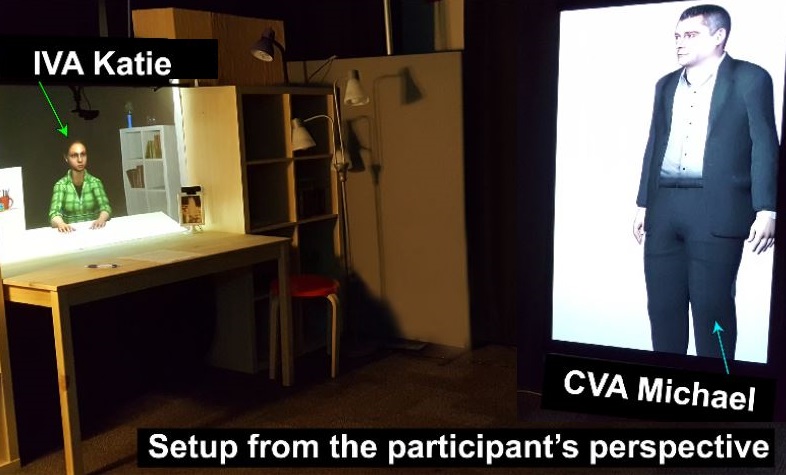

| Salam Daher; Kangsoo Kim; Myungho Lee; Ryan Schubert; Gerd Bruder; Jeremy Bailenson; Greg Welch Effects of Social Priming on Social Presence with Intelligent Virtual Agents Book Chapter In: Beskow, Jonas; Peters, Christopher; Castellano, Ginevra; O'Sullivan, Carol; Leite, Iolanda; Kopp, Stefan (Ed.): Intelligent Virtual Agents: 17th International Conference, IVA 2017, Stockholm, Sweden, August 27-30, 2017, Proceedings, vol. 10498, pp. 87-100, Springer International Publishing, 2017. @inbook{Daher2017ab,

title = {Effects of Social Priming on Social Presence with Intelligent Virtual Agents},

author = {Salam Daher and Kangsoo Kim and Myungho Lee and Ryan Schubert and Gerd Bruder and Jeremy Bailenson and Greg Welch},

editor = {Jonas Beskow and Christopher Peters and Ginevra Castellano and Carol O'Sullivan and Iolanda Leite and Stefan Kopp},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/12/Daher2017ab.pdf},

doi = {10.1007/978-3-319-67401-8_10},

year = {2017},

date = {2017-08-26},

booktitle = {Intelligent Virtual Agents: 17th International Conference, IVA 2017, Stockholm, Sweden, August 27-30, 2017, Proceedings},

volume = {10498},

pages = {87-100},

publisher = {Springer International Publishing},

keywords = {},

pubstate = {published},

tppubtype = {inbook}

}

|

| Sungchul Jung; Christian Sandor; Charles E. Hughes Pilot Study: The Effect of Real User Body Cues to The Perception on Virtual Body Proceedings Article In: Proceedings of the 30th Conference on Computer Animation and Social Agents (CASA 2017), Seoul, Korea, May 22-24, 2017, pp. 47-50, 2017. @inproceedings{Jung2017b,

title = {Pilot Study: The Effect of Real User Body Cues to The Perception on Virtual Body},

author = {Sungchul Jung and Christian Sandor and Charles E. Hughes},

url = {http://casa2017.kaist.ac.kr/wordpress/wp-content/uploads/2017/06/47-Jung.pdf},

year = {2017},

date = {2017-05-22},

booktitle = {Proceedings of the 30th Conference on Computer Animation and Social Agents (CASA 2017), Seoul, Korea, May 22-24, 2017},

pages = {47-50},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

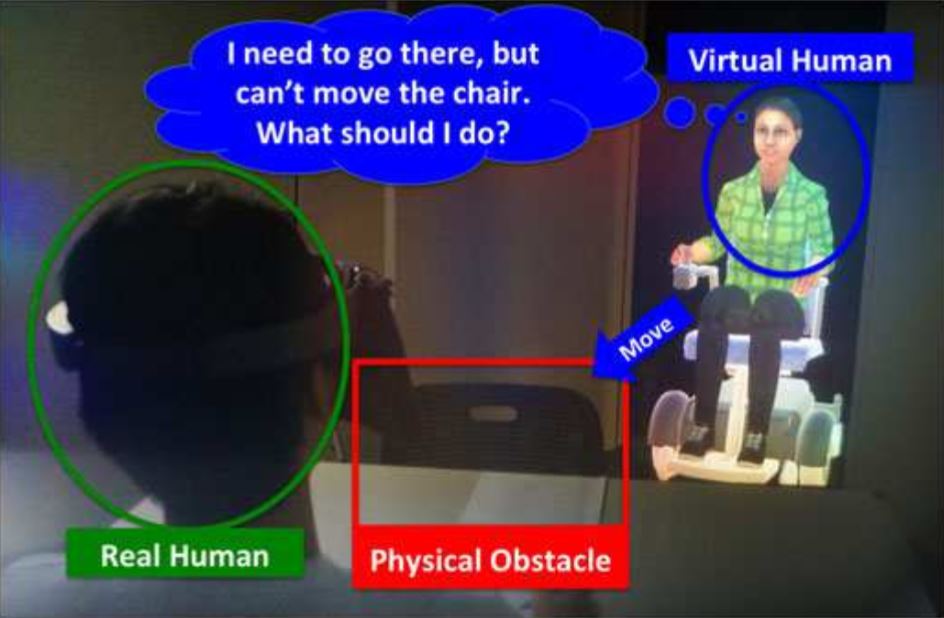

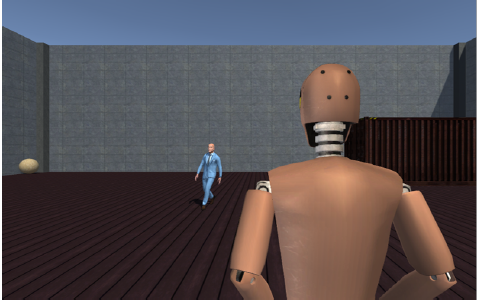

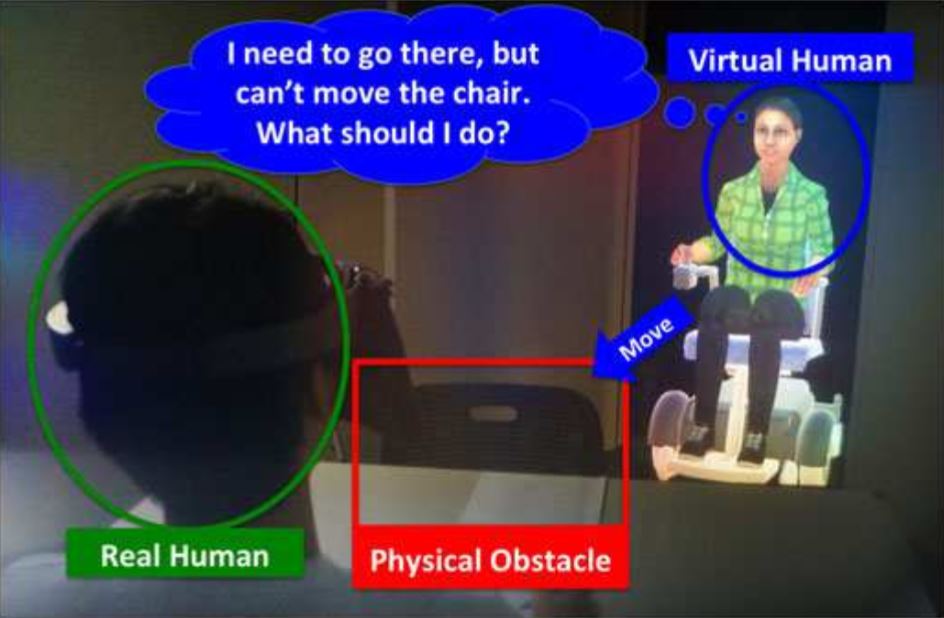

| Kangsoo Kim; Divine Maloney; Gerd Bruder; Jeremy Bailenson; Greg Welch The effects of virtual human's spatial and behavioral coherence with physical objects on social presence in AR Journal Article In: Computer Animation and Virtual Worlds, vol. 28, no. 3-4, pp. e1771-n/a, 2017. @article{Kim2017b,

title = {The effects of virtual human's spatial and behavioral coherence with physical objects on social presence in AR},

author = {Kangsoo Kim and Divine Maloney and Gerd Bruder and Jeremy Bailenson and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/12/Kim2017b.pdf},

doi = {10.1002/cav.1771},

year = {2017},

date = {2017-05-21},

journal = {Computer Animation and Virtual Worlds},

volume = {28},

number = {3-4},

pages = {e1771-n/a},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

![[DC] Optical See-Through vs. Spatial Augmented Reality Simulators for Medical Applications](https://sreal.ucf.edu/wp-content/uploads/2018/08/doctoralConsortium.png) | Salam Daher [DC] Optical See-Through vs. Spatial Augmented Reality Simulators for Medical Applications Proceedings Article In: Virtual Reality (VR), 2017 IEEE, pp. 417-418, IEEE 2017. @inproceedings{daher2017optical,

title = {[DC] Optical See-Through vs. Spatial Augmented Reality Simulators for Medical Applications},

author = {Salam Daher},

url = {https://sreal.ucf.edu/dc_ieeevr2017_20170226_0828/},

doi = {10.1109/VR.2017.7892354},

year = {2017},

date = {2017-05-20},

booktitle = {Virtual Reality (VR), 2017 IEEE},

pages = {417-418},

organization = {IEEE},

abstract = {Currently, healthcare practitioners use standardized patients, physical mannequins, and virtual patients as surrogates for real patients to provide a safe learning environment for students. Each of these simulators has different limitations that could be mitigated with various degrees of fidelity to represent medical cues. As we are exploring different ways to simulate a human patient and their effects on learning, we would like to compare the dynamic visuals between spatial augmented reality and an optical see-through augmented reality where a patient is rendered using the HoloLens and how that affects depth perception, task completion, and social presence.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Behnaz Nojavanasghari; Louis-Philippe Morency; Charles E. Hughes Hands-on: Context-Driven Hand Gesture Recognition for Automatic Recognition of Curiosity Proceedings Article In: Proceedings of CHI 2017 Workshop: Designing for Curiosity. Denver, CO, May 7, 2017, (Poster and Short Paper). @inproceedings{Nojavanasghari2017b,

title = {Hands-on: Context-Driven Hand Gesture Recognition for Automatic Recognition of Curiosity},

author = {Behnaz Nojavanasghari and Louis-Philippe Morency and Charles E. Hughes},

year = {2017},

date = {2017-05-07},

booktitle = {Proceedings of CHI 2017 Workshop: Designing for Curiosity. Denver, CO, May 7},

note = {Poster and Short Paper},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

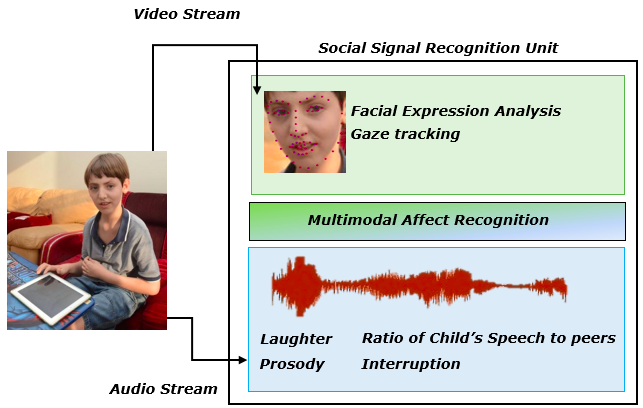

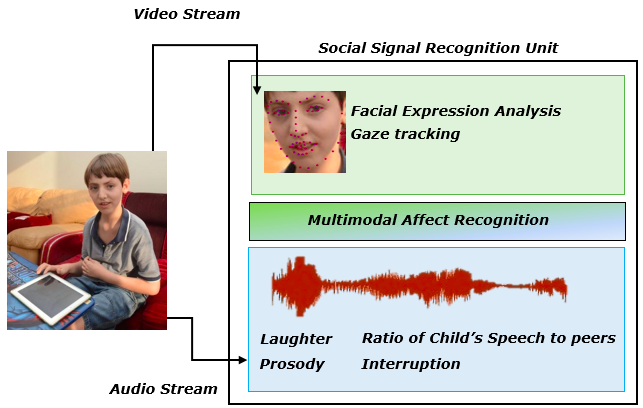

| Behnaz Nojavanasghari; Louis-Philippe Morency; Charles E. Hughes Exceptionally Social: Design of an Avatar-Mediated Interactive System for Promoting Social Skills in Children with Autism Proceedings Article In: Proceedings of CHI 2017. Denver, CO, May 6-11, pp. 1932-1939, 2017. @inproceedings{Nojavanasghari2017b,

title = {Exceptionally Social: Design of an Avatar-Mediated Interactive System for Promoting Social Skills in Children with Autism},

author = {Behnaz Nojavanasghari and Louis-Philippe Morency and Charles E. Hughes},

url = {https://dl.acm.org/citation.cfm?id=3053112&dl=ACM&coll=DL&CFID=835574635&CFTOKEN=94685731},

year = {2017},

date = {2017-05-06},

booktitle = {Proceedings of CHI 2017. Denver, CO, May 6-11},

pages = {1932-1939},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Myungho Lee; Gerd Bruder; Greg Welch Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment Journal Article In: Journal of Vision: Abstract Issue 2017, vol. 17, no. 10, pp. 357, 2017. @article{Lee2017,

title = {Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment},

author = {Myungho Lee and Gerd Bruder and Greg Welch},

doi = {10.1167/17.10.357},

year = {2017},

date = {2017-05-01},

journal = {Journal of Vision: Abstract Issue 2017},

volume = {17},

number = {10},

pages = {357},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Ryan Schubert; Gerd Bruder; Greg Welch Mitigating Perceptual Error in Synthetic Animatronics using Visual Feature Flow Journal Article In: Journal of Vision: Abstract Issue 2017, vol. 17, no. 10, pp. 331, 2017. @article{Schubert2017,

title = {Mitigating Perceptual Error in Synthetic Animatronics using Visual Feature Flow},

author = {Ryan Schubert and Gerd Bruder and Greg Welch},

doi = {10.1167/17.10.331},

year = {2017},

date = {2017-05-01},

journal = {Journal of Vision: Abstract Issue 2017},

volume = {17},

number = {10},

pages = {331},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

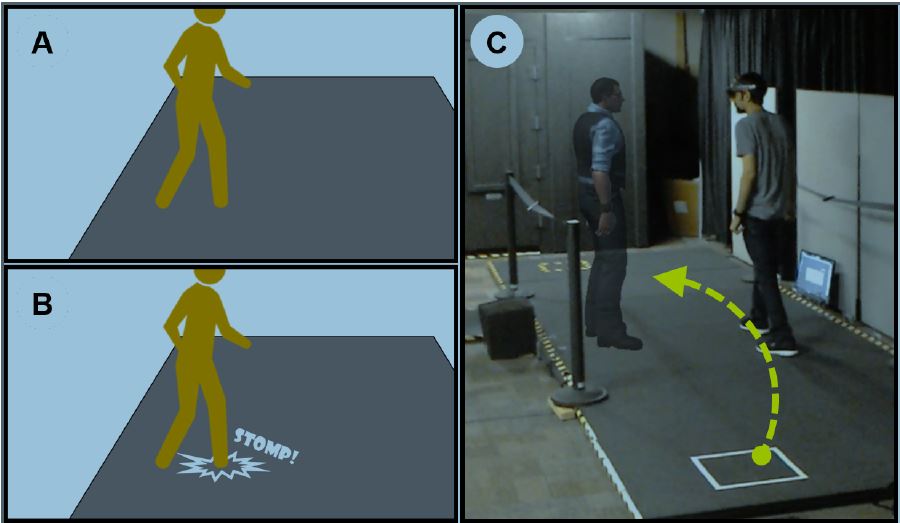

| Myungho Lee; Gerd Bruder; Greg Welch Exploring the Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment Proceedings Article In: Proceedings of IEEE Virtual Reality 2017, 2017. @inproceedings{Lee2017aa,

title = {Exploring the Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment},

author = {Myungho Lee and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/Lee2017aa.pdf},

doi = {10.1109/VR.2017.7892237},

year = {2017},

date = {2017-03-19},

booktitle = {Proceedings of IEEE Virtual Reality 2017},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

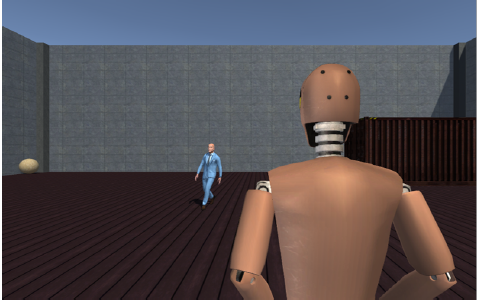

![[POSTER] Coherence Changes Gaze Behavior in Virtual Human Interactions](https://sreal.ucf.edu/wp-content/uploads/2017/02/Skarbez2017aa.png) | Richard Skarbez; Gregory F. Welch; Frederick P. Brooks; Mary Whitton [POSTER] Coherence Changes Gaze Behavior in Virtual Human Interactions Proceedings Article In: 2017 IEEE Virtual Reality (VR), pp. 287-288, 2017. @inproceedings{Skarbez2017aa,

title = {[POSTER] Coherence Changes Gaze Behavior in Virtual Human Interactions},

author = {Richard Skarbez and Gregory F. Welch and Frederick P. Brooks and Mary Whitton},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/Skarbez2017aa.pdf},

doi = {10.1109/VR.2017.7892289},

year = {2017},

date = {2017-03-19},

booktitle = {2017 IEEE Virtual Reality (VR)},

pages = {287-288},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|
