2018

|

| Kangsoo Kim; Gerd Bruder; Gregory F. Welch Blowing in the Wind: Increasing Copresence with a Virtual Human via Airflow Influence in Augmented Reality Proceedings Article In: Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018, pp. 183-190, 2018, (Honorable Mention Award). @inproceedings{Kim2018c,

title = {Blowing in the Wind: Increasing Copresence with a Virtual Human via Airflow Influence in Augmented Reality},

author = {Kangsoo Kim and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/10/Kim_Airflow_ICAT_EGVE2018.pdf},

doi = {10.2312/egve.20181332},

year = {2018},

date = {2018-11-07},

booktitle = {Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018},

pages = {183-190},

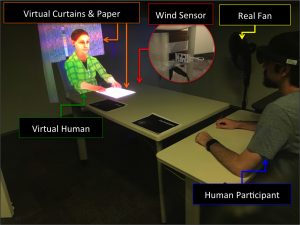

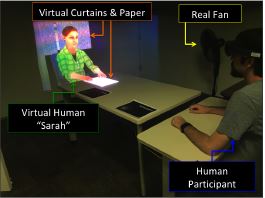

abstract = {In a social context where two or more interlocutors interact with each other in the same space, one's sense of copresence with the others is an important factor for the quality of communication and engagement in the interaction. Although augmented reality (AR) technology enables the superposition of virtual humans (VHs) as interlocutors in the real world, the resulting sense of copresence is usually far lower than with a real human interlocutor.

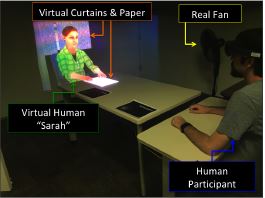

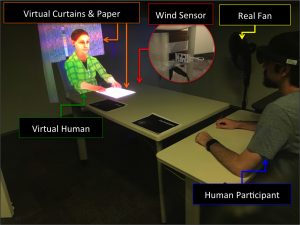

In this paper, we describe a human-subject study in which we explored and investigated the effects that subtle multi-modal interaction between the virtual environment and the real world, where a VH and human participants were co-located, can have on copresence. We compared two levels of gradually increased multi-modal interaction: (i) virtual objects being affected by real airflow as commonly experienced with fans in summer, and (ii) a VH showing awareness of this airflow. We chose airflow as one example of an environmental factor that can noticeably affect both the real and virtual worlds, and also cause subtle responses in interlocutors. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher copresence with airflow influence than without it, and the copresence would be even higher when the VH shows awareness of the airflow. The statistical analysis with the participant-reported copresence scores showed that there was an improvement of the perceived copresence with the VH when both the physical–virtual interactivity via airflow and the VH's awareness behaviors were present together. As the considered environmental factors are directed at the VH, i.e., they are not part of the direct interaction with the real human, they can provide a reasonably generalizable approach to support copresence in AR beyond the particular use case in the present experiment.},

note = {Honorable Mention Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

In a social context where two or more interlocutors interact with each other in the same space, one's sense of copresence with the others is an important factor for the quality of communication and engagement in the interaction. Although augmented reality (AR) technology enables the superposition of virtual humans (VHs) as interlocutors in the real world, the resulting sense of copresence is usually far lower than with a real human interlocutor.

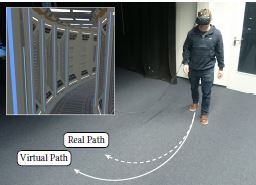

In this paper, we describe a human-subject study in which we explored and investigated the effects that subtle multi-modal interaction between the virtual environment and the real world, where a VH and human participants were co-located, can have on copresence. We compared two levels of gradually increased multi-modal interaction: (i) virtual objects being affected by real airflow as commonly experienced with fans in summer, and (ii) a VH showing awareness of this airflow. We chose airflow as one example of an environmental factor that can noticeably affect both the real and virtual worlds, and also cause subtle responses in interlocutors. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher copresence with airflow influence than without it, and the copresence would be even higher when the VH shows awareness of the airflow. The statistical analysis with the participant-reported copresence scores showed that there was an improvement of the perceived copresence with the VH when both the physical–virtual interactivity via airflow and the VH's awareness behaviors were present together. As the considered environmental factors are directed at the VH, i.e., they are not part of the direct interaction with the real human, they can provide a reasonably generalizable approach to support copresence in AR beyond the particular use case in the present experiment. |

| Nahal Norouzi; Kangsoo Kim; Jason Hochreiter; Myungho Lee; Salam Daher; Gerd Bruder; Gregory Welch A Systematic Survey of 15 Years of User Studies Published in the Intelligent Virtual Agents Conference Proceedings Article In: IVA '18 Proceedings of the 18th International Conference on Intelligent Virtual Agents, pp. 17-22, ACM, 2018, ISBN: 978-1-4503-6013-5/18/11. @inproceedings{Norouzi2018c,

title = {A Systematic Survey of 15 Years of User Studies Published in the Intelligent Virtual Agents Conference},

author = {Nahal Norouzi and Kangsoo Kim and Jason Hochreiter and Myungho Lee and Salam Daher and Gerd Bruder and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/p17-norouzi-2.pdf},

doi = {10.1145/3267851.3267901},

isbn = {978-1-4503-6013-5/18/11},

year = {2018},

date = {2018-11-05},

booktitle = {IVA '18 Proceedings of the 18th International Conference on Intelligent Virtual Agents},

pages = {17-22},

publisher = {ACM},

organization = {ACM},

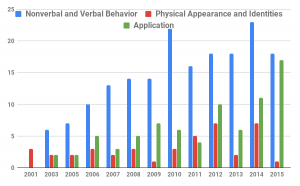

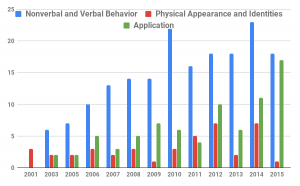

abstract = {The field of intelligent virtual agents (IVAs) has evolved immensely over the past 15 years, introducing new application opportunities in areas such as training, health care, and virtual assistants. In this survey paper, we provide a systematic review of the most influential user studies published in the IVA conference from 2001 to 2015 focusing on IVA development, human perception, and interactions. A total of 247 papers with 276 user studies have been classified and reviewed based on their contributions and impact. We identify the different areas of research and provide a summary of the papers with the highest impact. With the trends of past user studies and the current state of technology, we provide insights into future trends and research challenges.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Salam Daher; Jason Hochreiter; Nahal Norouzi; Laura Gonzalez; Gerd Bruder; Greg Welch Physical-Virtual Agents for Healthcare Simulation Proceedings Article In: Proceedings of IVA 2018, November 5-8, 2018, Sydney, NSW, Australia, ACM, 2018. @inproceedings{daher2018physical,

title = {Physical-Virtual Agents for Healthcare Simulation},

author = {Salam Daher and Jason Hochreiter and Nahal Norouzi and Laura Gonzalez and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/10/IVA2018_StrokeStudy_CameraReady_Editor_20180911_1608.pdf},

year = {2018},

date = {2018-11-04},

booktitle = {Proceedings of IVA 2018, November 5-8, 2018, Sydney, NSW, Australia},

publisher = {ACM},

abstract = {Conventional Intelligent Virtual Agents (IVAs) focus primarily on the visual and auditory channels for both the agent and the interacting human: the agent displays a visual appearance and speech as output, while processing the human’s verbal and non-verbal behavior as input. However, some interactions, particularly those between a patient and healthcare provider, inherently include tactile components. We introduce an Intelligent Physical-Virtual Agent (IPVA) head that occupies an appropriate physical volume; can be touched; and via human-in-the-loop control can change appearance, listen, speak, and react physiologically in response to human behavior. Compared to a traditional IVA, it provides a physical affordance, allowing for more realistic and compelling human-agent interactions. In a user study focusing on neurological assessment of a simulated patient showing stroke symptoms, we compared the IPVA head with a high-fidelity touch-aware mannequin that has a static appearance. Various measures of the human subjects indicated greater attention, affinity for, and presence with the IPVA patient, all factors that can improve healthcare training.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

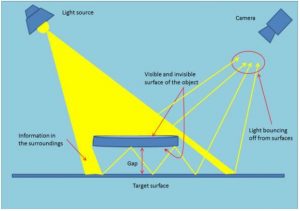

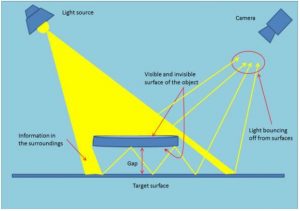

| Yazdan Jamshidi; Greg Welch Mine the Gap: Gap Estimation and Contact Detection Information via Adjacent Surface Observation Proceedings Article In: Proceedings of the International Conference on Pattern Recognition and Artificial Intelligence, pp. 54–58, ACM, New York, NY, USA, 2018. @inproceedings{Jamshidi2018aa,

title = {Mine the Gap: Gap Estimation and Contact Detection Information via Adjacent Surface Observation},

author = {Yazdan Jamshidi and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Jamshidi2018.pdf},

doi = {10.1145/3243250.3243260},

year = {2018},

date = {2018-10-24},

urldate = {2018-11-08},

booktitle = {Proceedings of the International Conference on Pattern Recognition and Artificial Intelligence},

pages = {54--58},

publisher = {ACM},

address = {New York, NY, USA},

series = {PRAI 2018},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Seeing is Believing: Improving the Perceived Trust in Visually Embodied Alexa in Augmented Reality](https://sreal.ucf.edu/wp-content/uploads/2018/08/Haesler2018Thumb-300x189.png) | Steffen Haesler; Kangsoo Kim; Gerd Bruder; Gregory F. Welch [POSTER] Seeing is Believing: Improving the Perceived Trust in Visually Embodied Alexa in Augmented Reality Proceedings Article In: Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018, 2018. @inproceedings{Haesler2018,

title = {[POSTER] Seeing is Believing: Improving the Perceived Trust in Visually Embodied Alexa in Augmented Reality},

author = {Steffen Haesler and Kangsoo Kim and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/08/Haesler2018.pdf},

doi = {10.1109/ISMAR-Adjunct.2018.00067},

year = {2018},

date = {2018-10-16},

booktitle = {Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018},

abstract = {Voice-activated Intelligent Virtual Assistants (IVAs) such as Amazon Alexa offer a natural and realistic form of interaction that pursues the level of social interaction among real humans. The user experience with such technologies depends to a large degree on the perceived trust in and reliability of the IVA. In this poster, we explore the effects of a three-dimensional embodied representation of Amazon Alexa in Augmented Reality (AR) on the user’s perceived trust in her being able to control Internet of Things (IoT) devices in a smart home environment. We present a preliminary study and discuss the potential of positive effects in perceived trust due to the embodied representation compared to a voice-only condition.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Kangsoo Kim; Luke Boelling; Steffen Haesler; Jeremy N. Bailenson; Gerd Bruder; Gregory F. Welch Does a Digital Assistant Need a Body? The Influence of Visual Embodiment and Social Behavior on the Perception of Intelligent Virtual Agents in AR Proceedings Article In: Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018, 2018. @inproceedings{Kim2018a,

title = {Does a Digital Assistant Need a Body? The Influence of Visual Embodiment and Social Behavior on the Perception of Intelligent Virtual Agents in AR},

author = {Kangsoo Kim and Luke Boelling and Steffen Haesler and Jeremy N. Bailenson and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/08/Kim2018a.pdf},

doi = {10.1109/ISMAR.2018.00039},

year = {2018},

date = {2018-10-16},

booktitle = {Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018},

abstract = {Intelligent Virtual Agents (IVAs) are becoming part of our everyday life, thanks to artificial intelligence technology and Internet of Things devices. For example, users can control their connected home appliances through natural voice commands to the IVA. However, most current-state commercial IVAs, such as Amazon Alexa, mainly focus on voice commands and voice feedback, and lack the ability to provide non-verbal cues which are an important part of social interaction. Augmented Reality (AR) has the potential to overcome this challenge by providing a visual embodiment of the IVA.

In this paper we investigate how visual embodiment and social behaviors influence the perception of the IVA. We hypothesize that a user's confidence in an IVA's ability to perform tasks is improved when imbuing the agent with a human body and social behaviors compared to the agent solely depending on voice feedback. In other words, an agent's embodied gesture and locomotion behavior exhibiting awareness of the surrounding real world or exerting influence over the environment can improve the perceived social presence with and confidence in the agent. We present a human-subject study, in which we evaluated the hypothesis and compared different forms of IVAs with speech, gesturing, and locomotion behaviors in an interactive AR scenario. The results show support for the hypothesis with measures of confidence, trust, and social presence. We discuss implications for future developments in the field of IVAs.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Bruce H. Thomas; Gregory F. Welch; Pierre Dragicevic; Niklas Elmqvist; Pourang Irani; Yvonne Jansen; Dieter Schmalstieg; Aurélien Tabard; Neven A. M. ElSayed; Ross T. Smith; Wesley Willett Situated Analytics Book Chapter In: Marriott, Kim; Schreiber, Falk; Dwyer, Tim; Klein, Karsten; Riche, Nathalie Henry; Itoh, Takayuki; Stuerzlinger, Wolfgang; Thomas, Bruce H. (Ed.): Immersive Analytics. Lecture Notes in Computer Science, vol. 11190, Chapter 7, pp. 185–220, Springer International Publishing, Cham, 2018, ISBN: 978-3-030-01388-2. @inbook{Thomas2018aa,

title = {Situated Analytics},

author = {Bruce H. Thomas and Gregory F. Welch and Pierre Dragicevic and Niklas Elmqvist and Pourang Irani and Yvonne Jansen and Dieter Schmalstieg and Aurélien Tabard and Neven A. M. ElSayed and Ross T. Smith and Wesley Willett},

editor = {Kim Marriott and Falk Schreiber and Tim Dwyer and Karsten Klein and Nathalie Henry Riche and Takayuki Itoh and Wolfgang Stuerzlinger and Bruce H. Thomas},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/10/Thomas2018aa.pdf},

doi = {10.1007/978-3-030-01388-2_7},

isbn = {978-3-030-01388-2},

year = {2018},

date = {2018-10-16},

urldate = {2018-10-23},

booktitle = {Immersive Analytics. Lecture Notes in Computer Science},

volume = {11190},

pages = {185--220},

publisher = {Springer International Publishing},

address = {Cham},

chapter = {7},

abstract = {This chapter introduces the concept of situated analytics that employs data representations organized in relation to germane objects, places, and persons for the purpose of understanding, sensemaking, and decision-making. The components of situated analytics are characterized in greater detail, including the users, tasks, data, representations, interactions, and analytical processes involved. Several case studies of projects and products are presented that exemplify situated analytics in action. Based on these case studies, a set of derived design considerations for building situated analytics applications is presented. Finally, there is an outline of a research agenda of challenges and research questions to explore in the future.},

keywords = {},

pubstate = {published},

tppubtype = {inbook}

}

|

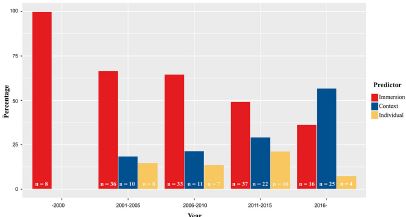

| Catherine S. Oh; Jeremy N. Bailenson; Gregory F. Welch A Systematic Review of Social Presence: Definition, Antecedents, and Implications Journal Article In: Frontiers in Robotics and AI, vol. 5, no. 114, 2018, ISBN: 2296-9144. @article{Oh2018,

title = {A Systematic Review of Social Presence: Definition, Antecedents, and Implications},

author = {Catherine S. Oh and Jeremy N. Bailenson and Gregory F. Welch},

editor = {Doron Friedman},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/10/Oh2018.pdf},

doi = {10.3389/frobt.2018.00114},

isbn = {2296-9144},

year = {2018},

date = {2018-10-15},

journal = {Frontiers in Robotics and AI},

volume = {5},

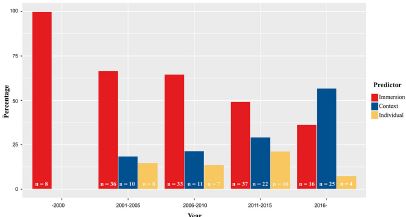

number = {114},

abstract = {Social presence, or the feeling of being there with a “real” person, is a crucial component of interactions that take place in virtual reality. This paper reviews the concept, antecedents, and implications of social presence, with a focus on the literature regarding the predictors of social presence. The article begins by exploring the concept of social presence, distinguishing it from two other dimensions of presence—telepresence and self-presence. After establishing the definition of social presence, the article offers a systematic review of 222 separate findings identified from 150 studies that investigate the factors (i.e., immersive qualities, contextual differences, and individual psychological traits) that predict social presence. Finally, the paper discusses the implications of heightened social presence and when it does and does not enhance one’s experience in a virtual environment.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

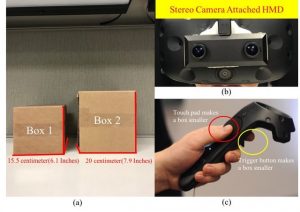

| Sungchul Jung; Gerd Bruder; Pamela Wisniewski; Christian Sandor; Charles E. Hughes Over My Hand: Using a Personalized Hand in VR to Improve Object Size Estimation, Body Ownership, and Presence Proceedings Article In: Proceedings of the 6th ACM Symposium on Spatial User Interaction (SUI 2018), Berlin, Germany, October 13-14, 2018, 2018, (Best Paper Award). @inproceedings{Jung2018b,

title = {Over My Hand: Using a Personalized Hand in VR to Improve Object Size Estimation, Body Ownership, and Presence},

author = {Sungchul Jung and Gerd Bruder and Pamela Wisniewski and Christian Sandor and Charles E. Hughes},

year = {2018},

date = {2018-10-13},

booktitle = {Proceedings of the 6th ACM Symposium on Spatial User Interaction (SUI 2018), Berlin, Germany, October 13-14, 2018},

note = {Best Paper Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Gregory F. Welch The Rise of Allocentric Interfaces and the Collapse of the Virtuality Continuum Proceedings Article In: Proceedings of the Symposium on Spatial User Interaction, pp. 192–192, ACM, Berlin, Germany, 2018, ISBN: 978-1-4503-5708-1. @inproceedings{Welch:2018,

title = {The Rise of Allocentric Interfaces and the Collapse of the Virtuality Continuum},

author = {Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Welch2018.pdf},

doi = {10.1145/3267782.3278470},

isbn = {978-1-4503-5708-1},

year = {2018},

date = {2018-10-01},

booktitle = {Proceedings of the Symposium on Spatial User Interaction},

pages = {192--192},

publisher = {ACM},

address = {Berlin, Germany},

series = {SUI '18},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

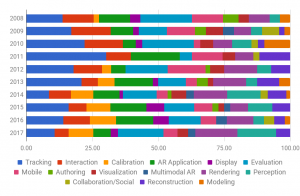

| Kangsoo Kim; Mark Billinghurst; Gerd Bruder; Henry Been-Lirn Duh; Gregory F. Welch Revisiting Trends in Augmented Reality Research: A Review of the 2nd Decade of ISMAR (2008–2017) Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 24, no. 11, pp. 2947-2962, 2018, ISSN: 1077-2626. @article{Kim2018b,

title = {Revisiting Trends in Augmented Reality Research: A Review of the 2nd Decade of ISMAR (2008–2017)},

author = {Kangsoo Kim and Mark Billinghurst and Gerd Bruder and Henry Been-Lirn Duh and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/08/Kim2018b.pdf},

doi = {10.1109/TVCG.2018.2868591},

issn = {1077-2626},

year = {2018},

date = {2018-09-06},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {24},

number = {11},

pages = {2947-2962},

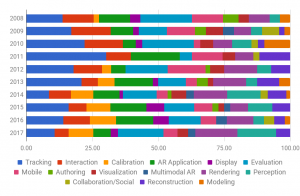

abstract = {In 2008, Zhou et al. presented a survey paper summarizing the previous ten years of ISMAR publications, which provided invaluable insights into the research challenges and trends associated with that time period. Ten years later, we review the research that has been presented at ISMAR conferences since the survey of Zhou et al., at a time when both academia and the AR industry are enjoying dramatic technological changes. Here we consider the research results and trends of the last decade of ISMAR by carefully reviewing the ISMAR publications from the period of 2008–2017, in the context of the first ten years. The numbers of papers for different research topics and their impacts by citations were analyzed while reviewing them—which reveals that there is a sharp increase in AR evaluation and rendering research. Based on this review we offer some observations related to potential future research areas or trends, which could be helpful to AR researchers and industry members looking ahead.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Nahal Norouzi; Luke Bölling; Gerd Bruder; Greg Welch Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement Proceedings Article In: Proceedings of the 12th international conference on disability, virtual reality and associated technologies (ICDVRAT 2018), pp. 8, 2018, ISBN: 978-0-7049-1548-0. @inproceedings{Norouzi2018b,

title = {Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement},

author = {Nahal Norouzi and Luke Bölling and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/ICDVRAT-ITAG-2018-Conference-Proceedings-122-129.pdf},

isbn = {978-0-7049-1548-0},

year = {2018},

date = {2018-09-04},

booktitle = {Proceedings of the 12th international conference on disability, virtual reality and associated technologies (ICDVRAT 2018)},

pages = {8},

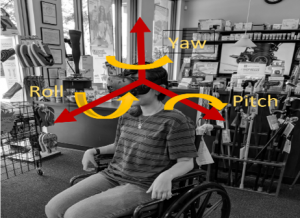

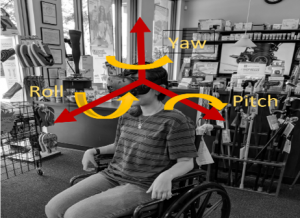

abstract = {A large body of research in the field of virtual reality (VR) is focused on making user interfaces more natural and intuitive by leveraging natural body movements to explore a virtual environment. For example, head-tracked user interfaces allow users to naturally look around a virtual space by moving their head. However, such approaches may not be appropriate for users with temporary or permanent limitations of their head movement. In this paper, we present techniques that allow these users to get full-movement benefits from a reduced range of physical movements. Specifically, we describe two techniques that augment virtual rotations relative to physical movement thresholds. We describe how each of the two techniques can be implemented with either a head tracker or an eye tracker, e.g., in cases when no physical head rotations are possible. We discuss their differences and limitations and we provide guidelines for the practical use of such augmented user interfaces.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Nahal Norouzi; Gerd Bruder; Greg Welch Assessing Vignetting as a Means to Reduce VR Sickness During Amplified Head Rotations Proceedings Article In: ACM Symposium on Applied Perception 2018, pp. 8, ACM 2018, ISBN: 978-1-4503-5894-1/18/08. @inproceedings{Norouzi2018,

title = {Assessing Vignetting as a Means to Reduce VR Sickness During Amplified Head Rotations},

author = {Nahal Norouzi and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/19-32-norouzi-1.pdf},

doi = {10.1145/3225153.3225162},

isbn = {978-1-4503-5894-1},

year = {2018},

date = {2018-08-10},

booktitle = {ACM Symposium on Applied Perception 2018},

pages = {8},

organization = {ACM},

abstract = {Redirected and amplified head movements have the potential to provide more natural interaction with virtual environments (VEs) than using controller-based input, which causes large discrepancies between visual and vestibular self-motion cues and leads to increased VR sickness.

However, such amplified head movements may also exacerbate VR sickness symptoms over no amplification.

Several general methods have been introduced to reduce VR sickness for controller-based input inside a VE, including a popular vignetting method that gradually reduces the field of view.

In this paper, we investigate the use of vignetting to reduce VR sickness when using amplified head rotations instead of controller-based input.

We also investigate whether the induced VR sickness is a result of the user's head acceleration or velocity by introducing two different modes of vignetting, one triggered by acceleration and the other by velocity.

Our dependent measures were pre and post VR sickness questionnaires as well as estimated discomfort levels that were assessed each minute of the experiment.

Our results show interesting effects between a baseline condition without vignetting and the two vignetting methods, generally indicating that the vignetting methods did not succeed in reducing VR sickness for most of the participants and, instead, led to a significant increase.

We discuss the results and potential explanations of our findings.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

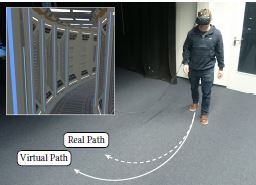

| Eike Langbehn; Frank Steinicke; Markus Lappe; Gregory F. Welch; Gerd Bruder In the Blink of an Eye – Leveraging Blink-Induced Suppression for Imperceptible Position and Orientation Redirection in Virtual Reality Journal Article In: ACM Transactions on Graphics (TOG), Special Issue on ACM SIGGRAPH 2018, vol. 37, no. 4, pp. 1-11, 2018. @article{Langbehn2018,

title = {In the Blink of an Eye – Leveraging Blink-Induced Suppression for Imperceptible Position and Orientation Redirection in Virtual Reality},

author = {Eike Langbehn and Frank Steinicke and Markus Lappe and Gregory F. Welch and Gerd Bruder},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/Langbehn2018.pdf},

doi = {10.1145/3197517.3201335},

year = {2018},

date = {2018-08-01},

journal = {ACM Transactions on Graphics (TOG), Special Issue on ACM SIGGRAPH 2018},

volume = {37},

number = {4},

pages = {1-11},

chapter = {66},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Ahmad Abualsamid; Charles E. Hughes Modeling Augmentative Communication with Amazon Lex and Polly Proceedings Article In: Ahram, Tareq Z.; Falcão, Christianne (Ed.): Advances in Usability, User Experience and Assistive Technology. AHFE 2018. Advances in Intelligent Systems and Computing, pp. 871-879, Springer, Cham, 2018. @inproceedings{Abualsamid2018b,

title = {Modeling Augmentative Communication with Amazon Lex and Polly},

author = {Ahmad Abualsamid and Charles E. Hughes},

editor = {Tareq Z. Ahram and Christianne Falcão},

url = {https://doi.org/10.1007/978-3-319-94947-5_85},

year = {2018},

date = {2018-06-28},

urldate = {2018-08-22},

booktitle = {Advances in Usability, User Experience and Assistive Technology. AHFE 2018. Advances in Intelligent Systems and Computing},

volume = {794},

pages = {871-879},

publisher = {Springer, Cham},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Roghayeh Barmaki; Charles E. Hughes Embodiment Analytics of Practicing Teachers in a Virtual Rehearsal Environment Journal Article In: Journal of Computer Assisted Learning, vol. 34, no. 4, pp. 387-396, 2018. @article{Barmaki2018,

title = {Embodiment Analytics of Practicing Teachers in a Virtual Rehearsal Environment},

author = {Roghayeh Barmaki and Charles E. Hughes},

doi = {10.1111/jcal.12268},

year = {2018},

date = {2018-05-21},

journal = {Journal of Computer Assisted Learning},

volume = {34},

number = {4},

pages = {387-396},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Ahmad Abualsamid; Charles E. Hughes Using a Mobile App to Reduce Off-Task Behaviors in Classrooms: A Pilot Study Journal Article In: Journal on Technology and Persons with Disabilities, vol. 6, pp. 378-384, 2018. @article{Abualsamid2018,

title = {Using a Mobile App to Reduce Off-Task Behaviors in Classrooms: A Pilot Study},

author = {Ahmad Abualsamid and Charles E. Hughes},

doi = {10211.3/203008},

year = {2018},

date = {2018-05-17},

journal = {Journal on Technology and Persons with Disabilities},

volume = {6},

pages = {378-384},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Glenn Taylor; Anthony Deschamps; Alyssa Tanaka; Denise Nicholson; Gerd Bruder; Gregory Welch; Francisco Guido-Sanz Augmented Reality for Tactical Combat Casualty Care Training Proceedings Article In: Augmented Cognition: Users and Contexts, pp. 227–239, Springer International Publishing, 2018, ISBN: 978-3-319-91467-1. @inproceedings{Taylor2018aa,

title = {Augmented Reality for Tactical Combat Casualty Care Training},

author = {Glenn Taylor and Anthony Deschamps and Alyssa Tanaka and Denise Nicholson and Gerd Bruder and Gregory Welch and Francisco Guido-Sanz},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Taylor2018aa.pdf},

isbn = {978-3-319-91467-1},

year = {2018},

date = {2018-05-03},

booktitle = {Augmented Cognition: Users and Contexts},

pages = {227--239},

publisher = {Springer International Publishing},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Kangsoo Kim Improving Social Presence with a Virtual Human via Multimodal Physical–Virtual Interactivity in AR Proceedings Article In: Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal QC, Canada — April 21 - 26, 2018, pp. SRC09:1–SRC09:6, ACM, New York, NY, USA, 2018, ISBN: 978-1-4503-5621-3. @inproceedings{Kim2018,

title = {Improving Social Presence with a Virtual Human via Multimodal Physical–Virtual Interactivity in AR},

author = {Kangsoo Kim},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/Kim2018.pdf},

doi = {10.1145/3170427.3180291},

isbn = {978-1-4503-5621-3},

year = {2018},

date = {2018-04-26},

urldate = {2018-05-11},

booktitle = {Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal QC, Canada — April 21 - 26, 2018},

number = {SRC09},

pages = {SRC09:1--SRC09:6},

publisher = {ACM},

address = {New York, NY, USA},

series = {CHI EA '18},

abstract = {In a social context where a real human interacts with a virtual human (VH) in the same space, one's sense of social/co-presence with the VH is an important factor for the quality of interaction and the VH's social influence to the human user in context. Although augmented reality (AR) enables the superposition of VHs in the real world, the resulting sense of social/co-presence is usually far lower than with a real human. In this paper, we introduce a research approach employing multimodal interactivity between the virtual environment and the physical world, where a VH and a human user are co-located, to improve the social/co-presence with the VH. A preliminary study suggests a promising effect on the sense of copresence with a VH when a subtle airflow from a real fan can blow a virtual paper and curtains next to the VH as a physical–virtual interactivity. Our approach can be generalized to support social/co-presence with any virtual contents in AR beyond the particular VH scenarios.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Fear of falling and eye movement behavior in young adults and older adults during walking: A case study](https://sreal.ucf.edu/wp-content/uploads/2018/05/Thiamwong2018aa10.jpg) | Ladda Thiamwong; Nahal Norouzi; Gregory Welch [POSTER] Fear of falling and eye movement behavior in young adults and older adults during walking: A case study Proceedings Article In: 39th Annual Southern Gerontological Society Meeting, Buford, GA USA, 2018. @inproceedings{Thiamwong2018aa,

title = {[POSTER] Fear of falling and eye movement behavior in young adults and older adults during walking: A case study},

author = {Ladda Thiamwong and Nahal Norouzi and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/03/Thiamwong2018aa.pdf},

year = {2018},

date = {2018-04-11},

booktitle = {39th Annual Southern Gerontological Society Meeting},

address = {Buford, GA USA},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Seeing is Believing: Improving the Perceived Trust in Visually Embodied Alexa in Augmented Reality](https://sreal.ucf.edu/wp-content/uploads/2018/08/Haesler2018Thumb-300x189.png)
