2010

|

| Frank Steinicke; Gerd Bruder; Scott Kuhl Perception of Perspective Distortions of Man-Made Virtual Objects Proceedings Article In: Proceedings of the ACM International Conference and Exhibition on Computer Graphics and Interactive Techniques (SIGGRAPH) (Conference DVD), ACM Press, 2010. @inproceedings{SBK10,

title = {Perception of Perspective Distortions of Man-Made Virtual Objects},

author = {Frank Steinicke and Gerd Bruder and Scott Kuhl},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBK10.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the ACM International Conference and Exhibition on Computer Graphics and Interactive Techniques (SIGGRAPH) (Conference DVD)},

publisher = {ACM Press},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

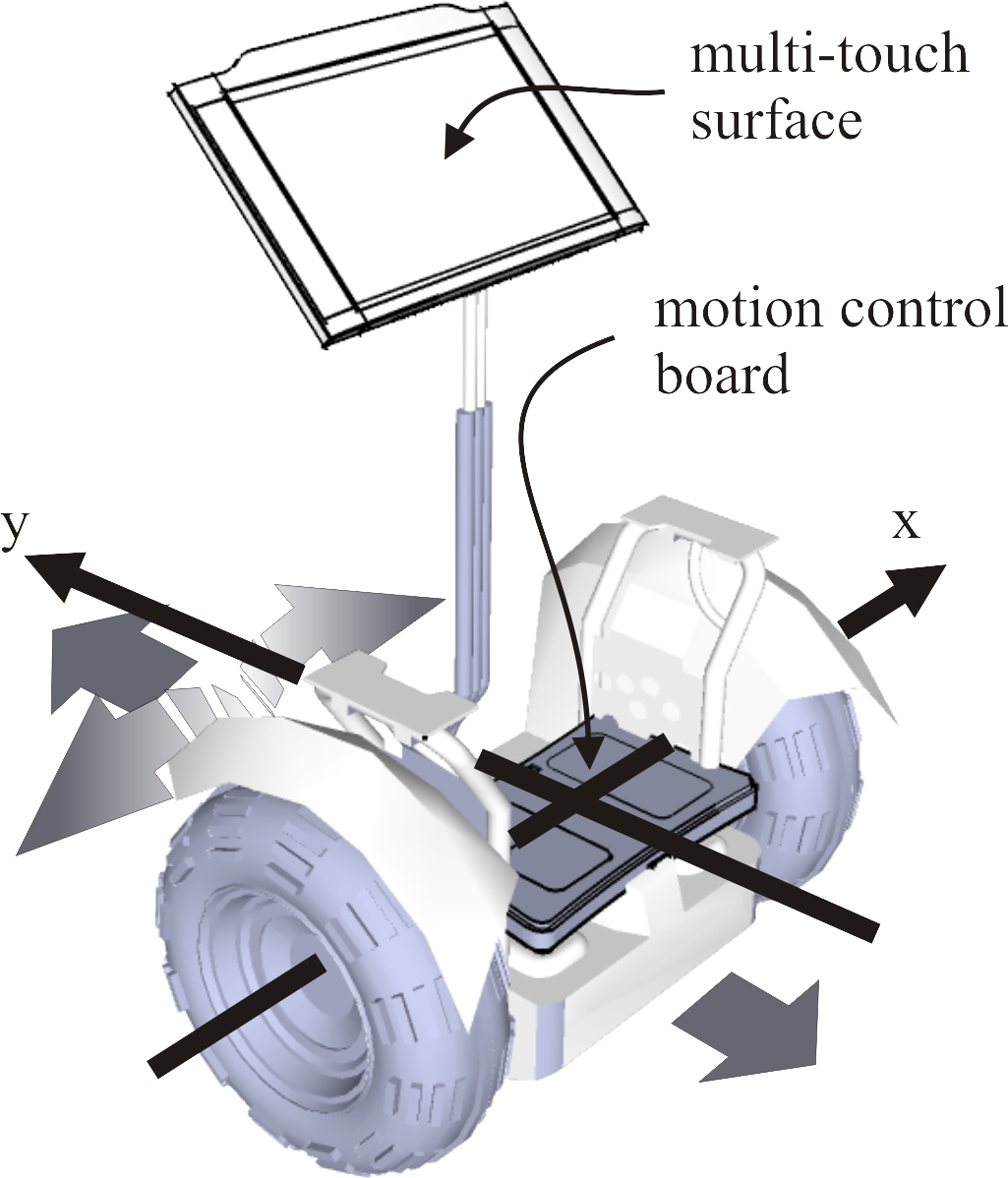

| Dimitar Valkov; Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs Navigation through Geospatial Environments with a Multi-Touch enabled Human-Transporter Metaphor Proceedings Article In: Proceedings of Geoinformatik, 2010. @inproceedings{VSBH10a,

title = {Navigation through Geospatial Environments with a Multi-Touch enabled Human-Transporter Metaphor},

author = {Dimitar Valkov and Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs},

year = {2010},

date = {2010-01-01},

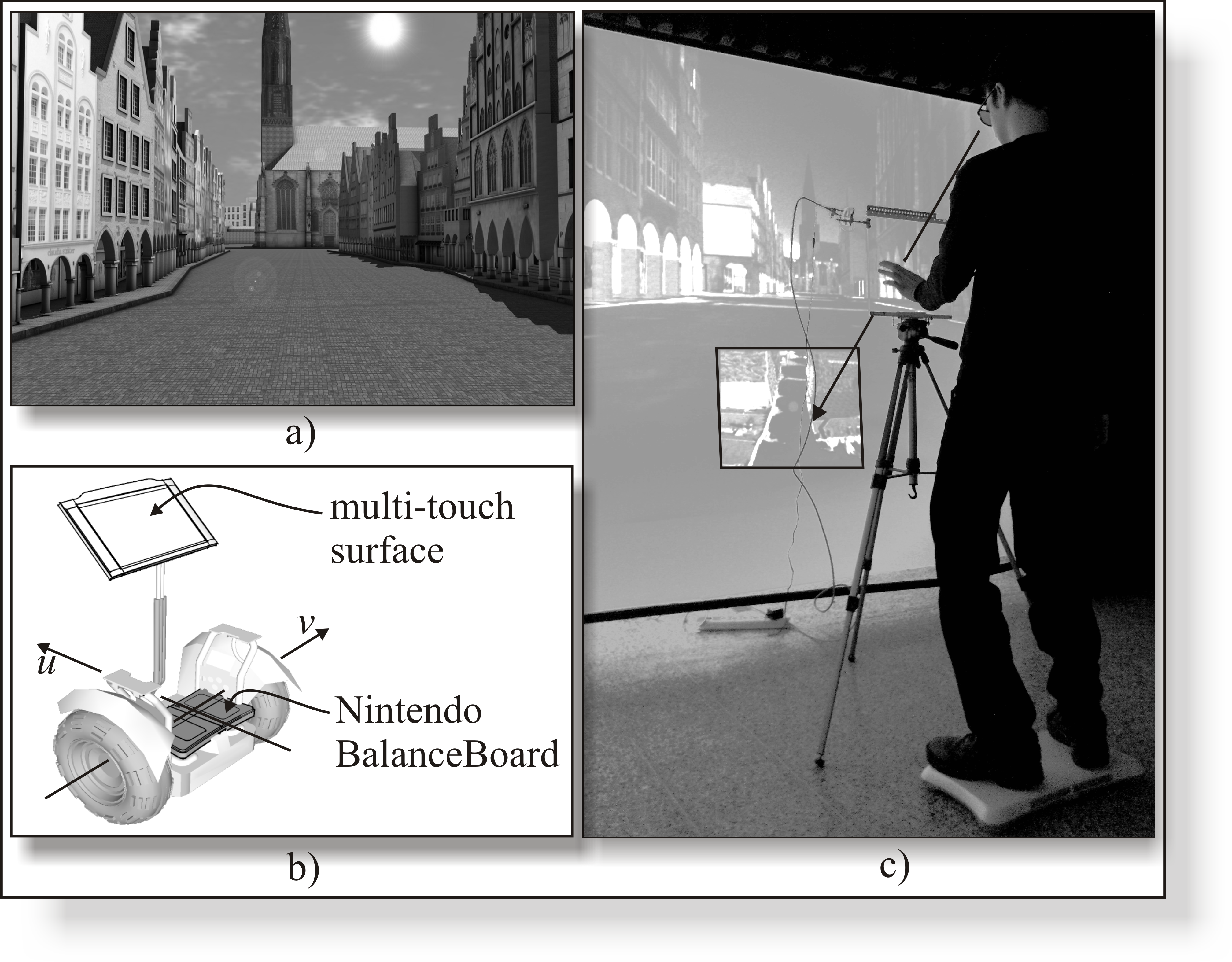

booktitle = {Proceedings of Geoinformatik},

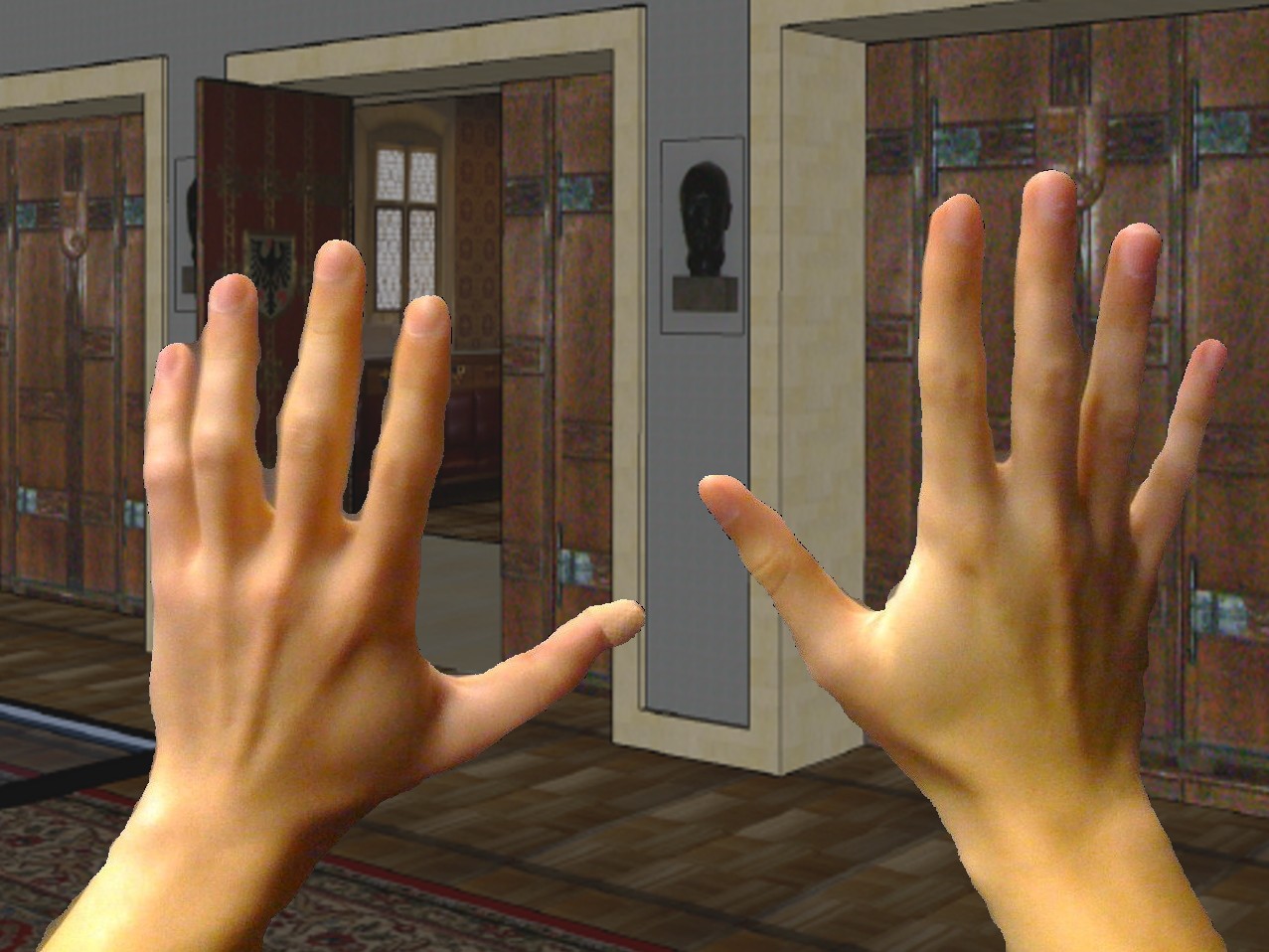

abstract = {Geospatial environments provide users with complex and detailed 3D data sets. While many different visualization techniques allow valuable insights, intuitive and natural exploration approaches are often missing, and hence usually only domain-experts are able to efficiently explore such data sets. In this paper we demonstrate a virtual reality (VR) based setup for geographic information systems (GIS), which allows users to perform navigation tasks in stereoscopically displayed interactive 3D geospatial environments using multi-touch hand gestures in combination with foot input. Furthermore, we have performed an initial user study in which we analyzed the proposed setup.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Gerd Bruder; Betty Mohler; Gabriel Cirio JVRC Tutorial: Walking Experiences in Virtual Worlds Book ACM Press, 2010. @book{BMC10,

title = {JVRC Tutorial: Walking Experiences in Virtual Worlds},

author = {Gerd Bruder and Betty Mohler and Gabriel Cirio},

year = {2010},

date = {2010-01-01},

publisher = {ACM Press},

series = {Course Notes of the Joint Virtual Reality Conference of EuroVR - EGVE - VEC},

abstract = {Active exploration enables us humans to construct a rich and coherent percept of our environment. By far the most natural way to move through the real world is via locomotion like walking or running. The same should also be true for computer generated three-dimensional environments. Keeping such an active and dynamic ability to navigate through large-scale virtual scenes is of great interest for many 3D applications demanding locomotion, such as tourism, architecture or interactive entertainment. However, today it is still mostly impossible to freely walk through computer generated environments in order to actively explore them. The primary reason for this is the scientific and technological underdevelopment in this sector. While moving in the real world, sensory information such as vestibular, proprioceptive, and efferent copy signals as well as visual information create consistent multi-sensory cues that indicate one's own motion, i.e., acceleration, speed and direction of travel. Computer graphics environments were initially restricted to visual displays, combined with interaction devices, e.g., joystick or mouse, for providing (often unnatural) inputs to generate self-motion. Nowadays, more and more interaction devices, e.g., Nintendo's Wii or Sony's EyeToy, enable intuitive and natural interaction. In this context, many research groups are investigating natural, multimodal methods of generating self-motion in virtual worlds based on this consumer hardware. An obvious approach is to transfer the user's tracked head movements to changes of the camera in the virtual world by means of a one-to-one mapping. Then, a one-meter movement in the real world is mapped to a one-meter movement of the virtual camera in the corresponding direction in the virtual environment (VE). This technique has the drawback that the user's movements are restricted by a limited range of the tracking sensors, e.g., optical cameras, and a rather small workspace in the real world.
The size of the virtual world often differs from the size of the tracked space so that a straightforward implementation of omni-directional and unlimited walking is not possible. Thus, concepts for virtual locomotion methods are needed that enable walking over large distances in the virtual world while remaining within a relatively small space in the real world. In this tutorial we will present an overview of the development of locomotion interfaces for computer generated virtual environments ranging from desktop-based camera manipulations simulating walking, and different walking metaphors for virtual reality (VR)-based environments to state-of-the-art hardware-based solutions that enable omni-directional and unlimited real walking through virtual worlds.},

keywords = {},

pubstate = {published},

tppubtype = {book}

}

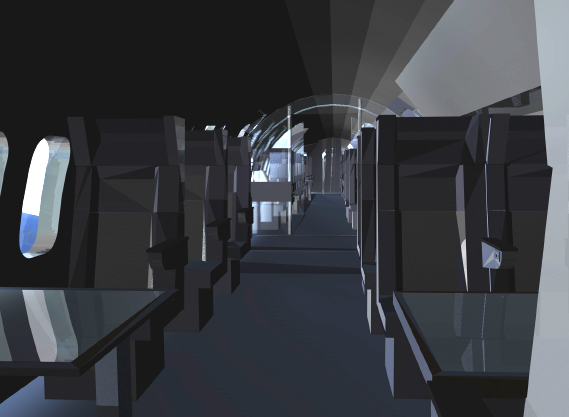

Active exploration enables us humans to construct a rich and coherent percept of our environment. By far the most natural way to move through the real world is via locomotion like walking or running. The same should also be true for computer generated three-dimensional environments. Keeping such an active and dynamic ability to navigate through large-scale virtual scenes is of great interest for many 3D applications demanding locomotion, such as tourism, architecture or interactive entertainment. However, today it is still mostly impossible to freely walk through computer generated environments in order to actively explore them. The primary reason for this is the scientific and technological underdevelopment in this sector. While moving in the real world, sensory information such as vestibular, proprioceptive, and efferent copy signals as well as visual information create consistent multi-sensory cues that indicate one's own motion, i.e., acceleration, speed and direction of travel. Computer graphics environments were initially restricted to visual displays, combined with interaction devices, e.g. joystick or mouse, for providing (often unnatural) inputs to generate self-motion. Nowadays, more and more interaction devices, e.g., Nintendo's Wii or Sony's EyeToy, enable intuitive and natural interaction. In this context, many research groups are investigating natural, multimodal methods of generating self-motion in virtual worlds based on these consumer hardware. An obvious approach is to transfer the user's tracked head movements to changes of the camera in the virtual world by means of a one-to-one mapping. Then, a one meter movement in the real world is mapped to a one meter movement of the virtual camera in the corresponding direction in the virtual environment (VE). This technique has the drawback that the user's movements are restricted by a limited range of the tracking sensors, e.g. optical cameras, and a rather small workspace in the real world. 
The size of the virtual world often differs from the size of the tracked space so that a straightforward implementation of omni-directional and unlimited walking is not possible. Thus, concepts for virtual locomotion methods are needed that enable walking over large distances in the virtual world while remaining within a relatively small space in the real world. In this tutorial we will present an overview about the development of locomotion interfaces for computer generated virtual environments ranging from desktop-based camera manipulations simulating walking, and different walking metaphors for virtual reality (VR)-based environments to state-of-the-art hardware-based solutions that enable omni-directional and unlimited real walking through virtual worlds. |

| Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Anthony Steed Gradual Transitions and their Effects on Presence and Distance Estimation Journal Article In: Computers & Graphics, vol. 34, no. 1, pp. 26–33, 2010. @article{SBHS10,

title = {Gradual Transitions and their Effects on Presence and Distance Estimation},

author = {Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Anthony Steed},

year = {2010},

date = {2010-01-01},

journal = {Computers \& Graphics},

volume = {34},

number = {1},

pages = {26--33},

abstract = {Several experiments have provided evidence that ego-centric distances are perceived as compressed in immersive virtual environments relative to the real world. The principal factors responsible for this phenomenon have remained largely unknown. However, recent experiments suggest that when the virtual environment (VE) is an exact replica of a user's real physical surroundings, the person's distance perception improves. Based on this observation, it sounds reasonable that if subjects feel a high degree of situational awareness in a known VE, their ability for estimating distances may be much better compared to an unfamiliar virtual world. This raises the question, whether starting the virtual reality (VR) experience in such a virtual replica and gradually transiting to a different VE has potential to increase a person's sense of presence as well as distance perception skills in an unknown virtual world. In this case the virtual replica serves as transitional environment between reality and a virtual world. Although transitional environments are already applied in some VR demonstrations, until now it has not been verified whether such a gradual transition improves a user's VR experience. We have conducted two experiments to quantify to what extent a gradual transition to a virtual world via a transitional environment improves a person's level of presence and ability to estimate distances in the VE. We have found that the subjects' self-reported sense of presence shows significantly higher scores, and that the subjects' distance estimation skills in the VE improved significantly, when they entered the VE via a transitional environment.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

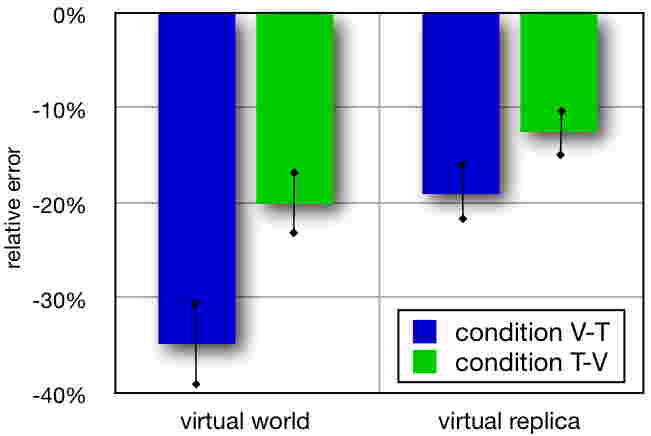

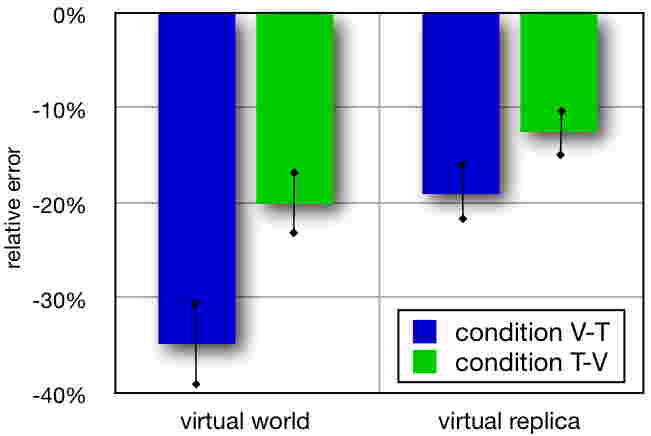

Several experiments have provided evidence that ego-centric distances are perceived as compressed in immersive virtual environments relative to the real world. The principal factors responsible for this phenomenon have remained largely unknown. However, recent experiments suggest that when the virtual environment (VE) is an exact replica of a user's real physical surroundings, the person's distance perception improves. Based on this observation, it sounds reasonable that if subjects feel a high degree of situational awareness in a known VE, their ability for estimating distances may be much better compared to an unfamiliar virtual world. This raises the question, whether starting the virtual reality (VR) experience in such a virtual replica and gradually transiting to a different VE has potential to increase a person's sense of presence as well as distance perception skills in an unknown virtual world. In this case the virtual replica serves as transitional environment between reality and a virtual world. Although transitional environments are already applied in some VR demonstrations, until now it has not been verified whether such a gradual transition improves a user's VR experience. We have conducted two experiments to quantify to what extent a gradual transition to a virtual world via a transitional environment improves a person's level of presence and ability to estimate distances in the VE. We have found that the subjects' self-reported sense of presence shows significantly higher scores, and that the subjects' distance estimation skills in the VE improved significantly, when they entered the VE via a transitional environment. |

| Frank Steinicke; Gerd Bruder; Jason Jerald; Harald Frenz; Markus Lappe Estimation of Detection Thresholds for Redirected Walking Techniques Journal Article In: IEEE Transactions on Visualization and Computer Graphics (TVCG), vol. 16, no. 1, pp. 17–27, 2010. @article{SBJFL10,

title = {Estimation of Detection Thresholds for Redirected Walking Techniques},

author = {Frank Steinicke and Gerd Bruder and Jason Jerald and Harald Frenz and Markus Lappe},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBJFL10-optimized.pdf},

year = {2010},

date = {2010-01-01},

journal = {IEEE Transactions on Visualization and Computer Graphics (TVCG)},

volume = {16},

number = {1},

pages = {17--27},

abstract = {In immersive virtual environments users can control their virtual viewpoint by walking through the real world, and movements are mapped one-to-one to virtual camera motions. With redirection techniques, the virtual camera is manipulated by applying gains so that the virtual world moves differently than the real world. We have quantified how much humans can unknowingly be redirected on paths that differ from the visually perceived paths. We tested 12 subjects in three different psychophysical experiments. In experiment E1, subjects performed rotations with different gains, and then had to choose whether the visually perceived rotation was smaller or greater than the physical rotation. In experiment E2, subjects chose whether the physical walk was shorter or longer than the visually perceived scaled travel distance. In experiment E3, subjects estimated the path curvature when walking a curved path in the real world while the visual display showed a straight path in the virtual world. Our results show that users can be turned physically about 49% more or 20% less than the perceived virtual rotation, distances can be downscaled by 14% and up-scaled by 26%, and users can be redirected on a circular arc with a radius greater than 22 m.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Pete Willemsen Change Blindness Phenomena for Stereoscopic Projection Systems Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), pp. 187–194, 2010. @inproceedings{SBHW10,

title = {Change Blindness Phenomena for Stereoscopic Projection Systems},

author = {Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Pete Willemsen},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBHW10-optimized.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

pages = {187--194},

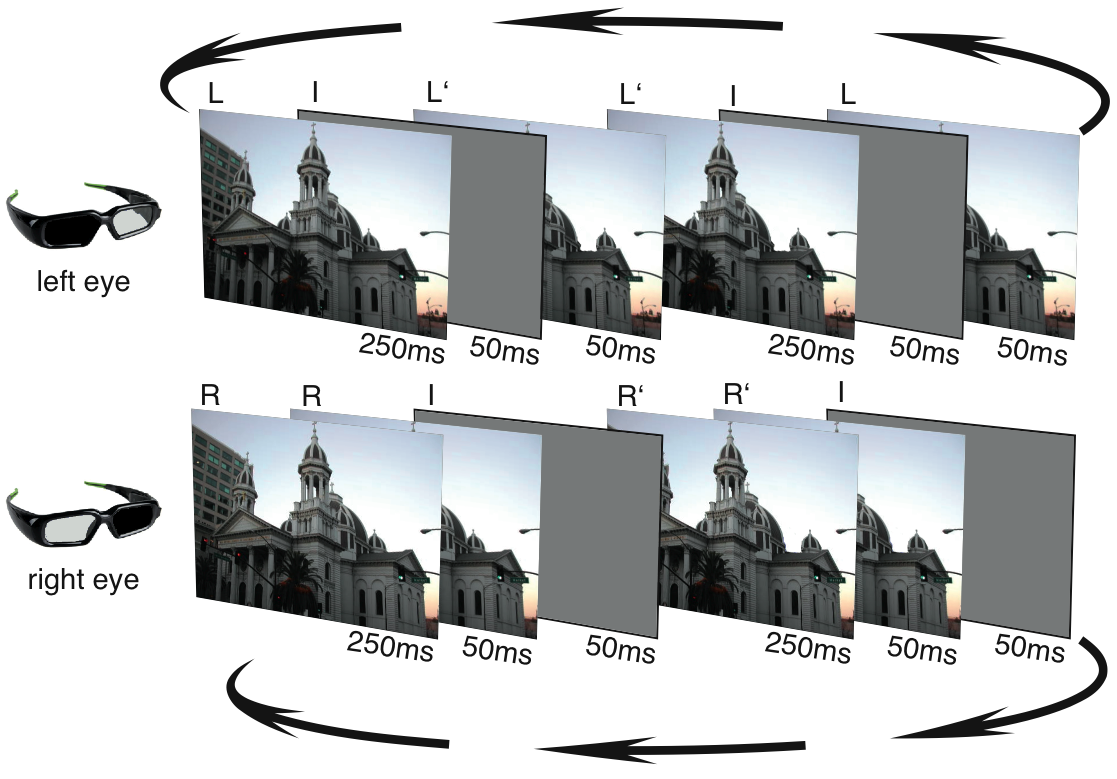

abstract = {In visual perception, change blindness describes the phenomenon that persons viewing a visual scene may apparently fail to detect significant changes in that scene. These phenomena have been observed in both computer generated imagery and real-world scenes. Several studies have demonstrated that change blindness effects occur primarily during visual disruptions such as blinks or saccadic eye movements. However, until now the influence of stereoscopic vision on change blindness has not been studied thoroughly in the context of visual perception research. In this paper we introduce change blindness techniques for stereoscopic projection systems, providing the ability to substantially modify a virtual scene in a manner that is difficult for observers to perceive. We evaluate techniques for passive and active stereoscopic viewing and compare the results to those of monoscopic viewing conditions. For stereoscopic viewing conditions, we find that change blindness phenomena can be applied with a larger magnitude as compared to monoscopic viewing of a scene. We have also evaluated the potential of the presented techniques for allowing abrupt, and yet significant, changes of a stereoscopically displayed virtual reality environment.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

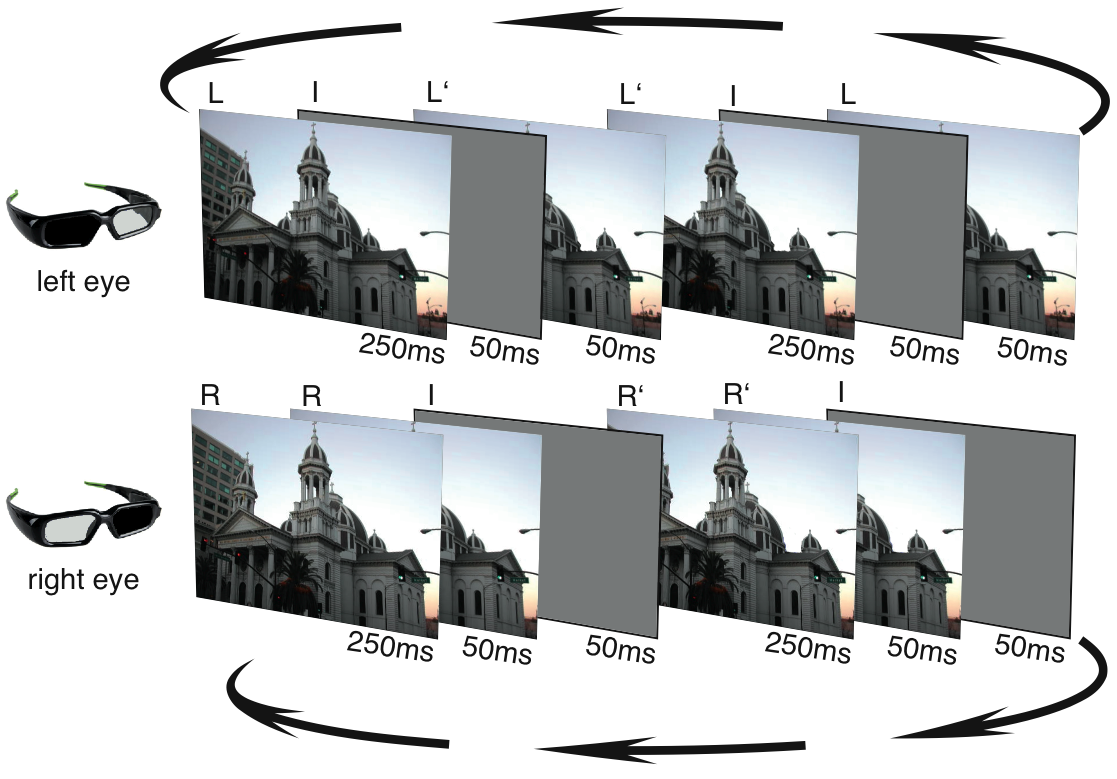

In visual perception, change blindness describes the phenomenon that persons viewing a visual scene may apparently fail to detect significant changes in that scene. These phenomena have been observed in both computer generated imagery and real-world scenes. Several studies have demonstrated that change blindness effects occur primarily during visual disruptions such as blinks or saccadic eye movements. However, until now the influence of stereoscopic vision on change blindness has not been studied thoroughly in the context of visual perception research. In this paper we introduce change blindness techniques for stereoscopic projection systems, providing the ability to substantially modify a virtual scene in a manner that is difficult for observers to perceive. We evaluate techniques for passive and active stereoscopic viewing and compare the results to those of monoscopic viewing conditions. For stereoscopic viewing conditions, we find that change blindness phenomena can be applied with a larger magnitude as compared to monoscopic viewing of a scene. We have also evaluated the potential of the presented techniques for allowing abrupt, and yet significant, changes of a stereoscopically displayed virtual reality environment. |

| Annika Busch; Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs Auswirkungen biokularer Videobilder als Selbst-Repr Proceedings Article In: Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR), pp. 157–170, 2010. @inproceedings{BSBH10,

title = {Auswirkungen biokularer Videobilder als Selbst-Repr},

author = {Annika Busch and Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR)},

pages = {157--170},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

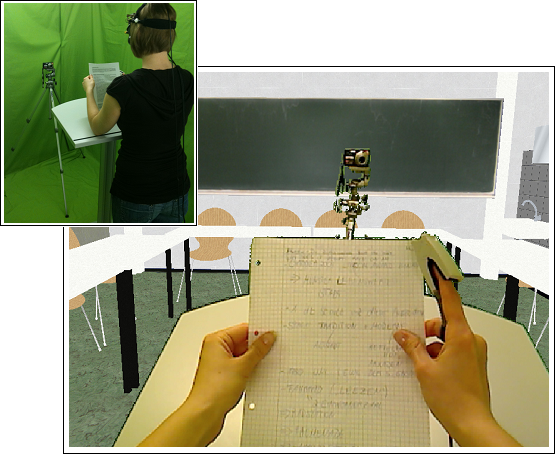

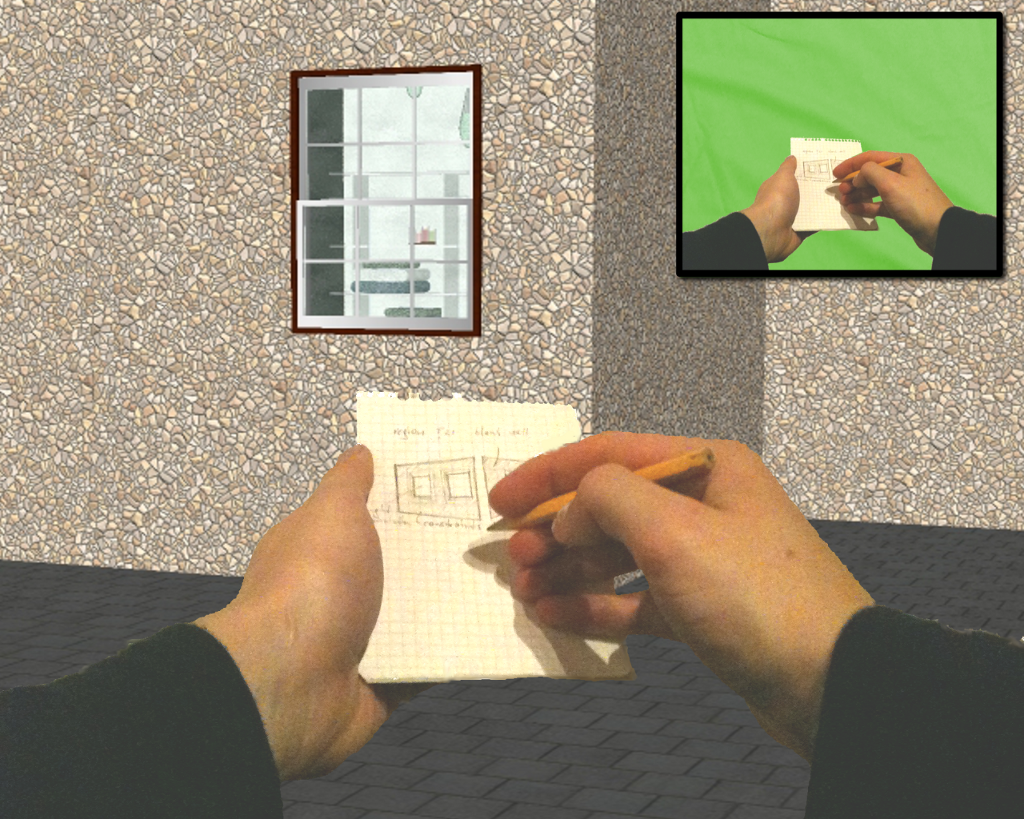

| Gerd Bruder; Frank Steinicke; Dimitar Valkov; Klaus H. Hinrichs Augmented Virtual Studio for Architectural Exploration Proceedings Article In: Proceedings of the Virtual Reality International Conference (VRIC), pp. 1–8, 2010. @inproceedings{BSVH10,

title = {Augmented Virtual Studio for Architectural Exploration},

author = {Gerd Bruder and Frank Steinicke and Dimitar Valkov and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSVH10.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the Virtual Reality International Conference (VRIC)},

pages = {1--8},

abstract = {Immersive virtual environments and natural 3D user interfaces have shown great potential in the field of architecture, especially for exploration, presentation and review of designs. In this paper we propose an augmented virtual studio environment for architectural exploration based on a mixed-reality head-mounted display environment. The proposed system supports (1) real walking through large virtual building models, (2) visual feedback about the user's body and (3) display of real-world objects in the virtual view based on color transfer functions. We describe the locomotion user interface for immersive exploration, as well as a mixed-reality 3D user interface for interaction with virtual designs.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

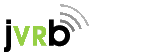

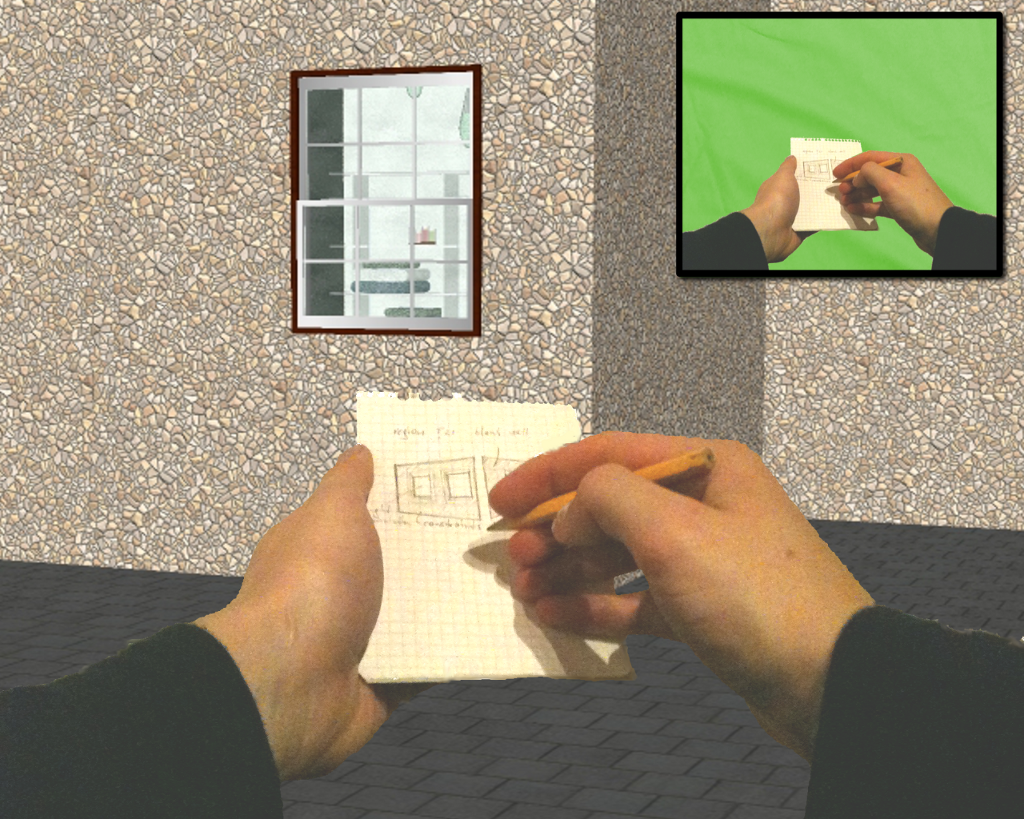

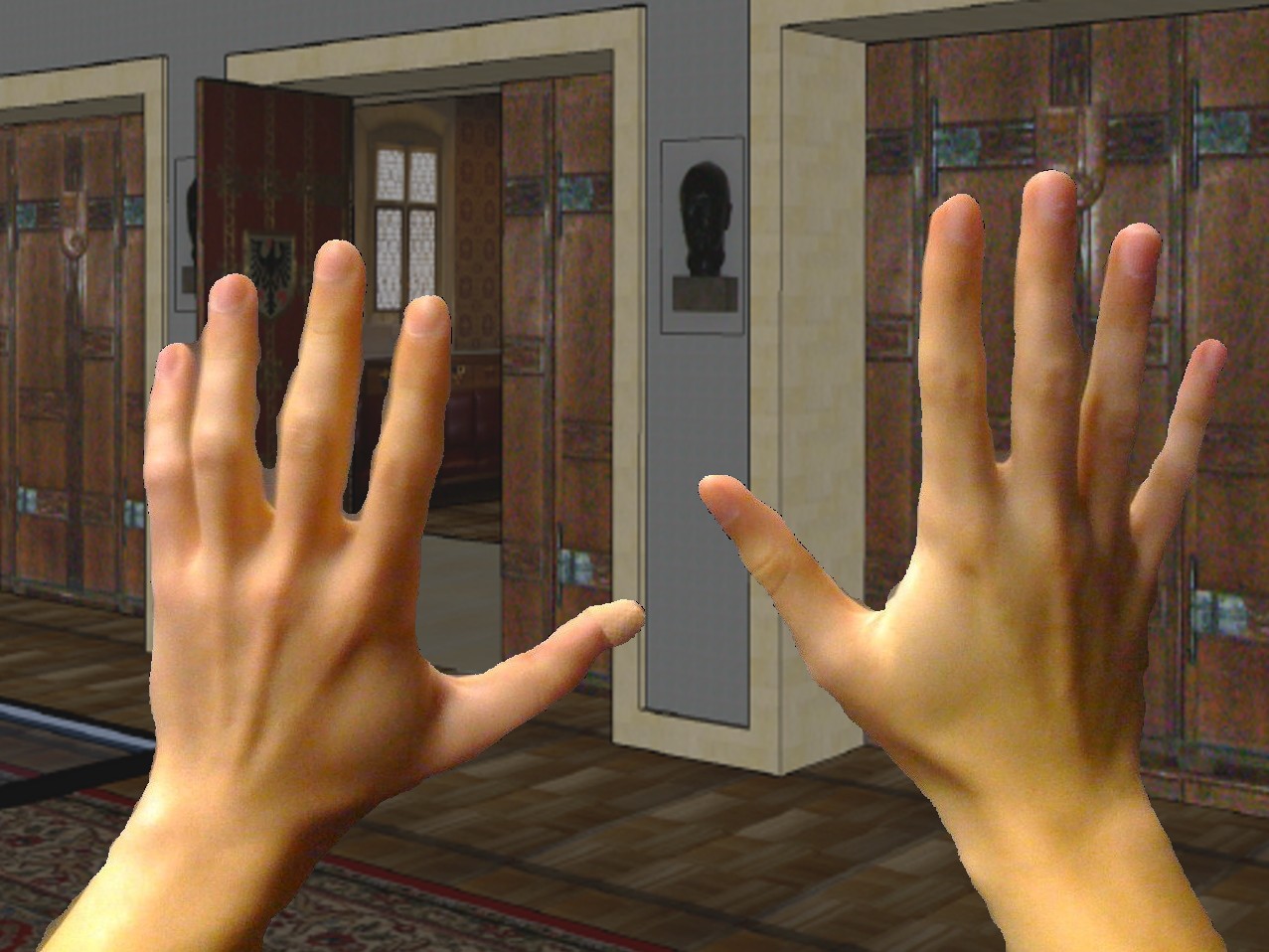

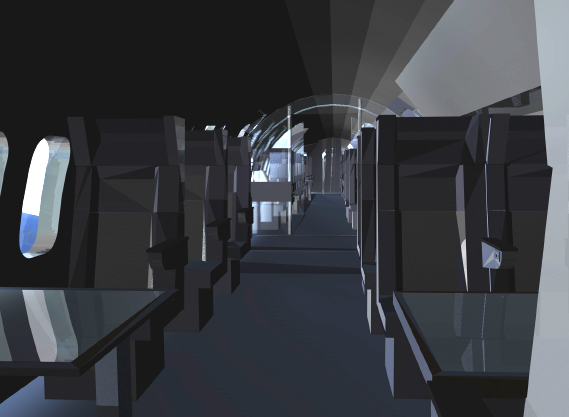

Immersive virtual environments and natural 3D user interfaces have shown great potential in the field of architecture, especially for exploration, presentation and review of designs. In this paper we propose an augmented virtual studio environment for architectural exploration based on a mixed-reality head-mounted display environment. The proposed system supports (1) real walking through large virtual building models, (2) visual feedback about the user's body and (3) display of real-world objects in the virtual view based on color transfer functions. We describe the locomotion user interface for immersive exploration, as well as a mixed-reality 3D user interface for interaction with virtual designs. |

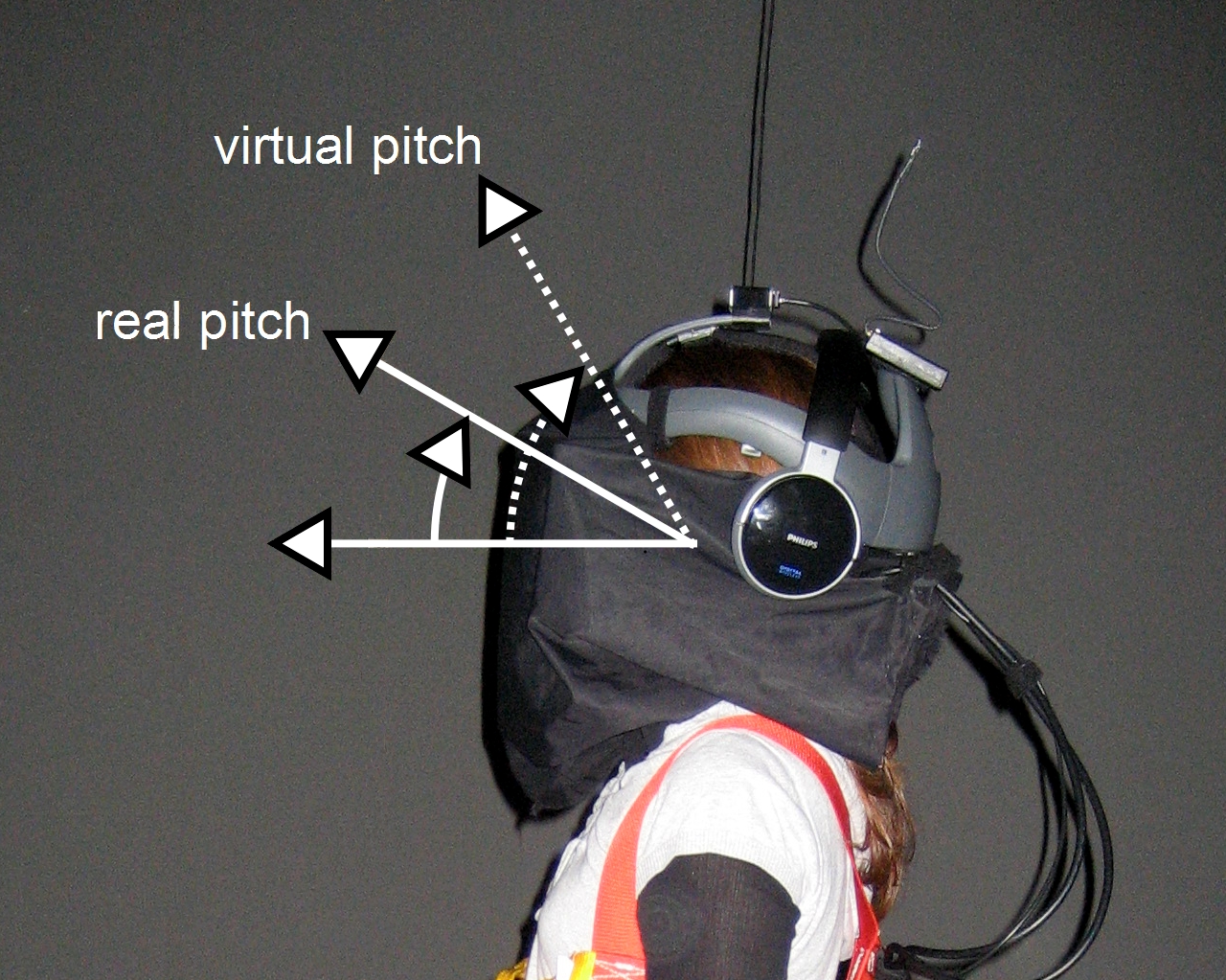

| Benjamin Bolte; Gerd Bruder; Frank Steinicke; Klaus H. Hinrichs; Markus Lappe Augmentation Techniques for Efficient Exploration in Head-Mounted Display Environments Proceedings Article In: Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST), pp. 11–18, 2010. @inproceedings{BBSHL10,

title = {Augmentation Techniques for Efficient Exploration in Head-Mounted Display Environments},

author = {Benjamin Bolte and Gerd Bruder and Frank Steinicke and Klaus H. Hinrichs and Markus Lappe},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BBSHL10.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST)},

pages = {11--18},

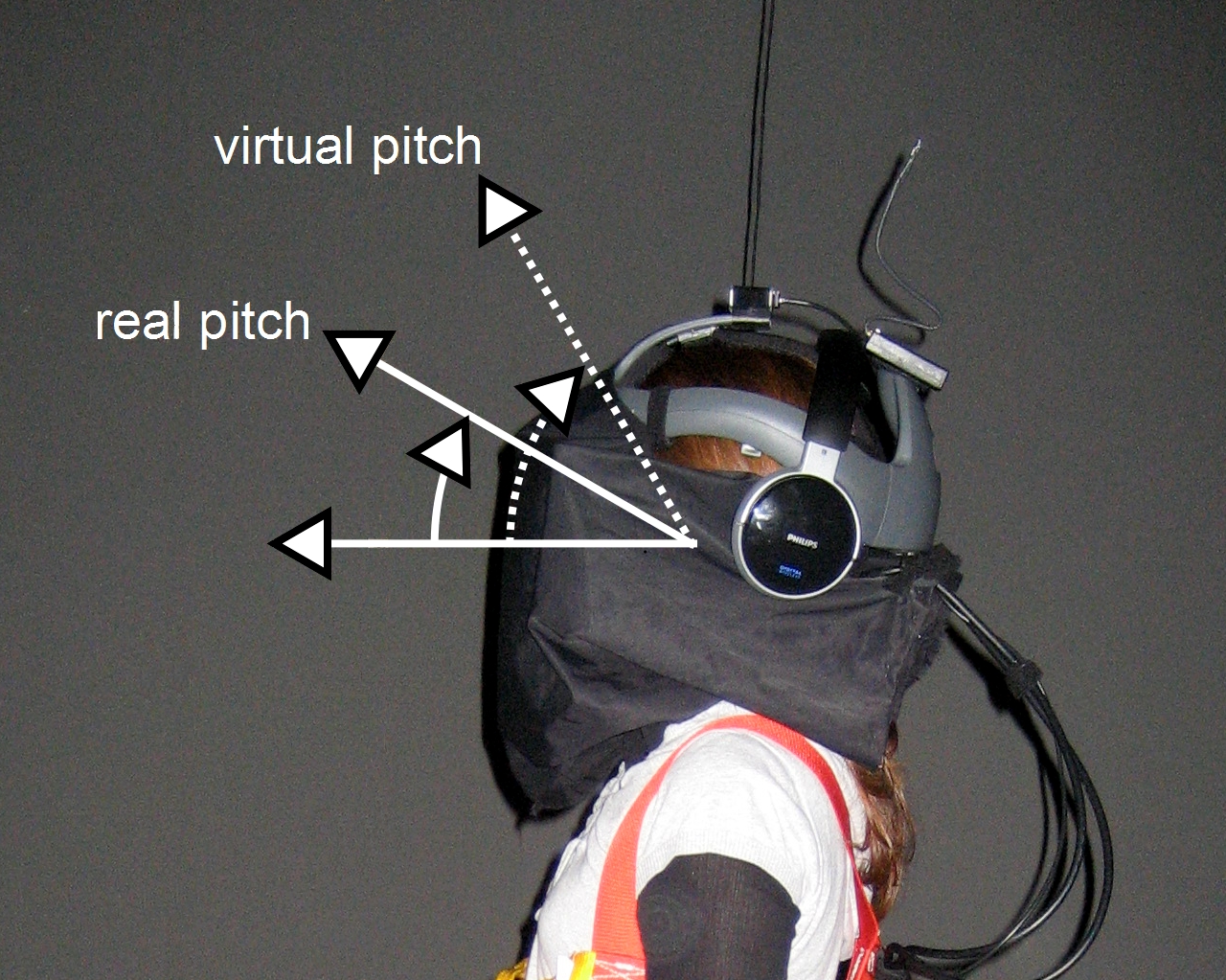

abstract = {Physical characteristics and constraints of today's head-mounted displays (HMDs) often impair interaction in immersive virtual environments (VEs). For instance, due to the limited field of view (FOV) subtended by the display units in front of the user's eyes more effort is required to explore a VE by head rotations than for exploration in the real world. In this paper we propose a combination of two augmentation techniques that have the potential to make exploration of VEs more efficient: (1) augmenting the geometric FOV (GFOV) used for rendering the VE, and (2) amplifying head rotations while the user changes her head orientation. In order to identify how much manipulation can be applied without users noticing, we conducted two psychophysical experiments in which we analyzed subjects' ability to discriminate between virtual and real head pitch and roll rotations while three different geometric FOVs were used. Our results show that the combination of both techniques has great potential to support efficient exploration of VEs. We found that virtual pitch and roll rotations can be amplified by 30% and 44% respectively, when the GFOV matches the subject's estimation of the most natural FOV. This leads to a possible reduction of the user's effort required to explore the VE using a combination of both techniques by approximately 25%.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Dimitar Valkov; Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs A Multi-Touch enabled Human-Transporter Metaphor for Virtual 3D Traveling Proceedings Article In: Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI), pp. 79–82, 2010, (acceptance rate 25%). @inproceedings{VSBH10b,

title = {A Multi-Touch enabled Human-Transporter Metaphor for Virtual 3D Traveling},

author = {Dimitar Valkov and Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/VSBH10b.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI)},

pages = {79--82},

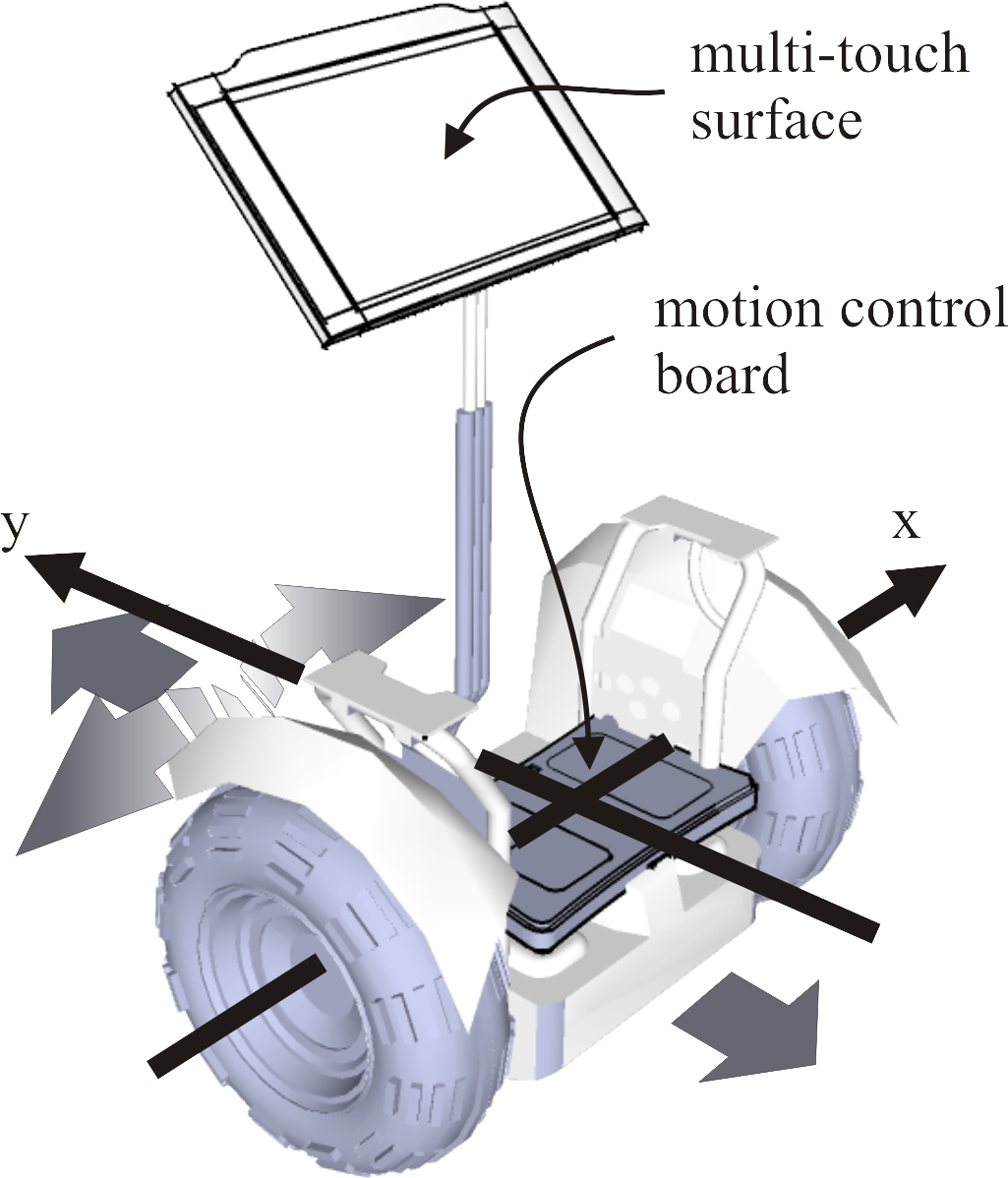

abstract = {In this tech-note we demonstrate how multi-touch hand gestures in combination with foot gestures can be used to perform navigation tasks in interactive 3D environments. Geographic Information Systems (GIS) are well suited as a complex testbed for evaluation of user interfaces based on multi-modal input. Recent developments in the area of interactive surfaces enable the construction of low-cost multi-touch displays and relatively inexpensive sensor technology to detect foot gestures, which makes it possible to explore these input modalities for virtual reality environments. In this tech-note, we describe an intuitive 3D user interface metaphor and corresponding hardware, which combine multi-touch hand and foot gestures for interaction with spatial data.},

note = {(acceptance rate 25%)},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| David Donszik; Bastian Lengert; Gerd Bruder; Klaus H. Hinrichs; Frank Steinicke 3D-Manipulationstechnik f Proceedings Article In: Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR), pp. 83–92, 2010. @inproceedings{DLBHS10,

title = {3D-Manipulationstechnik f},

author = {David Donszik and Bastian Lengert and Gerd Bruder and Klaus H. Hinrichs and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/DLBHS10.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR)},

pages = {83--92},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Estimation of Virtual Interpupillary Distances for Immersive Head-Mounted Displays](https://sreal.ucf.edu/wp-content/uploads/2017/02/BSH10.png) | Gerd Bruder; Frank Steinicke; Klaus H. Hinrichs [POSTER] Estimation of Virtual Interpupillary Distances for Immersive Head-Mounted Displays Proceedings Article In: Proceedings of the ACM Symposium on Applied Perception in Graphics and Visualization (APGV) (Poster Presentation), pp. 168, 2010. @inproceedings{BSH10,

title = {[POSTER] Estimation of Virtual Interpupillary Distances for Immersive Head-Mounted Displays},

author = {Gerd Bruder and Frank Steinicke and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSH10.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the ACM Symposium on Applied Perception in Graphics and Visualization (APGV) (Poster Presentation)},

pages = {168},

abstract = {Head-mounted displays (HMDs) allow users to observe virtual environments (VEs) from an egocentric perspective. In order to present a realistic stereoscopic view, the rendering system has to be adjusted to the characteristics of the HMD, e.g., the display's field of view (FOV), as well as to characteristics that are unique to each user, in particular her interpupillary distance (IPD). Typically, the user's IPD is measured, and then applied to the virtual IPD used for rendering, assuming that the HMD's display units are correctly adjusted in front of the user's eyes. A discrepancy between the user's IPD and the virtual IPD may distort the perception of the VE. In this poster we analyze the user's perception of a VE in an HMD environment, which is displayed stereoscopically with different IPDs. We conducted an experiment to identify virtual IPDs that subjects judge as natural for different FOVs. In our experiment, subjects had to adjust the IPD for a rendered virtual replica of our real laboratory until perception of the virtual replica matched perception of the real laboratory. We found that the virtual IPDs subjects estimate as most natural are often not identical to their IPDs, and that the estimations were affected by the FOV of the HMD and the virtual FOV used for rendering.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Immersive Virtual Studio for Architectural Exploration](https://sreal.ucf.edu/wp-content/uploads/2017/02/BSVH10a.jpg) | Gerd Bruder; Frank Steinicke; Dimitar Valkov; Klaus H. Hinrichs [POSTER] Immersive Virtual Studio for Architectural Exploration Proceedings Article In: Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI) (Poster Presentation), pp. 125–126, 2010. @inproceedings{BSVH10a,

title = {[POSTER] Immersive Virtual Studio for Architectural Exploration},

author = {Gerd Bruder and Frank Steinicke and Dimitar Valkov and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSVH10a-optimized.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI) (Poster Presentation)},

pages = {125--126},

abstract = {Architects use a variety of analog and digital tools and media to plan and design constructions. Immersive virtual reality (VR) technologies have shown great potential for architectural design, especially for exploration and review of design proposals. In this work we propose a virtual studio system, which allows architects and clients to use arbitrary real-world tools such as maps or rulers during immersive exploration of virtual 3D models. The user interface allows architects and clients to review designs and compose 3D architectural scenes, combining benefits of mixed-reality environments with immersive head-mounted display setups.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] A Virtual Reality Handball Goalkeeper Analysis System](https://sreal.ucf.edu/wp-content/uploads/2017/02/BZBSHFS10.jpg) | Benjamin Bolte; Florian Zeidler; Gerd Bruder; Frank Steinicke; Klaus H. Hinrichs; Lennart Fischer; Jörg Schorer [POSTER] A Virtual Reality Handball Goalkeeper Analysis System Proceedings Article In: Proceedings of the Joint Virtual Reality Conference (JVRC) (Poster Presentation), pp. 1–2, 2010. @inproceedings{BZBSHFS10,

title = {[POSTER] A Virtual Reality Handball Goalkeeper Analysis System},

author = {Benjamin Bolte and Florian Zeidler and Gerd Bruder and Frank Steinicke and Klaus H. Hinrichs and Lennart Fischer and Jörg Schorer},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BZBSHFS10.pdf},

year = {2010},

date = {2010-01-01},

booktitle = {Proceedings of the Joint Virtual Reality Conference (JVRC) (Poster Presentation)},

pages = {1--2},

abstract = {Understanding how professional handball goalkeepers acquire skills to combine decision-making and complex motor tasks is a multidisciplinary challenge. In order to improve a goalkeeper's training by allowing insights into their complex perception, learning and action processes, virtual reality (VR) technologies provide a way to standardize experimental sport situations. In this poster we describe a VR-based handball system, which supports the evaluation of perceptual-motor skills of handball goalkeepers during shots. In order to allow reliable analyses it is essential that goalkeepers can move naturally, as they would in a real game situation, which is often inhibited by wires or markers that are usually used in VR systems. To address this challenge, we developed a camera-based goalkeeper analysis system, which allows detecting and measuring motions of goalkeepers in real time.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

2009

|

| Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Markus Lappe; Brian Ries; Victoria Interrante Transitional Environments Enhance Distance Perception in Immersive Virtual Reality Systems Proceedings Article In: Proceedings of the ACM Symposium on Applied Perception in Graphics and Visualization (APGV), pp. 19–26, ACM Press, 2009. @inproceedings{SBHLRI09,

title = {Transitional Environments Enhance Distance Perception in Immersive Virtual Reality Systems},

author = { Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Markus Lappe and Brian Ries and Victoria Interrante},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBHLRI09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the ACM Symposium on Applied Perception in Graphics and Visualization (APGV)},

pages = {19--26},

publisher = {ACM Press},

abstract = {Several experiments have provided evidence that ego-centric distances are perceived as compressed in immersive virtual environments relative to the real world. The principal factors responsible for this phenomenon have remained largely unknown. However, recent experiments suggest that when the virtual environment (VE) is an exact replica of a user's real physical surroundings, the person's distance perception improves. Furthermore, it has been shown that when users start their virtual reality (VR) experience in such a virtual replica and then gradually transition to a different VE, their sense of presence in the actual virtual world increases significantly. In this case the virtual replica serves as a transitional environment between the real and virtual world. In this paper we examine whether a person's distance estimation skills can be transferred from a transitional environment to a different VE. We have conducted blind walking experiments to analyze if starting the VR experience in a transitional environment can improve a person's ability to estimate distances in an immersive VR system. We found that users significantly improve their distance estimation skills when they enter the virtual world via a transitional environment.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Gerd Bruder; Frank Steinicke; Klaus H. Hinrichs; Markus Lappe Reorientation during Body Turns Proceedings Article In: Proceedings of the Joint Virtual Reality Conference (JVRC), pp. 145–152, 2009. @inproceedings{BSHL09,

title = {Reorientation during Body Turns},

author = { Gerd Bruder and Frank Steinicke and Klaus H. Hinrichs and Markus Lappe},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSHL09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the Joint Virtual Reality Conference (JVRC)},

pages = {145--152},

abstract = {Immersive virtual environment (IVE) systems allow users to control their virtual viewpoint by moving their tracked head and by walking through the real world, but usually the virtual space which can be explored by walking is restricted to the size of the tracked space of the laboratory. However, as the user approaches an edge of the tracked walking area, reorientation techniques can be applied to imperceptibly turn the user by manipulating the mapping between real-world body turns and virtual camera rotations. With such reorientation techniques, users can walk through large-scale IVEs while physically remaining in a reasonably small workspace. In psychophysical experiments we have quantified how much users can unknowingly be reoriented during body turns. We tested 18 subjects in two different experiments. First, in a just-noticeable difference test subjects had to perform two successive body turns between which they had to discriminate. In the second experiment subjects performed body turns that were mapped to different virtual camera rotations. Subjects had to estimate whether the visually perceived rotation was slower or faster than the physical rotation. Our results show that the detection thresholds for reorientation as well as the point of subjective equality between real movement and visual stimuli depend on the virtual rotation angle.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Jason Jerald; Harald Frenz; Markus Lappe Real Walking through Virtual Environments by Redirection Techniques Journal Article In: Journal of Virtual Reality and Broadcasting (JVRB), vol. 6, no. 2, pp. 1–16, 2009. @article{SBHJFL09,

title = {Real Walking through Virtual Environments by Redirection Techniques},

author = { Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Jason Jerald and Harald Frenz and Markus Lappe},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBHJFL09.pdf},

year = {2009},

date = {2009-01-01},

journal = {Journal of Virtual Reality and Broadcasting (JVRB)},

volume = {6},

number = {2},

pages = {1--16},

abstract = {We present redirection techniques that support exploration of large-scale virtual environments (VEs) by means of real walking. We quantify to what degree users can unknowingly be redirected in order to guide them through VEs in which virtual paths differ from the physical paths. We further introduce the concept of dynamic passive haptics by which any number of virtual objects can be mapped to real physical proxy props having similar haptic properties (i.e., size, shape, and surface structure), such that the user can sense these virtual objects by touching their real-world counterparts. Dynamic passive haptics provides the user with the illusion of interacting with a desired virtual object by redirecting her to the corresponding proxy prop. We describe the concepts of generic redirected walking and dynamic passive haptics and present experiments in which we have evaluated these concepts. Furthermore, we discuss implications that have been derived from a user study, and we present approaches that derive physical paths which may vary from the virtual counterparts.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Anthony Steed Presence-Enhancing Real Walking User Interface for First-Person Video Games Proceedings Article In: Proceedings of the ACM International Conference and Exhibition on Computer Graphics and Interactive Techniques (SIGGRAPH), Game Papers, ACM Press, 2009, (acceptance rate 25%). @inproceedings{SBHS09,

title = {Presence-Enhancing Real Walking User Interface for First-Person Video Games},

author = { Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Anthony Steed},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBHS09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the ACM International Conference and Exhibition on Computer Graphics and Interactive Techniques (SIGGRAPH), Game Papers},

publisher = {ACM Press},

abstract = {For most first-person video games it is important that players have a high level of feeling presence in the displayed game environment. Virtual reality (VR) technologies have enormous potential to enhance gameplay since players can experience the game immersively from the perspective of the player's virtual character. However, the VR technology itself, such as tracking devices and cabling, has until recently restricted the ability of users to really walk over long distances. In this paper we introduce a VR-based user interface for presence-enhancing gameplay with which players can explore the game environment in the most natural way, i.e., by real walking. While the player walks through the virtual game environment, we guide him/her on a physical path which is different from the virtual path and fits into the VR laboratory space. In order to further increase the VR experience, we introduce the concept of transitional environments. Such a transitional environment is a virtual replica of the laboratory environment, where the VR experience starts and which enables a gradual transition to the game environment. We have quantified how much humans can unknowingly be redirected and whether or not a gradual transition to a first-person game via a transitional environment increases the user's sense of presence.},

note = {(acceptance rate 25%)},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Scott Kuhl; Pete Willemsen; Markus Lappe; Klaus H. Hinrichs Judgment of Natural Perspective Projections in Head-Mounted Display Environments Proceedings Article In: Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST), pp. 35–42, ACM Press, 2009. @inproceedings{SBKWLH09,

title = {Judgment of Natural Perspective Projections in Head-Mounted Display Environments},

author = { Frank Steinicke and Gerd Bruder and Scott Kuhl and Pete Willemsen and Markus Lappe and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBKWLH09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST)},

pages = {35--42},

publisher = {ACM Press},

abstract = {The display units integrated in today's head-mounted displays (HMDs) provide only a limited field of view (FOV) to the virtual world. In order to present an undistorted view of the virtual environment (VE), the perspective projection used to render the VE has to be adjusted to the limitations caused by the HMD characteristics. In particular, the geometric field of view (GFOV), which defines the virtual aperture angle used for rendering of the 3D scene, is set up according to the display's field of view. A discrepancy between these two fields of view distorts the geometry of the VE in such a way that objects and distances appear to be "warped". Although discrepancies between the geometric and the HMD's field of view affect a person's perception of space, the resulting mini- and magnification of the displayed scene can be useful in some applications and may improve specific aspects of immersive virtual environments, for example, distance judgment, presence, and visual search task performance. In this paper we analyze if a user is consciously aware of perspective distortions of the VE displayed in the HMD. We introduce a psychophysical calibration method to determine the HMD's actual field of view, which may vary from the nominal values specified by the manufacturer. Furthermore, we conducted an experiment to identify perspective projections for HMDs, which are perceived as natural by subjects, even if these perspectives deviate from the perspectives that are inherently defined by the display's field of view. We found that subjects evaluate a field of view as natural when it is larger than the actual field of view of the HMD; in some cases up to 50%.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Gerd Bruder; Frank Steinicke; Kai Rothaus; Klaus H. Hinrichs Enhancing Presence in Head-mounted Display Environments by Visual Body Feedback using Head-mounted Cameras Proceedings Article In: Proceedings of the International Conference on CyberWorlds, pp. 43–50, IEEE Press, 2009. @inproceedings{BSRH09,

title = {Enhancing Presence in Head-mounted Display Environments by Visual Body Feedback using Head-mounted Cameras},

author = { Gerd Bruder and Frank Steinicke and Kai Rothaus and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSRH09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the International Conference on CyberWorlds},

pages = {43--50},

publisher = {IEEE Press},

abstract = {A fully-articulated visual representation of a user in an immersive virtual environment (IVE) can enhance the user's subjective sense of feeling present in the virtual world. Usually this means that a user has to wear a full-body motion capture suit to track real-world body motions and map them to a virtual body model. In this paper we present an augmented virtuality approach that allows incorporating a realistic view of oneself in virtual environments using cameras attached to head-mounted displays. The described system can easily be integrated into typical virtual reality setups. Egocentric camera images captured by a video-see-through system are segmented in real-time into foreground, showing parts of the user's body, e.g., her hands or feet, and background. The segmented foreground is then displayed as inset in the user's current view of the virtual world. Thus the user is able to see her physical body in an arbitrary virtual world, including individual characteristics such as skin pigmentation, hairiness, etc.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

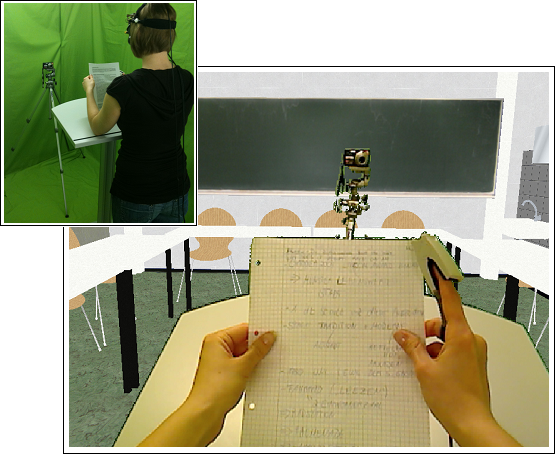

|
