2018

|

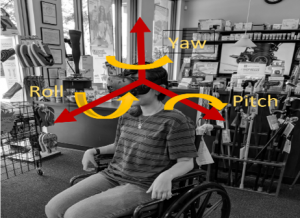

| Nahal Norouzi; Luke Bölling; Gerd Bruder; Greg Welch Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement Proceedings Article In: Proceedings of the 12th international conference on disability, virtual reality and associated technologies (ICDVRAT 2018), pp. 8, 2018, ISBN: 978-0-7049-1548-0. @inproceedings{Norouzi2018b,

title = {Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement},

author = {Nahal Norouzi and Luke Bölling and Gerd Bruder and Greg Welch },

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/ICDVRAT-ITAG-2018-Conference-Proceedings-122-129.pdf},

isbn = {978-0-7049-1548-0},

year = {2018},

date = {2018-09-04},

booktitle = {Proceedings of the 12th international conference on disability, virtual reality and associated technologies (ICDVRAT 2018)},

pages = {8},

abstract = {A large body of research in the field of virtual reality (VR) is focused on making user interfaces more natural and intuitive by leveraging natural body movements to explore a virtual environment. For example, head-tracked user interfaces allow users to naturally look around a virtual space by moving their head. However, such approaches may not be appropriate for users with temporary or permanent limitations of their head movement. In this paper, we present techniques that allow these users to get full-movement benefits from a reduced range of physical movements. Specifically, we describe two techniques that augment virtual rotations relative to physical movement thresholds. We describe how each of the two techniques can be implemented with either a head tracker or an eye tracker, e.g., in cases when no physical head rotations are possible. We discuss their differences and limitations and we provide guidelines for the practical use of such augmented user interfaces.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

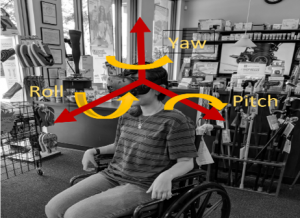

|

| Nahal Norouzi; Gerd Bruder; Greg Welch Assessing Vignetting as a Means to Reduce VR Sickness During Amplified Head Rotations Proceedings Article In: ACM Symposium on Applied Perception 2018, pp. 8, ACM 2018, ISBN: 978-1-4503-5894-1/18/08. @inproceedings{Norouzi2018,

title = {Assessing Vignetting as a Means to Reduce VR Sickness During Amplified Head Rotations},

author = {Nahal Norouzi and Gerd Bruder and Greg Welch },

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/19-32-norouzi-1.pdf},

doi = {10.1145/3225153.3225162},

isbn = {978-1-4503-5894-1/18/08},

year = {2018},

date = {2018-08-10},

booktitle = {ACM Symposium on Applied Perception 2018},

pages = {8},

organization = {ACM},

abstract = {Redirected and amplified head movements have the potential to provide more natural interaction with virtual environments (VEs) than using controller-based input, which causes large discrepancies between visual and vestibular self-motion cues and leads to increased VR sickness.

However, such amplified head movements may also exacerbate VR sickness symptoms over no amplification.

Several general methods have been introduced to reduce VR sickness for controller-based input inside a VE, including a popular vignetting method that gradually reduces the field of view.

In this paper, we investigate the use of vignetting to reduce VR sickness when using amplified head rotations instead of controller-based input.

We also investigate whether the induced VR sickness is a result of the user's head acceleration or velocity by introducing two different modes of vignetting, one triggered by acceleration and the other by velocity.

Our dependent measures were pre and post VR sickness questionnaires as well as estimated discomfort levels that were assessed each minute of the experiment.

Our results show interesting effects between a baseline condition without vignetting and the two vignetting methods, generally indicating that the vignetting methods did not succeed in reducing VR sickness for most of the participants and, instead, led to a significant increase.

We discuss the results and potential explanations of our findings.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Eike Langbehn; Frank Steinicke; Markus Lappe; Gregory F. Welch; Gerd Bruder In the Blink of an Eye – Leveraging Blink-Induced Suppression for Imperceptible Position and Orientation Redirection in Virtual Reality Journal Article In: ACM Transactions on Graphics (TOG), Special Issue on ACM SIGGRAPH 2018, vol. 37, no. 4, pp. 1-11, 2018. @article{Langbehn2018,

title = {In the Blink of an Eye – Leveraging Blink-Induced Suppression for Imperceptible Position and Orientation Redirection in Virtual Reality},

author = {Eike Langbehn and Frank Steinicke and Markus Lappe and Gregory F. Welch and Gerd Bruder},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/Langbehn2018.pdf},

doi = {10.1145/3197517.3201335},

year = {2018},

date = {2018-08-01},

journal = {ACM Transactions on Graphics (TOG), Special Issue on ACM SIGGRAPH 2018},

volume = {37},

number = {4},

pages = {1-11},

chapter = {66},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Glenn Taylor; Anthony Deschamps; Alyssa Tanaka; Denise Nicholson; Gerd Bruder; Gregory Welch; Francisco Guido-Sanz Augmented Reality for Tactical Combat Casualty Care Training Proceedings Article In: Augmented Cognition: Users and Contexts, pp. 227–239, Springer International Publishing, 2018, ISBN: 978-3-319-91467-1. @inproceedings{Taylor2018aa,

title = {Augmented Reality for Tactical Combat Casualty Care Training},

author = {Glenn Taylor and Anthony Deschamps and Alyssa Tanaka and Denise Nicholson and Gerd Bruder and Gregory Welch and Francisco Guido-Sanz},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Taylor2018aa.pdf},

isbn = {978-3-319-91467-1},

year = {2018},

date = {2018-05-03},

booktitle = {Augmented Cognition: Users and Contexts},

pages = {227--239},

publisher = {Springer International Publishing},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Jason Hochreiter; Salam Daher; Gerd Bruder; Greg Welch Cognitive and touch performance effects of mismatched 3D physical and visual perceptions Proceedings Article In: IEEE Virtual Reality 2018 (VR 2018), 2018. @inproceedings{Hochreiter2018,

title = {Cognitive and touch performance effects of mismatched 3D physical and visual perceptions},

author = {Jason Hochreiter and Salam Daher and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/hochreiter2018.pdf},

year = {2018},

date = {2018-03-22},

booktitle = {IEEE Virtual Reality 2018 (VR 2018)},

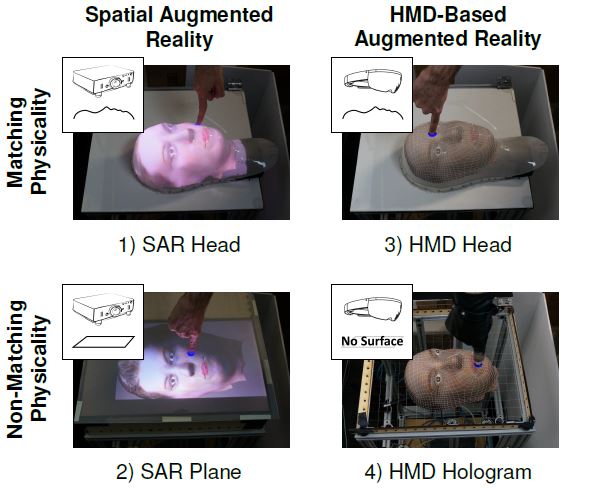

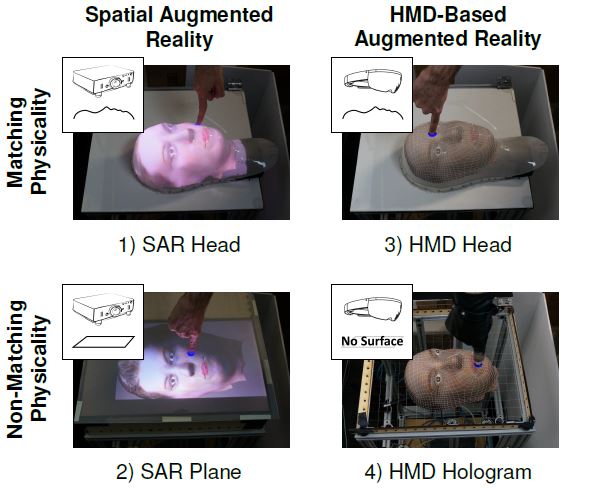

abstract = {In a controlled human-subject study we investigated the effects of mismatched physical and visual perception on cognitive load and performance in an Augmented Reality (AR) touching task by varying the physical fidelity (matching vs. non-matching physical shape) and visual mechanism (projector-based vs. HMD-based AR) of the representation. Participants touched visual targets on four corresponding physical-visual representations of a human head. We evaluated their performance in terms of touch accuracy, response time, and a cognitive load task requiring target size estimations during a concurrent (secondary) counting task. Results indicated higher performance, lower cognitive load, and increased usability when participants touched a matching physical head-shaped surface and with visuals provided by a projector from underneath.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Oscar Ariza; Gerd Bruder; Nicholas Katzakis; Frank Steinicke Analysis of Proximity-Based Multimodal Feedback for 3D Selection in Immersive Virtual Environments Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), pp. 327-334, 2018. @inproceedings{Ariza2018a,

title = {Analysis of Proximity-Based Multimodal Feedback for 3D Selection in Immersive Virtual Environments},

author = {Oscar Ariza and Gerd Bruder and Nicholas Katzakis and Frank Steinicke},

doi = {10.1109/VR.2018.8446317},

year = {2018},

date = {2018-03-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

pages = {327-334},

abstract = {Interaction tasks in virtual reality (VR) such as three-dimensional (3D) selection or manipulation of objects often suffer from reduced performance because the feedback provided by VR systems is missing or differs from that of corresponding real-world interactions. Vibrotactile and auditory feedback have been suggested as additional perceptual cues complementing the visual channel to improve interaction in VR. However, it has rarely been shown that multimodal feedback improves performance or reduces errors during 3D object selection. Little research has been conducted in the area of proximity-based multimodal feedback, in which stimulus intensities depend on spatiotemporal relations between the input device and the virtual target object. In this paper, we analyzed the effects of unimodal and bimodal feedback provided through the visual, auditory and tactile modalities, while users perform 3D object selections in VEs, by comparing both binary and continuous proximity-based feedback. We conducted a Fitts' Law experiment and evaluated the different feedback approaches. The results show that the feedback types affect ballistic and correction phases of the selection movement, and significantly influence the user performance.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

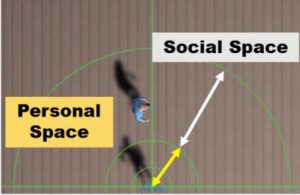

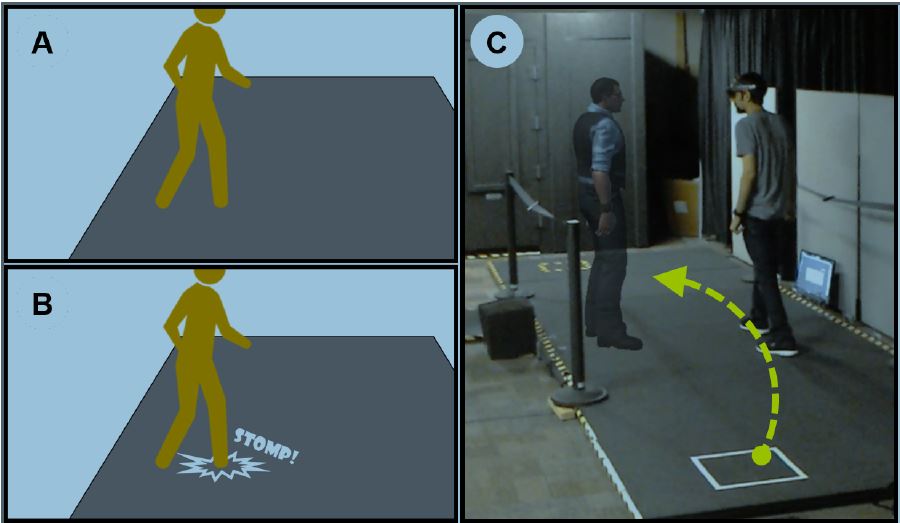

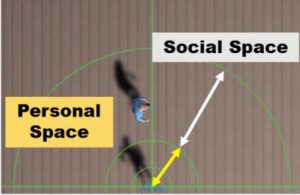

| Myungho Lee; Gerd Bruder; Tobias Hollerer; Greg Welch Effects of Unaugmented Periphery and Vibrotactile Feedback on Proxemics with Virtual Humans in AR Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 24, no. 4, pp. 1525-1534, 2018. @article{Lee2018,

title = {Effects of Unaugmented Periphery and Vibrotactile Feedback on Proxemics with Virtual Humans in AR},

author = {Myungho Lee and Gerd Bruder and Tobias Hollerer and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/04/Lee2018.pdf},

doi = {10.1109/TVCG.2018.2794074},

year = {2018},

date = {2018-02-23},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {24},

number = {4},

pages = {1525-1534},

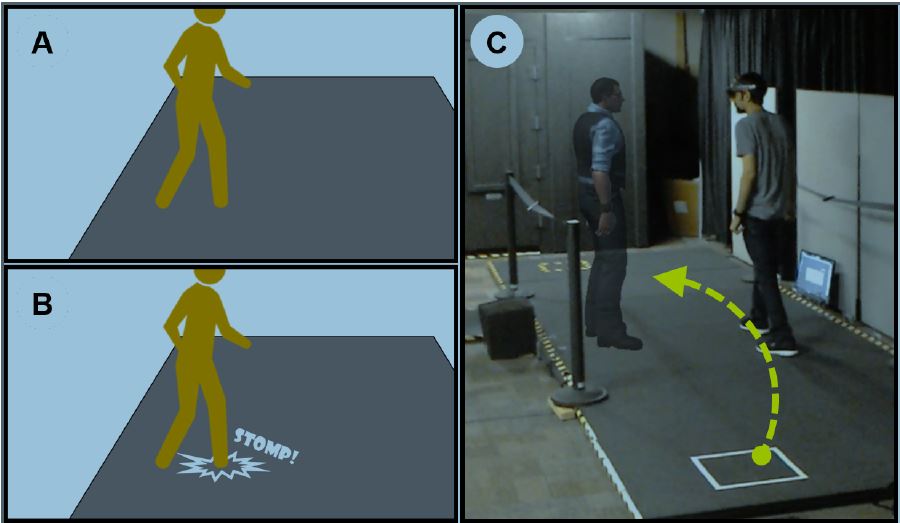

abstract = {In this paper, we investigate factors and issues related to human locomotion behavior and proxemics in the presence of a real or virtual human in augmented reality (AR). First, we discuss a unique issue with current-state optical see-through head-mounted displays. Second, we discuss the limited multimodal feedback provided by virtual humans in AR, present a potential improvement based on vibrotactile feedback induced via the floor to compensate for the limited augmented visual field, and report results showing that benefits of such vibrations are less visible in objective locomotion behavior than in subjective estimates of co-presence. Third, we investigate and document significant differences in the effects that real and virtual humans have on locomotion behavior in AR. We discuss potential explanations for these effects and analyze effects of different types of behaviors that such real or virtual humans may exhibit in the presence of an observer.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

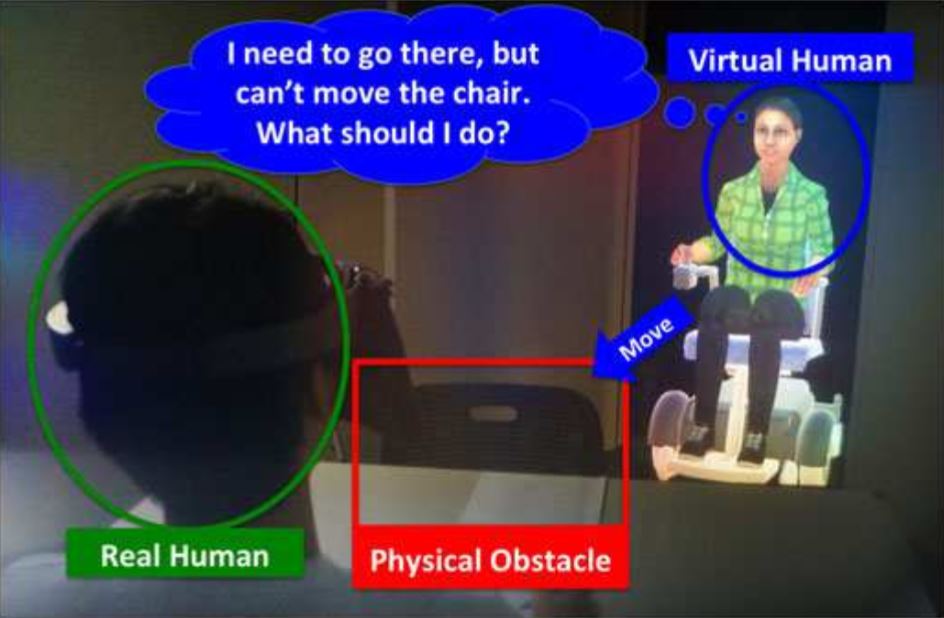

|

2017

|

| Susanne Schmidt; Gerd Bruder; Frank Steinicke Moving Towards Consistent Depth Perception in Stereoscopic Projection-based Augmented Reality Proceedings Article In: Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE), pp. 161-168, 2017. @inproceedings{Schmidt2017b,

title = {Moving Towards Consistent Depth Perception in Stereoscopic Projection-based Augmented Reality},

author = {Susanne Schmidt and Gerd Bruder and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/01/Schmidt2017b.pdf},

year = {2017},

date = {2017-11-01},

booktitle = {Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE)},

pages = {161-168},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] A Pilot Study of Altering Depth Perception with Projection-Based Illusions](https://sreal.ucf.edu/wp-content/uploads/2019/01/Schmidt2017a-295x300.png) | Susanne Schmidt; Gerd Bruder; Frank Steinicke [POSTER] A Pilot Study of Altering Depth Perception with Projection-Based Illusions Proceedings Article In: Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE), pp. 33-34, 2017. @inproceedings{Schmidt2017a,

title = {[POSTER] A Pilot Study of Altering Depth Perception with Projection-Based Illusions},

author = {Susanne Schmidt and Gerd Bruder and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/01/Schmidt2017a.pdf},

year = {2017},

date = {2017-11-01},

booktitle = {Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE)},

pages = {33-34},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

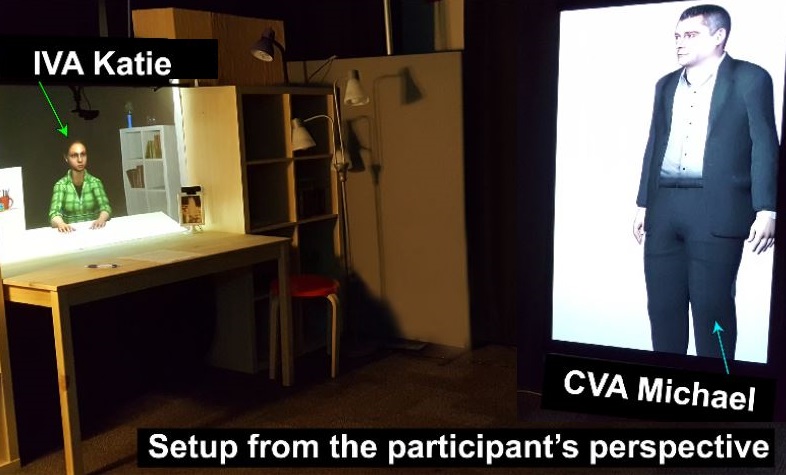

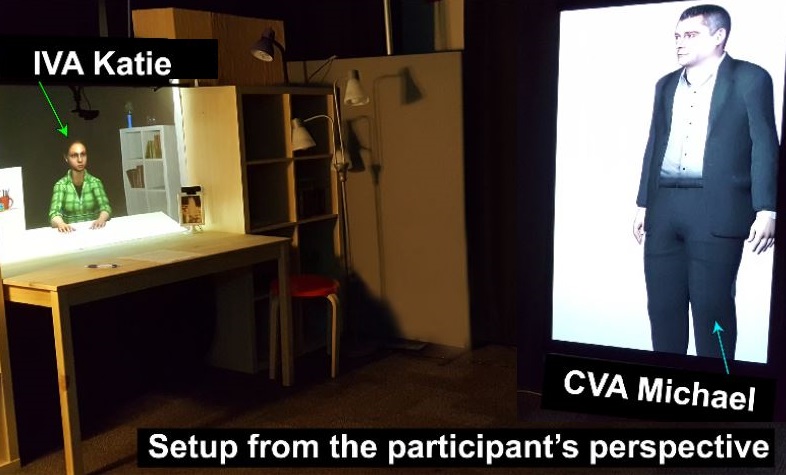

| Salam Daher; Kangsoo Kim; Myungho Lee; Ryan Schubert; Gerd Bruder; Jeremy Bailenson; Greg Welch Effects of Social Priming on Social Presence with Intelligent Virtual Agents Book Chapter In: Beskow, Jonas; Peters, Christopher; Castellano, Ginevra; O'Sullivan, Carol; Leite, Iolanda; Kopp, Stefan (Ed.): Intelligent Virtual Agents: 17th International Conference, IVA 2017, Stockholm, Sweden, August 27-30, 2017, Proceedings, vol. 10498, pp. 87-100, Springer International Publishing, 2017. @inbook{Daher2017ab,

title = {Effects of Social Priming on Social Presence with Intelligent Virtual Agents},

author = {Salam Daher and Kangsoo Kim and Myungho Lee and Ryan Schubert and Gerd Bruder and Jeremy Bailenson and Greg Welch},

editor = {Jonas Beskow and Christopher Peters and Ginevra Castellano and Carol O'Sullivan and Iolanda Leite and Stefan Kopp},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/12/Daher2017ab.pdf},

doi = {10.1007/978-3-319-67401-8_10},

year = {2017},

date = {2017-08-26},

booktitle = {Intelligent Virtual Agents: 17th International Conference, IVA 2017, Stockholm, Sweden, August 27-30, 2017, Proceedings},

volume = {10498},

pages = {87-100},

publisher = {Springer International Publishing},

keywords = {},

pubstate = {published},

tppubtype = {inbook}

}

|

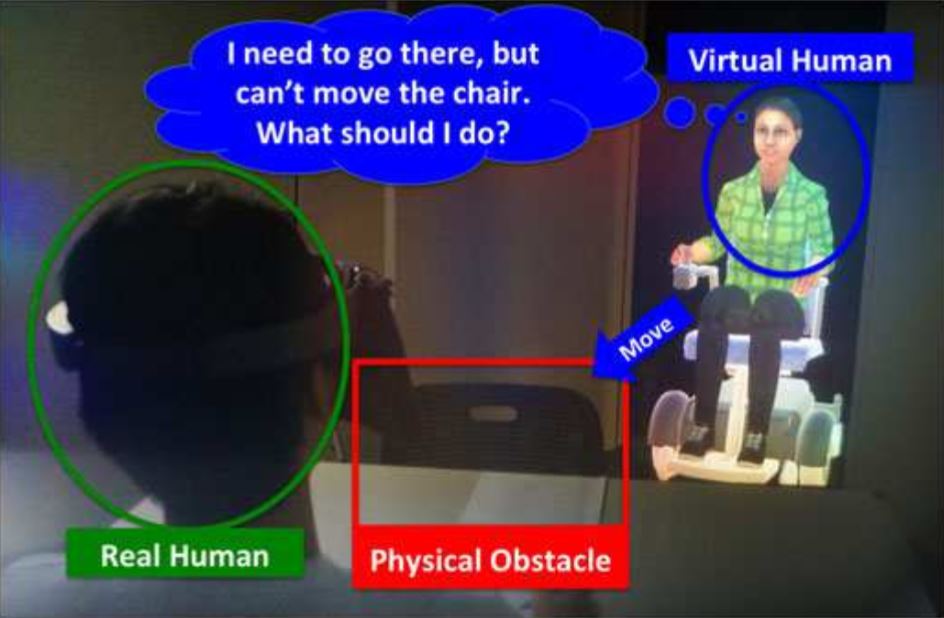

| Kangsoo Kim; Divine Maloney; Gerd Bruder; Jeremy Bailenson; Greg Welch The effects of virtual human's spatial and behavioral coherence with physical objects on social presence in AR Journal Article In: Computer Animation and Virtual Worlds, vol. 28, no. 3-4, pp. e1771-n/a, 2017. @article{Kim2017b,

title = {The effects of virtual human's spatial and behavioral coherence with physical objects on social presence in AR},

author = {Kangsoo Kim and Divine Maloney and Gerd Bruder and Jeremy Bailenson and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/12/Kim2017b.pdf},

doi = {10.1002/cav.1771},

year = {2017},

date = {2017-05-21},

journal = {Computer Animation and Virtual Worlds},

volume = {28},

number = {3-4},

pages = {e1771-n/a},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Myungho Lee; Gerd Bruder; Greg Welch Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment Journal Article In: Journal of Vision: Abstract Issue 2017, vol. 17, no. 10, pp. 357, 2017. @article{Lee2017,

title = {Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment},

author = {Myungho Lee and Gerd Bruder and Greg Welch},

doi = {10.1167/17.10.357},

year = {2017},

date = {2017-05-01},

journal = {Journal of Vision: Abstract Issue 2017},

volume = {17},

number = {10},

pages = {357},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Ryan Schubert; Gerd Bruder; Greg Welch Mitigating Perceptual Error in Synthetic Animatronics using Visual Feature Flow Journal Article In: Journal of Vision: Abstract Issue 2017, vol. 17, no. 10, pp. 331, 2017. @article{Schubert2017,

title = {Mitigating Perceptual Error in Synthetic Animatronics using Visual Feature Flow},

author = {Ryan Schubert and Gerd Bruder and Greg Welch},

doi = {10.1167/17.10.331},

year = {2017},

date = {2017-05-01},

journal = {Journal of Vision: Abstract Issue 2017},

volume = {17},

number = {10},

pages = {331},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

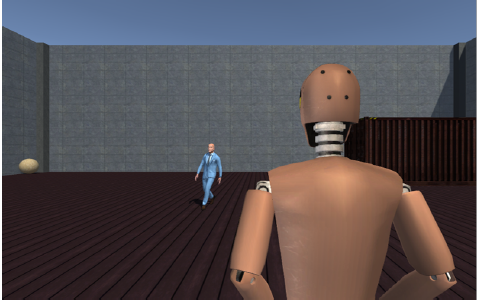

| Myungho Lee; Gerd Bruder; Greg Welch Exploring the Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment Proceedings Article In: Proceedings of IEEE Virtual Reality 2017, 2017. @inproceedings{Lee2017aa,

title = {Exploring the Effect of Vibrotactile Feedback through the Floor on Social Presence in an Immersive Virtual Environment},

author = {Myungho Lee and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/Lee2017aa.pdf},

doi = {10.1109/VR.2017.7892237},

year = {2017},

date = {2017-03-19},

booktitle = {Proceedings of IEEE Virtual Reality 2017},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Can Social Presence be Contagious? Effects of Social Presence Priming on Interaction with Virtual Humans](https://sreal.ucf.edu/wp-content/uploads/2017/02/Daher2017aa.png) | Salam Daher; Kangsoo Kim; Myungho Lee; Gerd Bruder; Ryan Schubert; Jeremy Bailenson; Greg Welch [POSTER] Can Social Presence be Contagious? Effects of Social Presence Priming on Interaction with Virtual Humans Proceedings Article In: 3D User Interfaces (3DUI), 2017 IEEE Symposium on , 2017. @inproceedings{Daher2017aa,

title = {[POSTER] Can Social Presence be Contagious? Effects of Social Presence Priming on Interaction with Virtual Humans},

author = {Salam Daher and Kangsoo Kim and Myungho Lee and Gerd Bruder and Ryan Schubert and Jeremy Bailenson and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/Daher2017aa_red.pdf},

doi = {10.1109/3DUI.2017.7893341},

year = {2017},

date = {2017-03-18},

booktitle = {3D User Interfaces (3DUI), 2017 IEEE Symposium on },

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

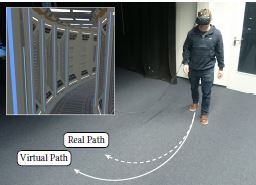

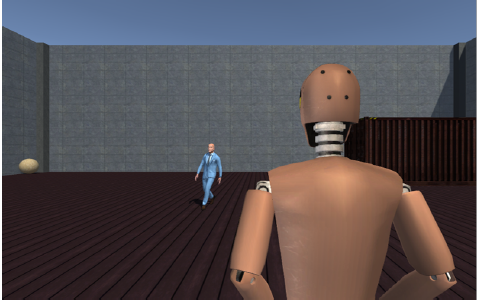

| Eike Langbehn; Paul Lubos; Gerd Bruder; Frank Steinicke Bending the Curve: Sensitivity to Bending of Curved Paths and Application in Room-Scale VR Journal Article In: IEEE Transactions on Visualization and Computer Graphics (TVCG), Special Issue on IEEE Virtual Reality (VR), vol. 23, no. 4, pp. 1389-1398, 2017. @article{Langbehn2017b,

title = {Bending the Curve: Sensitivity to Bending of Curved Paths and Application in Room-Scale VR},

author = {Eike Langbehn and Paul Lubos and Gerd Bruder and Frank Steinicke},

doi = {10.1109/TVCG.2017.2657220},

year = {2017},

date = {2017-03-01},

journal = {IEEE Transactions on Visualization and Computer Graphics (TVCG), Special Issue on IEEE Virtual Reality (VR)},

volume = {23},

number = {4},

pages = {1389-1398},

abstract = {Redirected walking (RDW) promises to allow near-natural walking in an infinitely large virtual environment (VE) by subtle manipulations of the virtual camera. Previous experiments analyzed the human sensitivity to RDW manipulations by focusing on the worst-case scenario, in which users walk perfectly straight ahead in the VE, whereas they are redirected on a circular path in the real world. The results showed that a physical radius of at least 22 meters is required for undetectable RDW. However, users do not always walk exactly straight in a VE. So far, it has not been investigated how much a physical path can be bent in situations in which users walk a virtual curved path instead of a straight one. Such curved walking paths can often be observed, for example, when users walk on virtual trails, through bent corridors, or when circling around obstacles. In such situations the question is not whether the physical path can be bent, but how much the bending of the physical path may vary from the bending of the virtual path. In this article, we analyze this question and present redirection by means of bending gains that describe the discrepancy between the bending of curved paths in the real and virtual environment. Furthermore, we report the psychophysical experiments in which we analyzed the human sensitivity to these gains. The results reveal encouragingly wider detection thresholds than for straightforward walking. Based on our findings, we discuss the potential of curved walking and present a first approach to leverage bent paths in a way that can provide undetectable RDW manipulations even in room-scale VR.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

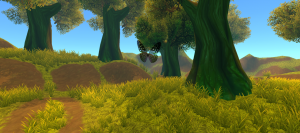

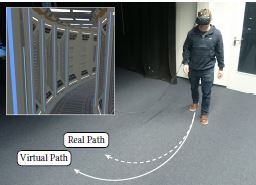

|

| Oscar Ariza; Markus Lange; Frank Steinicke; Gerd Bruder Vibrotactile Assistance for User Guidance Towards Selection Targets in VR and the Cognitive Resources Involved Proceedings Article In: Proceedings of IEEE Symposium on 3D User Interfaces (3DUI), pp. 95-98, 2017. @inproceedings{Ariza2017a,

title = {Vibrotactile Assistance for User Guidance Towards Selection Targets in VR and the Cognitive Resources Involved},

author = {Oscar Ariza and Markus Lange and Frank Steinicke and Gerd Bruder},

year = {2017},

date = {2017-03-01},

booktitle = {Proceedings of IEEE Symposium on 3D User Interfaces (3DUI)},

pages = {95-98},

abstract = {Current head-mounted displays (HMDs) provide a large binocular field of view (FOV) for natural interaction in virtual environments (VEs). However, the selection of objects located in the periphery and outside the FOV requires visual search by head rotations, which can reduce the performance of interaction in virtual reality (VR). This technote explores the use of a pair of self-made wireless and wearable devices, which, once attached to both hemispheres of the user's head, provide assistive vibrotactile cues for guidance in order to reduce the time used to turn and locate a target object. We present an experiment based on a dual-tasking method to analyze cognitive demands and performance metrics during a set of selection tasks followed by a working memory task.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

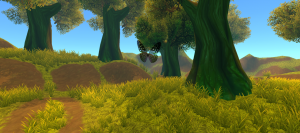

![[DEMO] Application of Redirected Walking in Room-Scale VR](https://sreal.ucf.edu/wp-content/uploads/2019/01/Langbehn2017a-300x186.png) | Eike Langbehn; Paul Lubos; Gerd Bruder; Frank Steinicke [DEMO] Application of Redirected Walking in Room-Scale VR Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), pp. 449-450, 2017. @inproceedings{Langbehn2017a,

title = {[DEMO] Application of Redirected Walking in Room-Scale VR},

author = {Eike Langbehn and Paul Lubos and Gerd Bruder and Frank Steinicke},

year = {2017},

date = {2017-03-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

journal = {Proceedings of IEEE Virtual Reality (VR)},

pages = {449-450},

abstract = {Redirected walking (RDW) promises to allow near-natural walking in an infinitely large virtual environment (VE) by subtle manipulations of the virtual camera. Previous experiments showed that a physical radius of at least 22 meters is required for undetectable RDW. However, we found that it is possible to decrease this radius and to apply RDW to room-scale VR, i.e., up to approximately 5 m x 5 m. This is done by using curved paths in the VE instead of straight paths, and by coupling them together in a way that enables continuous walking. Furthermore, the corresponding paths in the real world are laid out in a way that fits perfectly into room-scale VR. In this research demo, users can experience RDW in a room-scale head-mounted display VR setup and explore a VE of approximately 25 m x 25 m.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Omar Janeh; Eike Langbehn; Frank Steinicke; Gerd Bruder; Alessandro Gulberti; Monika Poetter-Nerger Walking in Virtual Reality: Effects of Manipulated Visual Self-Motion on Walking Biomechanics Journal Article In: ACM Transactions on Applied Perception (TAP), vol. 14, no. 2, pp. 12:1–12:15, 2017. @article{JBLGPS17,

title = {Walking in Virtual Reality: Effects of Manipulated Visual Self-Motion on Walking Biomechanics},

author = {Omar Janeh and Eike Langbehn and Frank Steinicke and Gerd Bruder and Alessandro Gulberti and Monika Poetter-Nerger },

url = {https://sreal.ucf.edu/wp-content/uploads/2017/05/JBLGPS17-red.pdf},

doi = {10.1145/3022731},

year = {2017},

date = {2017-01-01},

journal = {ACM Transactions on Applied Perception (TAP)},

volume = {14},

number = {2},

pages = {12:1--12:15},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

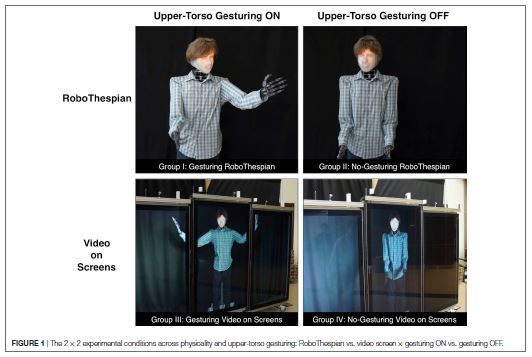

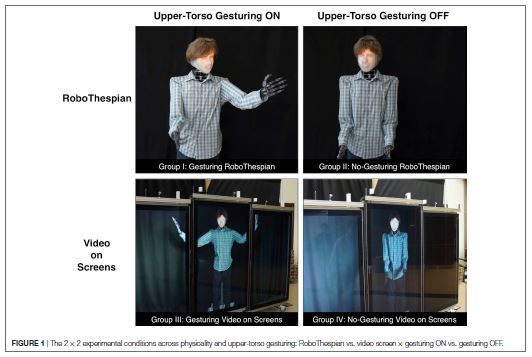

| Kangsoo Kim; Arjun Nagendran; Jeremy N. Bailenson; Andrew Raij; Gerd Bruder; Myungho Lee; Ryan Schubert; Xin Yan; Gregory F. Welch A Large-Scale Study of Surrogate Physicality and Gesturing on Human–Surrogate Interactions in a Public Space Journal Article In: Frontiers in Robotics and AI, vol. 4, pp. 1-20, 2017, ISSN: 2296-9144. @article{Kim2017,

title = {A Large-Scale Study of Surrogate Physicality and Gesturing on Human–Surrogate Interactions in a Public Space},

author = {Kangsoo Kim and Arjun Nagendran and Jeremy N. Bailenson and Andrew Raij and Gerd Bruder and Myungho Lee and Ryan Schubert and Xin Yan and Gregory F. Welch},

url = {http://journal.frontiersin.org/article/10.3389/frobt.2017.00032

https://sreal.ucf.edu/wp-content/uploads/2017/07/Kim2017.pdf},

doi = {10.3389/frobt.2017.00032},

issn = {2296-9144},

year = {2017},

date = {2017-01-01},

journal = {Frontiers in Robotics and AI},

volume = {4},

pages = {1-20},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|
