2019

|

| Lisa Dieker; Carrie Straub; Michael Hynes; Charles Hughes; Caitlyn Bukathy; Taylor Bousfield; Samantha Mrstik Using Virtual Rehearsal in a Simulator to Impact the Performance of Science Teachers Journal Article In: International Journal of Gaming and Computer-Mediated Simulations, vol. 11, no. 4, pp. 1-20, 2019. @article{Dieker2019uvr,

title = {Using Virtual Rehearsal in a Simulator to Impact the Performance of Science Teachers},

author = {Lisa Dieker and Carrie Straub and Michael Hynes and Charles Hughes and Caitlyn Bukathy and Taylor Bousfield and Samantha Mrstik},

doi = {10.4018/IJGCMS.2019100101},

year = {2019},

date = {2019-10-01},

journal = {International Journal of Gaming and Computer-Mediated Simulations},

volume = {11},

number = {4},

pages = {1-20},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Susanne Schmidt; Gerd Bruder; Frank Steinicke Effects of Virtual Agent and Object Representation on Experiencing Exhibited Artifacts Journal Article In: Elsevier Computers and Graphics, vol. 83, pp. 1-10, 2019. @article{Schmidt2019,

title = {Effects of Virtual Agent and Object Representation on Experiencing Exhibited Artifacts},

author = {Susanne Schmidt and Gerd Bruder and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/07/Schmidt2019.pdf},

doi = {10.1016/j.cag.2019.06.002},

year = {2019},

date = {2019-10-01},

journal = {Elsevier Computers and Graphics},

volume = {83},

pages = {1-10},

abstract = {With the emergence of speech-controlled virtual agents (VAs) in consumer devices such as Amazon’s Echo or Apple’s HomePod, we have seen a large public interest in related technologies. While most of the current interactive conversational VAs appear in the form of voice-only assistants, other representations showing, for example, a contextually related or generic humanoid body are possible. In our previous work, we analyzed the effectiveness of different forms of VAs in the context of a virtual reality (VR) exhibition space. We found positive evidence that agent embodiment induces a higher sense of spatial and social presence. The results also suggest that both embodied and thematically related audio-visual representations of VAs positively affect the overall user experience. We extend this work by further analyzing the effects of the physicality of the agent’s environment (i.e., virtual vs. real). The results of the follow-up study indicate some benefits of virtual environments, e.g., regarding user engagement and learning of visual facts. We also evaluate some interaction effects between the representations of the virtual agent and its surrounding and discuss implications on the design of exhibition spaces.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

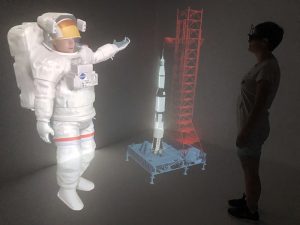

With the emergence of speech-controlled virtual agents (VAs) in consumer devices such as Amazon’s Echo or Apple’s HomePod, we have seen a large public interest in related technologies. While most of the current interactive conversational VAs appear in the form of voice-only assistants, other representations showing, for example, a contextually related or generic humanoid body are possible. In our previous work, we analyzed the effectiveness of different forms of VAs in the context of a virtual reality (VR) exhibition space. We found positive evidence that agent embodiment induces a higher sense of spatial and social presence. The results also suggest that both embodied and thematically related audio-visual representations of VAs positively affect the overall user experience. We extend this work by further analyzing the effects of the physicality of the agent’s environment (i.e., virtual vs. real). The results of the follow-up study indicate some benefits of virtual environments, e.g., regarding user engagement and learning of visual facts. We also evaluate some interaction effects between the representations of the virtual agent and its surrounding and discuss implications on the design of exhibition spaces. |

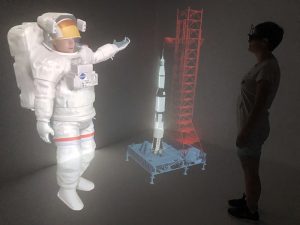

| Gregory F. Welch; Gerd Bruder; Peter Squire; Ryan Schubert Anticipating Widespread Augmented Reality: Insights from the 2018 AR Visioning Workshop Technical Report University of Central Florida and Office of Naval Research no. 786, 2019. @techreport{Welch2019b,

title = {Anticipating Widespread Augmented Reality: Insights from the 2018 AR Visioning Workshop},

author = {Gregory F. Welch and Gerd Bruder and Peter Squire and Ryan Schubert},

url = {https://stars.library.ucf.edu/ucfscholar/786/

https://sreal.ucf.edu/wp-content/uploads/2019/08/Welch2019b-1.pdf},

year = {2019},

date = {2019-08-06},

issuetitle = {Faculty Scholarship and Creative Works},

number = {786},

institution = {University of Central Florida and Office of Naval Research},

abstract = {In August of 2018 a group of academic, government, and industry experts in the field of Augmented Reality gathered for four days to consider potential technological and societal issues and opportunities that could accompany a future where AR is pervasive in location and duration of use. This report is intended to summarize some of the most novel and potentially impactful insights and opportunities identified by the group.

Our target audience includes AR researchers, government leaders, and thought leaders in general. It is our intent to share some compelling technological and societal questions that we believe are unique to AR, and to engender new thinking about the potentially impactful synergies associated with the convergence of AR and some other conventionally distinct areas of research.},

keywords = {},

pubstate = {published},

tppubtype = {techreport}

}

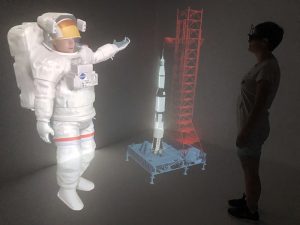

In August of 2018 a group of academic, government, and industry experts in the field of Augmented Reality gathered for four days to consider potential technological and societal issues and opportunities that could accompany a future where AR is pervasive in location and duration of use. This report is intended to summarize some of the most novel and potentially impactful insights and opportunities identified by the group.

Our target audience includes AR researchers, government leaders, and thought leaders in general. It is our intent to share some compelling technological and societal questions that we believe are unique to AR, and to engender new thinking about the potentially impactful synergies associated with the convergence of AR and some other conventionally distinct areas of research. |

| Jason Hochreiter Multi-touch Detection and Semantic Response on Non-parametric Rear-projection Surfaces PhD Thesis 2019. @phdthesis{Hochreiter2019,

title = {Multi-touch Detection and Semantic Response on Non-parametric Rear-projection Surfaces},

author = {Jason Hochreiter},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/08/Hochreiter2019-dissertation_Multi-touch-Detection-and-Semantic-Response-on-Non-parametric-Rea-1.pdf

https://stars.library.ucf.edu/do/search/?q=jason%20hochreiter&start=0&context=7014507&facet=},

year = {2019},

date = {2019-08-01},

keywords = {},

pubstate = {published},

tppubtype = {phdthesis}

}

|

| Kathleen Ingraham; Charles Hughes; Nicholas Westers; Lisa Dieker; Michael Hynes Using Digital Puppetry to Prepare Physicians to Address Non-Suicidal Self-Injury Among Teens Book Section In: Antona, Margherita; Stephanidis, Constantine (Ed.): Universal Access in Human-Computer Interaction. Theory, Methods and Tools, vol. 11572, pp. 555-568, Springer, Cham Switzerland, 2019. @incollection{Ingraham2019udp,

title = {Using Digital Puppetry to Prepare Physicians to Address Non-Suicidal Self-Injury Among Teens},

author = {Kathleen Ingraham and Charles Hughes and Nicholas Westers and Lisa Dieker and Michael Hynes},

editor = {Margherita Antona and Constantine Stephanidis},

doi = {10.1007/978-3-030-23560-4},

year = {2019},

date = {2019-07-26},

booktitle = {Universal Access in Human-Computer Interaction. Theory, Methods and Tools},

journal = {Lecture Notes in Computer Science},

volume = {11572},

pages = {555-568},

publisher = {Springer},

address = {Cham Switzerland},

keywords = {},

pubstate = {published},

tppubtype = {incollection}

}

|

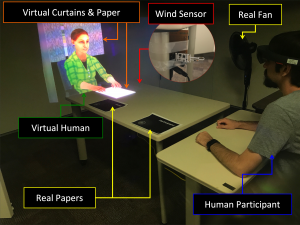

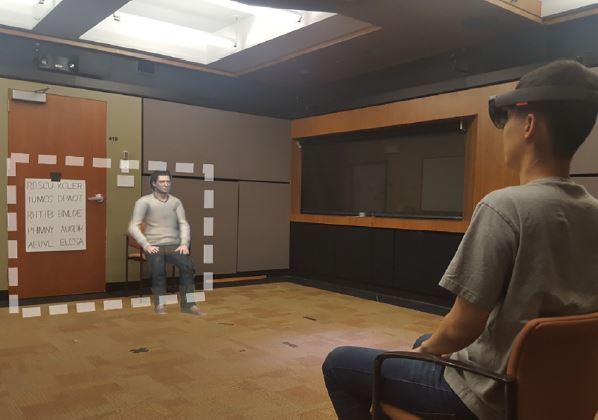

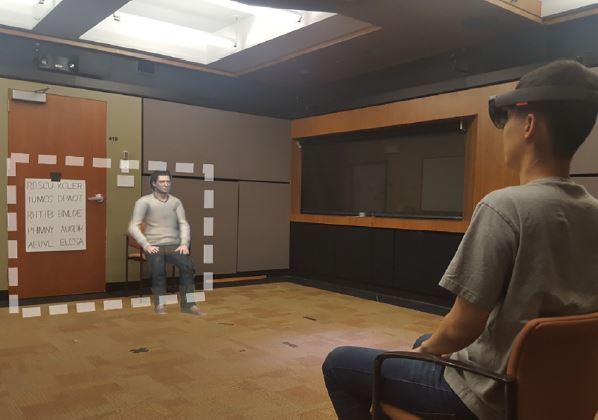

| Kangsoo Kim; Ryan Schubert; Jason Hochreiter; Gerd Bruder; Gregory Welch Blowing in the Wind: Increasing Social Presence with a Virtual Human via Environmental Airflow Interaction in Mixed Reality Journal Article In: Elsevier Computers and Graphics, vol. 83, no. October 2019, pp. 23-32, 2019. @article{Kim2019blow,

title = {Blowing in the Wind: Increasing Social Presence with a Virtual Human via Environmental Airflow Interaction in Mixed Reality},

author = {Kangsoo Kim and Ryan Schubert and Jason Hochreiter and Gerd Bruder and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/06/ELSEVIER_C_G2019_Special_BlowWindinMR_ICAT_EGVE2018_20190606_reduced.pdf},

doi = {10.1016/j.cag.2019.06.006},

year = {2019},

date = {2019-07-05},

journal = {Elsevier Computers and Graphics},

volume = {83},

number = {October 2019},

pages = {23-32},

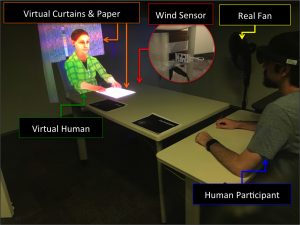

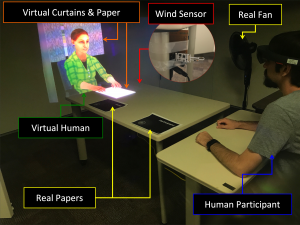

abstract = {In this paper, we describe two human-subject studies in which we explored and investigated the effects of subtle multimodal interaction on social presence with a virtual human (VH) in mixed reality (MR). In the studies, participants interacted with a VH, which was co-located with them across a table, with two different platforms: a projection based MR environment and an optical see-through head-mounted display (OST-HMD) based MR environment. While the two studies were not intended to be directly comparable, the second study with an OST-HMD was carefully designed based on the insights and lessons learned from the first projection-based study. For both studies, we compared two levels of gradually increased multimodal interaction: (i) virtual objects being affected by real airflow (e.g., as commonly experienced with fans during warm weather), and (ii) a VH showing awareness of this airflow. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher social presence with airflow influence than without it, and the social presence would be even higher when the VH showed awareness of the airflow. We observed an increased social presence in the second study when both physical–virtual interaction via airflow and VH awareness behaviors were present, but we observed no clear difference in participant-reported social presence with the VH in the first study. As the considered environmental factors are incidental to the direct interaction with the real human, i.e., they are not significant or necessary for the interaction task, they can provide a reasonably generalizable approach to increase social presence in HMD-based MR environments beyond the specific scenario and environment described here.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

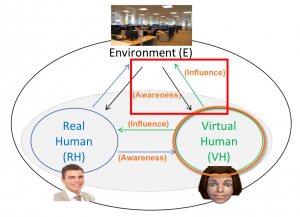

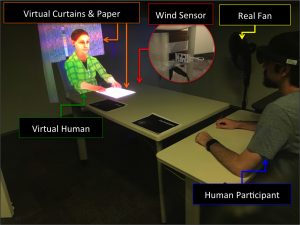

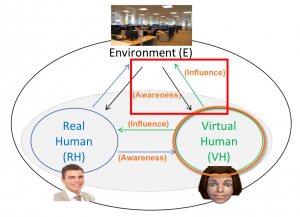

In this paper, we describe two human-subject studies in which we explored and investigated the effects of subtle multimodal interaction on social presence with a virtual human (VH) in mixed reality (MR). In the studies, participants interacted with a VH, which was co-located with them across a table, with two different platforms: a projection based MR environment and an optical see-through head-mounted display (OST-HMD) based MR environment. While the two studies were not intended to be directly comparable, the second study with an OST-HMD was carefully designed based on the insights and lessons learned from the first projection-based study. For both studies, we compared two levels of gradually increased multimodal interaction: (i) virtual objects being affected by real airflow (e.g., as commonly experienced with fans during warm weather), and (ii) a VH showing awareness of this airflow. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher social presence with airflow influence than without it, and the social presence would be even higher when the VH showed awareness of the airflow. We observed an increased social presence in the second study when both physical–virtual interaction via airflow and VH awareness behaviors were present, but we observed no clear difference in participant-reported social presence with the VH in the first study. As the considered environmental factors are incidental to the direct interaction with the real human, i.e., they are not significant or necessary for the interaction task, they can provide a reasonably generalizable approach to increase social presence in HMD-based MR environments beyond the specific scenario and environment described here. |

| Michelle Aebersold; Salam Daher; Cynthia Foronda; Jone Tiffany; Margaret Verkuyl Virtual/ Augmented Reality for Health Professions Education Symposium Conference INACSL 2019. @conference{inacsl_2019_arvr,

title = {Virtual/ Augmented Reality for Health Professions Education Symposium},

author = {Michelle Aebersold and Salam Daher and Cynthia Foronda and Jone Tiffany and Margaret Verkuyl},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/08/INACSL-ConferenceAR-VRSymposium.pdf},

year = {2019},

date = {2019-06-19},

organization = {INACSL},

keywords = {},

pubstate = {published},

tppubtype = {conference}

}

|

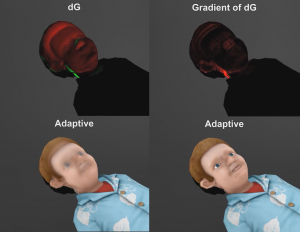

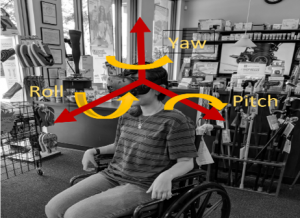

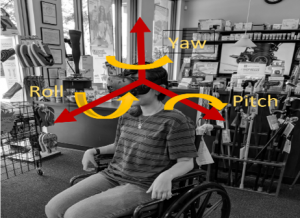

| Nahal Norouzi; Luke Bölling; Gerd Bruder; Gregory F. Welch Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement Journal Article In: Journal of Rehabilitation and Assistive Technologies Engineering, vol. 6, pp. 1-9, 2019. @article{Norouzi2019c,

title = {Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement},

author = {Nahal Norouzi and Luke Bölling and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/05/RATE2019_AugmentedRotations.pdf},

doi = {10.1177/2055668319841309},

year = {2019},

date = {2019-05-21},

journal = {Journal of Rehabilitation and Assistive Technologies Engineering},

volume = {6},

pages = {1-9},

abstract = {Introduction: A large body of research in the field of virtual reality (VR) is focused on making user interfaces more natural and intuitive by leveraging natural body movements to explore a virtual environment. For example, head-tracked user interfaces allow users to naturally look around a virtual space by moving their head. However, such approaches may not be appropriate for users with temporary or permanent limitations of their head movement.

Methods: In this paper, we present techniques that allow these users to get virtual benefits from a reduced range of physical movements. Specifically, we describe two techniques that augment virtual rotations relative to physical movement thresholds.

Results: We describe how each of the two techniques can be implemented with either a head tracker or an eye tracker, e.g., in cases when no physical head rotations are possible.

Conclusions: We discuss their differences and limitations and we provide guidelines for the practical use of such augmented user interfaces.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Salam Daher; Veenadhari Kollipara NCWIT Panel Presentation 05.05.2019. @misc{daher_2019_ncwit,

title = {NCWIT Panel},

author = {Salam Daher and Veenadhari Kollipara},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/08/ncwit2019.png},

year = {2019},

date = {2019-05-05},

keywords = {},

pubstate = {published},

tppubtype = {presentation}

}

|

| Mark Roman Miller; Hanseul Jun; Fernanda Herrera; Jacob Yu Villa; Greg Welch; Jeremy N Bailenson Social Interaction in Augmented Reality Journal Article In: PLOS ONE, vol. 14, no. 5, pp. 1-26, 2019. @article{Miller2019,

title = {Social Interaction in Augmented Reality},

author = {Mark Roman Miller and Hanseul Jun and Fernanda Herrera and Jacob Yu Villa and Greg Welch and Jeremy N Bailenson},

url = {https://doi.org/10.1371/journal.pone.0216290

https://sreal.ucf.edu/wp-content/uploads/2019/05/Miller2019.pdf},

doi = {10.1371/journal.pone.0216290},

year = {2019},

date = {2019-05-01},

journal = {PLOS ONE},

volume = {14},

number = {5},

pages = {1-26},

publisher = {Public Library of Science},

abstract = {There have been decades of research on the usability and educational value of augmented reality. However, less is known about how augmented reality affects social interactions. The current paper presents three studies that test the social psychological effects of augmented reality. Study 1 examined participants’ task performance in the presence of embodied agents and replicated the typical pattern of social facilitation and inhibition. Participants performed a simple task better, but a hard task worse, in the presence of an agent compared to when participants complete the tasks alone. Study 2 examined nonverbal behavior. Participants met an agent sitting in one of two chairs and were asked to choose one of the chairs to sit on. Participants wearing the headset never sat directly on the agent when given the choice of two seats, and while approaching, most of the participants chose the rotation direction to avoid turning their heads away from the agent. A separate group of participants chose a seat after removing the augmented reality headset, and the majority still avoided the seat previously occupied by the agent. Study 3 examined the social costs of using an augmented reality headset with others who are not using a headset. Participants talked in dyads, and augmented reality users reported less social connection to their partner compared to those not using augmented reality. Overall, these studies provide evidence suggesting that task performance, nonverbal behavior, and social connectedness are significantly affected by the presence or absence of virtual content.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

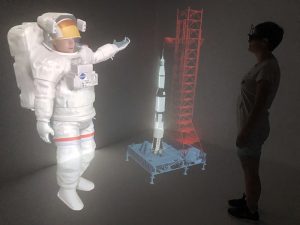

There have been decades of research on the usability and educational value of augmented reality. However, less is known about how augmented reality affects social interactions. The current paper presents three studies that test the social psychological effects of augmented reality. Study 1 examined participants’ task performance in the presence of embodied agents and replicated the typical pattern of social facilitation and inhibition. Participants performed a simple task better, but a hard task worse, in the presence of an agent compared to when participants complete the tasks alone. Study 2 examined nonverbal behavior. Participants met an agent sitting in one of two chairs and were asked to choose one of the chairs to sit on. Participants wearing the headset never sat directly on the agent when given the choice of two seats, and while approaching, most of the participants chose the rotation direction to avoid turning their heads away from the agent. A separate group of participants chose a seat after removing the augmented reality headset, and the majority still avoided the seat previously occupied by the agent. Study 3 examined the social costs of using an augmented reality headset with others who are not using a headset. Participants talked in dyads, and augmented reality users reported less social connection to their partner compared to those not using augmented reality. Overall, these studies provide evidence suggesting that task performance, nonverbal behavior, and social connectedness are significantly affected by the presence or absence of virtual content. |

| Myungho Lee Mediated Physicality: Inducing Illusory Physicality of Virtual Humans via Their Interactions with Physical Objects PhD Thesis University of Central Florida, 2019. @phdthesis{Lee2019,

title = {Mediated Physicality: Inducing Illusory Physicality of Virtual Humans via Their Interactions with Physical Objects},

author = {Myungho Lee},

url = {https://stars.library.ucf.edu/cgi/viewcontent.cgi?article=7295&context=etd

https://sreal.ucf.edu/wp-content/uploads/2019/06/Lee-2019-Dissertation.pdf},

year = {2019},

date = {2019-04-02},

school = {University of Central Florida},

abstract = {The term virtual human (VH) generally refers to a human-like entity comprised of computer graphics and/or physical body. In the associated research literature, a VH can be further classified as an avatar—a human-controlled VH, or an agent—a computer-controlled VH. Because of the resemblance with humans, people naturally distinguish them from non-human objects, and often treat them in ways similar to real humans. Sometimes people develop a sense of co-presence or social presence with the VH—a phenomenon that is often exploited for training simulations where the VH assumes the role of a human.

Prior research associated with VHs has primarily focused on the realism of various visual traits, e.g., appearance, shape, and gestures. However, our sense of the presence of other humans is also affected by other physical sensations conveyed through nearby space or physical objects. For example, we humans can perceive the presence of other individuals via the sound or tactile sensation of approaching footsteps, or by the presence of complementary or opposing forces when carrying a physical box with another person.

In my research, I exploit the fact that these sensations, when correlated with events in the shared space, affect one’s feeling of social/co-presence with another person. In this dissertation, I introduce novel methods for utilizing direct and indirect physical-virtual interactions with VHs to increase the sense of social/co-presence with the VHs—an approach I refer to as mediated physicality. I present results from controlled user studies, in various virtual environment settings, that support the idea that mediated physicality can increase a user’s sense of social/co-presence with the VH, and/or induced realistic social behavior. I discuss relationships to prior research, possible explanations for my findings, and areas for future research.},

keywords = {},

pubstate = {published},

tppubtype = {phdthesis}

}

|

![[POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents](https://sreal.ucf.edu/wp-content/uploads/2019/03/ieeevr_poster_thumbnail-1.png) | Salam Daher; Jason Hochreiter; Nahal Norouzi; Ryan Schubert; Gerd Bruder; Laura Gonzalez; Mindi Anderson; Desiree Diaz; Juan Cendan; Greg Welch [POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), 2019, 2019. @inproceedings{daher2019matching,

title = {[POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents},

author = {Salam Daher and Jason Hochreiter and Nahal Norouzi and Ryan Schubert and Gerd Bruder and Laura Gonzalez and Mindi Anderson and Desiree Diaz and Juan Cendan and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/03/IEEEVR2019_Poster_PVChildStudy.pdf},

year = {2019},

date = {2019-03-27},

publisher = {Proceedings of IEEE Virtual Reality (VR), 2019},

abstract = {Embodied virtual agents serving as patient simulators are widely used in medical training scenarios, ranging from physical patients to virtual patients presented via virtual and augmented reality technologies. Physical-virtual patients are a hybrid solution that combines the benefits of dynamic visuals integrated into a human-shaped physical form that can also present other cues, such as pulse, breathing sounds, and temperature. Sometimes in simulation the visuals and shape do not match. We carried out a human-participant study employing graduate nursing students in pediatric patient simulations comprising conditions associated with matching/non-matching of the visuals and shape.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Myungho Lee; Gerd Bruder; Greg Welch The Virtual Pole: Exploring Human Responses to Fear of Heights in Immersive Virtual Environments Journal Article In: Journal of Virtual Reality and Broadcasting, vol. 14(2017), no. 6, 2019, ISSN: 1860-2037. @article{Lee2018b,

title = {The Virtual Pole: Exploring Human Responses to Fear of Heights in Immersive Virtual Environments},

author = {Myungho Lee and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/06/Lee2019b.pdf},

doi = {10.20385/1860-2037/14.2017.6},

issn = {1860-2037},

year = {2019},

date = {2019-02-04},

journal = {Journal of Virtual Reality and Broadcasting},

volume = {14(2017)},

number = {6},

abstract = {Measuring how effective immersive virtual environments (IVEs) are in reproducing sensations as in similar situations in the real world is an important task for many application fields. In this paper, we present an experimental setup which we call the virtual pole, where we evaluated human responses to fear of heights. We conducted experiments where we analyzed correlations between subjective and physiological anxiety measures and the participant’s view direction. Our results show that the view direction plays an important role in subjective and physiological anxiety in an IVE due to the limited field of view, and that the subjective and physiological anxiety measures monotonically increase with the increasing height. In addition, we also found that participants recollected the virtual content they saw at the top more accurately compared to that at the medium height. We discuss the results and provide guidelines for simulations aimed at evoking fear of heights responses in IVEs.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Salam Daher Patient Simulators: the Past, Present, and Future Presentation 21.01.2019. @misc{daher_2019_otronicon,

title = {Patient Simulators: the Past, Present, and Future},

author = {Salam Daher},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/08/otronicon_2019.pdf},

year = {2019},

date = {2019-01-21},

keywords = {},

pubstate = {published},

tppubtype = {presentation}

}

|

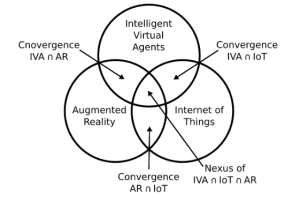

| Nahal Norouzi; Gerd Bruder; Brandon Belna; Stefanie Mutter; Damla Turgut; Greg Welch A Systematic Review of the Convergence of Augmented Reality, Intelligent Virtual Agents, and the Internet of Things Book Chapter In: Artificial Intelligence in IoT, pp. 37, Springer, 2019, ISBN: 978-3-030-04109-0. @inbook{Norouzi2019,

title = {A Systematic Review of the Convergence of Augmented Reality, Intelligent Virtual Agents, and the Internet of Things},

author = {Nahal Norouzi and Gerd Bruder and Brandon Belna and Stefanie Mutter and Damla Turgut and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/05/Norouzi-2019-IoT-AR-Final.pdf},

doi = {10.1007/978-3-030-04110-6_1},

isbn = {978-3-030-04109-0},

year = {2019},

date = {2019-01-10},

booktitle = {Artificial Intelligence in IoT},

pages = {37},

publisher = {Springer},

abstract = {In recent years we are beginning to see the convergence of three distinct research fields: Augmented Reality (AR), Intelligent Virtual Agents (IVAs), and the Internet of Things (IoT). Each of these has been classified as a disruptive technology for our society. Since their inception, the advancement of knowledge and development of technologies and systems in these fields was traditionally performed with limited input from each other. However, over the last years, we have seen research prototypes and commercial products being developed that cross the boundaries between these distinct fields to leverage their collective strengths. In this review paper, we summarize the body of literature published at the intersections between each pair of these fields, and we discuss a vision for the nexus of all three technologies.},

keywords = {},

pubstate = {published},

tppubtype = {inbook}

}

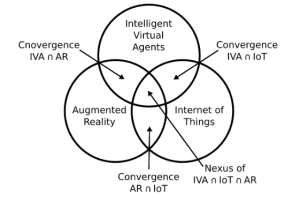

In recent years we are beginning to see the convergence of three distinct research fields: Augmented Reality (AR), Intelligent Virtual Agents (IVAs), and the Internet of Things (IoT). Each of these has been classified as a disruptive technology for our society. Since their inception, the advancement of knowledge and development of technologies and systems in these fields was traditionally performed with limited input from each other. However, over the last years, we have seen research prototypes and commercial products being developed that cross the boundaries between these distinct fields to leverage their collective strengths. In this review paper, we summarize the body of literature published at the intersections between each pair of these fields, and we discuss a vision for the nexus of all three technologies. |

2018

|

| Myungho Lee; Nahal Norouzi; Gerd Bruder; Pamela J. Wisniewski; Gregory F. Welch The Physical-virtual Table: Exploring the Effects of a Virtual Human's Physical Influence on Social Interaction Proceedings Article In: Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology, pp. 25:1–25:11, ACM, New York, NY, USA, 2018, ISBN: 978-1-4503-6086-9, (Best Paper Award). @inproceedings{Lee2018ac,

title = {The Physical-virtual Table: Exploring the Effects of a Virtual Human's Physical Influence on Social Interaction},

author = {Myungho Lee and Nahal Norouzi and Gerd Bruder and Pamela J. Wisniewski and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Lee2018ab.pdf},

doi = {10.1145/3281505.3281533},

isbn = {978-1-4503-6086-9},

year = {2018},

date = {2018-11-28},

booktitle = {Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology},

journal = {Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology},

pages = {25:1--25:11},

publisher = {ACM},

address = {New York, NY, USA},

series = {VRST '18},

abstract = {In this paper, we investigate the effects of the physical influence of a virtual human (VH) in the context of face-to-face interaction in augmented reality (AR). In our study, participants played a tabletop game with a VH, in which each player takes a turn and moves their own token along the designated spots on the shared table. We compared two conditions as follows: the VH in the virtual condition moves a virtual token that can only be seen through AR glasses, while the VH in the physical condition moves a physical token as the participants do; therefore the VH’s token can be seen even in the periphery of the AR glasses. For the physical condition, we designed an actuator system underneath the table. The actuator moves a magnet under the table which then moves the VH’s physical token over the surface of the table. Our results indicate that participants felt higher co-presence with the VH in the physical condition, and participants assessed the VH as a more physical entity compared to the VH in the virtual condition. We further observed transference effects when participants attributed the VH’s ability to move physical objects to other elements in the real world. Also, the VH’s physical influence improved participants’ overall experience with the VH. We discuss potential explanations for the findings and implications for future shared AR tabletop setups.},

note = {Best Paper Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

In this paper, we investigate the effects of the physical influence of a virtual human (VH) in the context of face-to-face interaction in augmented reality (AR). In our study, participants played a tabletop game with a VH, in which each player takes a turn and moves their own token along the designated spots on the shared table. We compared two conditions as follows: the VH in the virtual condition moves a virtual token that can only be seen through AR glasses, while the VH in the physical condition moves a physical token as the participants do; therefore the VH’s token can be seen even in the periphery of the AR glasses. For the physical condition, we designed an actuator system underneath the table. The actuator moves a magnet under the table which then moves the VH’s physical token over the surface of the table. Our results indicate that participants felt higher co-presence with the VH in the physical condition, and participants assessed the VH as a more physical entity compared to the VH in the virtual condition. We further observed transference effects when participants attributed the VH’s ability to move physical objects to other elements in the real world. Also, the VH’s physical influence improved participants’ overall experience with the VH. We discuss potential explanations for the findings and implications for future shared AR tabletop setups. |

| Kangsoo Kim Environmental Physical–Virtual Interaction to Improve Social Presence of a Virtual Human in Mixed Reality PhD Thesis The University of Central Florida, 2018. @phdthesis{Kim2018Thesis,

title = {Environmental Physical–Virtual Interaction to Improve Social Presence of a Virtual Human in Mixed Reality},

author = {Kangsoo Kim},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/KangsooKIM_PhD_Dissertation_20181119.pdf},

year = {2018},

date = {2018-11-21},

school = {The University of Central Florida},

abstract = {Interactive Virtual Humans (VHs) are increasingly used to replace or assist real humans in various applications, e.g., military and medical training, education, or entertainment. In most VH research, the perceived social presence with a VH, which denotes the user's sense of being socially connected or co-located with the VH, is the decisive factor in evaluating the social influence of the VH, a phenomenon where human users' emotions, opinions, or behaviors are affected by the VH. The purpose of this dissertation is to develop new knowledge about how characteristics and behaviors of a VH in a Mixed Reality (MR) environment can affect the perception of and resulting behavior with the VH, and to find effective and efficient ways to improve the quality and performance of social interactions with VHs. Important issues and challenges in real–virtual human interactions in MR, e.g., lack of physical–virtual interaction, are identified and discussed through several user studies incorporating interactions with VH systems. In the studies, different features of VHs are prototyped and evaluated, such as a VH's ability to be aware of and influence the surrounding physical environment, while measuring objective behavioral data as well as collecting subjective responses from the participants. The results from the studies support the idea that the VH's awareness and influence of the physical environment can improve not only the perceived social presence with the VH, but also the trustworthiness of the VH within a social context. The findings will contribute towards designing more influential VHs that can benefit a wide range of simulation and training applications for which a high level of social realism is important, and that can be more easily incorporated into our daily lives as social companions, providing reliable relationships and convenience in assisting with daily tasks.},

keywords = {},

pubstate = {published},

tppubtype = {phdthesis}

}

Interactive Virtual Humans (VHs) are increasingly used to replace or assist real humans in various applications, e.g., military and medical training, education, or entertainment. In most VH research, the perceived social presence with a VH, which denotes the user's sense of being socially connected or co-located with the VH, is the decisive factor in evaluating the social influence of the VH, a phenomenon where human users' emotions, opinions, or behaviors are affected by the VH. The purpose of this dissertation is to develop new knowledge about how characteristics and behaviors of a VH in a Mixed Reality (MR) environment can affect the perception of and resulting behavior with the VH, and to find effective and efficient ways to improve the quality and performance of social interactions with VHs. Important issues and challenges in real–virtual human interactions in MR, e.g., lack of physical–virtual interaction, are identified and discussed through several user studies incorporating interactions with VH systems. In the studies, different features of VHs are prototyped and evaluated, such as a VH's ability to be aware of and influence the surrounding physical environment, while measuring objective behavioral data as well as collecting subjective responses from the participants. The results from the studies support the idea that the VH's awareness and influence of the physical environment can improve not only the perceived social presence with the VH, but also the trustworthiness of the VH within a social context. The findings will contribute towards designing more influential VHs that can benefit a wide range of simulation and training applications for which a high level of social realism is important, and that can be more easily incorporated into our daily lives as social companions, providing reliable relationships and convenience in assisting with daily tasks. |

| Susanne Schmidt; Gerd Bruder; Frank Steinicke Effects of Embodiment on Generic and Content-Specific Intelligent Virtual Agents as Exhibition Guides Proceedings Article In: Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018, pp. 13-20, 2018, (Best Paper Award). @inproceedings{Schmidt2018a,

title = {Effects of Embodiment on Generic and Content-Specific Intelligent Virtual Agents as Exhibition Guides},

author = {Susanne Schmidt and Gerd Bruder and Frank Steinicke},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/01/Schmidt2018a.pdf},

doi = {10.2312/egve.20181309},

year = {2018},

date = {2018-11-07},

booktitle = {Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018},

pages = {13-20},

abstract = {Intelligent Virtual Agents (IVAs) received enormous attention in recent years due to significant improvements in voice communication technologies and the convergence of different research fields such as Machine Learning, Internet of Things, and Virtual Reality (VR). Interactive conversational IVAs can appear in different forms such as voice-only or with embodied audio-visual representations showing, for example, human-like contextually related or generic three-dimensional bodies. In this paper, we analyzed the benefits of different forms of virtual agents in the context of a VR exhibition space. Our results suggest positive evidence showing large benefits of both embodied and thematically related audio-visual representations of IVAs. We discuss implications and suggestions for content developers to design believable virtual agents in the context of such installations.},

note = {Best Paper Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

Intelligent Virtual Agents (IVAs) received enormous attention in recent years due to significant improvements in voice communication technologies and the convergence of different research fields such as Machine Learning, Internet of Things, and Virtual Reality (VR). Interactive conversational IVAs can appear in different forms such as voice-only or with embodied audio-visual representations showing, for example, human-like contextually related or generic three-dimensional bodies. In this paper, we analyzed the benefits of different forms of virtual agents in the context of a VR exhibition space. Our results suggest positive evidence showing large benefits of both embodied and thematically related audio-visual representations of IVAs. We discuss implications and suggestions for content developers to design believable virtual agents in the context of such installations. |

| Kangsoo Kim; Gerd Bruder; Gregory F. Welch Blowing in the Wind: Increasing Copresence with a Virtual Human via Airflow Influence in Augmented Reality Proceedings Article In: Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018, pp. 183-190, 2018, (Honorable Mention Award). @inproceedings{Kim2018c,

title = {Blowing in the Wind: Increasing Copresence with a Virtual Human via Airflow Influence in Augmented Reality},

author = {Kangsoo Kim and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/10/Kim_Airflow_ICAT_EGVE2018.pdf},

doi = {10.2312/egve.20181332},

year = {2018},

date = {2018-11-07},

booktitle = {Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018},

pages = {183-190},

abstract = {In a social context where two or more interlocutors interact with each other in the same space, one's sense of copresence with the others is an important factor for the quality of communication and engagement in the interaction. Although augmented reality (AR) technology enables the superposition of virtual humans (VHs) as interlocutors in the real world, the resulting sense of copresence is usually far lower than with a real human interlocutor.

In this paper, we describe a human-subject study in which we explored and investigated the effects that subtle multi-modal interaction between the virtual environment and the real world, where a VH and human participants were co-located, can have on copresence. We compared two levels of gradually increased multi-modal interaction: (i) virtual objects being affected by real airflow as commonly experienced with fans in summer, and (ii) a VH showing awareness of this airflow. We chose airflow as one example of an environmental factor that can noticeably affect both the real and virtual worlds, and also cause subtle responses in interlocutors. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher copresence with airflow influence than without it, and the copresence would be even higher when the VH shows awareness of the airflow. The statistical analysis with the participant-reported copresence scores showed that there was an improvement of the perceived copresence with the VH when both the physical–virtual interactivity via airflow and the VH's awareness behaviors were present together. As the considered environmental factors are directed at the VH, i.e., they are not part of the direct interaction with the real human, they can provide a reasonably generalizable approach to support copresence in AR beyond the particular use case in the present experiment.},

note = {Honorable Mention Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

In a social context where two or more interlocutors interact with each other in the same space, one's sense of copresence with the others is an important factor for the quality of communication and engagement in the interaction. Although augmented reality (AR) technology enables the superposition of virtual humans (VHs) as interlocutors in the real world, the resulting sense of copresence is usually far lower than with a real human interlocutor.

In this paper, we describe a human-subject study in which we explored and investigated the effects that subtle multi-modal interaction between the virtual environment and the real world, where a VH and human participants were co-located, can have on copresence. We compared two levels of gradually increased multi-modal interaction: (i) virtual objects being affected by real airflow as commonly experienced with fans in summer, and (ii) a VH showing awareness of this airflow. We chose airflow as one example of an environmental factor that can noticeably affect both the real and virtual worlds, and also cause subtle responses in interlocutors. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher copresence with airflow influence than without it, and the copresence would be even higher when the VH shows awareness of the airflow. The statistical analysis with the participant-reported copresence scores showed that there was an improvement of the perceived copresence with the VH when both the physical–virtual interactivity via airflow and the VH's awareness behaviors were present together. As the considered environmental factors are directed at the VH, i.e., they are not part of the direct interaction with the real human, they can provide a reasonably generalizable approach to support copresence in AR beyond the particular use case in the present experiment. |

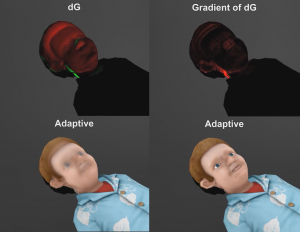

| Ryan Schubert; Gerd Bruder; Greg Welch Adaptive filtering of physical-virtual artifacts for synthetic animatronics Proceedings Article In: Bruder, G.; Cobb, S.; Yoshimoto, S. (Eds.): ICAT-EGVE 2018 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments, Limassol, Cyprus, November 7-9 2018, 2018. @inproceedings{Schubert2018,

title = {Adaptive filtering of physical-virtual artifacts for synthetic animatronics},

author = {Ryan Schubert and Gerd Bruder and Greg Welch},

editor = {G. Bruder and S. Cobb and S. Yoshimoto},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/01/Schubert2018.pdf},

year = {2018},

date = {2018-11-07},

booktitle = {ICAT-EGVE 2018 - International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments, Limassol, Cyprus, November 7-9 2018},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents](https://sreal.ucf.edu/wp-content/uploads/2019/03/ieeevr_poster_thumbnail-1.png)