2020

|

| Nahal Norouzi Augmented Reality Animals: Are They Our Future Companions? Presentation 22.03.2020, (IEEE VR 2020 Doctoral Consortium). @misc{Norouzi2020,

title = {Augmented Reality Animals: Are They Our Future Companions?},

author = {Nahal Norouzi},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/03/vr20c-sub1054-cam-i5.pdf},

year = {2020},

date = {2020-03-22},

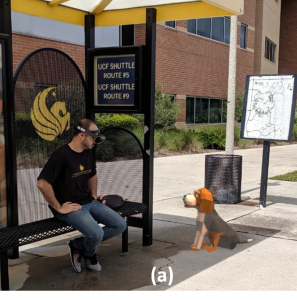

abstract = {Previous research in the field of human-animal interaction has captured the multitude of benefits of this relationship on different aspects of human health. Existing restrictions on accompanying pets/animals in some public spaces, allergies, and the inability to provide adequate care for animals/pets limit the possible benefits of this relationship. However, the increasing popularity of augmented reality and virtual reality devices, and the new social behaviors that have emerged with their use, offer the opportunity to use such platforms to realize virtual animals and to investigate their influence on human perception and behavior.

In this paper, two prior experiments are presented, which were designed to provide a better understanding of the requirements of virtual animals in augmented reality as companions and investigate some of their capabilities in the provision of support. Through these findings, future research directions are identified and discussed.

},

note = {IEEE VR 2020 Doctoral Consortium},

keywords = {},

pubstate = {published},

tppubtype = {presentation}

}


|

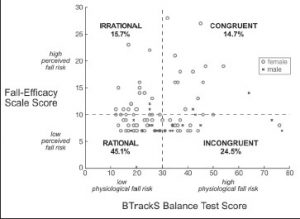

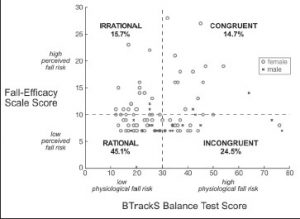

| Ladda Thiamwong; Mary Lou Sole; Boon Peng; Gregory F. Welch; Helen J. Huang; Jeffrey R. Stout Assessing Fall Risk Appraisal Through Combined Physiological and Perceived Fall Risk Measures Using Innovative Technology Journal Article In: Journal of Gerontological Nursing, vol. 46, no. 4, pp. 41–47, 2020. @article{Thiamwong2020aa,

title = {Assessing Fall Risk Appraisal Through Combined Physiological and Perceived Fall Risk Measures Using Innovative Technology},

author = {Ladda Thiamwong and Mary Lou Sole and Boon Peng and Gregory F. Welch and Helen J. Huang and Jeffrey R. Stout},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/08/Thiamwong2020aa.pdf},

year = {2020},

date = {2020-03-01},

journal = {Journal of Gerontological Nursing},

volume = {46},

number = {4},

pages = {41--47},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Austin Erickson; Nahal Norouzi; Kangsoo Kim; Joseph J. LaViola Jr.; Gerd Bruder; Gregory F. Welch Effects of Depth Information on Visual Target Identification Task Performance in Shared Gaze Environments Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 26, no. 5, pp. 1934-1944, 2020, ISSN: 1077-2626, (Presented at IEEE VR 2020). @article{Erickson2020c,

title = {Effects of Depth Information on Visual Target Identification Task Performance in Shared Gaze Environments},

author = {Austin Erickson and Nahal Norouzi and Kangsoo Kim and Joseph J. LaViola Jr. and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/02/shared_gaze_2_FINAL.pdf

https://www.youtube.com/watch?v=JQO_iosY62Y&t=6s, YouTube Presentation},

doi = {10.1109/TVCG.2020.2973054},

issn = {1077-2626},

year = {2020},

date = {2020-02-13},

urldate = {2020-02-13},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {26},

number = {5},

pages = {1934-1944},

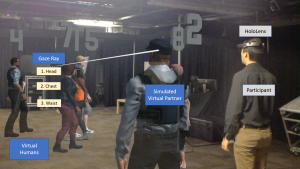

abstract = {Human gaze awareness is important for social and collaborative interactions. Recent technological advances in augmented reality (AR) displays and sensors provide us with the means to extend collaborative spaces with real-time dynamic AR indicators of one's gaze, for example via three-dimensional cursors or rays emanating from a partner's head. However, such gaze cues are only as useful as the quality of the underlying gaze estimation and the accuracy of the display mechanism. Depending on the type of visualization and the characteristics of the errors, AR gaze cues could either enhance or interfere with collaborations. In this paper, we present two human-subject studies in which we investigate the influence of angular and depth errors, target distance, and the type of gaze visualization on participants' performance and subjective evaluation during a collaborative task with a virtual human partner, where participants identified targets within a dynamically walking crowd. First, our results show that there is a significant difference in performance between the two gaze visualizations, ray and cursor, in conditions with simulated angular and depth errors: the ray visualization provided significantly faster response times and fewer errors compared to the cursor visualization. Second, our results show that under optimal conditions, among four different gaze visualization methods, a ray without depth information provides the worst performance and is rated lowest, while a combination of a ray and cursor with depth information is rated highest. We discuss the subjective and objective performance thresholds and provide guidelines for practitioners in this field.},

note = {Presented at IEEE VR 2020},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

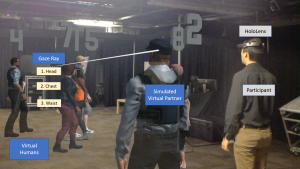

Human gaze awareness is important for social and collaborative interactions. Recent technological advances in augmented reality (AR) displays and sensors provide us with the means to extend collaborative spaces with real-time dynamic AR indicators of one's gaze, for example via three-dimensional cursors or rays emanating from a partner's head. However, such gaze cues are only as useful as the quality of the underlying gaze estimation and the accuracy of the display mechanism. Depending on the type of the visualization, and the characteristics of the errors, AR gaze cues could either enhance or interfere with collaborations. In this paper, we present two human-subject studies in which we investigate the influence of angular and depth errors, target distance, and the type of gaze visualization on participants' performance and subjective evaluation during a collaborative task with a virtual human partner, where participants identified targets within a dynamically walking crowd. First, our results show that there is a significant difference in performance for the two gaze visualizations ray and cursor in conditions with simulated angular and depth errors: the ray visualization provided significantly faster response times and fewer errors compared to the cursor visualization. Second, our results show that under optimal conditions, among four different gaze visualization methods, a ray without depth information provides the worst performance and is rated lowest, while a combination of a ray and cursor with depth information is rated highest. We discuss the subjective and objective performance thresholds and provide guidelines for practitioners in this field. |

| Andrei State; Herman Towles; Tyler Johnson; Ryan Schubert; Brendan Walters; Greg Welch; Henry Fuchs The A-Desk: A Unified Workspace of the Future Journal Article In: IEEE Computer Graphics and Applications, vol. 40, no. 1, pp. 56-71, 2020, ISSN: 1558-1756. @article{State2020aa,

title = {The A-Desk: A Unified Workspace of the Future},

author = {Andrei State and Herman Towles and Tyler Johnson and Ryan Schubert and Brendan Walters and Greg Welch and Henry Fuchs},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/01/State2020aa-1.pdf},

doi = {10.1109/MCG.2019.2951273},

issn = {1558-1756},

year = {2020},

date = {2020-01-01},

journal = {IEEE Computer Graphics and Applications},

volume = {40},

number = {1},

pages = {56-71},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

2019

|

| Myungho Lee; Nahal Norouzi; Gerd Bruder; Pamela J. Wisniewski; Gregory F. Welch Mixed Reality Tabletop Gameplay: Social Interaction with a Virtual Human Capable of Physical Influence Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 24, no. 8, pp. 1-12, 2019, ISSN: 1077-2626. @article{Lee2020,

title = {Mixed Reality Tabletop Gameplay: Social Interaction with a Virtual Human Capable of Physical Influence},

author = {Myungho Lee and Nahal Norouzi and Gerd Bruder and Pamela J. Wisniewski and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/12/TVCG_Physical_Virtual_Table_2019.pdf},

doi = {10.1109/TVCG.2019.2959575},

issn = {1077-2626},

year = {2019},

date = {2019-12-18},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {24},

number = {8},

pages = {1-12},

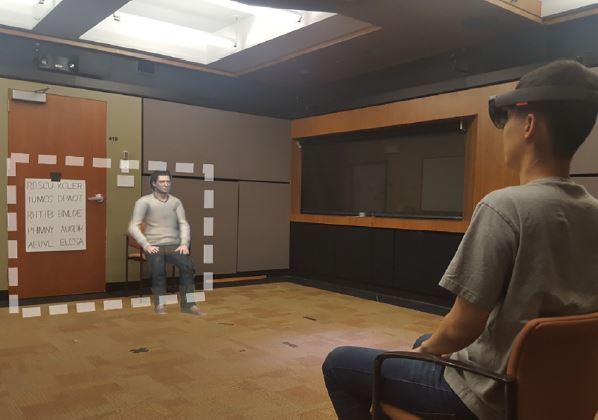

abstract = {In this paper, we investigate the effects of the physical influence of a virtual human (VH) in the context of face-to-face interaction in a mixed reality environment. In Experiment 1, participants played a tabletop game with a VH, in which each player takes a turn and moves their own token along the designated spots on the shared table. We compared two conditions as follows: the VH in the virtual condition moves a virtual token that can only be seen through augmented reality (AR) glasses, while the VH in the physical condition moves a physical token as the participants do; therefore the VH’s token can be seen even in the periphery of the AR glasses. For the physical condition, we designed an actuator system underneath the table. The actuator moves a magnet under the table, which then moves the VH’s physical token over the surface of the table. Our results indicate that participants felt higher co-presence with the VH in the physical condition, and participants assessed the VH as a more physical entity compared to the VH in the virtual condition. We further observed transference effects when participants attributed the VH’s ability to move physical objects to other elements in the real world. Also, the VH’s physical influence improved participants’ overall experience with the VH. In Experiment 2, we further looked into the question of how the physical-virtual latency in movements affected the perceived plausibility of the VH’s interaction with the real world. Our results indicate that a slight temporal difference between the physical token reacting to the virtual hand’s movement increased the perceived realism and causality of the mixed reality interaction. We discuss potential explanations for the findings and implications for future shared mixed reality tabletop setups.

},

keywords = {},

pubstate = {published},

tppubtype = {article}

}


|

| Kangsoo Kim; Nahal Norouzi; Tiffany Losekamp; Gerd Bruder; Mindi Anderson; Gregory Welch Effects of Patient Care Assistant Embodiment and Computer Mediation on User Experience Proceedings Article In: Proceedings of the IEEE International Conference on Artificial Intelligence & Virtual Reality (AIVR), pp. 17-24, IEEE, 2019. @inproceedings{Kim2019epc,

title = {Effects of Patient Care Assistant Embodiment and Computer Mediation on User Experience},

author = {Kangsoo Kim and Nahal Norouzi and Tiffany Losekamp and Gerd Bruder and Mindi Anderson and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/11/AIVR2019_Caregiver.pdf},

doi = {10.1109/AIVR46125.2019.00013},

year = {2019},

date = {2019-12-09},

booktitle = {Proceedings of the IEEE International Conference on Artificial Intelligence & Virtual Reality (AIVR)},

pages = {17-24},

publisher = {IEEE},

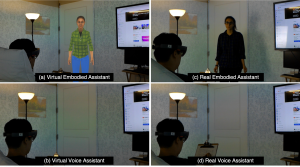

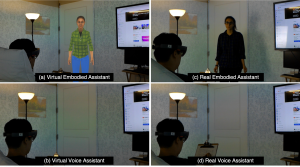

abstract = {Providers of patient care environments are facing an increasing demand for technological solutions that can facilitate increased patient satisfaction while being cost-effective and practically feasible. Recent developments with respect to smart hospital room setups and smart home care environments have immense potential to leverage advances in technologies such as Intelligent Virtual Agents, Internet of Things devices, and Augmented Reality to enable novel forms of patient interaction with caregivers and their environment.

In this paper, we present a human-subjects study in which we compared four types of simulated patient care environments for a range of typical tasks. In particular, we tested two forms of caregiver mediation with a real person or a virtual agent, and we compared two forms of caregiver embodiment with disembodied verbal or embodied interaction. Our results show that, as expected, a real caregiver provides the optimal user experience, but an embodied virtual assistant is also a viable option for patient care environments, providing significantly higher social presence and engagement than voice-only interaction. We discuss the implications for the fields of patient care and digital assistants.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

Providers of patient care environments are facing an increasing demand for technological solutions that can facilitate increased patient satisfaction while being cost effective and practically feasible. Recent developments with respect to smart hospital room setups and smart home care environments have an immense potential to leverage advances in technologies such as Intelligent Virtual Agents, Internet of Things devices, and Augmented Reality to enable novel forms of patient interaction with caregivers and their environment.

In this paper, we present a human-subjects study in which we compared four types of simulated patient care environments for a range of typical tasks. In particular, we tested two forms of caregiver mediation with a real person or a virtual agent, and we compared two forms of caregiver embodiment with disembodied verbal or embodied interaction. Our results show that, as expected, a real caregiver provides the optimal user experience but an embodied virtual assistant is also a viable option for patient care environments, providing significantly higher social presence and engagement than voice-only interaction. We discuss the implications in the field of patient care and digital assistant. |

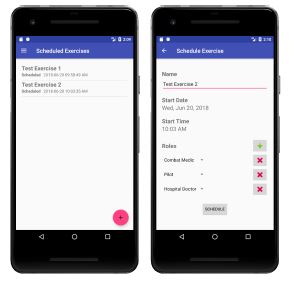

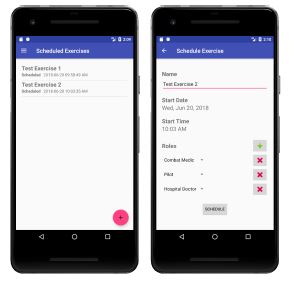

| Alyssa Tanaka; Brian Stensrud; Greg Welch; Fransisco Guido-Sanz; Lee Sciarini; Henry Phillips The Development and Implementation of Speech Understanding for Medical Handoff Training Proceedings Article In: Proceedings of 2019 Interservice/Industry Training, Simulation, and Education Conference (I/ITSEC 2019), Orlando, Florida, U.S.A., 2019. @inproceedings{Tanaka2019aa,

title = {The Development and Implementation of Speech Understanding for Medical Handoff Training},

author = {Alyssa Tanaka and Brian Stensrud and Greg Welch and Fransisco Guido-Sanz and Lee Sciarini and Henry Phillips},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/12/Tanaka2019aa.pdf},

year = {2019},

date = {2019-12-01},

booktitle = {Proceedings of 2019 Interservice/Industry Training, Simulation, and Education Conference (I/ITSEC 2019)},

address = {Orlando, Florida, U.S.A.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Nahal Norouzi; Austin Erickson; Kangsoo Kim; Ryan Schubert; Joseph J. LaViola Jr.; Gerd Bruder; Gregory F. Welch Effects of Shared Gaze Parameters on Visual Target Identification Task Performance in Augmented Reality Proceedings Article In: Proceedings of the ACM Symposium on Spatial User Interaction (SUI), pp. 12:1-12:11, ACM, 2019, ISBN: 978-1-4503-6975-6/19/10, (Best Paper Award). @inproceedings{Norouzi2019esg,

title = {Effects of Shared Gaze Parameters on Visual Target Identification Task Performance in Augmented Reality},

author = {Nahal Norouzi and Austin Erickson and Kangsoo Kim and Ryan Schubert and Joseph J. LaViola Jr. and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/a12-norouzi.pdf},

doi = {10.1145/3357251.3357587},

isbn = {978-1-4503-6975-6/19/10},

year = {2019},

date = {2019-10-19},

urldate = {2019-10-19},

booktitle = {Proceedings of the ACM Symposium on Spatial User Interaction (SUI)},

pages = {12:1-12:11},

publisher = {ACM},

abstract = {Augmented reality (AR) technologies provide a shared platform for users to collaborate in a physical context involving both real and virtual content. To enhance the quality of interaction between AR users, researchers have proposed augmenting users' interpersonal space with embodied cues such as their gaze direction. While beneficial in achieving improved interpersonal spatial communication, such shared gaze environments suffer from multiple types of errors related to eye tracking and networking that can reduce objective performance and subjective experience.

In this paper, we conducted a human-subject study to understand the impact of accuracy, precision, latency, and dropout-based errors on users' performance when using shared gaze cues to identify a target among a crowd of people. We simulated varying amounts of error and target distances and measured participants' objective performance through their response time and error rate, and their subjective experience and cognitive load through questionnaires. We found some significant differences suggesting that the simulated error levels had stronger effects on participants' performance than target distance, with accuracy and latency having a high impact on participants' error rate. We also observed that participants assessed their own performance as lower than it objectively was, and we discuss implications for practical shared gaze applications.},

note = {Best Paper Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Kangsoo Kim; Austin Erickson; Alexis Lambert; Gerd Bruder; Gregory F. Welch Effects of Dark Mode on Visual Fatigue and Acuity in Optical See-Through Head-Mounted Displays Proceedings Article In: Proceedings of the ACM Symposium on Spatial User Interaction (SUI), pp. 9:1-9:9, ACM, 2019, ISBN: 978-1-4503-6975-6/19/10. @inproceedings{Kim2019edm,

title = {Effects of Dark Mode on Visual Fatigue and Acuity in Optical See-Through Head-Mounted Displays},

author = {Kangsoo Kim and Austin Erickson and Alexis Lambert and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Kim2019edm.pdf},

doi = {10.1145/3357251.3357584},

isbn = {978-1-4503-6975-6/19/10},

year = {2019},

date = {2019-10-19},

urldate = {2019-10-19},

booktitle = {Proceedings of the ACM Symposium on Spatial User Interaction (SUI)},

pages = {9:1-9:9},

publisher = {ACM},

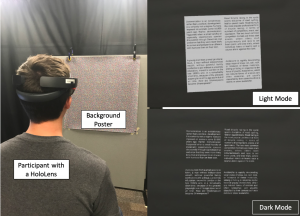

abstract = {Light-on-dark color schemes, so-called "Dark Mode," are becoming more and more popular over a wide range of display technologies and application fields. Many people who have to look at computer screens for hours at a time, such as computer programmers and computer graphics artists, indicate a preference for switching colors on a computer screen from dark text on a light background to light text on a dark background due to perceived advantages related to visual comfort and acuity, specifically when working in low-light environments.

In this paper, we investigate the effects of dark mode color schemes in the field of optical see-through head-mounted displays (OST-HMDs), where the characteristic "additive" light model implies that bright graphics are visible but dark graphics are transparent. We describe a human-subject study in which we evaluated a normal and inverted color mode in front of different physical backgrounds and under different lighting conditions. Our results show that dark mode graphics on OST-HMDs have significant benefits for visual acuity, fatigue, and usability, while user preferences depend largely on the lighting in the physical environment. We discuss the implications of these effects on user interfaces and applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

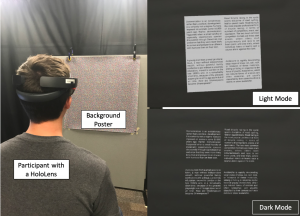

Light-on-dark color schemes, so-called "Dark Mode," are becoming more and more popular over a wide range of display technologies and application fields. Many people who have to look at computer screens for hours at a time, such as computer programmers and computer graphics artists, indicate a preference for switching colors on a computer screen from dark text on a light background to light text on a dark background due to perceived advantages related to visual comfort and acuity, specifically when working in low-light environments.

In this paper, we investigate the effects of dark mode color schemes in the field of optical see-through head-mounted displays (OST-HMDs), where the characteristic "additive" light model implies that bright graphics are visible but dark graphics are transparent. We describe a human-subject study in which we evaluated a normal and inverted color mode in front of different physical backgrounds and among different lighting conditions. Our results show that dark mode graphics on OST-HMDs have significant benefits for visual acuity, fatigue, and usability, while user preferences depend largely on the lighting in the physical environment. We discuss the implications of these effects on user interfaces and applications. |

| Kendra Richards; Nikhil Mahalanobis; Kangsoo Kim; Ryan Schubert; Myungho Lee; Salam Daher; Nahal Norouzi; Jason Hochreiter; Gerd Bruder; Gregory F. Welch Analysis of Peripheral Vision and Vibrotactile Feedback During Proximal Search Tasks with Dynamic Virtual Entities in Augmented Reality Proceedings Article In: Proceedings of the ACM Symposium on Spatial User Interaction (SUI), pp. 3:1-3:9, ACM, 2019, ISBN: 978-1-4503-6975-6/19/10. @inproceedings{Richards2019b,

title = {Analysis of Peripheral Vision and Vibrotactile Feedback During Proximal Search Tasks with Dynamic Virtual Entities in Augmented Reality},

author = {Kendra Richards and Nikhil Mahalanobis and Kangsoo Kim and Ryan Schubert and Myungho Lee and Salam Daher and Nahal Norouzi and Jason Hochreiter and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Richards2019b.pdf},

doi = {10.1145/3357251.3357585},

isbn = {978-1-4503-6975-6/19/10},

year = {2019},

date = {2019-10-19},

booktitle = {Proceedings of the ACM Symposium on Spatial User Interaction (SUI)},

pages = {3:1-3:9},

publisher = {ACM},

abstract = {A primary goal of augmented reality (AR) is to seamlessly embed virtual content into a real environment. There are many factors that can affect the perceived physicality and co-presence of virtual entities, including the hardware capabilities, the fidelity of the virtual behaviors, and sensory feedback associated with the interactions. In this paper, we present a study investigating participants' perceptions and behaviors during a time-limited search task in close proximity with virtual entities in AR. In particular, we analyze the effects of (i) visual conflicts in the periphery of an optical see-through head-mounted display, a Microsoft HoloLens, (ii) overall lighting in the physical environment, and (iii) multimodal feedback based on vibrotactile transducers mounted on a physical platform. Our results show significant benefits of vibrotactile feedback and reduced peripheral lighting for spatial and social presence, and engagement. We discuss implications of these effects for AR applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Austin Erickson; Ryan Schubert; Kangsoo Kim; Gerd Bruder; Greg Welch Is It Cold in Here or Is It Just Me? Analysis of Augmented Reality Temperature Visualization for Computer-Mediated Thermoception Proceedings Article In: Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), pp. 319-327, IEEE, 2019, ISBN: 978-1-7281-4765-9. @inproceedings{Erickson2019iic,

title = {Is It Cold in Here or Is It Just Me? Analysis of Augmented Reality Temperature Visualization for Computer-Mediated Thermoception},

author = {Austin Erickson and Ryan Schubert and Kangsoo Kim and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Erickson2019IIC.pdf},

doi = {10.1109/ISMAR.2019.00046},

isbn = {978-1-7281-4765-9},

year = {2019},

date = {2019-10-19},

urldate = {2019-10-19},

booktitle = {Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR)},

pages = {319-327},

publisher = {IEEE},

abstract = {Modern augmented reality (AR) head-mounted displays comprise a multitude of sensors that allow them to sense the environment around them. We have extended these capabilities by mounting two heat-wavelength infrared cameras to a Microsoft HoloLens, facilitating the acquisition of thermal data and enabling stereoscopic thermal overlays in the user’s augmented view. The ability to visualize live thermal information opens several avenues of investigation on how that thermal awareness may affect a user’s thermoception. We present a human-subject study in which we simulated different temperature shifts using either heat vision overlays or 3D AR virtual effects associated with thermal cause-effect relationships (e.g., flames burn and ice cools). We further investigated differences in estimated temperatures when the stimuli were applied to either the user’s body or their environment. Our analysis showed significant effects and first trends for the AR virtual effects and heat vision, respectively, on participants’ temperature estimates for their body and the environment, though with different strengths and characteristics, which we discuss in this paper.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Nahal Norouzi; Gerd Bruder; Jeremy Bailenson; Greg Welch Investigating Augmented Reality Animals as Companions Workshop Adjunct proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR) Mixed/Augmented Reality and Mental Health Workshop, 2019, IEEE, 2019, ISBN: 978-1-7281-4765-9. @workshop{Norouzi2019f,

title = {Investigating Augmented Reality Animals as Companions},

author = {Nahal Norouzi and Gerd Bruder and Jeremy Bailenson and Greg Welch },

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/ISMAR_Workshop_Paper__NN.pdf},

doi = {10.1109/ISMAR-Adjunct.2019.00104},

isbn = {978-1-7281-4765-9},

year = {2019},

date = {2019-10-18},

booktitle = {Adjunct proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR) Mixed/Augmented Reality and Mental Health Workshop, 2019},

pages = {371-374},

publisher = {IEEE},

abstract = {Human-animal interaction has been studied in a variety of settings and for a range of populations, with some findings pointing towards its benefits for physical, mental and social human health.

Technological advances have opened up new opportunities for researchers to replicate human-animal interactions with robotic and graphical animals, and to investigate human-animal relationships for different applications such as mental health and education. Although graphical animals have been studied in the past in the physical health and education domains, their realizations were usually bound to computer screens, limiting their full potential, especially in terms of companionship and the provision of support.

In this work, we describe past research efforts investigating influences of human-animal interaction on mental health and different realizations of such animals. We discuss the idea that augmented reality could offer potential for human-animal interaction in terms of mental and social health, and propose several aspects of augmented reality animals that warrant further research for such interactions.},

keywords = {},

pubstate = {published},

tppubtype = {workshop}

}

Human-animal interaction has been studied in a variety of settings and for a range of populations, with some findings pointing towards its benefits for physical, mental and social human health.

Technological advances have opened up new opportunities for researchers to replicate human-animal interactions with robotic and graphical animals, and to investigate human-animal relationships for different applications such as mental health and education. Although graphical animals have been studied in the past in the physical health and education domains, their realizations were usually bound to computer screens, limiting their full potential, especially in terms of companionship and the provision of support.

In this work, we describe past research efforts investigating influences of human-animal interaction on mental health and different realizations of such animals. We discuss the idea that augmented reality could offer potential for human-animal interaction in terms of mental and social health, and propose several aspects of augmented reality animals that warrant further research for such interactions. |

| Nahal Norouzi; Kangsoo Kim; Myungho Lee; Ryan Schubert; Austin Erickson; Jeremy Bailenson; Gerd Bruder; Greg Welch

Walking Your Virtual Dog: Analysis of Awareness and Proxemics with Simulated Support Animals in Augmented Reality Proceedings Article In: Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), 2019, pp. 253-264, IEEE, 2019, ISBN: 978-1-7281-4765-9. @inproceedings{Norouzi2019cb,

title = {Walking Your Virtual Dog: Analysis of Awareness and Proxemics with Simulated Support Animals in Augmented Reality},

author = {Nahal Norouzi and Kangsoo Kim and Myungho Lee and Ryan Schubert and Austin Erickson and Jeremy Bailenson and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Final__AR_Animal_ISMAR.pdf},

doi = {10.1109/ISMAR.2019.00040},

isbn = {978-1-7281-4765-9},

year = {2019},

date = {2019-10-16},

urldate = {2019-10-16},

booktitle = {Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), 2019},

pages = {253-264},

publisher = {IEEE},

abstract = {Domestic animals have a long history of enriching human lives physically and mentally by filling a variety of roles, such as service animals, emotional support animals, companions, and pets. Despite this, technological realizations of such animals in augmented reality (AR) are largely underexplored in terms of their behavior and interactions as well as the effects they might have on human users' perception or behavior. In this paper, we describe a simulated virtual companion animal, in the form of a dog, in a shared AR space. We investigated its effects on participants' perception and behavior, including locomotion related to proxemics, with respect to their AR dog and other real people in the environment. We conducted a 2 by 2 mixed factorial human-subject study, in which we varied (i) the AR dog's awareness and behavior with respect to other people in the physical environment and (ii) the awareness and behavior of those people with respect to the AR dog. Our results show that having an AR companion dog changes participants' locomotion behavior, proxemics, and social interaction with other people who can or cannot see the AR dog. We also show that the AR dog's simulated awareness and behaviors have an impact on participants' perception, including co-presence, animalism, perceived physicality, and the dog's perceived awareness of the participant and environment. We discuss our findings and present insights and implications for the realization of effective AR animal companions.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

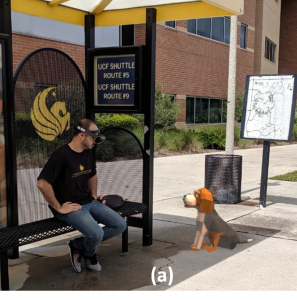

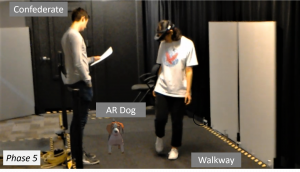

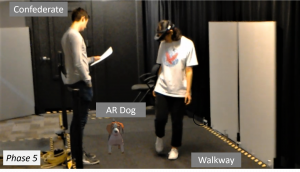

Domestic animals have a long history of enriching human lives physically and mentally by filling a variety of roles, such as service animals, emotional support animals, companions, and pets. Despite this, technological realizations of such animals in augmented reality (AR) are largely underexplored in terms of their behavior and interactions as well as the effects they might have on human users' perception or behavior. In this paper, we describe a simulated virtual companion animal, in the form of a dog, in a shared AR space. We investigated its effects on participants' perception and behavior, including locomotion related to proxemics, with respect to their AR dog and other real people in the environment. We conducted a 2 by 2 mixed factorial human-subject study, in which we varied (i) the AR dog's awareness and behavior with respect to other people in the physical environment and (ii) the awareness and behavior of those people with respect to the AR dog. Our results show that having an AR companion dog changes participants' locomotion behavior, proxemics, and social interaction with other people who can or cannot see the AR dog. We also show that the AR dog's simulated awareness and behaviors have an impact on participants' perception, including co-presence, animalism, perceived physicality, and the dog's perceived awareness of the participant and environment. We discuss our findings and present insights and implications for the realization of effective AR animal companions. |
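Proxemics analyses like the one described above typically start from tracked floor-plane positions of the people involved. The following is a minimal, generic sketch of that first step, not the authors' analysis pipeline: it computes the closest approach between two time-aligned trajectories and labels it with the approximate distance zones commonly cited from Edward T. Hall's proxemics (about 0.45 m, 1.2 m, and 3.6 m). The function names, the `(x, z)` metre convention, and the sample data are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # hypothetical (x, z) floor-plane position in metres

# Approximate upper bounds (metres) commonly cited for Hall's proxemic zones.
ZONES = [(0.45, "intimate"), (1.2, "personal"), (3.6, "social")]


def zone(distance_m: float) -> str:
    """Return the Hall zone label for an interpersonal distance."""
    for limit, name in ZONES:
        if distance_m < limit:
            return name
    return "public"


def min_distance(a: List[Point], b: List[Point]) -> float:
    """Closest approach between two trajectories sampled at the same timestamps."""
    if len(a) != len(b) or not a:
        raise ValueError("trajectories must be non-empty and time-aligned")
    return min(math.dist(p, q) for p, q in zip(a, b))


# Illustrative data only: a participant walking past another person.
participant = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0)]
other_person = [(3.0, 0.0), (2.0, 0.0), (1.8, 0.8)]

closest = min_distance(participant, other_person)
print(f"closest approach: {closest:.2f} m ({zone(closest)})")
```

The same per-sample distances could also be computed between the participant and the virtual dog's rendered position, since both are available to the AR application.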

| Gregory F. Welch; Gerd Bruder; Peter Squire; Ryan Schubert Anticipating Widespread Augmented Reality: Insights from the 2018 AR Visioning Workshop Technical Report University of Central Florida and Office of Naval Research no. 786, 2019. @techreport{Welch2019b,

title = {Anticipating Widespread Augmented Reality: Insights from the 2018 AR Visioning Workshop},

author = {Gregory F. Welch and Gerd Bruder and Peter Squire and Ryan Schubert},

url = {https://stars.library.ucf.edu/ucfscholar/786/

https://sreal.ucf.edu/wp-content/uploads/2019/08/Welch2019b-1.pdf},

year = {2019},

date = {2019-08-06},

issuetitle = {Faculty Scholarship and Creative Works},

number = {786},

institution = {University of Central Florida and Office of Naval Research},

abstract = {In August of 2018 a group of academic, government, and industry experts in the field of Augmented Reality gathered for four days to consider potential technological and societal issues and opportunities that could accompany a future where AR is pervasive in location and duration of use. This report is intended to summarize some of the most novel and potentially impactful insights and opportunities identified by the group.

Our target audience includes AR researchers, government leaders, and thought leaders in general. It is our intent to share some compelling technological and societal questions that we believe are unique to AR, and to engender new thinking about the potentially impactful synergies associated with the convergence of AR and some other conventionally distinct areas of research.},

keywords = {},

pubstate = {published},

tppubtype = {techreport}

}

In August of 2018 a group of academic, government, and industry experts in the field of Augmented Reality gathered for four days to consider potential technological and societal issues and opportunities that could accompany a future where AR is pervasive in location and duration of use. This report is intended to summarize some of the most novel and potentially impactful insights and opportunities identified by the group.

Our target audience includes AR researchers, government leaders, and thought leaders in general. It is our intent to share some compelling technological and societal questions that we believe are unique to AR, and to engender new thinking about the potentially impactful synergies associated with the convergence of AR and some other conventionally distinct areas of research. |

| Kangsoo Kim; Ryan Schubert; Jason Hochreiter; Gerd Bruder; Gregory Welch Blowing in the Wind: Increasing Social Presence with a Virtual Human via Environmental Airflow Interaction in Mixed Reality Journal Article In: Elsevier Computers and Graphics, vol. 83, no. October 2019, pp. 23-32, 2019. @article{Kim2019blow,

title = {Blowing in the Wind: Increasing Social Presence with a Virtual Human via Environmental Airflow Interaction in Mixed Reality},

author = {Kangsoo Kim and Ryan Schubert and Jason Hochreiter and Gerd Bruder and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/06/ELSEVIER_C_G2019_Special_BlowWindinMR_ICAT_EGVE2018_20190606_reduced.pdf},

doi = {10.1016/j.cag.2019.06.006},

year = {2019},

date = {2019-07-05},

journal = {Elsevier Computers and Graphics},

volume = {83},

number = {October 2019},

pages = {23-32},

abstract = {In this paper, we describe two human-subject studies in which we explored and investigated the effects of subtle multimodal interaction on social presence with a virtual human (VH) in mixed reality (MR). In the studies, participants interacted with a VH, which was co-located with them across a table, with two different platforms: a projection based MR environment and an optical see-through head-mounted display (OST-HMD) based MR environment. While the two studies were not intended to be directly comparable, the second study with an OST-HMD was carefully designed based on the insights and lessons learned from the first projection-based study. For both studies, we compared two levels of gradually increased multimodal interaction: (i) virtual objects being affected by real airflow (e.g., as commonly experienced with fans during warm weather), and (ii) a VH showing awareness of this airflow. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher social presence with airflow influence than without it, and the social presence would be even higher when the VH showed awareness of the airflow. We observed an increased social presence in the second study when both physical–virtual interaction via airflow and VH awareness behaviors were present, but we observed no clear difference in participant-reported social presence with the VH in the first study. As the considered environmental factors are incidental to the direct interaction with the real human, i.e., they are not significant or necessary for the interaction task, they can provide a reasonably generalizable approach to increase social presence in HMD-based MR environments beyond the specific scenario and environment described here.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

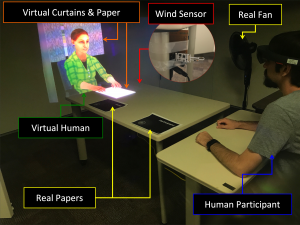

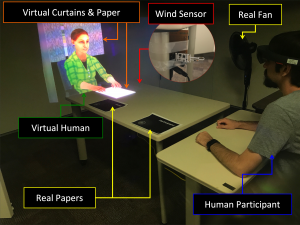

In this paper, we describe two human-subject studies in which we explored and investigated the effects of subtle multimodal interaction on social presence with a virtual human (VH) in mixed reality (MR). In the studies, participants interacted with a VH, which was co-located with them across a table, with two different platforms: a projection based MR environment and an optical see-through head-mounted display (OST-HMD) based MR environment. While the two studies were not intended to be directly comparable, the second study with an OST-HMD was carefully designed based on the insights and lessons learned from the first projection-based study. For both studies, we compared two levels of gradually increased multimodal interaction: (i) virtual objects being affected by real airflow (e.g., as commonly experienced with fans during warm weather), and (ii) a VH showing awareness of this airflow. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher social presence with airflow influence than without it, and the social presence would be even higher when the VH showed awareness of the airflow. We observed an increased social presence in the second study when both physical–virtual interaction via airflow and VH awareness behaviors were present, but we observed no clear difference in participant-reported social presence with the VH in the first study. As the considered environmental factors are incidental to the direct interaction with the real human, i.e., they are not significant or necessary for the interaction task, they can provide a reasonably generalizable approach to increase social presence in HMD-based MR environments beyond the specific scenario and environment described here. |

| Laura Gonzalez; Salam Daher; Gregory Welch Vera Real: Stroke Assessment Using a Physical Virtual Patient (PVP) Conference INACSL 2019. @conference{gonzalez_2019_vera,

title = {Vera Real: Stroke Assessment Using a Physical Virtual Patient (PVP)},

author = {Laura Gonzalez and Salam Daher and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/07/INACSL-_Conference_VERA.pdf},

year = {2019},

date = {2019-06-21},

organization = {INACSL},

abstract = {Introduction: Simulation has revolutionized the way we teach and learn, and the pedagogy of simulation continues to mature. Mannequins have limitations such as their inability to exhibit emotions, idle movements, or interactive patient gaze. As a result, students struggle with suspension of disbelief and may be unable to relate to the “patient” authentically. Physical virtual patients (PVP) are a new type of simulator that combines the physicality of mannequins with the richness of dynamic imagery such as blinking, smiling, and other facial expressions. The purpose of this study was to compare a traditional mannequin with a more realistic PVP head. The concept under consideration is realism and its influence on engagement and learning. Methods: The study used a pre-test, post-test, randomized between-subjects design (N=59) with undergraduate nursing students. Students assessed an evolving stroke patient and completed post-simulation questions to evaluate engagement and sense of urgency. Knowledge tests were administered before and after the simulation to evaluate learning. Results: Participants were more engaged with the PVP condition, which provoked a higher sense of urgency. There was a significant change between the pre-simulation and post-simulation tests, which supported increased learning for the PVP compared to the mannequin. Discussion: This study demonstrated that increasing realism could increase engagement, which may result in a greater sense of urgency and learning. This PVP technology is a viable addition to mannequin-based simulation. Future work includes extending this technology to a full-body PVP. },

keywords = {},

pubstate = {published},

tppubtype = {conference}

}

Introduction: Simulation has revolutionized the way we teach and learn, and the pedagogy of simulation continues to mature. Mannequins have limitations such as their inability to exhibit emotions, idle movements, or interactive patient gaze. As a result, students struggle with suspension of disbelief and may be unable to relate to the “patient” authentically. Physical virtual patients (PVP) are a new type of simulator that combines the physicality of mannequins with the richness of dynamic imagery such as blinking, smiling, and other facial expressions. The purpose of this study was to compare a traditional mannequin with a more realistic PVP head. The concept under consideration is realism and its influence on engagement and learning. Methods: The study used a pre-test, post-test, randomized between-subjects design (N=59) with undergraduate nursing students. Students assessed an evolving stroke patient and completed post-simulation questions to evaluate engagement and sense of urgency. Knowledge tests were administered before and after the simulation to evaluate learning. Results: Participants were more engaged with the PVP condition, which provoked a higher sense of urgency. There was a significant change between the pre-simulation and post-simulation tests, which supported increased learning for the PVP compared to the mannequin. Discussion: This study demonstrated that increasing realism could increase engagement, which may result in a greater sense of urgency and learning. This PVP technology is a viable addition to mannequin-based simulation. Future work includes extending this technology to a full-body PVP. |

| Nahal Norouzi; Luke Bölling; Gerd Bruder; Gregory F. Welch Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement Journal Article In: Journal of Rehabilitation and Assistive Technologies Engineering, vol. 6, pp. 1-9, 2019. @article{Norouzi2019c,

title = {Augmented Rotations in Virtual Reality for Users with a Reduced Range of Head Movement},

author = {Nahal Norouzi and Luke Bölling and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/05/RATE2019_AugmentedRotations.pdf},

doi = {10.1177/2055668319841309},

year = {2019},

date = {2019-05-21},

journal = {Journal of Rehabilitation and Assistive Technologies Engineering},

volume = {6},

pages = {1-9},

abstract = {Introduction: A large body of research in the field of virtual reality (VR) is focused on making user interfaces more natural and intuitive by leveraging natural body movements to explore a virtual environment. For example, head-tracked user interfaces allow users to naturally look around a virtual space by moving their head. However, such approaches may not be appropriate for users with temporary or permanent limitations of their head movement.

Methods: In this paper, we present techniques that allow these users to get virtual benefits from a reduced range of physical movements. Specifically, we describe two techniques that augment virtual rotations relative to physical movement thresholds.

Results: We describe how each of the two techniques can be implemented with either a head tracker or an eye tracker, e.g., in cases when no physical head rotations are possible.

Conclusions: We discuss their differences and limitations and we provide guidelines for the practical use of such augmented user interfaces.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

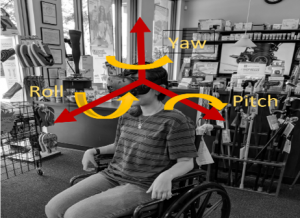

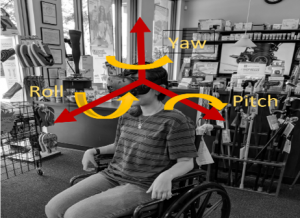

Introduction: A large body of research in the field of virtual reality (VR) is focused on making user interfaces more natural and intuitive by leveraging natural body movements to explore a virtual environment. For example, head-tracked user interfaces allow users to naturally look around a virtual space by moving their head. However, such approaches may not be appropriate for users with temporary or permanent limitations of their head movement.

Methods: In this paper, we present techniques that allow these users to get virtual benefits from a reduced range of physical movements. Specifically, we describe two techniques that augment virtual rotations relative to physical movement thresholds.

Results: We describe how each of the two techniques can be implemented with either a head tracker or an eye tracker, e.g., in cases when no physical head rotations are possible.

Conclusions: We discuss their differences and limitations and we provide guidelines for the practical use of such augmented user interfaces. |
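The abstract does not spell out the exact mappings, so the following is only a hypothetical sketch of the general idea of augmenting virtual rotation relative to a physical movement threshold: inside a comfortable range the virtual yaw follows the physical yaw one-to-one, and beyond the threshold the excess rotation is amplified by a gain. The threshold of 20°, the gain of 3, and the function name `augmented_yaw` are illustrative assumptions, not values or names from the paper.

```python
import math


def augmented_yaw(physical_yaw_deg: float,
                  threshold_deg: float = 20.0,  # hypothetical comfort threshold
                  gain: float = 3.0) -> float:   # hypothetical amplification gain
    """Map a physical yaw angle to a virtual yaw angle (piecewise-linear sketch).

    Within +/- threshold the mapping is one-to-one; beyond it, the rotation past
    the threshold is multiplied by `gain`, so a reduced physical range can cover
    a larger virtual range.
    """
    if abs(physical_yaw_deg) <= threshold_deg:
        return physical_yaw_deg
    excess = abs(physical_yaw_deg) - threshold_deg
    return math.copysign(threshold_deg + gain * excess, physical_yaw_deg)


for yaw in (-60.0, -30.0, 0.0, 10.0, 30.0, 60.0):
    print(f"physical {yaw:+6.1f} deg -> virtual {augmented_yaw(yaw):+7.1f} deg")
```

With these illustrative values, a physical range of ±60° maps to a virtual range of ±140°. Keeping the central region one-to-one preserves natural small head movements, while the amplified outer region reaches angles the user could not physically turn to. As the abstract notes, the input angle need not come from a head tracker; a horizontal gaze angle from an eye tracker could be fed into the same kind of mapping.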

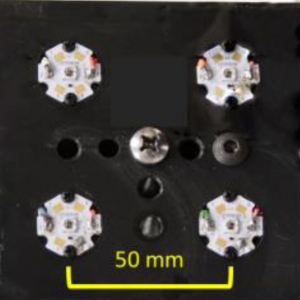

| Alex Blate; Mary Whitton; Montek Singh; Greg Welch; Andrei State; Turner Whitted; Henry Fuchs Implementation and Evaluation of a 50kHz, 28μs Motion-to-Pose Latency Head Tracking Instrument Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 25, no. 5, pp. 1970-1980, 2019, ISSN: 1077-2626. @article{Blate2019aa,

title = {Implementation and Evaluation of a 50kHz, 28μs Motion-to-Pose Latency Head Tracking Instrument},

author = {Alex Blate and Mary Whitton and Montek Singh and Greg Welch and Andrei State and Turner Whitted and Henry Fuchs},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/07/Blate2019aa.pdf},

doi = {10.1109/TVCG.2019.2899233},

issn = {1077-2626},

year = {2019},

date = {2019-05-01},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {25},

number = {5},

pages = {1970-1980},

abstract = {This paper presents the implementation and evaluation of a 50,000-pose-sample-per-second, 6-degree-of-freedom optical head tracking instrument with motion-to-pose latency of 28μs and dynamic precision of 1-2 arcminutes. The instrument uses high-intensity infrared emitters and two duo-lateral photodiode-based optical sensors to triangulate pose. This instrument serves two purposes: it is the first step towards the requisite head tracking component in sub-100μs motion-to-photon latency optical see-through augmented reality (OST AR) head-mounted display (HMD) systems; and it enables new avenues of research into human visual perception – including measuring the thresholds for perceptible real-virtual displacement during head rotation and other human research requiring high-sample-rate motion tracking. The instrument’s tracking volume is limited to about 120×120×250 mm but allows for the full range of natural head rotation and is sufficient for research involving seated users. We discuss how the instrument’s tracking volume is scalable in multiple ways and some of the trade-offs involved therein. Finally, we introduce a novel laser-pointer-based measurement technique for assessing the instrument’s tracking latency and repeatability. We show that the instrument’s motion-to-pose latency is 28μs and that it is repeatable within 1-2 arcminutes at mean rotational velocities (yaw) in excess of 500°/sec.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

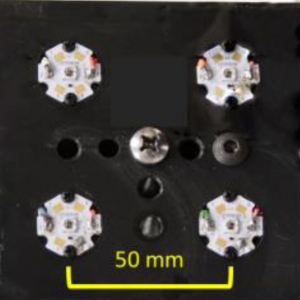

This paper presents the implementation and evaluation of a 50,000-pose-sample-per-second, 6-degree-of-freedom optical head tracking instrument with motion-to-pose latency of 28μs and dynamic precision of 1-2 arcminutes. The instrument uses high-intensity infrared emitters and two duo-lateral photodiode-based optical sensors to triangulate pose. This instrument serves two purposes: it is the first step towards the requisite head tracking component in sub-100μs motion-to-photon latency optical see-through augmented reality (OST AR) head-mounted display (HMD) systems; and it enables new avenues of research into human visual perception – including measuring the thresholds for perceptible real-virtual displacement during head rotation and other human research requiring high-sample-rate motion tracking. The instrument’s tracking volume is limited to about 120×120×250 mm but allows for the full range of natural head rotation and is sufficient for research involving seated users. We discuss how the instrument’s tracking volume is scalable in multiple ways and some of the trade-offs involved therein. Finally, we introduce a novel laser-pointer-based measurement technique for assessing the instrument’s tracking latency and repeatability. We show that the instrument’s motion-to-pose latency is 28μs and that it is repeatable within 1-2 arcminutes at mean rotational velocities (yaw) in excess of 500°/sec. |
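To give a feel for why microsecond-scale latency matters, the following back-of-the-envelope sketch converts the figures in the abstract into the angle the head sweeps during that latency, assuming a constant yaw rate (a simplification; real head motion accelerates and decelerates). It uses only numbers stated above: 50,000 samples per second, 28 μs motion-to-pose latency, a sub-100 μs motion-to-photon target (evaluated here at its 100 μs upper bound), and a 500°/s yaw rate. The helper names are illustrative.

```python
# Angle the head sweeps during a given latency, assuming a constant yaw rate.
def angular_lag_deg(yaw_rate_deg_per_s: float, latency_s: float) -> float:
    return yaw_rate_deg_per_s * latency_s


def deg_to_arcmin(deg: float) -> float:
    return deg * 60.0


SAMPLE_RATE_HZ = 50_000       # pose samples per second
MOTION_TO_POSE_S = 28e-6      # measured motion-to-pose latency
MOTION_TO_PHOTON_S = 100e-6   # upper bound of the sub-100 us motion-to-photon target
YAW_RATE_DEG_S = 500.0        # yaw velocity used in the evaluation

sample_period_us = 1e6 / SAMPLE_RATE_HZ
pose_lag_arcmin = deg_to_arcmin(angular_lag_deg(YAW_RATE_DEG_S, MOTION_TO_POSE_S))
photon_lag_arcmin = deg_to_arcmin(angular_lag_deg(YAW_RATE_DEG_S, MOTION_TO_PHOTON_S))

print(f"sample period: {sample_period_us:.1f} us")      # 20.0 us
print(f"lag at 28 us: {pose_lag_arcmin:.2f} arcmin")     # 0.84 arcmin
print(f"lag at 100 us: {photon_lag_arcmin:.2f} arcmin")  # 3.00 arcmin
```

At 500°/s, the 28 μs motion-to-pose latency corresponds to roughly 0.84 arcminutes of head rotation, the same order of magnitude as the reported 1-2 arcminute precision, while a full 100 μs motion-to-photon budget would correspond to about 3 arcminutes.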

| Mark Roman Miller; Hanseul Jun; Fernanda Herrera; Jacob Yu Villa; Greg Welch; Jeremy N Bailenson Social Interaction in Augmented Reality Journal Article In: PLOS ONE, vol. 14, no. 5, pp. 1-26, 2019. @article{Miller2019,

title = {Social Interaction in Augmented Reality},

author = {Mark Roman Miller and Hanseul Jun and Fernanda Herrera and Jacob Yu Villa and Greg Welch and Jeremy N Bailenson},

url = {https://doi.org/10.1371/journal.pone.0216290

https://sreal.ucf.edu/wp-content/uploads/2019/05/Miller2019.pdf},

doi = {10.1371/journal.pone.0216290},

year = {2019},

date = {2019-05-01},

journal = {PLOS ONE},

volume = {14},

number = {5},

pages = {1-26},

publisher = {Public Library of Science},

abstract = {There have been decades of research on the usability and educational value of augmented reality. However, less is known about how augmented reality affects social interactions. The current paper presents three studies that test the social psychological effects of augmented reality. Study 1 examined participants’ task performance in the presence of embodied agents and replicated the typical pattern of social facilitation and inhibition. Participants performed a simple task better, but a hard task worse, in the presence of an agent compared to when they completed the tasks alone. Study 2 examined nonverbal behavior. Participants met an agent sitting in one of two chairs and were asked to choose one of the chairs to sit on. Participants wearing the headset never sat directly on the agent when given the choice of two seats, and while approaching, most of the participants chose the rotation direction to avoid turning their heads away from the agent. A separate group of participants chose a seat after removing the augmented reality headset, and the majority still avoided the seat previously occupied by the agent. Study 3 examined the social costs of using an augmented reality headset with others who are not using a headset. Participants talked in dyads, and augmented reality users reported less social connection to their partner compared to those not using augmented reality. Overall, these studies provide evidence suggesting that task performance, nonverbal behavior, and social connectedness are significantly affected by the presence or absence of virtual content.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

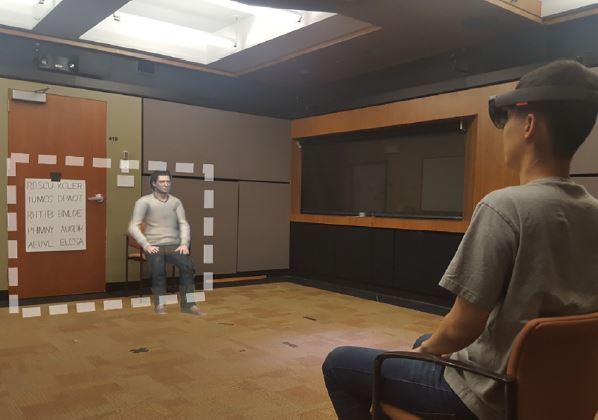

There have been decades of research on the usability and educational value of augmented reality. However, less is known about how augmented reality affects social interactions. The current paper presents three studies that test the social psychological effects of augmented reality. Study 1 examined participants’ task performance in the presence of embodied agents and replicated the typical pattern of social facilitation and inhibition. Participants performed a simple task better, but a hard task worse, in the presence of an agent compared to when they completed the tasks alone. Study 2 examined nonverbal behavior. Participants met an agent sitting in one of two chairs and were asked to choose one of the chairs to sit on. Participants wearing the headset never sat directly on the agent when given the choice of two seats, and while approaching, most of the participants chose the rotation direction to avoid turning their heads away from the agent. A separate group of participants chose a seat after removing the augmented reality headset, and the majority still avoided the seat previously occupied by the agent. Study 3 examined the social costs of using an augmented reality headset with others who are not using a headset. Participants talked in dyads, and augmented reality users reported less social connection to their partner compared to those not using augmented reality. Overall, these studies provide evidence suggesting that task performance, nonverbal behavior, and social connectedness are significantly affected by the presence or absence of virtual content. |

![[POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents](https://sreal.ucf.edu/wp-content/uploads/2019/03/ieeevr_poster_thumbnail-1.png) | Salam Daher; Jason Hochreiter; Nahal Norouzi; Ryan Schubert; Gerd Bruder; Laura Gonzalez; Mindi Anderson; Desiree Diaz; Juan Cendan; Greg Welch [POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), 2019, 2019. @inproceedings{daher2019matching,

title = {[POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents},

author = {Salam Daher and Jason Hochreiter and Nahal Norouzi and Ryan Schubert and Gerd Bruder and Laura Gonzalez and Mindi Anderson and Desiree Diaz and Juan Cendan and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/03/IEEEVR2019_Poster_PVChildStudy.pdf},

year = {2019},

date = {2019-03-27},

booktitle = {Proceedings of IEEE Virtual Reality (VR), 2019},

abstract = {Embodied virtual agents serving as patient simulators are widely used in medical training scenarios, ranging from physical patients to virtual patients presented via virtual and augmented reality technologies. Physical-virtual patients are a hybrid solution that combines the benefits of dynamic visuals integrated into a human-shaped physical form that can also present other cues, such as pulse, breathing sounds, and temperature. Sometimes in simulation the visuals and shape do not match. We carried out a human-participant study employing graduate nursing students in pediatric patient simulations comprising conditions associated with matching/non-matching of the visuals and shape.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

Embodied virtual agents serving as patient simulators are widely used in medical training scenarios, ranging from physical patients to virtual patients presented via virtual and augmented reality technologies. Physical-virtual patients are a hybrid solution that combines the benefits of dynamic visuals integrated into a human-shaped physical form that can also present other cues, such as pulse, breathing sounds, and temperature. Sometimes in simulation the visuals and shape do not match. We carried out a human-participant study employing graduate nursing students in pediatric patient simulations comprising conditions associated with matching/non-matching of the visuals and shape. |

![[POSTER] Matching vs. Non-Matching Visuals and Shape for Embodied Virtual Healthcare Agents](https://sreal.ucf.edu/wp-content/uploads/2019/03/ieeevr_poster_thumbnail-1.png)