2019

|

| Myungho Lee; Nahal Norouzi; Gerd Bruder; Pamela J. Wisniewski; Gregory F. Welch Mixed Reality Tabletop Gameplay: Social Interaction with a Virtual Human Capable of Physical Influence Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 24, no. 8, pp. 1-12, 2019, ISSN: 1077-2626. @article{Lee2020,

title = {Mixed Reality Tabletop Gameplay: Social Interaction with a Virtual Human Capable of Physical Influence},

author = {Myungho Lee and Nahal Norouzi and Gerd Bruder and Pamela J. Wisniewski and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/12/TVCG_Physical_Virtual_Table_2019.pdf},

doi = {10.1109/TVCG.2019.2959575},

issn = {1077-2626},

year = {2019},

date = {2019-12-18},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {24},

number = {8},

pages = {1-12},

abstract = {In this paper, we investigate the effects of the physical influence of a virtual human (VH) in the context of face-to-face interaction in a mixed reality environment. In Experiment 1, participants played a tabletop game with a VH, in which each player takes a turn and moves their own token along the designated spots on the shared table. We compared two conditions as follows: the VH in the virtual condition moves a virtual token that can only be seen through augmented reality (AR) glasses, while the VH in the physical condition moves a physical token as the participants do; therefore the VH’s token can be seen even in the periphery of the AR glasses. For the physical condition, we designed an actuator system underneath the table. The actuator moves a magnet under the table which then moves the VH’s physical token over the surface of the table. Our results indicate that participants felt higher co-presence with the VH in the physical condition, and participants assessed the VH as a more physical entity compared to the VH in the virtual condition. We further observed transference effects when participants attributed the VH’s ability to move physical objects to other elements in the real world. Also, the VH’s physical influence improved participants’ overall experience with the VH. In Experiment 2, we further looked into the question of how the physical-virtual latency in movements affected the perceived plausibility of the VH’s interaction with the real world. Our results indicate that a slight temporal difference between the virtual hand’s movement and the physical token’s reaction increased the perceived realism and causality of the mixed reality interaction. We discuss potential explanations for the findings and implications for future shared mixed reality tabletop setups.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

| Kendra Richards; Nikhil Mahalanobis; Kangsoo Kim; Ryan Schubert; Myungho Lee; Salam Daher; Nahal Norouzi; Jason Hochreiter; Gerd Bruder; Gregory F. Welch Analysis of Peripheral Vision and Vibrotactile Feedback During Proximal Search Tasks with Dynamic Virtual Entities in Augmented Reality Proceedings Article In: Proceedings of the ACM Symposium on Spatial User Interaction (SUI), pp. 3:1-3:9, ACM, 2019, ISBN: 978-1-4503-6975-6/19/10. @inproceedings{Richards2019b,

title = {Analysis of Peripheral Vision and Vibrotactile Feedback During Proximal Search Tasks with Dynamic Virtual Entities in Augmented Reality},

author = {Kendra Richards and Nikhil Mahalanobis and Kangsoo Kim and Ryan Schubert and Myungho Lee and Salam Daher and Nahal Norouzi and Jason Hochreiter and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Richards2019b.pdf},

doi = {10.1145/3357251.3357585},

isbn = {978-1-4503-6975-6/19/10},

year = {2019},

date = {2019-10-19},

booktitle = {Proceedings of the ACM Symposium on Spatial User Interaction (SUI)},

pages = {3:1-3:9},

publisher = {ACM},

abstract = {A primary goal of augmented reality (AR) is to seamlessly embed virtual content into a real environment. There are many factors that can affect the perceived physicality and co-presence of virtual entities, including the hardware capabilities, the fidelity of the virtual behaviors, and sensory feedback associated with the interactions. In this paper, we present a study investigating participants' perceptions and behaviors during a time-limited search task in close proximity with virtual entities in AR. In particular, we analyze the effects of (i) visual conflicts in the periphery of an optical see-through head-mounted display, a Microsoft HoloLens, (ii) overall lighting in the physical environment, and (iii) multimodal feedback based on vibrotactile transducers mounted on a physical platform. Our results show significant benefits of vibrotactile feedback and reduced peripheral lighting for spatial and social presence, and engagement. We discuss implications of these effects for AR applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Nahal Norouzi; Kangsoo Kim; Myungho Lee; Ryan Schubert; Austin Erickson; Jeremy Bailenson; Gerd Bruder; Greg Welch Walking Your Virtual Dog: Analysis of Awareness and Proxemics with Simulated Support Animals in Augmented Reality Proceedings Article In: Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), 2019, pp. 253-264, IEEE, 2019, ISBN: 978-1-7281-4765-9. @inproceedings{Norouzi2019cb,

title = {Walking Your Virtual Dog: Analysis of Awareness and Proxemics with Simulated Support Animals in Augmented Reality},

author = {Nahal Norouzi and Kangsoo Kim and Myungho Lee and Ryan Schubert and Austin Erickson and Jeremy Bailenson and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Final__AR_Animal_ISMAR.pdf},

doi = {10.1109/ISMAR.2019.00040},

isbn = {978-1-7281-4765-9},

year = {2019},

date = {2019-10-16},

urldate = {2019-10-16},

booktitle = {Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), 2019},

pages = {253-264},

publisher = {IEEE},

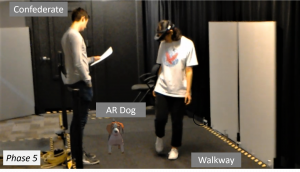

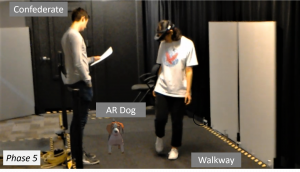

abstract = {Domestic animals have a long history of enriching human lives physically and mentally by filling a variety of different roles, such as service animals, emotional support animals, companions, and pets. Despite this, technological realizations of such animals in augmented reality (AR) are largely underexplored in terms of their behavior and interactions as well as effects they might have on human users' perception or behavior. In this paper, we describe a simulated virtual companion animal, in the form of a dog, in a shared AR space. We investigated its effects on participants' perception and behavior, including locomotion related to proxemics, with respect to their AR dog and other real people in the environment. We conducted a 2 by 2 mixed factorial human-subject study, in which we varied (i) the AR dog's awareness and behavior with respect to other people in the physical environment and (ii) the awareness and behavior of those people with respect to the AR dog. Our results show that having an AR companion dog changes participants' locomotion behavior, proxemics, and social interaction with other people who can or can not see the AR dog. We also show that the AR dog's simulated awareness and behaviors have an impact on participants' perception, including co-presence, animalism, perceived physicality, and dog's perceived awareness of the participant and environment. We discuss our findings and present insights and implications for the realization of effective AR animal companions.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Myungho Lee Mediated Physicality: Inducing Illusory Physicality of Virtual Humans via Their Interactions with Physical Objects PhD Thesis University of Central Florida, 2019. @phdthesis{Lee2019,

title = {Mediated Physicality: Inducing Illusory Physicality of Virtual Humans via Their Interactions with Physical Objects},

author = {Myungho Lee},

url = {https://stars.library.ucf.edu/cgi/viewcontent.cgi?article=7295&context=etd

https://sreal.ucf.edu/wp-content/uploads/2019/06/Lee-2019-Dissertation.pdf},

year = {2019},

date = {2019-04-02},

school = {University of Central Florida},

abstract = {The term virtual human (VH) generally refers to a human-like entity comprised of computer graphics and/or a physical body. In the associated research literature, a VH can be further classified as an avatar (a human-controlled VH) or an agent (a computer-controlled VH). Because of the resemblance with humans, people naturally distinguish them from non-human objects, and often treat them in ways similar to real humans. Sometimes people develop a sense of co-presence or social presence with the VH, a phenomenon that is often exploited for training simulations where the VH assumes the role of a human.

Prior research associated with VHs has primarily focused on the realism of various visual traits, e.g., appearance, shape, and gestures. However, our sense of the presence of other humans is also affected by other physical sensations conveyed through nearby space or physical objects. For example, we humans can perceive the presence of other individuals via the sound or tactile sensation of approaching footsteps, or by the presence of complementary or opposing forces when carrying a physical box with another person.

In my research, I exploit the fact that these sensations, when correlated with events in the shared space, affect one’s feeling of social/co-presence with another person. In this dissertation, I introduce novel methods for utilizing direct and indirect physical-virtual interactions with VHs to increase the sense of social/co-presence with the VHs, an approach I refer to as mediated physicality. I present results from controlled user studies, in various virtual environment settings, that support the idea that mediated physicality can increase a user’s sense of social/co-presence with the VH, and/or induce realistic social behavior. I discuss relationships to prior research, possible explanations for my findings, and areas for future research.},

keywords = {},

pubstate = {published},

tppubtype = {phdthesis}

}

|

2018

|

| Myungho Lee; Nahal Norouzi; Gerd Bruder; Pamela J. Wisniewski; Gregory F. Welch The Physical-virtual Table: Exploring the Effects of a Virtual Human's Physical Influence on Social Interaction Proceedings Article In: Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology, pp. 25:1–25:11, ACM, New York, NY, USA, 2018, ISBN: 978-1-4503-6086-9, (Best Paper Award). @inproceedings{Lee2018ac,

title = {The Physical-virtual Table: Exploring the Effects of a Virtual Human's Physical Influence on Social Interaction},

author = {Myungho Lee and Nahal Norouzi and Gerd Bruder and Pamela J. Wisniewski and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/Lee2018ab.pdf},

doi = {10.1145/3281505.3281533},

isbn = {978-1-4503-6086-9},

year = {2018},

date = {2018-11-28},

booktitle = {Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology},

journal = {Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology},

pages = {25:1--25:11},

publisher = {ACM},

address = {New York, NY, USA},

series = {VRST '18},

abstract = {In this paper, we investigate the effects of the physical influence of a virtual human (VH) in the context of face-to-face interaction in augmented reality (AR). In our study, participants played a tabletop game with a VH, in which each player takes a turn and moves their own token along the designated spots on the shared table. We compared two conditions as follows: the VH in the virtual condition moves a virtual token that can only be seen through AR glasses, while the VH in the physical condition moves a physical token as the participants do; therefore the VH’s token can be seen even in the periphery of the AR glasses. For the physical condition, we designed an actuator system underneath the table. The actuator moves a magnet under the table which then moves the VH’s physical token over the surface of the table. Our results indicate that participants felt higher co-presence with the VH in the physical condition, and participants assessed the VH as a more physical entity compared to the VH in the virtual condition. We further observed transference effects when participants attributed the VH’s ability to move physical objects to other elements in the real world. Also, the VH’s physical influence improved participants’ overall experience with the VH. We discuss potential explanations for the findings and implications for future shared AR tabletop setups.},

note = {Best Paper Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|