2020

|

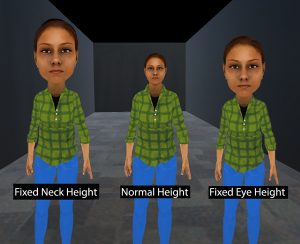

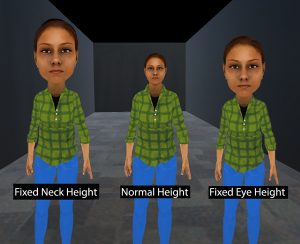

Zubin Choudhary; Kangsoo Kim; Ryan Schubert; Gerd Bruder; Gregory F. Welch. Virtual Big Heads: Analysis of Human Perception and Comfort of Head Scales in Social Virtual Reality. Proceedings Article. In: Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR), pp. 425-433, Atlanta, Georgia, 2020.

@inproceedings{Choudhary2020vbh,

title = {Virtual Big Heads: Analysis of Human Perception and Comfort of Head Scales in Social Virtual Reality},

author = {Zubin Choudhary and Kangsoo Kim and Ryan Schubert and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/02/IEEEVR2020_BigHead.pdf

https://www.youtube.com/watch?v=14289nufYf0, YouTube Presentation},

doi = {10.1109/VR46266.2020.00-41},

year = {2020},

date = {2020-03-23},

booktitle = {Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR)},

pages = {425-433},

address = {Atlanta, Georgia},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

Austin Erickson; Kangsoo Kim; Gerd Bruder; Greg Welch. Effects of Dark Mode Graphics on Visual Acuity and Fatigue with Virtual Reality Head-Mounted Displays. Proceedings Article. In: Proceedings of IEEE International Conference on Virtual Reality and 3D User Interfaces (IEEE VR), pp. 434-442, Atlanta, Georgia, 2020.

@inproceedings{Erickson2020,

title = {Effects of Dark Mode Graphics on Visual Acuity and Fatigue with Virtual Reality Head-Mounted Displays},

author = {Austin Erickson and Kangsoo Kim and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/02/VR2020_DarkMode2_0.pdf

https://www.youtube.com/watch?v=wePUk0xTLA0&t=5s, YouTube Presentation},

doi = {10.1109/VR46266.2020.00-40},

year = {2020},

date = {2020-03-23},

urldate = {2020-03-23},

booktitle = {Proceedings of IEEE International Conference on Virtual Reality and 3D User Interfaces (IEEE VR)},

pages = {434-442},

address = {Atlanta, Georgia},

abstract = {Current virtual reality (VR) head-mounted displays (HMDs) are characterized by a low angular resolution that makes it difficult to make out details, leading to reduced legibility of text and increased visual fatigue. Light-on-dark graphics modes, so-called ``dark mode'' graphics, are becoming more and more popular over a wide range of display technologies, and have been correlated with increased visual comfort and acuity, specifically when working in low-light environments, which suggests that they might provide significant advantages for VR HMDs.

In this paper, we present a human-subject study investigating the correlations between the color mode and the ambient lighting with respect to visual acuity and fatigue on VR HMDs.

We compare two color schemes, characterized by light letters on a dark background (dark mode), or dark letters on a light background (light mode), and show that the dark background in dark mode provides a significant advantage in terms of reduced visual fatigue and increased visual acuity in dim virtual environments on current HMDs. Based on our results, we discuss guidelines for user interfaces and applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}


![[Tutorial] Developing Embodied Interactive Virtual Characters for Human-Subjects Studies](https://sreal.ucf.edu/wp-content/uploads/2020/04/IEEEVR2020Tutorial-300x176.png)

Kangsoo Kim; Nahal Norouzi; Austin Erickson. [Tutorial] Developing Embodied Interactive Virtual Characters for Human-Subjects Studies. Presentation, 22.03.2020.

@misc{Kim2020dei,

title = {[Tutorial] Developing Embodied Interactive Virtual Characters for Human-Subjects Studies},

author = {Kangsoo Kim and Nahal Norouzi and Austin Erickson},

url = {https://www.youtube.com/watch?v=UgT_-LVrQlc&list=PLMvKdHzC3SyacMfUj3qqd-pIjKmjtmwnz

https://sreal.ucf.edu/ieee-vr-2020-tutorial-developing-embodied-interactive-virtual-characters-for-human-subjects-studies/},

year = {2020},

date = {2020-03-22},

urldate = {2020-03-22},

booktitle = {IEEE International Conference on Virtual Reality and 3D User Interfaces (IEEE VR)},

keywords = {},

pubstate = {published},

tppubtype = {presentation}

}

|

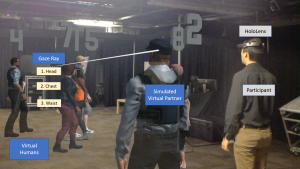

| Austin Erickson; Nahal Norouzi; Kangsoo Kim; Joseph J. LaViola Jr.; Gerd Bruder; Gregory F. Welch Effects of Depth Information on Visual Target Identification Task Performance in Shared Gaze Environments Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 26, no. 5, pp. 1934-1944, 2020, ISSN: 1077-2626, (Presented at IEEE VR 2020). @article{Erickson2020c,

title = {Effects of Depth Information on Visual Target Identification Task Performance in Shared Gaze Environments},

author = {Austin Erickson and Nahal Norouzi and Kangsoo Kim and Joseph J. LaViola Jr. and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2020/02/shared_gaze_2_FINAL.pdf

https://www.youtube.com/watch?v=JQO_iosY62Y&t=6s, YouTube Presentation},

doi = {10.1109/TVCG.2020.2973054},

issn = {1077-2626},

year = {2020},

date = {2020-02-13},

urldate = {2020-02-13},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {26},

number = {5},

pages = {1934-1944},

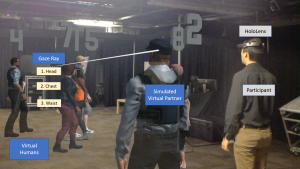

abstract = {Human gaze awareness is important for social and collaborative interactions. Recent technological advances in augmented reality (AR) displays and sensors provide us with the means to extend collaborative spaces with real-time dynamic AR indicators of one's gaze, for example via three-dimensional cursors or rays emanating from a partner's head. However, such gaze cues are only as useful as the quality of the underlying gaze estimation and the accuracy of the display mechanism. Depending on the type of the visualization, and the characteristics of the errors, AR gaze cues could either enhance or interfere with collaborations. In this paper, we present two human-subject studies in which we investigate the influence of angular and depth errors, target distance, and the type of gaze visualization on participants' performance and subjective evaluation during a collaborative task with a virtual human partner, where participants identified targets within a dynamically walking crowd. First, our results show that there is a significant difference in performance between the two gaze visualizations, ray and cursor, in conditions with simulated angular and depth errors: the ray visualization provided significantly faster response times and fewer errors compared to the cursor visualization. Second, our results show that under optimal conditions, among four different gaze visualization methods, a ray without depth information provides the worst performance and is rated lowest, while a combination of a ray and cursor with depth information is rated highest. We discuss the subjective and objective performance thresholds and provide guidelines for practitioners in this field.},

note = {Presented at IEEE VR 2020},

keywords = {},

pubstate = {published},

tppubtype = {article}

}


2019

|

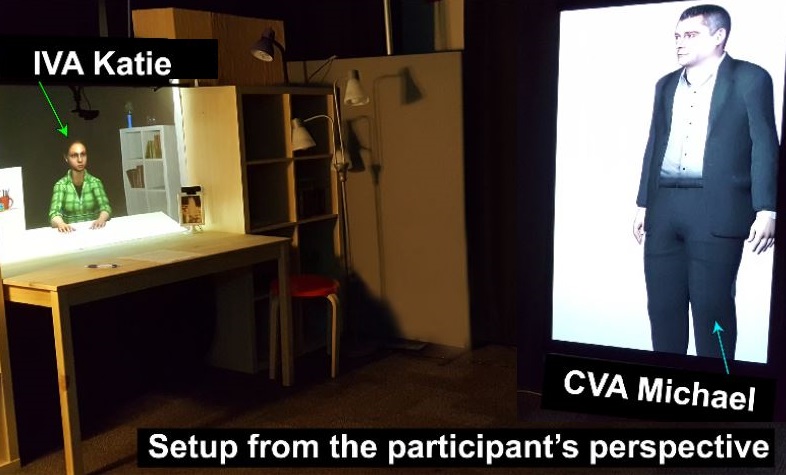

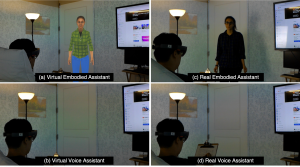

| Kangsoo Kim; Nahal Norouzi; Tiffany Losekamp; Gerd Bruder; Mindi Anderson; Gregory Welch Effects of Patient Care Assistant Embodiment and Computer Mediation on User Experience Proceedings Article In: Proceedings of the IEEE International Conference on Artificial Intelligence & Virtual Reality (AIVR), pp. 17-24, IEEE, 2019. @inproceedings{Kim2019epc,

title = {Effects of Patient Care Assistant Embodiment and Computer Mediation on User Experience},

author = {Kangsoo Kim and Nahal Norouzi and Tiffany Losekamp and Gerd Bruder and Mindi Anderson and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/11/AIVR2019_Caregiver.pdf},

doi = {10.1109/AIVR46125.2019.00013},

year = {2019},

date = {2019-12-09},

booktitle = {Proceedings of the IEEE International Conference on Artificial Intelligence \& Virtual Reality (AIVR)},

pages = {17-24},

publisher = {IEEE},

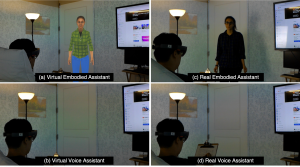

abstract = {Providers of patient care environments are facing an increasing demand for technological solutions that can facilitate increased patient satisfaction while being cost effective and practically feasible. Recent developments with respect to smart hospital room setups and smart home care environments have an immense potential to leverage advances in technologies such as Intelligent Virtual Agents, Internet of Things devices, and Augmented Reality to enable novel forms of patient interaction with caregivers and their environment.

In this paper, we present a human-subjects study in which we compared four types of simulated patient care environments for a range of typical tasks. In particular, we tested two forms of caregiver mediation with a real person or a virtual agent, and we compared two forms of caregiver embodiment with disembodied verbal or embodied interaction. Our results show that, as expected, a real caregiver provides the optimal user experience but an embodied virtual assistant is also a viable option for patient care environments, providing significantly higher social presence and engagement than voice-only interaction. We discuss the implications in the field of patient care and digital assistants.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}


Austin Erickson; Ryan Schubert; Kangsoo Kim; Gerd Bruder; Greg Welch. Is It Cold in Here or Is It Just Me? Analysis of Augmented Reality Temperature Visualization for Computer-Mediated Thermoception. Proceedings Article. In: Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), pp. 319-327, IEEE, 2019, ISBN: 978-1-7281-4765-9.

@inproceedings{Erickson2019iic,

title = {Is It Cold in Here or Is It Just Me? Analysis of Augmented Reality Temperature Visualization for Computer-Mediated Thermoception},

author = {Austin Erickson and Ryan Schubert and Kangsoo Kim and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Erickson2019IIC.pdf},

doi = {10.1109/ISMAR.2019.00046},

isbn = {978-1-7281-4765-9},

year = {2019},

date = {2019-10-19},

urldate = {2019-10-19},

booktitle = {Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR)},

pages = {319-327},

publisher = {IEEE},

abstract = {Modern augmented reality (AR) head-mounted displays comprise a multitude of sensors that allow them to sense the environment around them. We have extended these capabilities by mounting two heat-wavelength infrared cameras to a Microsoft HoloLens, facilitating the acquisition of thermal data and enabling stereoscopic thermal overlays in the user’s augmented view. The ability to visualize live thermal information opens several avenues of investigation on how that thermal awareness may affect a user’s thermoception. We present a human-subject study, in which we simulated different temperature shifts using either heat vision overlays or 3D AR virtual effects associated with thermal cause-effect relationships (e.g., flames burn and ice cools). We further investigated differences in estimated temperatures when the stimuli were applied to either the user’s body or their environment. Our analysis showed significant effects and first trends for the AR virtual effects and heat vision, respectively, on participants’ temperature estimates for their body and the environment though with different strengths and characteristics, which we discuss in this paper.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}


Kendra Richards; Nikhil Mahalanobis; Kangsoo Kim; Ryan Schubert; Myungho Lee; Salam Daher; Nahal Norouzi; Jason Hochreiter; Gerd Bruder; Gregory F. Welch. Analysis of Peripheral Vision and Vibrotactile Feedback During Proximal Search Tasks with Dynamic Virtual Entities in Augmented Reality. Proceedings Article. In: Proceedings of the ACM Symposium on Spatial User Interaction (SUI), pp. 3:1-3:9, ACM, 2019, ISBN: 978-1-4503-6975-6/19/10.

@inproceedings{Richards2019b,

title = {Analysis of Peripheral Vision and Vibrotactile Feedback During Proximal Search Tasks with Dynamic Virtual Entities in Augmented Reality},

author = {Kendra Richards and Nikhil Mahalanobis and Kangsoo Kim and Ryan Schubert and Myungho Lee and Salam Daher and Nahal Norouzi and Jason Hochreiter and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Richards2019b.pdf},

doi = {10.1145/3357251.3357585},

isbn = {978-1-4503-6975-6/19/10},

year = {2019},

date = {2019-10-19},

booktitle = {Proceedings of the ACM Symposium on Spatial User Interaction (SUI)},

pages = {3:1-3:9},

publisher = {ACM},

abstract = {A primary goal of augmented reality (AR) is to seamlessly embed virtual content into a real environment. There are many factors that can affect the perceived physicality and co-presence of virtual entities, including the hardware capabilities, the fidelity of the virtual behaviors, and sensory feedback associated with the interactions. In this paper, we present a study investigating participants' perceptions and behaviors during a time-limited search task in close proximity with virtual entities in AR. In particular, we analyze the effects of (i) visual conflicts in the periphery of an optical see-through head-mounted display, a Microsoft HoloLens, (ii) overall lighting in the physical environment, and (iii) multimodal feedback based on vibrotactile transducers mounted on a physical platform. Our results show significant benefits of vibrotactile feedback and reduced peripheral lighting for spatial and social presence, and engagement. We discuss implications of these effects for AR applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}


Nahal Norouzi; Austin Erickson; Kangsoo Kim; Ryan Schubert; Joseph J. LaViola Jr.; Gerd Bruder; Gregory F. Welch. Effects of Shared Gaze Parameters on Visual Target Identification Task Performance in Augmented Reality. Proceedings Article. In: Proceedings of the ACM Symposium on Spatial User Interaction (SUI), pp. 12:1-12:11, ACM, 2019, ISBN: 978-1-4503-6975-6/19/10 (Best Paper Award).

@inproceedings{Norouzi2019esg,

title = {Effects of Shared Gaze Parameters on Visual Target Identification Task Performance in Augmented Reality},

author = {Nahal Norouzi and Austin Erickson and Kangsoo Kim and Ryan Schubert and Joseph J. LaViola Jr. and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/a12-norouzi.pdf},

doi = {10.1145/3357251.3357587},

isbn = {978-1-4503-6975-6/19/10},

year = {2019},

date = {2019-10-19},

urldate = {2019-10-19},

booktitle = {Proceedings of the ACM Symposium on Spatial User Interaction (SUI)},

pages = {12:1-12:11},

publisher = {ACM},

abstract = {Augmented reality (AR) technologies provide a shared platform for users to collaborate in a physical context involving both real and virtual content. To enhance the quality of interaction between AR users, researchers have proposed augmenting users' interpersonal space with embodied cues such as their gaze direction. While beneficial in achieving improved interpersonal spatial communication, such shared gaze environments suffer from multiple types of errors related to eye tracking and networking, which can reduce objective performance and subjective experience.

In this paper, we conducted a human-subject study to understand the impact of accuracy, precision, latency, and dropout-based errors on users' performance when using shared gaze cues to identify a target among a crowd of people. We simulated varying amounts of errors and the target distances and measured participants' objective performance through their response time and error rate, and their subjective experience and cognitive load through questionnaires. We found some significant differences suggesting that the simulated error levels had stronger effects on participants' performance than target distance, with accuracy and latency having a high impact on participants' error rate. We also observed that participants assessed their own performance as lower than it objectively was, and we discuss implications for practical shared gaze applications.},

note = {Best Paper Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}


Kangsoo Kim; Austin Erickson; Alexis Lambert; Gerd Bruder; Gregory F. Welch. Effects of Dark Mode on Visual Fatigue and Acuity in Optical See-Through Head-Mounted Displays. Proceedings Article. In: Proceedings of the ACM Symposium on Spatial User Interaction (SUI), pp. 9:1-9:9, ACM, 2019, ISBN: 978-1-4503-6975-6/19/10.

@inproceedings{Kim2019edm,

title = {Effects of Dark Mode on Visual Fatigue and Acuity in Optical See-Through Head-Mounted Displays},

author = {Kangsoo Kim and Austin Erickson and Alexis Lambert and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Kim2019edm.pdf},

doi = {10.1145/3357251.3357584},

isbn = {978-1-4503-6975-6/19/10},

year = {2019},

date = {2019-10-19},

urldate = {2019-10-19},

booktitle = {Proceedings of the ACM Symposium on Spatial User Interaction (SUI)},

pages = {9:1-9:9},

publisher = {ACM},

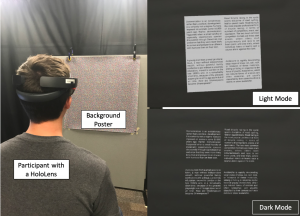

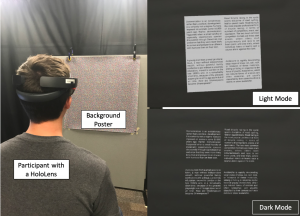

abstract = {Light-on-dark color schemes, so-called "Dark Mode," are becoming more and more popular over a wide range of display technologies and application fields. Many people who have to look at computer screens for hours at a time, such as computer programmers and computer graphics artists, indicate a preference for switching colors on a computer screen from dark text on a light background to light text on a dark background due to perceived advantages related to visual comfort and acuity, specifically when working in low-light environments.

In this paper, we investigate the effects of dark mode color schemes in the field of optical see-through head-mounted displays (OST-HMDs), where the characteristic "additive" light model implies that bright graphics are visible but dark graphics are transparent. We describe a human-subject study in which we evaluated a normal and inverted color mode in front of different physical backgrounds and under different lighting conditions. Our results show that dark mode graphics on OST-HMDs have significant benefits for visual acuity, fatigue, and usability, while user preferences depend largely on the lighting in the physical environment. We discuss the implications of these effects on user interfaces and applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}


Nahal Norouzi; Kangsoo Kim; Myungho Lee; Ryan Schubert; Austin Erickson; Jeremy Bailenson; Gerd Bruder; Greg Welch. Walking Your Virtual Dog: Analysis of Awareness and Proxemics with Simulated Support Animals in Augmented Reality. Proceedings Article. In: Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), 2019, pp. 253-264, IEEE, 2019, ISBN: 978-1-7281-4765-9.

@inproceedings{Norouzi2019cb,

title = {Walking Your Virtual Dog: Analysis of Awareness and Proxemics with Simulated Support Animals in Augmented Reality},

author = {Nahal Norouzi and Kangsoo Kim and Myungho Lee and Ryan Schubert and Austin Erickson and Jeremy Bailenson and Gerd Bruder and Greg Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/10/Final__AR_Animal_ISMAR.pdf},

doi = {10.1109/ISMAR.2019.00040},

isbn = {978-1-7281-4765-9},

year = {2019},

date = {2019-10-16},

urldate = {2019-10-16},

booktitle = {Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR), 2019},

pages = {253-264},

publisher = {IEEE},

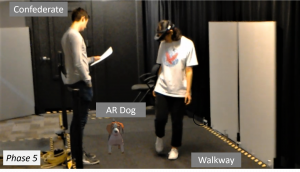

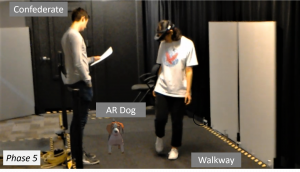

abstract = {Domestic animals have a long history of enriching human lives physically and mentally by filling a variety of different roles, such as service animals, emotional support animals, companions, and pets. Despite this, technological realizations of such animals in augmented reality (AR) are largely underexplored in terms of their behavior and interactions as well as effects they might have on human users' perception or behavior. In this paper, we describe a simulated virtual companion animal, in the form of a dog, in a shared AR space. We investigated its effects on participants' perception and behavior, including locomotion related to proxemics, with respect to their AR dog and other real people in the environment. We conducted a 2 by 2 mixed factorial human-subject study, in which we varied (i) the AR dog's awareness and behavior with respect to other people in the physical environment and (ii) the awareness and behavior of those people with respect to the AR dog. Our results show that having an AR companion dog changes participants' locomotion behavior, proxemics, and social interaction with other people who can or cannot see the AR dog. We also show that the AR dog's simulated awareness and behaviors have an impact on participants' perception, including co-presence, animalism, perceived physicality, and dog's perceived awareness of the participant and environment. We discuss our findings and present insights and implications for the realization of effective AR animal companions.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

Domestic animals have a long history of enriching human lives physically and mentally by filling a variety of different roles, such as service animals, emotional support animals, companions, and pets. Despite this, technological realizations of such animals in augmented reality (AR) are largely underexplored in terms of their behavior and interactions as well as effects they might have on human users' perception or behavior. In this paper, we describe a simulated virtual companion animal, in the form of a dog, in a shared AR space. We investigated its effects on participants' perception and behavior, including locomotion related to proxemics, with respect to their AR dog and other real people in the environment. We conducted a 2 by 2 mixed factorial human-subject study, in which we varied (i) the AR dog's awareness and behavior with respect to other people in the physical environment and (ii) the awareness and behavior of those people with respect to the AR dog. Our results show that having an AR companion dog changes participants' locomotion behavior, proxemics, and social interaction with other people who can or can not see the AR dog. We also show that the AR dog's simulated awareness and behaviors have an impact on participants' perception, including co-presence, animalism, perceived physicality, and dog's perceived awareness of the participant and environment. We discuss our findings and present insights and implications for the realization of effective AR animal companions. |

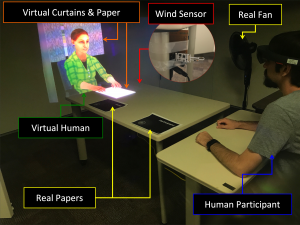

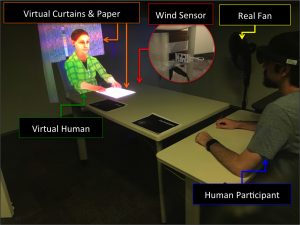

Kangsoo Kim; Ryan Schubert; Jason Hochreiter; Gerd Bruder; Gregory Welch. Blowing in the Wind: Increasing Social Presence with a Virtual Human via Environmental Airflow Interaction in Mixed Reality. Journal Article. In: Elsevier Computers and Graphics, vol. 83, pp. 23-32, October 2019.

@article{Kim2019blow,

title = {Blowing in the Wind: Increasing Social Presence with a Virtual Human via Environmental Airflow Interaction in Mixed Reality},

author = {Kangsoo Kim and Ryan Schubert and Jason Hochreiter and Gerd Bruder and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2019/06/ELSEVIER_C_G2019_Special_BlowWindinMR_ICAT_EGVE2018_20190606_reduced.pdf},

doi = {10.1016/j.cag.2019.06.006},

year = {2019},

date = {2019-07-05},

journal = {Elsevier Computers and Graphics},

volume = {83},

month = {October},

pages = {23-32},

abstract = {In this paper, we describe two human-subject studies in which we explored and investigated the effects of subtle multimodal interaction on social presence with a virtual human (VH) in mixed reality (MR). In the studies, participants interacted with a VH, which was co-located with them across a table, with two different platforms: a projection based MR environment and an optical see-through head-mounted display (OST-HMD) based MR environment. While the two studies were not intended to be directly comparable, the second study with an OST-HMD was carefully designed based on the insights and lessons learned from the first projection-based study. For both studies, we compared two levels of gradually increased multimodal interaction: (i) virtual objects being affected by real airflow (e.g., as commonly experienced with fans during warm weather), and (ii) a VH showing awareness of this airflow. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher social presence with airflow influence than without it, and the social presence would be even higher when the VH showed awareness of the airflow. We observed an increased social presence in the second study when both physical–virtual interaction via airflow and VH awareness behaviors were present, but we observed no clear difference in participant-reported social presence with the VH in the first study. As the considered environmental factors are incidental to the direct interaction with the real human, i.e., they are not significant or necessary for the interaction task, they can provide a reasonably generalizable approach to increase social presence in HMD-based MR environments beyond the specific scenario and environment described here.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

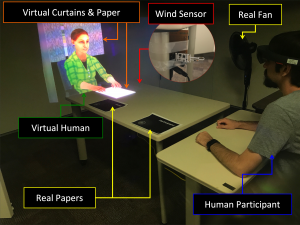

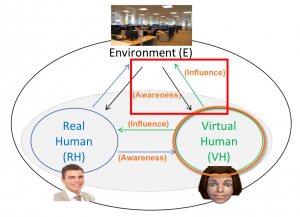

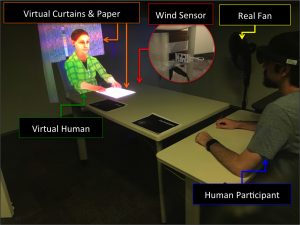

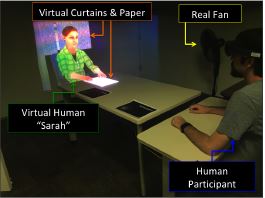

|

2018

|

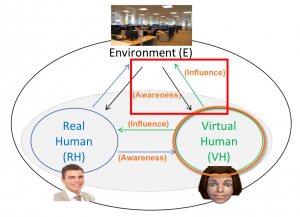

| Kangsoo Kim Environmental Physical–Virtual Interaction to Improve Social Presence of a Virtual Human in Mixed Reality PhD Thesis The University of Central Florida, 2018. @phdthesis{Kim2018Thesis,

title = {Environmental Physical–Virtual Interaction to Improve Social Presence of a Virtual Human in Mixed Reality},

author = {Kangsoo Kim},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/KangsooKIM_PhD_Dissertation_20181119.pdf},

year = {2018},

date = {2018-11-21},

school = {The University of Central Florida},

abstract = {Interactive Virtual Humans (VHs) are increasingly used to replace or assist real humans in various applications, e.g., military and medical training, education, or entertainment. In most VH research, the perceived social presence with a VH, which denotes the user's sense of being socially connected or co-located with the VH, is the decisive factor in evaluating the social influence of the VH, a phenomenon where human users' emotions, opinions, or behaviors are affected by the VH. The purpose of this dissertation is to develop new knowledge about how characteristics and behaviors of a VH in a Mixed Reality (MR) environment can affect the perception of and resulting behavior with the VH, and to find effective and efficient ways to improve the quality and performance of social interactions with VHs. Important issues and challenges in real–virtual human interactions in MR, e.g., lack of physical–virtual interaction, are identified and discussed through several user studies incorporating interactions with VH systems. In the studies, different features of VHs are prototyped and evaluated, such as a VH's ability to be aware of and influence the surrounding physical environment, while measuring objective behavioral data as well as collecting subjective responses from the participants. The results from the studies support the idea that the VH's awareness and influence of the physical environment can improve not only the perceived social presence with the VH, but also the trustworthiness of the VH within a social context. The findings will contribute towards designing more influential VHs that can benefit a wide range of simulation and training applications for which a high level of social realism is important, and that can be more easily incorporated into our daily lives as social companions, providing reliable relationships and convenience in assisting with daily tasks.},

keywords = {},

pubstate = {published},

tppubtype = {phdthesis}

}

|

| Kangsoo Kim; Gerd Bruder; Gregory F. Welch Blowing in the Wind: Increasing Copresence with a Virtual Human via Airflow Influence in Augmented Reality Proceedings Article In: Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018, pp. 183-190, 2018, (Honorable Mention Award). @inproceedings{Kim2018c,

title = {Blowing in the Wind: Increasing Copresence with a Virtual Human via Airflow Influence in Augmented Reality},

author = {Kangsoo Kim and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/10/Kim_Airflow_ICAT_EGVE2018.pdf},

doi = {10.2312/egve.20181332},

year = {2018},

date = {2018-11-07},

booktitle = {Proceedings of the International Conference on Artificial Reality and Telexistence and Eurographics Symposium on Virtual Environments (ICAT-EGVE 2018), Limassol, Cyprus, November 7–9, 2018},

pages = {183-190},

abstract = {In a social context where two or more interlocutors interact with each other in the same space, one's sense of copresence with the others is an important factor for the quality of communication and engagement in the interaction. Although augmented reality (AR) technology enables the superposition of virtual humans (VHs) as interlocutors in the real world, the resulting sense of copresence is usually far lower than with a real human interlocutor.

In this paper, we describe a human-subject study in which we explored and investigated the effects that subtle multi-modal interaction between the virtual environment and the real world, where a VH and human participants were co-located, can have on copresence. We compared two levels of gradually increased multi-modal interaction: (i) virtual objects being affected by real airflow as commonly experienced with fans in summer, and (ii) a VH showing awareness of this airflow. We chose airflow as one example of an environmental factor that can noticeably affect both the real and virtual worlds, and also cause subtle responses in interlocutors. We hypothesized that our two levels of treatment would increase the sense of being together with the VH gradually, i.e., participants would report higher copresence with airflow influence than without it, and the copresence would be even higher when the VH shows awareness of the airflow. The statistical analysis with the participant-reported copresence scores showed that there was an improvement of the perceived copresence with the VH when both the physical–virtual interactivity via airflow and the VH's awareness behaviors were present together. As the considered environmental factors are directed at the VH, i.e., they are not part of the direct interaction with the real human, they can provide a reasonably generalizable approach to support copresence in AR beyond the particular use case in the present experiment.},

note = {Honorable Mention Award},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

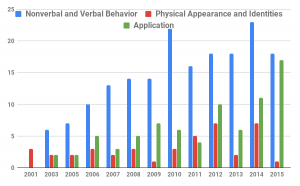

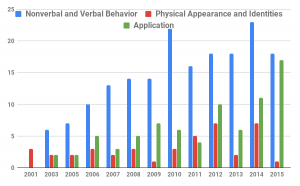

| Nahal Norouzi; Kangsoo Kim; Jason Hochreiter; Myungho Lee; Salam Daher; Gerd Bruder; Gregory Welch A Systematic Survey of 15 Years of User Studies Published in the Intelligent Virtual Agents Conference Proceedings Article In: IVA '18 Proceedings of the 18th International Conference on Intelligent Virtual Agents, pp. 17-22, ACM, 2018, ISBN: 978-1-4503-6013-5/18/11. @inproceedings{Norouzi2018c,

title = {A Systematic Survey of 15 Years of User Studies Published in the Intelligent Virtual Agents Conference},

author = {Nahal Norouzi and Kangsoo Kim and Jason Hochreiter and Myungho Lee and Salam Daher and Gerd Bruder and Gregory Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/11/p17-norouzi-2.pdf},

doi = {10.1145/3267851.3267901},

isbn = {978-1-4503-6013-5/18/11},

year = {2018},

date = {2018-11-05},

booktitle = {IVA '18 Proceedings of the 18th International Conference on Intelligent Virtual Agents},

pages = {17-22},

publisher = {ACM},

organization = {ACM},

abstract = {The field of intelligent virtual agents (IVAs) has evolved immensely over the past 15 years, introducing new application opportunities in areas such as training, health care, and virtual assistants. In this survey paper, we provide a systematic review of the most influential user studies published in the IVA conference from 2001 to 2015 focusing on IVA development, human perception, and interactions. A total of 247 papers with 276 user studies have been classified and reviewed based on their contributions and impact. We identify the different areas of research and provide a summary of the papers with the highest impact. With the trends of past user studies and the current state of technology, we provide insights into future trends and research challenges.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Seeing is Believing: Improving the Perceived Trust in Visually Embodied Alexa in Augmented Reality](https://sreal.ucf.edu/wp-content/uploads/2018/08/Haesler2018Thumb-300x189.png) | Steffen Haesler; Kangsoo Kim; Gerd Bruder; Gregory F. Welch [POSTER] Seeing is Believing: Improving the Perceived Trust in Visually Embodied Alexa in Augmented Reality Proceedings Article In: Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018, 2018. @inproceedings{Haesler2018,

title = {[POSTER] Seeing is Believing: Improving the Perceived Trust in Visually Embodied Alexa in Augmented Reality},

author = {Steffen Haesler and Kangsoo Kim and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/08/Haesler2018.pdf},

doi = {10.1109/ISMAR-Adjunct.2018.00067},

year = {2018},

date = {2018-10-16},

booktitle = {Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018},

abstract = {Voice-activated Intelligent Virtual Assistants (IVAs) such as Amazon Alexa offer a natural and realistic form of interaction that pursues the level of social interaction among real humans. The user experience with such technologies depends to a large degree on the perceived trust in and reliability of the IVA. In this poster, we explore the effects of a three-dimensional embodied representation of Amazon Alexa in Augmented Reality (AR) on the user’s perceived trust in her being able to control Internet of Things (IoT) devices in a smart home environment. We present a preliminary study and discuss the potential of positive effects in perceived trust due to the embodied representation compared to a voice-only condition.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Kangsoo Kim; Luke Boelling; Steffen Haesler; Jeremy N. Bailenson; Gerd Bruder; Gregory F. Welch Does a Digital Assistant Need a Body? The Influence of Visual Embodiment and Social Behavior on the Perception of Intelligent Virtual Agents in AR Proceedings Article In: Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018, 2018. @inproceedings{Kim2018a,

title = {Does a Digital Assistant Need a Body? The Influence of Visual Embodiment and Social Behavior on the Perception of Intelligent Virtual Agents in AR},

author = {Kangsoo Kim and Luke Boelling and Steffen Haesler and Jeremy N. Bailenson and Gerd Bruder and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/08/Kim2018a.pdf},

doi = {10.1109/ISMAR.2018.00039},

year = {2018},

date = {2018-10-16},

booktitle = {Proceedings of the 17th IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2018), Munich, Germany, October 16–20, 2018},

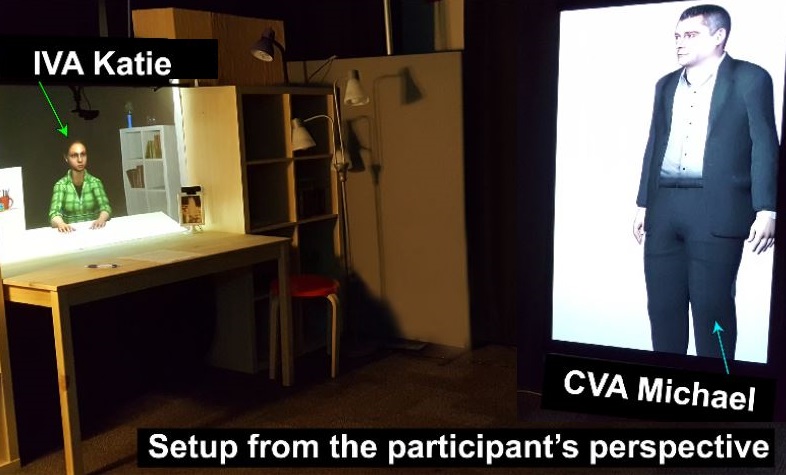

abstract = {Intelligent Virtual Agents (IVAs) are becoming part of our everyday life, thanks to artificial intelligence technology and Internet of Things devices. For example, users can control their connected home appliances through natural voice commands to the IVA. However, most current-state commercial IVAs, such as Amazon Alexa, mainly focus on voice commands and voice feedback, and lack the ability to provide non-verbal cues which are an important part of social interaction. Augmented Reality (AR) has the potential to overcome this challenge by providing a visual embodiment of the IVA.

In this paper we investigate how visual embodiment and social behaviors influence the perception of the IVA. We hypothesize that a user's confidence in an IVA's ability to perform tasks is improved when imbuing the agent with a human body and social behaviors compared to the agent solely depending on voice feedback. In other words, an agent's embodied gesture and locomotion behavior exhibiting awareness of the surrounding real world or exerting influence over the environment can improve the perceived social presence with and confidence in the agent. We present a human-subject study, in which we evaluated the hypothesis and compared different forms of IVAs with speech, gesturing, and locomotion behaviors in an interactive AR scenario. The results show support for the hypothesis with measures of confidence, trust, and social presence. We discuss implications for future developments in the field of IVAs.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

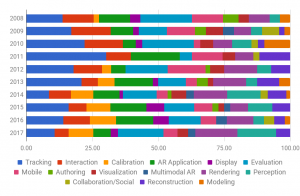

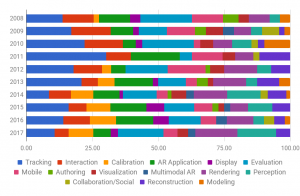

| Kangsoo Kim; Mark Billinghurst; Gerd Bruder; Henry Been-Lirn Duh; Gregory F. Welch Revisiting Trends in Augmented Reality Research: A Review of the 2nd Decade of ISMAR (2008–2017) Journal Article In: IEEE Transactions on Visualization and Computer Graphics, vol. 24, no. 11, pp. 2947-2962, 2018, ISSN: 1077-2626. @article{Kim2018b,

title = {Revisiting Trends in Augmented Reality Research: A Review of the 2nd Decade of ISMAR (2008–2017)},

author = {Kangsoo Kim and Mark Billinghurst and Gerd Bruder and Henry Been-Lirn Duh and Gregory F. Welch},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/08/Kim2018b.pdf},

doi = {10.1109/TVCG.2018.2868591},

issn = {1077-2626},

year = {2018},

date = {2018-09-06},

journal = {IEEE Transactions on Visualization and Computer Graphics},

volume = {24},

number = {11},

pages = {2947-2962},

abstract = {In 2008, Zhou et al. presented a survey paper summarizing the previous ten years of ISMAR publications, which provided invaluable insights into the research challenges and trends associated with that time period. Ten years later, we review the research that has been presented at ISMAR conferences since the survey of Zhou et al., at a time when both academia and the AR industry are enjoying dramatic technological changes. Here we consider the research results and trends of the last decade of ISMAR by carefully reviewing the ISMAR publications from the period of 2008–2017, in the context of the first ten years. The numbers of papers for different research topics and their impacts by citations were analyzed while reviewing them, which reveals a sharp increase in AR evaluation and rendering research. Based on this review we offer some observations related to potential future research areas or trends, which could be helpful to AR researchers and industry members looking ahead.},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

|

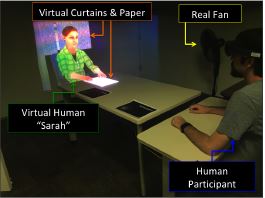

| Kangsoo Kim Improving Social Presence with a Virtual Human via Multimodal Physical–Virtual Interactivity in AR Proceedings Article In: Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal QC, Canada, April 21–26, 2018, pp. SRC09:1–SRC09:6, ACM, New York, NY, USA, 2018, ISBN: 978-1-4503-5621-3. @inproceedings{Kim2018,

title = {Improving Social Presence with a Virtual Human via Multimodal Physical–Virtual Interactivity in AR},

author = {Kangsoo Kim},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/Kim2018.pdf},

doi = {10.1145/3170427.3180291},

isbn = {978-1-4503-5621-3},

year = {2018},

date = {2018-04-26},

urldate = {2018-05-11},

booktitle = {Extended Abstracts of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal QC, Canada, April 21–26, 2018},

number = {SRC09},

pages = {SRC09:1--SRC09:6},

publisher = {ACM},

address = {New York, NY, USA},

series = {CHI EA '18},

abstract = {In a social context where a real human interacts with a virtual human (VH) in the same space, one's sense of social/co-presence with the VH is an important factor for the quality of interaction and the VH's social influence to the human user in context. Although augmented reality (AR) enables the superposition of VHs in the real world, the resulting sense of social/co-presence is usually far lower than with a real human. In this paper, we introduce a research approach employing multimodal interactivity between the virtual environment and the physical world, where a VH and a human user are co-located, to improve the social/co-presence with the VH. A preliminary study suggests a promising effect on the sense of copresence with a VH when a subtle airflow from a real fan can blow a virtual paper and curtains next to the VH as a physical–virtual interactivity. Our approach can be generalized to support social/co-presence with any virtual contents in AR beyond the particular VH scenarios.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

2017

|

![[POSTER] The Impact of Avatar-owner Visual Similarity on Body Ownership in Immersive Virtual Reality](https://sreal.ucf.edu/wp-content/uploads/2018/05/Jo2017.jpg) | Dongsik Jo; Kangsoo Kim; Gregory F. Welch; Woojin Jeon; Yongwan Kim; Ki-Hong Kim; Gerard Jounghyun Kim [POSTER] The Impact of Avatar-owner Visual Similarity on Body Ownership in Immersive Virtual Reality Proceedings Article In: Proceedings of the 23rd ACM Symposium on Virtual Reality Software and Technology, pp. 77:1–77:2, ACM, Gothenburg, Sweden, 2017, ISBN: 978-1-4503-5548-3. @inproceedings{Jo2017,

title = {[POSTER] The Impact of Avatar-owner Visual Similarity on Body Ownership in Immersive Virtual Reality},

author = {Dongsik Jo and Kangsoo Kim and Gregory F. Welch and Woojin Jeon and Yongwan Kim and Ki-Hong Kim and Gerard Jounghyun Kim},

url = {https://sreal.ucf.edu/wp-content/uploads/2018/05/Jo2017.pdf},

doi = {10.1145/3139131.3141214},

isbn = {978-1-4503-5548-3},

year = {2017},

date = {2017-11-08},

booktitle = {Proceedings of the 23rd ACM Symposium on Virtual Reality Software and Technology},

pages = {77:1--77:2},

publisher = {ACM},

address = {Gothenburg, Sweden},

series = {VRST '17},

abstract = {In this paper we report on an investigation of the effects of a self-avatar's visual similarity to a user's actual appearance on their perceptions of the avatar in an immersive virtual reality (IVR) experience. We conducted a user study to examine the participant's sense of body ownership, presence and visual realism under three levels of avatar-owner visual similarity: (L1) an avatar reconstructed from real imagery of the participant's appearance, (L2) a cartoon-like virtual avatar created by a 3D artist for each participant, where the avatar's shoes and clothing mimic those of the participant, but using a low-fidelity model, and (L3) a cartoon-like virtual avatar with a pre-defined appearance for the shoes and clothing. Surprisingly, the results indicate that the participants generally exhibited the highest sense of body ownership and presence when inhabiting the cartoon-like virtual avatar mimicking the outfit of the participant (L2), despite the relatively low participant similarity. We present our experiment and main findings, and also discuss the potential impact of a self-avatar's visual differences on human perceptions in IVR.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Salam Daher; Kangsoo Kim; Myungho Lee; Ryan Schubert; Gerd Bruder; Jeremy Bailenson; Greg Welch Effects of Social Priming on Social Presence with Intelligent Virtual Agents Book Chapter In: Beskow, Jonas; Peters, Christopher; Castellano, Ginevra; O'Sullivan, Carol; Leite, Iolanda; Kopp, Stefan (Ed.): Intelligent Virtual Agents: 17th International Conference, IVA 2017, Stockholm, Sweden, August 27-30, 2017, Proceedings, vol. 10498, pp. 87-100, Springer International Publishing, 2017. @inbook{Daher2017ab,

title = {Effects of Social Priming on Social Presence with Intelligent Virtual Agents},

author = {Salam Daher and Kangsoo Kim and Myungho Lee and Ryan Schubert and Gerd Bruder and Jeremy Bailenson and Greg Welch},

editor = {Jonas Beskow and Christopher Peters and Ginevra Castellano and Carol O'Sullivan and Iolanda Leite and Stefan Kopp},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/12/Daher2017ab.pdf},

doi = {10.1007/978-3-319-67401-8_10},

year = {2017},

date = {2017-08-26},

booktitle = {Intelligent Virtual Agents: 17th International Conference, IVA 2017, Stockholm, Sweden, August 27-30, 2017, Proceedings},

volume = {10498},

pages = {87-100},

publisher = {Springer International Publishing},

keywords = {},

pubstate = {published},

tppubtype = {inbook}

}

|
