2009

|

| Frank Steinicke; Gerd Bruder; Anthony Steed; Klaus H. Hinrichs; Alexander Gerlach Does a Gradual Transition to the Virtual World increase Presence? Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), pp. 203–210, IEEE Press, 2009. @inproceedings{SBSHG09,

title = {Does a Gradual Transition to the Virtual World increase Presence?},

author = { Frank Steinicke and Gerd Bruder and Anthony Steed and Klaus H. Hinrichs and Alexander Gerlach},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBSHG09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

pages = {203--210},

publisher = {IEEE Press},

abstract = {In order to increase a user's sense of presence in an artificial environment some researchers propose a gradual transition from reality to the virtual world instead of immersing users into the virtual world directly. One approach is to start the VR experience in a virtual replica of the physical space to accustom users to the characteristics of VR, e.g., latency, reduced field of view or tracking errors, in a known environment. Although this procedure is already applied in VR demonstrations, until now it has not been verified whether the usage of such a transitional environment - as transition between real and virtual environment - increases someone's sense of presence. We have observed subjective, physiological and behavioral reactions of subjects during a fully-immersive flight phobia experiment under two different conditions: the virtual flight environment was displayed immediately, or subjects visited a transitional environment before entering the virtual flight environment. We have quantified to what extent a gradual transition to the VE via a transitional environment increases the level of presence. We have found that subjective responses show significantly higher scores for the user's sense of presence, and that subjects' behavioral reactions change when a transitional environment is shown first. Considering physiological reactions, no significant difference could be found.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Annika Busch; Marius Staggenborg; Tobias Brix; Gerd Bruder; Frank Steinicke; Klaus H. Hinrichs Darstellung physikalischer Objekte in Immersiven Head-Mounted Display Umgebungen Proceedings Article In: Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR), pp. 233–244, Shaker Verlag, 2009. @inproceedings{BSBBSH09,

title = {Darstellung physikalischer Objekte in Immersiven Head-Mounted Display Umgebungen},

author = { Annika Busch and Marius Staggenborg and Tobias Brix and Gerd Bruder and Frank Steinicke and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSBBSH09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR)},

pages = {233--244},

publisher = {Shaker Verlag},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

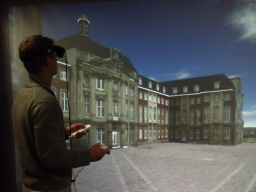

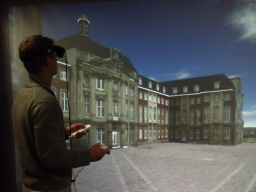

| Gerd Bruder; Frank Steinicke; Klaus H. Hinrichs Arch-Explore: A Natural User Interface for Immersive Architectural Walkthroughs Proceedings Article In: Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI), pp. 75–82, IEEE Press, 2009. @inproceedings{BSH09,

title = {Arch-Explore: A Natural User Interface for Immersive Architectural Walkthroughs},

author = { Gerd Bruder and Frank Steinicke and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/BSH09.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI)},

pages = {75--82},

publisher = {IEEE Press},

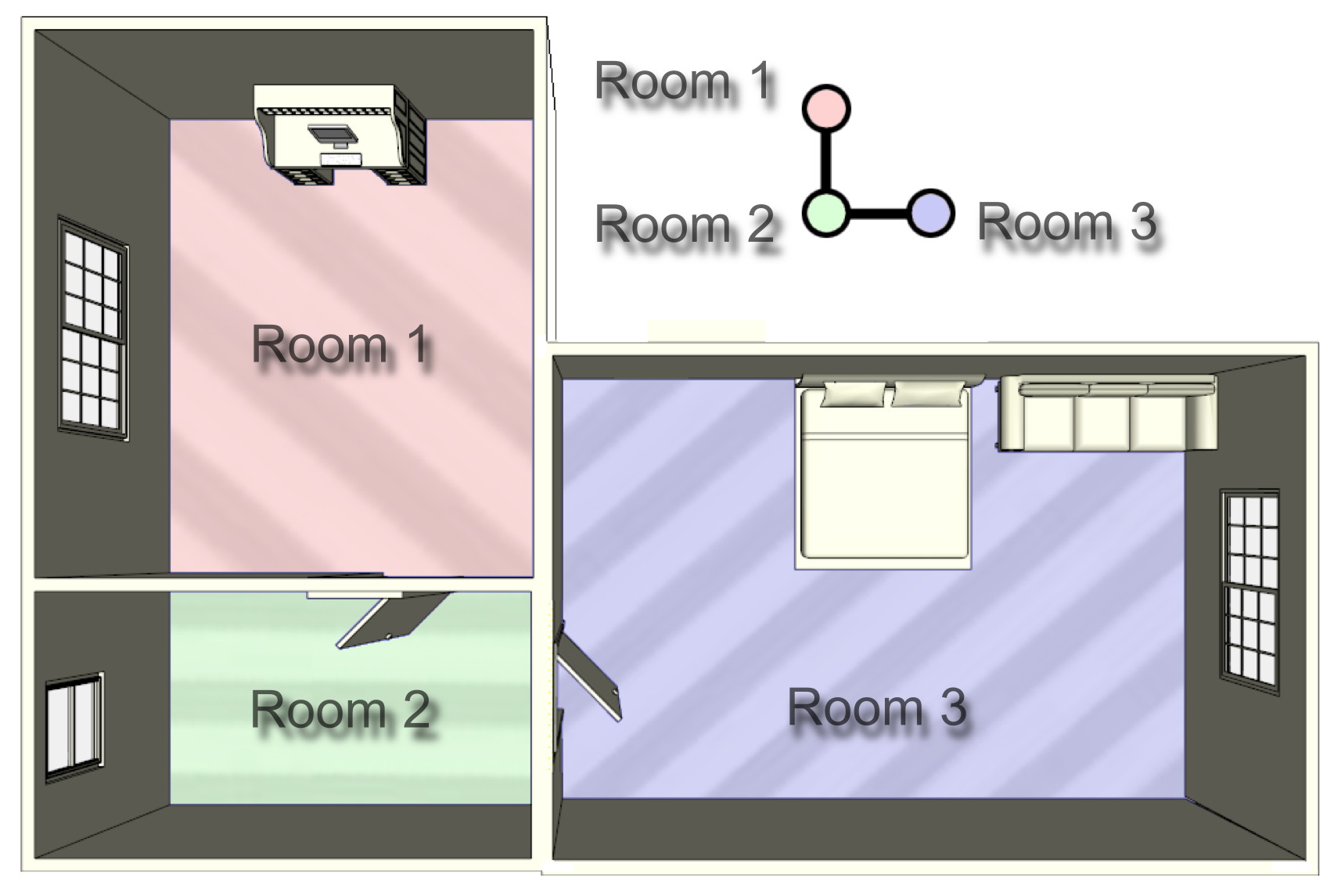

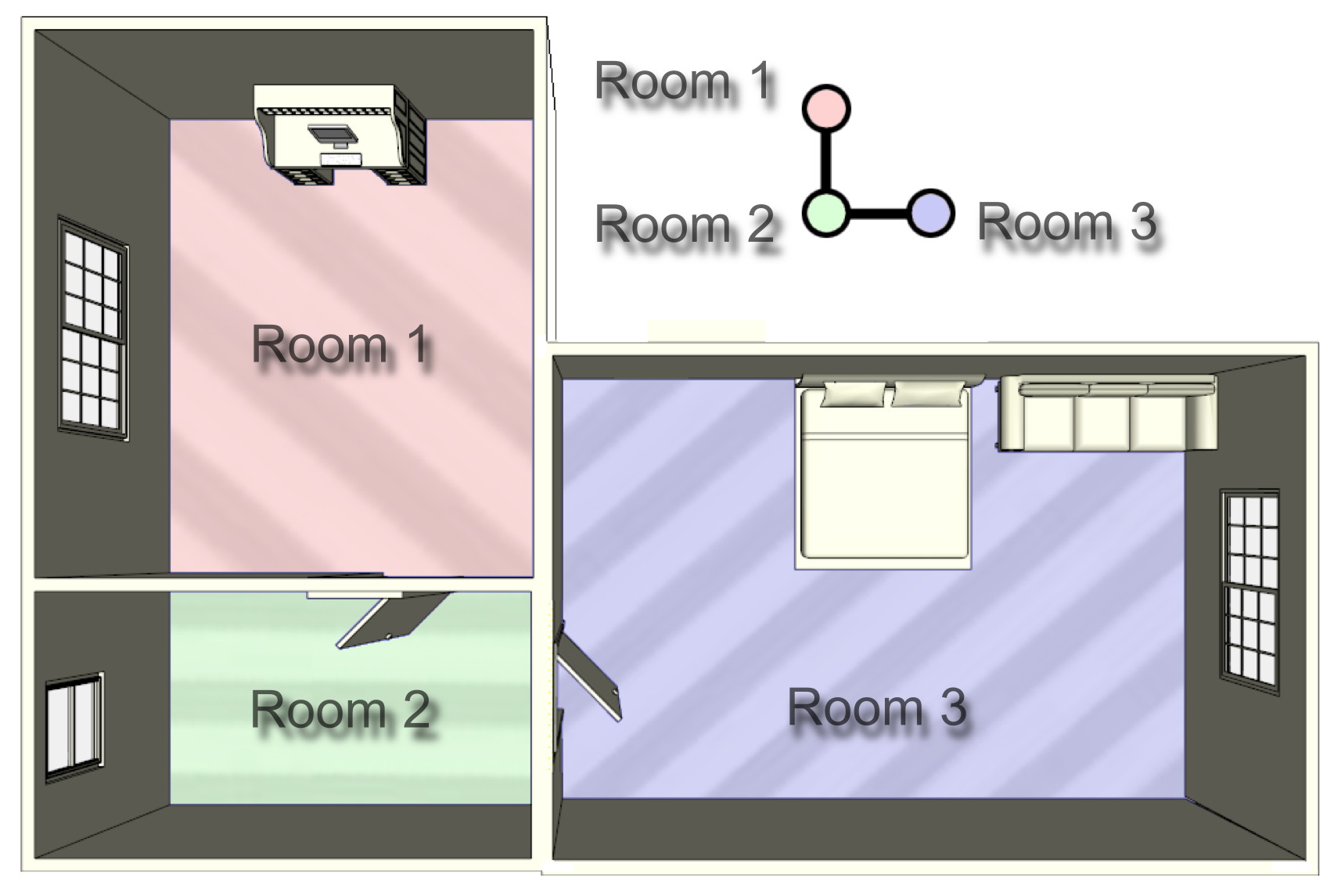

abstract = {In this paper we propose the Arch-Explore user interface, which supports natural exploration of architectural 3D models at different scales in a real walking virtual reality (VR) environment such as head-mounted display (HMD) or CAVE setups. We discuss in detail how user movements can be transferred to the virtual world to enable walking through virtual indoor environments. To overcome the limited interaction space in small VR laboratory setups, we have implemented redirected walking techniques to support natural exploration of comparably large-scale virtual models. Furthermore, the concept of virtual portals provides a means to cover long distances intuitively within architectural models. We describe the software and hardware setup and discuss benefits of Arch-Explore.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Kai Rothaus; Klaus H. Hinrichs POSTER: Visual Identity from Egocentric Camera Images for Head-Mounted Display Environments Proceedings Article In: Proceedings of the Virtual Reality International Conference (VRIC) (Poster Proceedings), pp. 289–290, 2009. @inproceedings{SBRH09,

title = {POSTER: Visual Identity from Egocentric Camera Images for Head-Mounted Display Environments},

author = { Frank Steinicke and Gerd Bruder and Kai Rothaus and Klaus H. Hinrichs},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the Virtual Reality International Conference (VRIC) (Poster Proceedings)},

pages = {289--290},

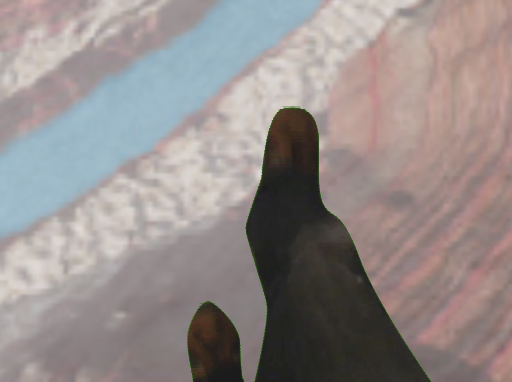

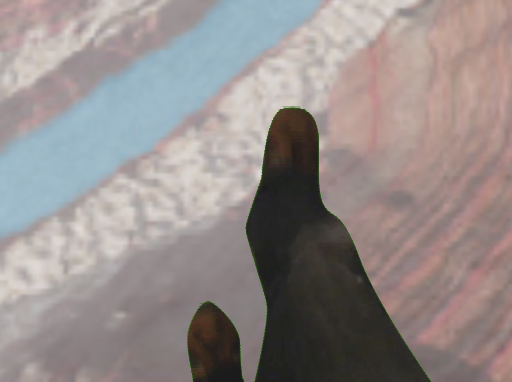

abstract = {A number of researchers have reported that a fully-articulated visual representation of oneself in an immersive virtual environment (IVE) has considerable impact on social interaction and the subjective sense of presence in the virtual world. Therefore, many approaches address this challenge and incorporate a virtual model of the user's body in the VE. Usually, a fully-articulated visual identity or so-called "virtual body" is manipulated according to user motions which are defined by feature points detected by a tracking system. Therefore, markers have to be attached to certain feature points as done, for instance, with full-body motion coats which have to be worn by the user. Such instrumentation is unsuitable in scenarios which involve multiple persons simultaneously or in which participants frequently change. Furthermore, individual characteristics such as skin pigmentation, hairiness or clothes are not considered by this procedure where the tracked data is always mapped to the same invariant 3D model. In this paper we present a software-based approach that makes it possible to incorporate a realistic visual identity of oneself in the VE, which can be integrated easily into existing hardware setups. In our setup we focus on visual representation of a user's arms and hands. The idea is to make use of images captured by cameras that are attached to video-see-through head-mounted displays. These egocentric frames can be segmented into foreground showing parts of the human body, i.e., the human's hands, and background. Then the extremities can be overlaid with the user's current view of the virtual world, and thus a high-fidelity virtual body can be visualized.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

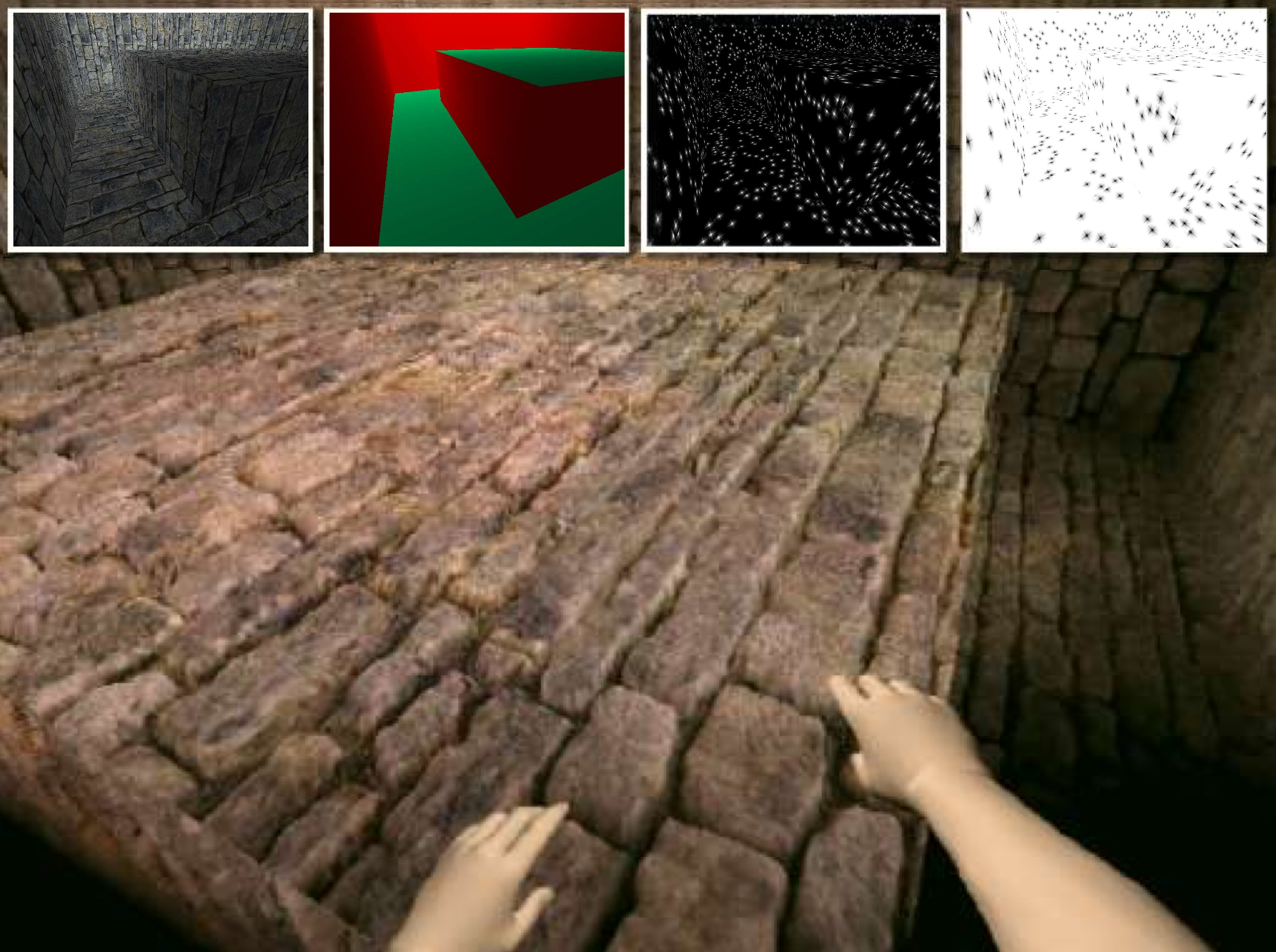

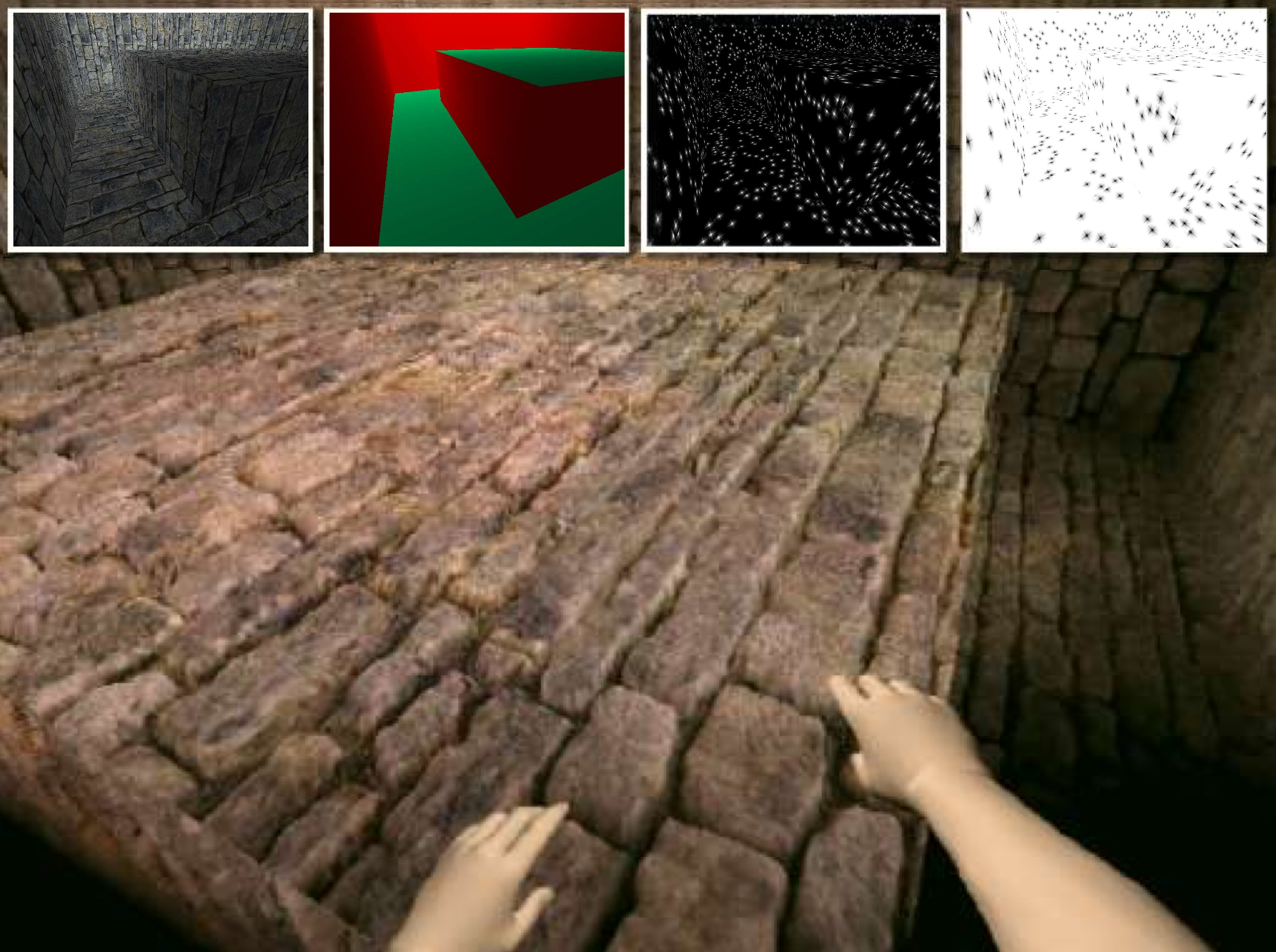

|

![[POSTER] A Virtual Body for Augmented Virtuality by Chroma-Keying of Egocentric Videos](https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRH09a_a.jpg) | Frank Steinicke; Gerd Bruder; Kai Rothaus; Klaus H. Hinrichs [POSTER] A Virtual Body for Augmented Virtuality by Chroma-Keying of Egocentric Videos Proceedings Article In: Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI) (Poster Presentation), pp. 125–126, IEEE Press, 2009. @inproceedings{SBRH09a,

title = {[POSTER] A Virtual Body for Augmented Virtuality by Chroma-Keying of Egocentric Videos},

author = { Frank Steinicke and Gerd Bruder and Kai Rothaus and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRH09a.pdf},

year = {2009},

date = {2009-01-01},

booktitle = {Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI) (Poster Presentation)},

pages = {125--126},

publisher = {IEEE Press},

abstract = {A fully-articulated visual representation of oneself in an immersive virtual environment has considerable impact on the subjective sense of presence in the virtual world. Therefore, many approaches address this challenge and incorporate a virtual model of the user's body in the VE. Such a virtual body (VB) is manipulated according to user motions which are defined by feature points detected by a tracking system. The required tracking devices are unsuitable in scenarios which involve multiple persons simultaneously or in which participants frequently change. Furthermore, individual characteristics such as skin pigmentation, hairiness or clothes are not considered by this procedure. In this paper we present a software-based approach that makes it possible to incorporate a realistic visual representation of oneself in the VE. The idea is to make use of images captured by cameras that are attached to video-see-through head-mounted displays. These egocentric frames can be segmented into foreground showing parts of the human body and background. Then the extremities can be overlaid with the user's current view of the virtual world, and thus a high-fidelity virtual body can be visualized.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

2008

|

| Frank Steinicke; Gerd Bruder; Timo Ropinski; Klaus H. Hinrichs The Holodeck Construction Manual Proceedings Article In: Proceedings of the ACM International Conference and Exhibition on Computer Graphics and Interactive Techniques (SIGGRAPH) (Conference DVD), 2008. @inproceedings{SBRH08,

title = {The Holodeck Construction Manual},

author = { Frank Steinicke and Gerd Bruder and Timo Ropinski and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRH08.pdf},

year = {2008},

date = {2008-01-01},

booktitle = {Proceedings of the ACM International Conference and Exhibition on Computer Graphics and Interactive Techniques (SIGGRAPH) (Conference DVD)},

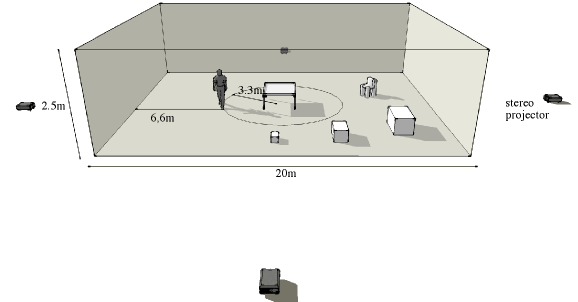

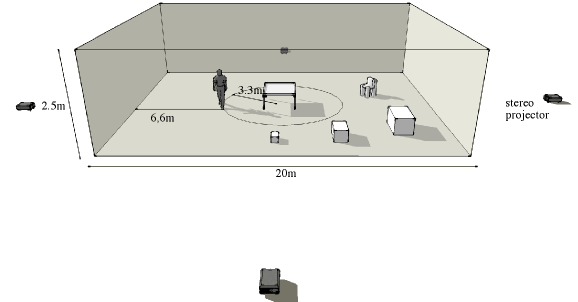

abstract = {Immersive virtual reality (IVR) systems allow users to interact in virtual environments (VEs), but in these systems, e.g., six-wall CAVEs with outside-in optical tracking, presence is limited to the virtual world and the physical surroundings cannot be perceived. Real walking is the most intuitive way of moving through such a setup as well as through our real world. Unfortunately, typical IVEs have only limited interaction space in contrast to the potentially infinite VE. In recent years an enormous effort has been undertaken in order to allow omnidirectional walking of arbitrary distances in VEs. Appropriate hardware-based approaches are very costly [Bouguila] and thus will probably not get beyond a prototype stage in the near future. We propose an alternative approach, which is motivated by the theory of perception. We exploit the fact that the human's visual sense may vary from the proprioceptive and vestibular senses without humans noticing a difference. Thus it becomes possible to direct the user on a physical path which may vary from the path perceived in the IVE. To exploit this limitation of the human sensory system we have extended redirected walking by introducing motion compression and gains, which scale the real distance a user walks, rotation compression and gains, which make the real turns smaller or larger, and curvature gains, which bend the user's walking direction such that s/he walks on a curve. Furthermore, we propose the concept of dynamic passive haptics which extends passive haptics in such a way that any number of virtual objects can be sensed by means of real proxy objects having similar haptic capabilities. Thus, dynamic passive haptics provide the user with the illusion of interacting with a desired virtual object by touching a corresponding proxy object. By exploiting these proposed concepts, finally the virtual holodeck construction manual can be written. Such an IVE provides sufficient space to make the users walk arbitrarily and sense any objects in the VE by means of touching an associated proxy object.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Luv Kohli; Jason Jerald; Klaus H. Hinrichs Taxonomy and Implementation of Redirection Techniques for Ubiquitous Passive Haptic Feedback Proceedings Article In: Proceedings of the International Conference on Cyberworlds (CW), pp. 217–223, IEEE Press, 2008, (acceptance rate 39%). @inproceedings{SBKJH08,

title = {Taxonomy and Implementation of Redirection Techniques for Ubiquitous Passive Haptic Feedback},

author = { Frank Steinicke and Gerd Bruder and Luv Kohli and Jason Jerald and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBKJH08.pdf},

year = {2008},

date = {2008-01-01},

booktitle = {Proceedings of the International Conference on Cyberworlds (CW)},

pages = {217--223},

publisher = {IEEE Press},

abstract = {Traveling through immersive virtual environments (IVEs) by means of real walking is an important activity to increase naturalness of VR-based interaction. However, the size of the virtual world often exceeds the size of the tracked space so that a straightforward implementation of omni-directional and unlimited walking is not possible. Redirected walking is one concept to solve this problem of walking in VEs by inconspicuously guiding the user on a physical path that may differ from the path the user visually perceives. When the user approaches a virtual object she can be redirected to a real proxy object that is registered to the virtual counterpart and provides passive haptic feedback. In such passive haptic environments, any number of virtual objects can be mapped to proxy objects having similar haptic properties, e.g., size, shape and texture. The user can sense a virtual object by touching its real world counterpart. Redirecting a user to a registered proxy object makes it necessary to predict the user's target location in the VE. Based on this prediction we determine a path through the physical space such that the user is guided to the registered proxy object. We present a taxonomy of possible redirection techniques that enable user guidance such that inconsistencies between visual and proprioceptive stimuli are imperceptible. We describe how a user's target in the virtual world can be predicted reliably and how a corresponding real-world path to the registered proxy object can be derived.},

note = {(acceptance rate 39%)},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Timo Ropinski; Klaus H. Hinrichs Moving Towards Generally Applicable Redirected Walking Proceedings Article In: Proceedings of the Virtual Reality International Conference (VRIC), pp. 15–24, IEEE Press, 2008. @inproceedings{SBRH08a,

title = {Moving Towards Generally Applicable Redirected Walking},

author = { Frank Steinicke and Gerd Bruder and Timo Ropinski and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRH08a.pdf},

year = {2008},

date = {2008-01-01},

booktitle = {Proceedings of the Virtual Reality International Conference (VRIC)},

pages = {15--24},

publisher = {IEEE Press},

abstract = {Walking is the most natural way of moving within a virtual environment (VE). Mapping the user's movement one-to-one to the real world clearly has the drawback that the limited range of the tracking sensors and a rather small working space in the real world restrict the user's interaction. In this paper we introduce concepts for virtual locomotion interfaces that support exploration of large-scale virtual environments by redirected walking. Based on the results of a user study we have quantified to which degree users can unknowingly be redirected in order to guide them through an arbitrarily sized VE in which virtual paths differ from the paths tracked in the real working space. We describe the concepts of generic redirected walking in detail and present implications that have been derived from the initially conducted user study. Furthermore, we discuss example applications from different domains in order to point out the benefits of our approach.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

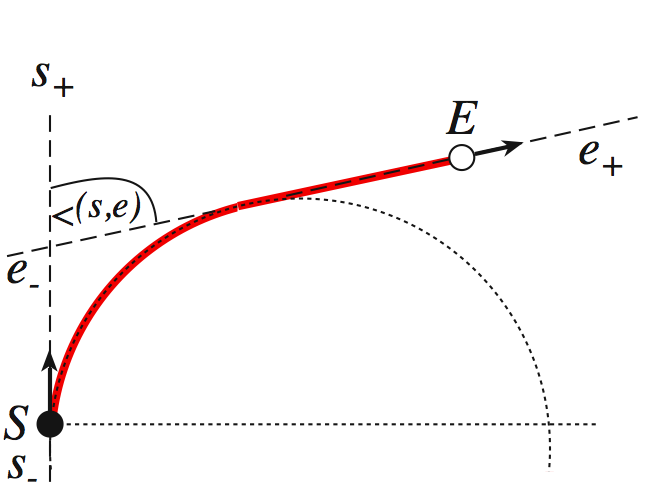

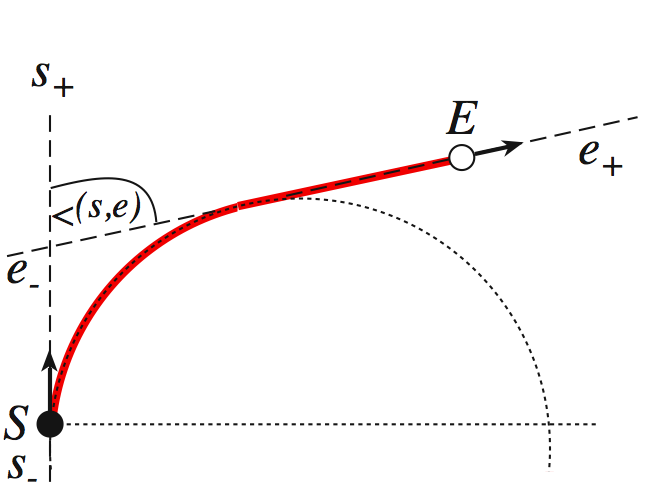

| Frank Steinicke; Gerd Bruder; Jason Jerald; Harald Frenz; Markus Lappe Analyses of Human Sensitivity to Redirected Walking Proceedings Article In: Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST), pp. 149–156, 2008, (acceptance rate 17%). @inproceedings{SBJFL08,

title = {Analyses of Human Sensitivity to Redirected Walking},

author = { Frank Steinicke and Gerd Bruder and Jason Jerald and Harald Frenz and Markus Lappe},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBJFL08.pdf},

year = {2008},

date = {2008-01-01},

booktitle = {Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST)},

pages = {149--156},

abstract = {Redirected walking allows users to walk through large-scale immersive virtual environments (IVEs) while physically remaining in a reasonably small workspace by intentionally injecting scene motion into the IVE. In a constant stimuli experiment with a two-alternative-forced-choice task we have quantified how much humans can unknowingly be redirected on virtual paths which are different from the paths they actually walk. 18 subjects have been tested in four different experiments: (E1a) discrimination between virtual and physical rotation, (E1b) discrimination between two successive rotations, (E2) discrimination between virtual and physical translation, and discrimination of walking direction (E3a) without and (E3b) with start-up. In experiment E1a subjects performed rotations to which different gains have been applied, and then had to choose whether or not the visually perceived rotation was greater than the physical rotation. In experiment E1b subjects discriminated between two successive rotations where different gains have been applied to the physical rotation. In experiment E2 subjects chose if they thought that the physical walk was longer than the visually perceived scaled travel distance. In experiment E3a subjects walked a straight path in the IVE which was physically bent to the left or to the right, and they estimated the direction of the curvature. In experiment E3a the gain was applied immediately, whereas the gain was applied after a start-up of two meters in experiment E3b. Our results show that users can be turned physically about 68% more or 10% less than the perceived virtual rotation, distances can be up- or down-scaled by 22%, and users can be redirected on a circular arc with a radius greater than 24 meters while they believe they are walking straight.},

note = {(acceptance rate 17%)},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|
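To make the thresholds quoted in the abstract of "Analyses of Human Sensitivity to Redirected Walking" concrete, the following is a minimal arithmetic sketch, not the authors' implementation. The constant and function names are hypothetical. It also assumes that "up- or down-scaled by 22%" means the virtual distance may be between 0.78 and 1.22 times the physical distance.

```python
import math

# Figures taken from the abstract; all names below are hypothetical.
MORE_PHYSICAL_ROTATION = 0.68   # physical turn may be about 68% larger than the virtual one
LESS_PHYSICAL_ROTATION = 0.10   # or about 10% smaller
DISTANCE_SCALING = 0.22         # assumed: virtual distance within +/-22% of physical
MIN_ARC_RADIUS_M = 24.0         # smallest arc radius reported as unnoticed


def physical_rotation_range(virtual_deg):
    """Range of physical turns (degrees) that may go unnoticed for a virtual turn."""
    return (virtual_deg * (1.0 - LESS_PHYSICAL_ROTATION),
            virtual_deg * (1.0 + MORE_PHYSICAL_ROTATION))


def virtual_distance_range(physical_m):
    """Range of virtual distances (meters) under the assumed +/-22% scaling."""
    return (physical_m * (1.0 - DISTANCE_SCALING),
            physical_m * (1.0 + DISTANCE_SCALING))


def arc_deviation(walked_m, radius_m=MIN_ARC_RADIUS_M):
    """Heading change (degrees) and lateral offset (meters) after walking
    walked_m along a circular arc while the virtual path is straight."""
    theta = walked_m / radius_m                      # arc angle in radians
    return math.degrees(theta), radius_m * (1.0 - math.cos(theta))
```

Under these assumptions, a 90 degree virtual turn corresponds to a physical turn between roughly 81 and 151 degrees, and walking 5 m along a 24 m arc changes the physical heading by about 12 degrees with a lateral offset of about half a meter.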

| Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Timo Ropinski; Mario Lopes Advances in Human-Computer Interaction: 3D User Interfaces for Collaborative Works Book Chapter In: pp. 279–294, In-Tech, 2008. @inbook{SBHRL08,

title = {Advances in Human-Computer Interaction: 3D User Interfaces for Collaborative Works},

author = { Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Timo Ropinski and Mario Lopes},

year = {2008},

date = {2008-01-01},

pages = {279--294},

publisher = {In-Tech},

abstract = {Desktop environments have proven to be a powerful user interface and have been used as the de facto standard human-computer interaction paradigm for over 20 years. However, there is a rising demand for 3D applications dealing with complex datasets, which exceeds the possibilities provided by traditional devices or two-dimensional displays. For these domains more immersive and intuitive interfaces are required. But in order to gain the users' acceptance, technology-driven solutions that require inconvenient instrumentation, e.g., stereo glasses or tracked gloves, should be avoided. Autostereoscopic display environments equipped with tracking systems enable users to experience 3D virtual environments more naturally without annoying devices, for instance via gestures. However, currently these approaches are only applied for specially designed or adapted applications without universal usability. Although these systems provide enough space to support multiple users, additional costs and inconvenient instrumentation hinder acceptance of these user interfaces. In this chapter we introduce new collaborative 3D user interface concepts for such setups where minimal instrumentation of the user is required such that the strategies can be easily integrated in everyday working environments. Therefore, we propose an interaction system and framework, which allows displaying and interacting with both mono- as well as stereoscopic content in parallel. Furthermore, the setup enables multiple users to view the same data simultaneously. The challenges for combined mouse-, keyboard- and gesture-based input paradigms in such an environment are pointed out and novel interaction strategies are introduced.},

keywords = {},

pubstate = {published},

tppubtype = {inbook}

}

|

![[POSTER] A Universal Virtual Locomotion System: Supporting Generic Redirected Walking and Dynamic Passive Haptics within Legacy 3D Graphics Applications](https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRHFL08a.png) | Frank Steinicke; Gerd Bruder; Timo Ropinski; Klaus H. Hinrichs; Harald Frenz; Markus Lappe [POSTER] A Universal Virtual Locomotion System: Supporting Generic Redirected Walking and Dynamic Passive Haptics within Legacy 3D Graphics Applications Proceedings Article In: Proceedings of IEEE Virtual Reality (VR) (Poster Presentation), pp. 192–193, IEEE Press, 2008. @inproceedings{SBRHFL08a,

title = {[POSTER] A Universal Virtual Locomotion System: Supporting Generic Redirected Walking and Dynamic Passive Haptics within Legacy 3D Graphics Applications},

author = { Frank Steinicke and Gerd Bruder and Timo Ropinski and Klaus H. Hinrichs and Harald Frenz and Markus Lappe},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRHFL08a.pdf},

year = {2008},

date = {2008-01-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR) (Poster Presentation)},

pages = {192--193},

publisher = {IEEE Press},

abstract = {General interest in visualizations of digital 3D cityscapes is growing rapidly, and several applications are already available that display such models very realistically. In order to generate a virtual 3D city model different approaches exist which use miscellaneous input data sources, for example, 2D maps, aerial images, or laser-scanned data. However, 3D landmarks which denote highly-complex and architecturally prominent buildings, e.g., churches, castles, spires etc., cannot be reproduced in an adequate manner by automatic reconstruction. Therefore, these entities are usually modeled manually by architectural offices or 3D design companies. In recent years user interfaces of 3D modeling applications have evolved such that these applications are widely accepted and easy-to-use - even for non-experts. In this paper we present a field report on the manual modeling of 3D landmarks in which two classes have participated. In cooperation with two different schools we have performed two projects with eighth and ninth grade students. In each project every student has chosen a particular 3D building of sufficient complexity to model. All models have been integrated into our city visualization environment. We present the results as well as the experience that we have made during this project.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

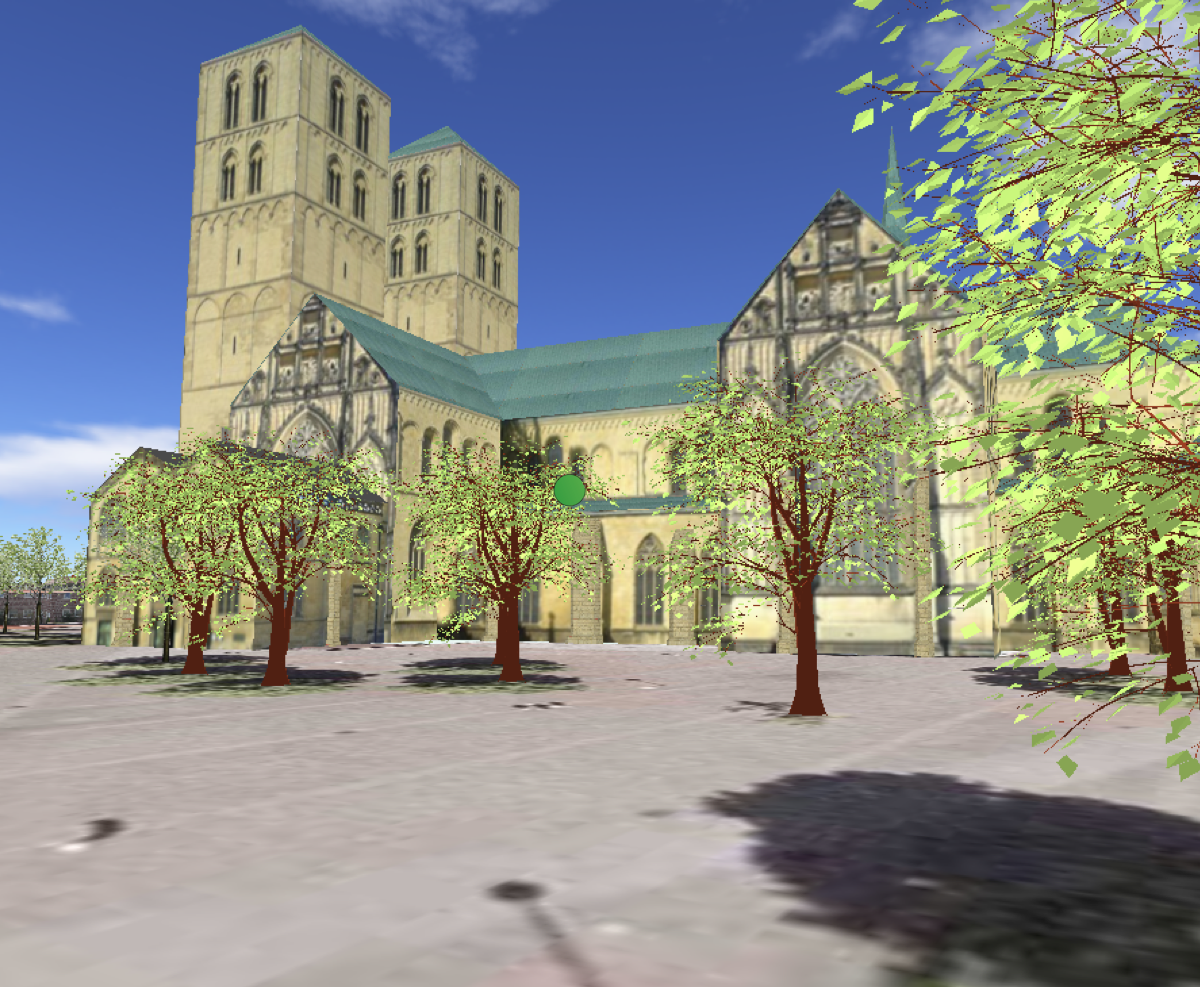

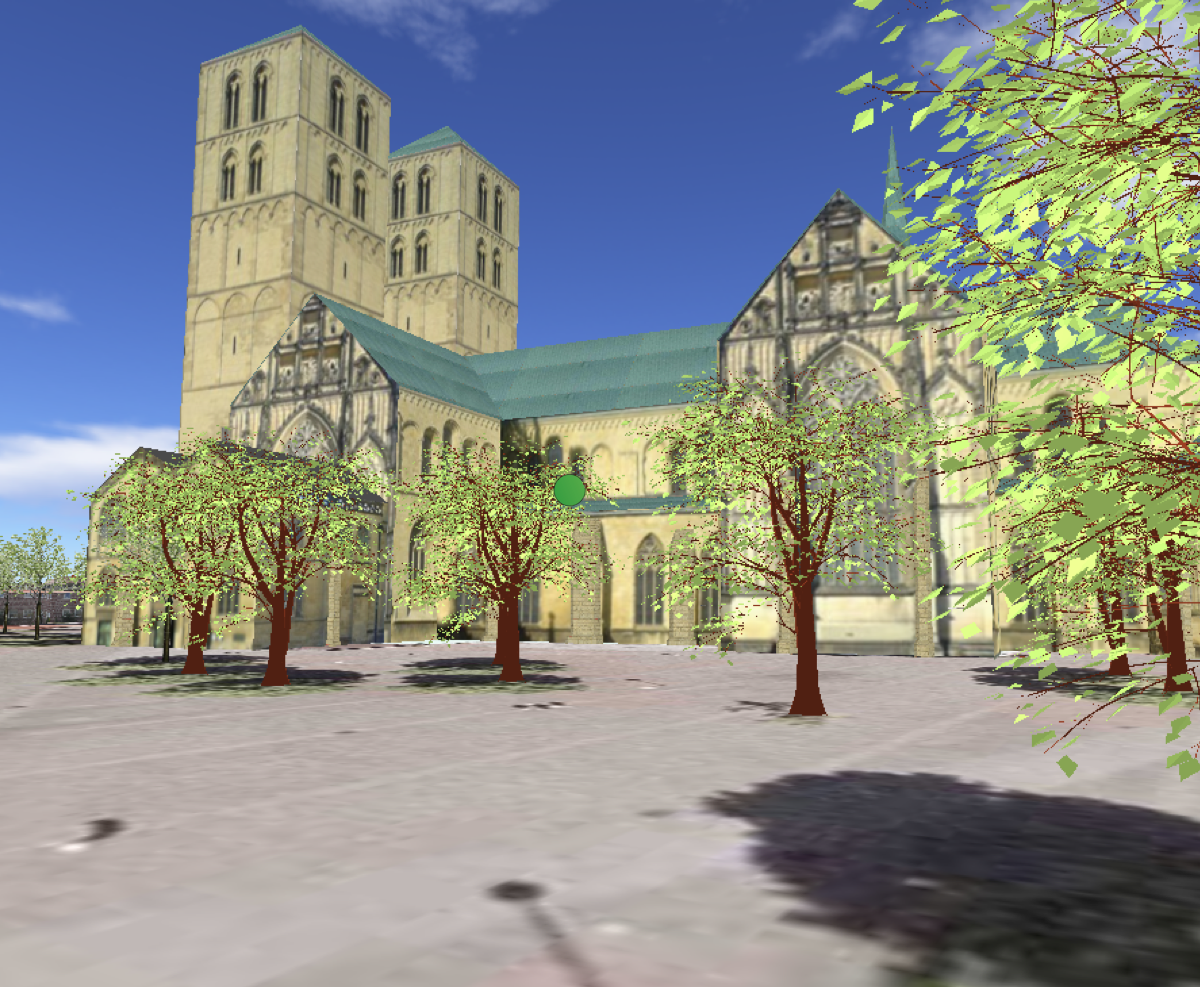

|

![[POSTER] A User Guidance Approach for Passive Haptic Environments](https://sreal.ucf.edu/wp-content/uploads/2017/02/SWBH08.jpg) | Frank Steinicke; Hanno Weltzel; Gerd Bruder; Klaus H. Hinrichs [POSTER] A User Guidance Approach for Passive Haptic Environments Proceedings Article In: Proceedings of the Eurographics Symposium on Virtual Environments (EGVE) (Short Paper and Poster Proceedings), pp. 31–34, 2008. @inproceedings{SWBH08,

title = {[POSTER] A User Guidance Approach for Passive Haptic Environments},

author = { Frank Steinicke and Hanno Weltzel and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SWBH08.pdf},

year = {2008},

date = {2008-01-01},

booktitle = {Proceedings of the Eurographics Symposium on Virtual Environments (EGVE) (Short Paper and Poster Proceedings)},

pages = {31--34},

abstract = {Traveling through virtual environments (VEs) by means of real walking is a challenging task since usually the size of the virtual world exceeds the size of the tracked interaction space. Redirected walking is one concept to solve this problem by guiding the user on a physical path which differs from the path the user visually perceives, for example, in head-mounted display (HMD) environments. The user can be redirected to certain locations in the physical space, in particular to real proxy objects which provide passive feedback. In such passive haptic environments, any number of virtual objects can be mapped to proxy objects having similar haptic properties, i.e., size, shape and surface structure. When the user is guided to corresponding proxy objects, s/he can sense virtual objects by touching their real world counterparts. Therefore it is vital to predict the user's movements in the virtual world in order to recognize the target location. Based on the prediction a transformed path can be determined in the physical space on which the user is guided to the desired proxy object. In this paper we present concepts how a user's path can be predicted reliably and how a corresponding path to a desired proxy object can be derived on which the user does not observe inconsistencies between vision and proprioception.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Generic Redirected Walking & Dynamic Passive Haptics: Evaluation and Implications for Virtual Locomotion Interfaces](https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRHFL08.png) | Frank Steinicke; Gerd Bruder; Timo Ropinski; Klaus H. Hinrichs; Harald Frenz; Markus Lappe [POSTER] Generic Redirected Walking & Dynamic Passive Haptics: Evaluation and Implications for Virtual Locomotion Interfaces Proceedings Article In: Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI) (Poster Presentation), pp. 147–148, IEEE Press, 2008. @inproceedings{SBRHFL08,

title = {[POSTER] Generic Redirected Walking & Dynamic Passive Haptics: Evaluation and Implications for Virtual Locomotion Interfaces},

author = { Frank Steinicke and Gerd Bruder and Timo Ropinski and Klaus H. Hinrichs and Harald Frenz and Markus Lappe},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBRHFL08.pdf},

year = {2008},

date = {2008-01-01},

booktitle = {Proceedings of the IEEE Symposium on 3D User Interfaces (3DUI) (Poster Presentation)},

pages = {147--148},

publisher = {IEEE Press},

abstract = {In this paper we introduce a virtual locomotion system that allows navigation within any large-scale virtual environment (VE) by real walking. Based on the results of a user study we have quantified how much users can unknowingly be redirected in order to guide them through an arbitrarily sized VE in which virtual paths differ from the paths tracked in the real working space. Furthermore we introduce the new concept of dynamic passive haptics. This concept allows to map any number of virtual objects to real proxy objects having similar haptic properties, i.e., size, shape and surface structure, such that the user can sense these virtual objects by touching their real world counterparts. This mapping may be changed dynamically during runtime and need not be one-to-one. Thus dynamic passive haptics provides the user with the illusion of interacting with a desired virtual object by redirecting her/him to the corresponding proxy object. Since the mapping between virtual and proxy objects can be changed dynamically, a small number of proxy objects suffices to represent a much larger number of virtual objects. We describe the concepts in detail and discuss their parameterization which has been derived from the initially conducted user study. Furthermore we explain technical details regarding the integration into legacy 3D graphics applications, which is based on an interceptor library allowing to trace and modify 3D graphics calls. Thus when the user is tracked s/he is able to explore any 3D scene by natural walking, which we demonstrate by 3D graphics applications from different domains.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

2007

|

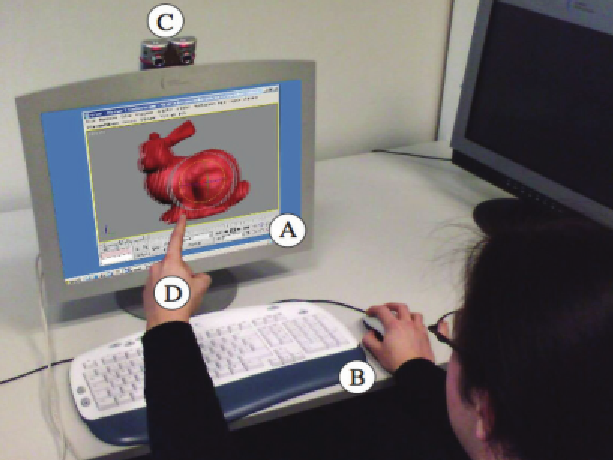

| Frank Steinicke; Timo Ropinski; Gerd Bruder; Klaus H. Hinrichs Towards Applicable 3D User Interfaces for Everyday Working Environments Proceedings Article In: Proceedings of the International Conference on Human-Computer Interaction (INTERACT), pp. 546–559, Springer, 2007. @inproceedings{SRBH07b,

title = {Towards Applicable 3D User Interfaces for Everyday Working Environments},

author = { Frank Steinicke and Timo Ropinski and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SRBH07b.pdf},

year = {2007},

date = {2007-01-01},

booktitle = {Proceedings of the International Conference on Human-Computer Interaction (INTERACT)},

volume = {4662},

pages = {546--559},

publisher = {Springer},

series = {Lecture Notes in Computer Science},

abstract = {Desktop environments have proven to be a powerful user interface and are used as the de facto standard human-computer interaction paradigm for over 20 years. However, there is a rising demand on 3D applications dealing with complex datasets, which exceeds the possibilities provided by traditional devices or two-dimensional display. For these domains more immersive and intuitive interfaces are required. But in order to get the users' acceptance, technology-driven solutions that require inconvenient instrumentation, e.g., stereo glasses or tracked gloves, should be avoided. Autostereoscopic display environments equipped with tracking systems enable users to experience 3D virtual environments more natural without annoying devices, for instance via gestures. However, currently these approaches are only applied for specially designed or adapted applications without universal usability. In this paper we introduce new 3D user interface concepts for such setups where minimal instrumentation of the user is required such that the strategies can be easily integrated in everyday working environments. Therefore, we propose an interaction system and framework which allows to display and interact with both mono- as well as stereoscopic content simultaneously. The challenges for combined mouse-, keyboard- and gesture-based input paradigms in such an environment are pointed out and novel interaction strategies are introduced.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

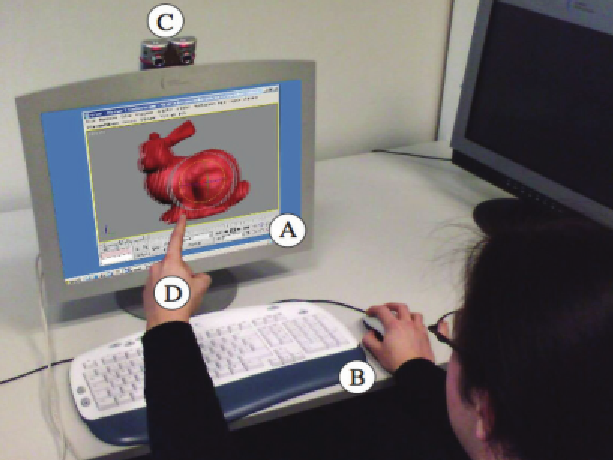

|

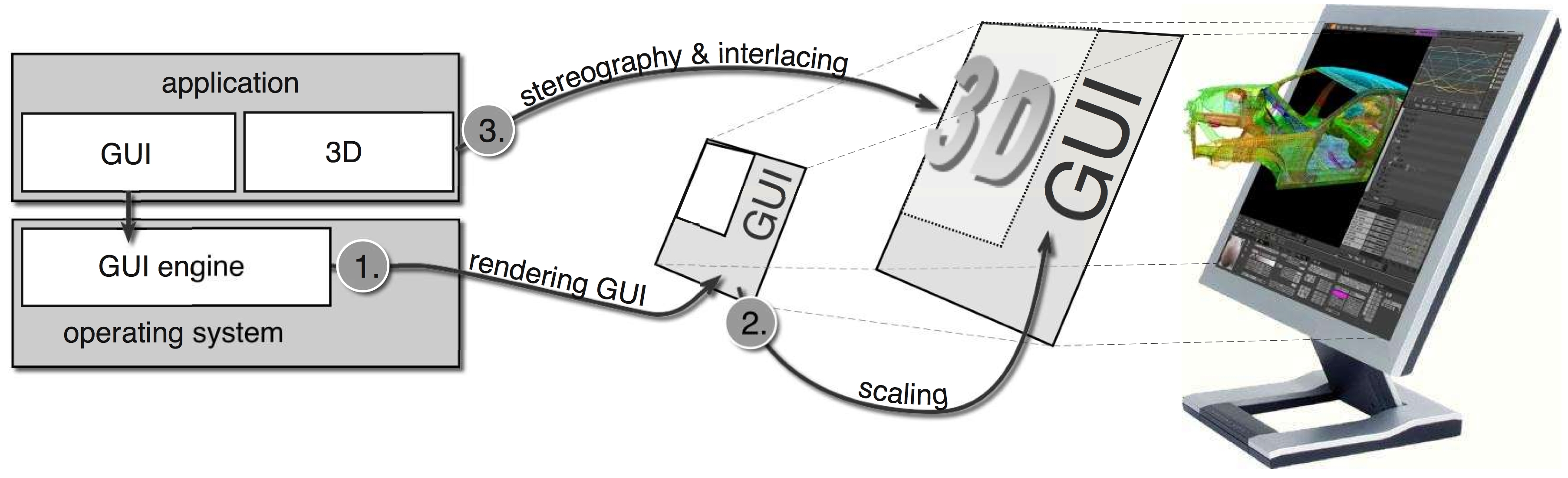

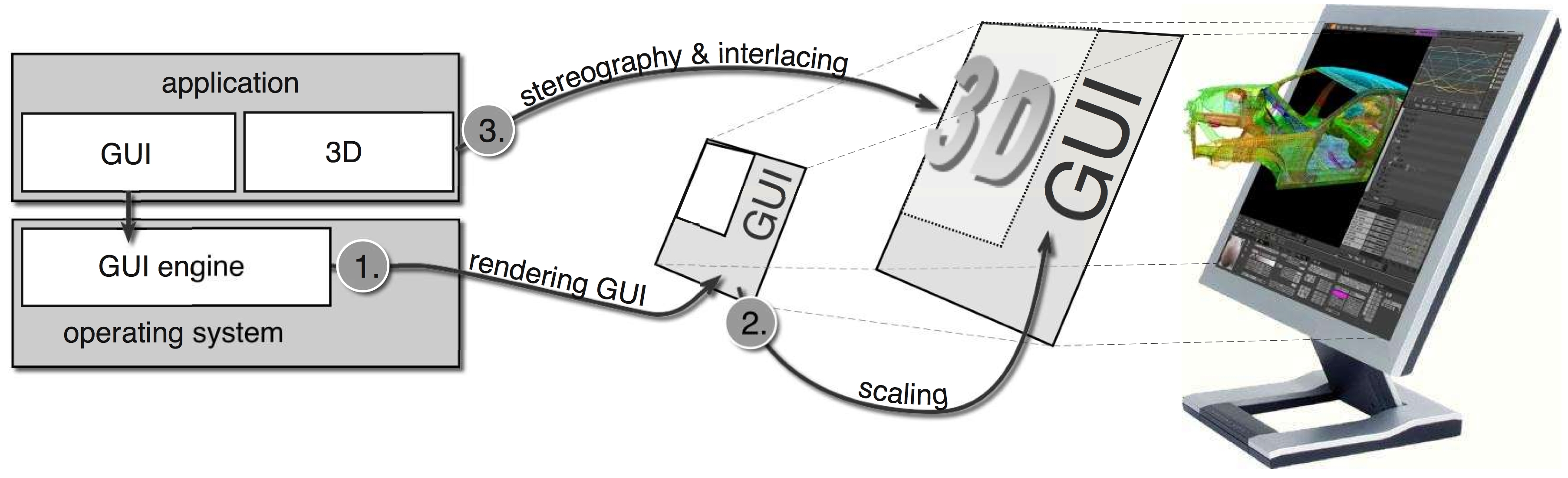

| Frank Steinicke; Timo Ropinski; Gerd Bruder; Klaus H. Hinrichs Interscopic User Interface Concepts for Fish Tank Virtual Reality Systems Proceedings Article In: Proceedings of IEEE Virtual Reality (VR), pp. 27–34, IEEE Press, 2007, (acceptance rate 20%). @inproceedings{SRBH07,

title = {Interscopic User Interface Concepts for Fish Tank Virtual Reality Systems},

author = { Frank Steinicke and Timo Ropinski and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SRBH07.pdf},

year = {2007},

date = {2007-01-01},

booktitle = {Proceedings of IEEE Virtual Reality (VR)},

pages = {27--34},

publisher = {IEEE Press},

abstract = {In this paper we introduce new user interface concepts for fish tank virtual reality (VR) systems based on autostereoscopic (AS) display technologies. Such AS displays allow to view stereoscopic content without requiring special glasses. Unfortunately, until now simultaneous monoscopic and stereoscopic display was not possible. Hence prior work on fish tank VR systems focussed either on 2D or 3D interactions. In this paper we introduce so called interscopic interaction concepts providing an improved working experience, which enable great potentials in terms of the interaction between 2D elements, which may be displayed either in monoscopic or stereoscopic, e.g., GUI items, and the 3D virtual environment usually displayed stereoscopically. We present a framework which is based on a software layer between the operating system and its graphical user interface supporting the display of both mono- as well as stereoscopic content in arbitrary regions of an autostereoscopic display. The proposed concepts open up new vistas for the interaction in environments where essential parts of the GUI are displayed monoscopically and other parts are rendered stereoscopically. We address some essential issues of such fish tank VR systems and introduce intuitive interaction concepts which we have realized.},

note = {(acceptance rate 20%)},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Timo Ropinski; Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs Focus+Context Resolution Adaption for Autostereoscopic Displays Proceedings Article In: Butz, Andreas; Fisher, Brian D.; Krüger, Antonio; Olivier, Patrick; Owada, Shigeru (Ed.): Smart Graphics, pp. 188–193, Springer, 2007. @inproceedings{RSBH07,

title = {Focus+Context Resolution Adaption for Autostereoscopic Displays},

author = { Timo Ropinski and Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs},

editor = {Andreas Butz and Brian D. Fisher and Antonio Krüger and Patrick Olivier and Shigeru Owada},

year = {2007},

date = {2007-01-01},

booktitle = {Smart Graphics},

volume = {4569},

pages = {188--193},

publisher = {Springer},

series = {Lecture Notes in Computer Science},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Harald Frenz A Multimodal Locomotion User Interface for Immersive Geospatial Information Systems Proceedings Article In: Proceedings of GI-Days, pp. 289–293, 2007. @inproceedings{SBF07,

title = {A Multimodal Locomotion User Interface for Immersive Geospatial Information Systems},

author = { Frank Steinicke and Gerd Bruder and Harald Frenz},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBF07.pdf},

year = {2007},

date = {2007-01-01},

booktitle = {Proceedings of GI-Days},

pages = {289--293},

abstract = {In this paper we present a new multimodal locomotion user interface that enables users to travel through 3D environments displayed in geospatial information systems, e.g., Google Earth or Microsoft Virtual Earth. When using the proposed interface the geospatial data can be explored in immersive virtual environments (VEs) using stereoscopic visualization on a head-mounted display (HMD). When using certain tracking approaches the entire body can be tracked in order to support natural traveling by real walking. Moreover, intuitive devices are provided for both-handed interaction to complete the navigation process. We introduce the setup as well as corresponding interaction concepts.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

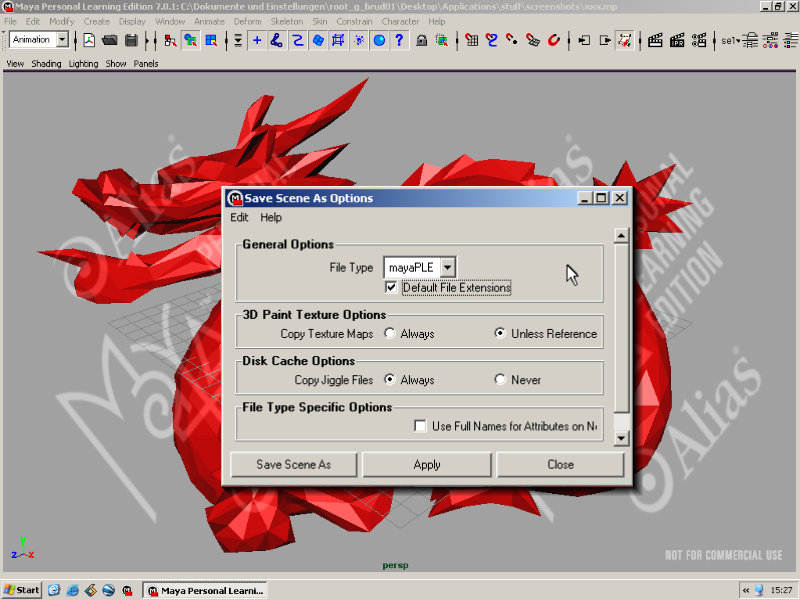

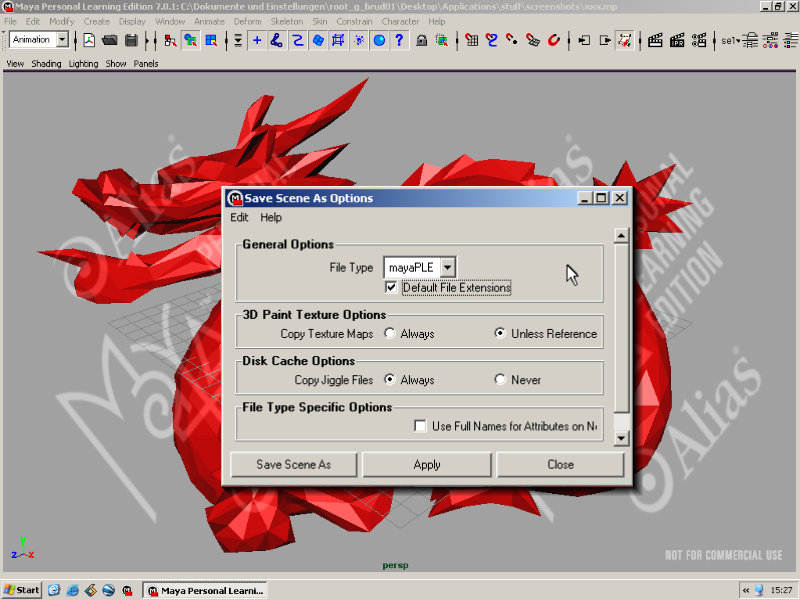

| Frank Steinicke; Timo Ropinski; Gerd Bruder; Klaus H. Hinrichs 3D Modeling and Design Supported via Interscopic Interaction Strategies Proceedings Article In: Proceedings of HCI International, pp. 1160–1169, Springer, 2007. @inproceedings{SRBH07c,

title = {3D Modeling and Design Supported via Interscopic Interaction Strategies},

author = { Frank Steinicke and Timo Ropinski and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SRBH07c.pdf},

year = {2007},

date = {2007-01-01},

booktitle = {Proceedings of HCI International},

volume = {4553},

pages = {1160--1169},

publisher = {Springer},

series = {Lecture Notes in Computer Science},

abstract = {3D modeling applications are widely used in many application domains ranging from CAD to industrial or graphics design. Desktop environments have proven to be a powerful user interface for such tasks. However, the rising complexity of 3D datasets exceeds the possibilities provided by traditional devices or two-dimensional display. Thus, more natural and intuitive interfaces are required. But in order to get the users' acceptance, technology-driven solutions that require inconvenient instrumentation, e.g., stereo glasses or tracked gloves, should be avoided. Autostereoscopic display environments in combination with 3D desktop devices enable users to experience virtual environments more immersively without annoying devices. In this paper we introduce interaction strategies with special consideration of the requirements of 3D modelers. We propose an interscopic display environment with implicated user interface strategies that allow displaying and interacting with both mono-, e.g., 2D elements, and stereoscopic content, which is beneficial for the 3D environment, which has to be manipulated. These concepts are discussed with special consideration of the requirements of 3D modelers and designers.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

| Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs; Timo Ropinski Simultane 2D/3D User Interface Konzepte für Autostereoskopische Desktop-VR Systeme Proceedings Article In: Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR), pp. 125–132, Shaker, 2007. @inproceedings{SBHR07,

title = {Simultane 2D/3D User Interface Konzepte für Autostereoskopische Desktop-VR Systeme},

author = { Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs and Timo Ropinski},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBHR07.pdf},

year = {2007},

date = {2007-01-01},

booktitle = {Proceedings of the GI Workshop on Virtual and Augmented Reality (GI VR/AR)},

pages = {125--132},

publisher = {Shaker},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|

![[POSTER] Hybrid Traveling in Fully-Immersive Large-Scale Geographic Environments](https://sreal.ucf.edu/wp-content/uploads/2017/02/SBH07.png) | Frank Steinicke; Gerd Bruder; Klaus H. Hinrichs [POSTER] Hybrid Traveling in Fully-Immersive Large-Scale Geographic Environments Proceedings Article In: Proceedings of the ACM Symposium on Virtual Reality and Software Technology (VRST) (Poster Presentation), pp. 229–230, 2007. @inproceedings{SBH07,

title = {[POSTER] Hybrid Traveling in Fully-Immersive Large-Scale Geographic Environments},

author = { Frank Steinicke and Gerd Bruder and Klaus H. Hinrichs},

url = {https://sreal.ucf.edu/wp-content/uploads/2017/02/SBH07.pdf},

year = {2007},

date = {2007-01-01},

booktitle = {Proceedings of the ACM Symposium on Virtual Reality and Software Technology (VRST) (Poster Presentation)},

pages = {229--230},

abstract = {In this paper we present hybrid traveling concepts that enable users to navigate immersively through 3D geospatial environments displayed by applications such as Google Earth or Microsoft Virtual Earth. We propose a framework which allows to integrate virtual reality (VR) based interaction devices and concepts into such applications that do not support VR technologies natively. In our proposed setup the content displayed by a geospatial application is visualized stereoscopically on a head-mounted display (HMD) for immersive exploration. The user's body can be tracked by using appropriate technologies in order to support natural traveling through the VE via a walking metaphor. Since the VE usually exceeds the dimension of the area in which the user can be tracked, we propose different strategies to map the user's movement into the virtual world. Moreover, intuitive devices and interaction techniques are presented for both-handed interaction to enrich the navigation process. In this paper we will describe the technical system setup as well as integrated interaction concepts and discuss scenarios based on existing geospatial visualization applications.},

keywords = {},

pubstate = {published},

tppubtype = {inproceedings}

}

|
